TECHNICAL ASSET FINGERPRINT

88af431b6f7778f919b59937

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Comparison of Question Answering Approaches

### Overview

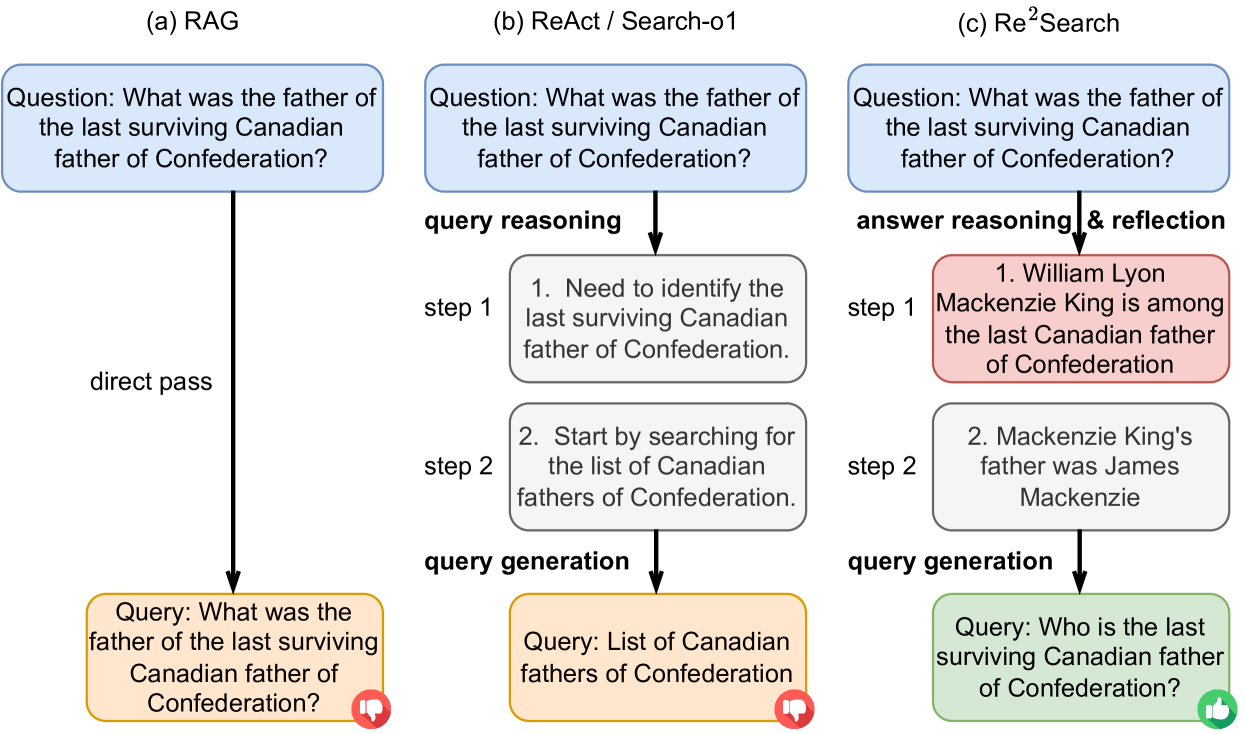

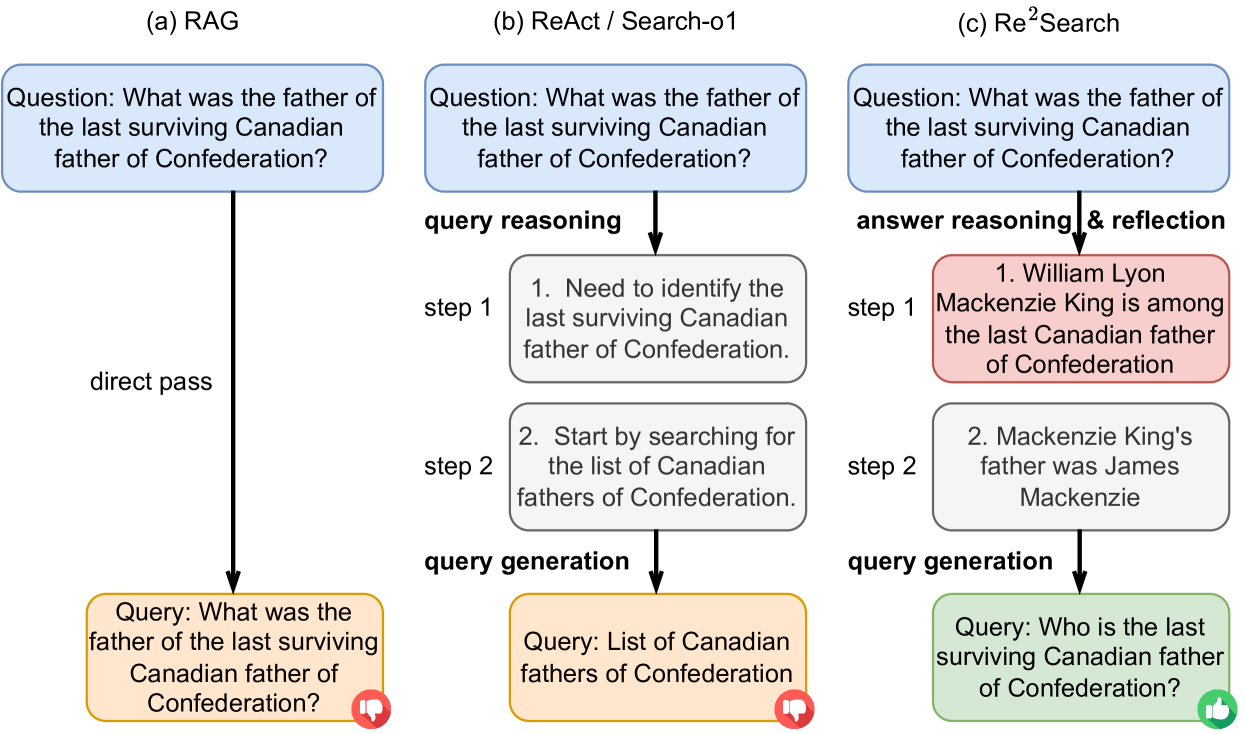

The image presents a comparative diagram illustrating three different approaches to answering a complex question: RAG (Retrieval-Augmented Generation), ReAct/Search-o1, and Re²Search. Each approach is depicted as a flowchart, showing the steps involved in processing the question and generating a query. The diagram highlights the differences in reasoning and query generation strategies among the three methods.

### Components/Axes

* **Titles:** The diagram is divided into three sections, each labeled with a title: (a) RAG, (b) ReAct / Search-o1, and (c) Re²Search.

* **Question:** Each section starts with the same initial question, presented in a light blue rounded box: "What was the father of the last surviving Canadian father of Confederation?"

* **Flow Arrows:** Black arrows indicate the flow of information and processing steps within each approach.

* **Process Steps:** Rectangular boxes represent individual steps in the reasoning and query generation process. Box colors differ by approach:

  * RAG: Orange box for the final query.

  * ReAct/Search-o1: Gray boxes for reasoning steps, orange box for the final query.

  * Re²Search: Red box for answer reasoning, gray box for a subsequent step, and green box for the final query.

* **Step Labels:** Each reasoning step is labeled with "step 1", "step 2", etc.

* **Query Generation Labels:** The ReAct/Search-o1 section labels its two stages "query reasoning" and "query generation". The Re²Search section labels its stages "answer reasoning & reflection" and "query generation".

* **Feedback Icons:** Each final query box has a small icon in the bottom-right corner: a thumbs-down icon (red) for RAG and ReAct/Search-o1, and a thumbs-up icon (green) for Re²Search.

### Detailed Analysis

**RAG (Retrieval-Augmented Generation):**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* A "direct pass" arrow leads directly to the query generation stage.

* The generated query (orange box) is the same as the initial question: "What was the father of the last surviving Canadian father of Confederation?".

* A red thumbs-down icon is present.

**ReAct / Search-o1:**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* The process involves "query reasoning" in two steps (gray boxes):

  * Step 1: "Need to identify the last surviving Canadian father of Confederation."

  * Step 2: "Start by searching for the list of Canadian fathers of Confederation."

* The "query generation" stage (orange box) produces the query: "List of Canadian fathers of Confederation".

* A red thumbs-down icon is present.

**Re²Search:**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* The process involves "answer reasoning & reflection" in two steps:

  * Step 1 (red box): "William Lyon Mackenzie King is among the last Canadian father of Confederation".

  * Step 2 (gray box): "Mackenzie King's father was James Mackenzie".

* The "query generation" stage (green box) produces the query: "Who is the last surviving Canadian father of Confederation?".

* A green thumbs-up icon is present.
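The three columns described above can be captured in a small data structure, which makes the comparison easy to check programmatically. This is only an illustrative sketch: the `Column` class and its field names are hypothetical and are not part of the diagram or of any of the three methods. The strings are copied from the transcription above.

```python
from dataclasses import dataclass, field

QUESTION = "What was the father of the last surviving Canadian father of Confederation?"


@dataclass
class Column:
    """One column of the diagram (hypothetical structure for illustration)."""
    name: str
    stage_label: str                      # label on the arrow leaving the question
    steps: list[str] = field(default_factory=list)
    query: str = ""
    thumbs_up: bool = False               # True for a green thumbs-up icon


COLUMNS = [
    Column("RAG", "direct pass", [], QUESTION, False),
    Column(
        "ReAct / Search-o1",
        "query reasoning",
        [
            "Need to identify the last surviving Canadian father of Confederation.",
            "Start by searching for the list of Canadian fathers of Confederation.",
        ],
        "List of Canadian fathers of Confederation",
        False,
    ),
    Column(
        "Re²Search",
        "answer reasoning & reflection",
        [
            "William Lyon Mackenzie King is among the last Canadian father of Confederation",
            "Mackenzie King's father was James Mackenzie",
        ],
        "Who is the last surviving Canadian father of Confederation?",
        True,
    ),
]

# Columns whose generated query the diagram marks as effective.
effective = [c.name for c in COLUMNS if c.thumbs_up]
# Columns whose query is just the original question, unchanged.
unchanged = [c.name for c in COLUMNS if c.query == QUESTION]
print(effective, unchanged)
```

Encoding the diagram this way keeps the transcribed text separate from any interpretation of it.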

### Key Observations

* RAG directly uses the initial question as the query, without any intermediate reasoning steps.

* ReAct/Search-o1 performs query reasoning and generates an intermediate query that retrieves a list of candidate individuals instead of identifying one person.

* Re²Search incorporates answer reasoning and reflection, leading to a refined query that directly asks for the last surviving Canadian father of Confederation.

* The thumbs-up/thumbs-down icons suggest a qualitative assessment of the effectiveness of each approach, with Re²Search being the most successful.

### Interpretation

The diagram illustrates how three question-answering approaches handle a nested, multi-step question. RAG's direct pass sends the whole question to retrieval unchanged, which the diagram marks as ineffective. ReAct/Search-o1 adds query reasoning, but its query returns a list that still has to be narrowed down to one person. Re²Search reasons about a candidate answer, reflects on it, and then generates a query for the first part of the question: the identity of the last surviving Canadian father of Confederation. The thumbs-up/thumbs-down icons reinforce this ranking. Overall, the diagram emphasizes reasoning and reflection before query generation for complex questions.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Comparison of Retrieval-Augmented Generation (RAG), ReAct/Search-o1, and Re²Search

### Overview

This diagram illustrates and compares three different approaches to answering a question: Retrieval-Augmented Generation (RAG), ReAct/Search-o1, and Re²Search. Each approach is presented as a flow diagram, showing the question asked, the steps taken to answer it, and the final query generated. The diagram highlights the differences in reasoning and query generation strategies employed by each method.

### Components/Axes

The diagram is divided into three columns, labeled (a) RAG, (b) ReAct / Search-o1, and (c) Re²Search. Each column represents a different approach. Within each column, the diagram shows:

* **Question:** The initial question posed to the system.

* **Steps:** Numbered steps outlining the reasoning or action taken.

* **Query Generation:** The final query sent to an external source (presumably a search engine or knowledge base).

* **Arrows:** Indicate the flow of information and the sequence of steps.

* **Shapes:** Different shapes are used to represent different elements:

  * Rounded rectangles: Questions and direct passes.

  * Rectangles with rounded corners: Reasoning steps.

  * Parallelograms: Query generation.

  * Speech bubbles: Indicate a query being sent.

* **Icons:** Thumbs up/down icons are used to indicate the success or failure of a query.

### Detailed Analysis

**(a) RAG**

* **Question:** "What was the father of the last surviving Canadian father of Confederation?"

* **Direct Pass:** The question is passed directly to the query generation stage.

* **Query:** "What was the father of the last surviving Canadian father of Confederation?"

* **Icon:** Thumbs down icon.

**(b) ReAct / Search-o1**

* **Question:** "What was the father of the last surviving Canadian father of Confederation?"

* **Step 1:** "Need to identify the last surviving Canadian father of Confederation."

* **Step 2:** "Start searching for the list of Canadian fathers of Confederation."

* **Query Generation:** "List of Canadian fathers of Confederation"

* **Icon:** Thumbs down icon.

**(c) Re²Search**

* **Question:** "What was the father of the last surviving Canadian father of Confederation?"

* **Step 1:** "William Lyon Mackenzie King is among the last Canadian father of Confederation."

* **Step 2:** "Mackenzie King's father was James Mackenzie"

* **Query Generation:** "Who is the last surviving Canadian father of Confederation?"

* **Icon:** Thumbs up icon.

### Key Observations

* RAG directly passes the question as a query without any intermediate reasoning steps.

* ReAct/Search-o1 breaks down the question into smaller steps, first identifying the need to find the last surviving father of Confederation, then generating a query to find a list of fathers.

* Re²Search incorporates answer reasoning and reflection, first identifying a potential candidate (William Lyon Mackenzie King) and then generating a query to confirm the information.

* Only Re²Search carries a thumbs up icon, suggesting successful query generation, while RAG and ReAct/Search-o1 carry thumbs down icons, marking their generated queries as ineffective.

### Interpretation

The diagram shows question-answering approaches of increasing sophistication. RAG is a simple retrieval-based method, while ReAct/Search-o1 and Re²Search add reasoning steps before the query is generated. Re²Search additionally incorporates answer reasoning and reflection, potentially leading to more reliable results. The icons suggest a feedback mechanism for evaluating query success. The diagram highlights the benefit of breaking a complex question into smaller steps and using external knowledge sources to support reasoning, and the differences among the approaches suggest trade-offs between simplicity, computational cost, and accuracy.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Comparative Diagram: Three AI Reasoning Approaches for a Historical Query

### Overview

The image is a technical diagram comparing three different artificial intelligence (AI) or language model reasoning architectures—labeled (a) RAG, (b) ReAct / Search-o1, and (c) Re²Search—applied to the same factual question. It visually contrasts their workflows, from receiving a question to generating a search query, highlighting differences in reasoning steps and outcomes. The diagram uses color-coded boxes, arrows, and icons to denote process flow, content type, and success/failure.

### Components/Axes

The diagram is organized into three vertical columns, each representing a distinct method.

**Column (a) RAG:**

* **Top (Blue Box):** Contains the initial question.

* **Process Arrow:** A single, long black arrow labeled "direct pass" connects the question directly to the query output.

* **Bottom (Orange Box):** Contains the generated search query. A red "thumbs-down" icon is attached to the bottom-right corner.

**Column (b) ReAct / Search-o1:**

* **Top (Blue Box):** Contains the identical initial question.

* **Process Flow:** An arrow labeled "query reasoning" leads to a sequence of two gray reasoning steps.

  * **Step 1 (Gray Box):** "1. Need to identify the last surviving Canadian father of Confederation."

  * **Step 2 (Gray Box):** "2. Start by searching for the list of Canadian fathers of Confederation."

* **Process Arrow:** An arrow labeled "query generation" leads from the reasoning steps to the query output.

* **Bottom (Orange Box):** Contains the generated search query. A red "thumbs-down" icon is attached.

**Column (c) Re²Search:**

* **Top (Blue Box):** Contains the identical initial question.

* **Process Flow:** An arrow labeled "answer reasoning & reflection" leads to a sequence of two steps, with the first being a distinct pink/red color.

  * **Step 1 (Pink/Red Box):** "1. William Lyon Mackenzie King is among the last Canadian father of Confederation"

  * **Step 2 (Gray Box):** "2. Mackenzie King's father was James Mackenzie"

* **Process Arrow:** An arrow labeled "query generation" leads from the reasoning steps to the query output.

* **Bottom (Green Box):** Contains the generated search query. A green "thumbs-up" icon is attached.

### Detailed Analysis

**Transcription of All Text:**

* **Common Question (All Columns):** "Question: What was the father of the last surviving Canadian father of Confederation?"

* **Column (a) RAG:**

  * Process Label: "direct pass"

  * Generated Query: "Query: What was the father of the last surviving Canadian father of Confederation?"

* **Column (b) ReAct / Search-o1:**

  * Process Label (top): "query reasoning"

  * Step 1: "1. Need to identify the last surviving Canadian father of Confederation."

  * Step 2: "2. Start by searching for the list of Canadian fathers of Confederation."

  * Process Label (bottom): "query generation"

  * Generated Query: "Query: List of Canadian fathers of Confederation"

* **Column (c) Re²Search:**

  * Process Label (top): "answer reasoning & reflection"

  * Step 1: "1. William Lyon Mackenzie King is among the last Canadian father of Confederation"

  * Step 2: "2. Mackenzie King's father was James Mackenzie"

  * Process Label (bottom): "query generation"

  * Generated Query: "Query: Who is the last surviving Canadian father of Confederation?"

**Flow and Logic:**

1. **RAG (a):** Performs no intermediate reasoning. It passes the complex, nested question directly as a search query. This is marked as ineffective (red thumbs-down).

2. **ReAct / Search-o1 (b):** Engages in "query reasoning," breaking the problem into logical sub-tasks (identify the person, then find a list). However, the final generated query ("List of Canadian fathers of Confederation") is a generic, intermediate step that does not directly answer the original question. This is also marked as ineffective (red thumbs-down).

3. **Re²Search (c):** Engages in "answer reasoning & reflection." It first retrieves or reasons about a specific fact (identifying William Lyon Mackenzie King as a relevant figure), then reflects on that fact to derive a second piece of information (his father's name). This internal knowledge synthesis allows it to generate a highly targeted and effective search query ("Who is the last surviving Canadian father of Confederation?") that directly addresses the core of the original question. This is marked as effective (green thumbs-up).
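To make the contrast between the direct pass and the reflection step concrete, here is a minimal Python sketch. It rests on an assumption the diagram does not state outright: that reflection treats each answer-reasoning step as a claim and issues a query for the first claim not yet backed by evidence. The names `Claim`, `check_query`, `rag_query`, and `reflect_and_query` are hypothetical, and the second check query is invented for illustration. This is not the method's actual implementation.

```python
from dataclasses import dataclass
from typing import Optional

QUESTION = "What was the father of the last surviving Canadian father of Confederation?"


@dataclass
class Claim:
    """One answer-reasoning step, treated as a claim (hypothetical structure)."""
    text: str
    check_query: str        # assumed: a search query that would verify the claim
    verified: bool = False  # assumed: set to True once evidence supports it


def rag_query(question: str) -> str:
    """Direct pass: the question itself becomes the search query."""
    return question


def reflect_and_query(claims: list[Claim]) -> Optional[str]:
    """Return the check query for the first unverified claim, or None if all hold."""
    for claim in claims:
        if not claim.verified:
            return claim.check_query
    return None


claims = [
    Claim(
        "William Lyon Mackenzie King is among the last Canadian father of Confederation",
        "Who is the last surviving Canadian father of Confederation?",
    ),
    Claim(
        "Mackenzie King's father was James Mackenzie",
        "Who was the father of William Lyon Mackenzie King?",  # hypothetical query
    ),
]

direct = rag_query(QUESTION)
reflected = reflect_and_query(claims)
print(direct)
print(reflected)
```

Under these assumptions, the reflected query matches the one in the green box, because the first claim is the earliest one without supporting evidence.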

### Key Observations

* **Color Semantics:** Blue denotes input questions. Orange denotes generated queries that are ineffective. Green denotes an effective generated query. Gray denotes neutral reasoning steps. Pink/Red denotes a reasoning step that asserts a specific factual claim (Step 1 of Re²Search).

* **Structural Contrast:** The complexity of the internal process increases from left to right (a: none, b: two generic steps, c: two specific, knowledge-rich steps).

* **Outcome Correlation:** The method that incorporates specific factual recall and reflection (c) before query generation is the only one that produces a successful outcome, as indicated by the icons.

* **Language:** All text in the diagram is in English.

### Interpretation

This diagram serves as a conceptual comparison of AI agent architectures for complex question answering. It argues that simply retrieving information (RAG) or performing step-by-step reasoning to decompose a query (ReAct) is insufficient for questions requiring multi-hop factual inference.

The core demonstration is that the **Re²Search** method is superior because it integrates a "reflection" phase. Before generating an external search query, it first draws on its internal knowledge to form a partial answer or hypothesis (Step 1 names a key person, Step 2 names that person's father). It then reflects on that intermediate result and generates a more precise query that targets the person's identity. This mimics a more human-like, iterative problem-solving process where initial knowledge guides subsequent investigation.

The diagram suggests that for AI systems to handle complex, nested questions effectively, they must move beyond direct retrieval or linear planning. They need mechanisms for internal knowledge activation and self-reflection to guide their information-seeking behavior, thereby generating queries that are more likely to retrieve the final answer directly. The red and green thumbs icons provide a clear, non-technical verdict on the efficacy of each approach for the given task.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Comparative Analysis of Question Answering Methods for Historical Query

### Overview

The image presents a comparative flowchart of three question-answering methodologies (RAG, ReAct/Search-o1, Re²Search) applied to the historical question: "What was the father of the last surviving Canadian father of Confederation?" Each method is visualized with distinct steps, reasoning processes, and generated queries, accompanied by thumbs-up/thumbs-down icons indicating effectiveness.

### Components/Axes

1. **Three Method Sections**:

   - (a) RAG (Red)

   - (b) ReAct/Search-o1 (Blue)

   - (c) Re²Search (Green)

2. **Key Elements**:

   - Central question box at the top of each section

   - Step-by-step reasoning/process boxes

   - Generated query boxes at the bottom

   - Thumbs icons (red thumbs-down for ineffective steps, green thumbs-up for effective steps)

   - Arrows indicating flow direction

### Detailed Analysis

#### (a) RAG Method

- **Question**: "What was the father of the last surviving Canadian father of Confederation?"

- **Process**:

  1. Direct pass through the system

- **Generated Query**: "What was the father of the last surviving Canadian father of Confederation?" (same as original question)

- **Effectiveness**: Red thumbs-down icon (ineffective)

#### (b) ReAct/Search-o1 Method

- **Question**: "What was the father of the last surviving Canadian father of Confederation?"

- **Process**:

  1. Query reasoning: "Need to identify the last surviving Canadian father of Confederation"

  2. Query generation: "List of Canadian fathers of Confederation"

- **Generated Query**: "List of Canadian fathers of Confederation"

- **Effectiveness**: Red thumbs-down icon (ineffective)

#### (c) Re²Search Method

- **Question**: "What was the father of the last surviving Canadian father of Confederation?"

- **Process**:

  1. Answer reasoning & reflection: "William Lyon Mackenzie King is among the last Canadian father of Confederation"

  2. Query generation: "Who is the last surviving Canadian father of Confederation?"

- **Generated Query**: "Who is the last surviving Canadian father of Confederation?"

- **Effectiveness**: Green thumbs-up icon (effective)

### Key Observations

1. **Method Effectiveness**:

   - RAG and ReAct/Search-o1 methods receive negative feedback (red thumbs-down)

   - Re²Search method receives positive feedback (green thumbs-up)

2. **Query Evolution**:

   - RAG: No query refinement (direct pass)

   - ReAct/Search-o1: Shifts to list generation

   - Re²Search: Progresses to specific identity query

3. **Historical Context**:

   - The Re²Search reasoning names James Mackenzie as the father of William Lyon Mackenzie King; the diagram shows this as an intermediate reasoning step, not as a final answer

### Interpretation

The flowchart demonstrates a progression from ineffective to effective query handling:

1. **RAG's Limitation**: Fails to refine the query, so the search query simply repeats the original question

2. **ReAct's Shortfall**: Identifies the need for list generation but doesn't complete the reasoning chain

3. **Re²Search's Success**: Combines answer reasoning with reflection to produce a query that targets the identity of the last surviving Canadian father of Confederation

The thumbs icons suggest that Re²Search's approach of combining answer reasoning with reflection produces the most effective query for this question. The candidate answer in its reasoning steps should not be read as established history: William Lyon Mackenzie King (Canada's longest-serving Prime Minister) was born in 1874, after Confederation in 1867, and his father was John King. This is consistent with the generated query, which re-checks who the last surviving Father of Confederation actually was.
