## Diagram: Comparison of Question Answering Approaches

### Overview

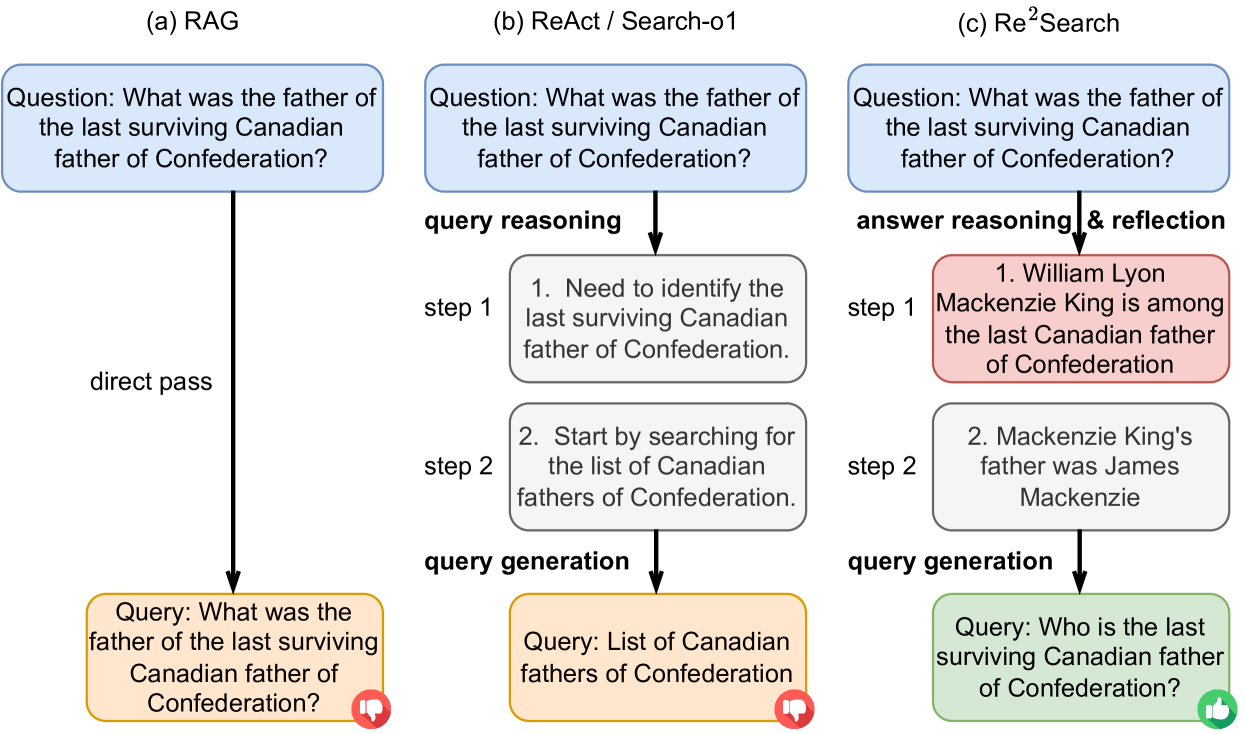

The image presents a comparative diagram illustrating three different approaches to answering a complex question: RAG (Retrieval-Augmented Generation), ReAct/Search-o1, and Re²Search. Each approach is depicted as a flowchart, showing the steps involved in processing the question and generating a query. The diagram highlights the differences in reasoning and query generation strategies among the three methods.

### Components/Axes

* **Titles:** The diagram is divided into three sections, each labeled with a title: (a) RAG, (b) ReAct / Search-o1, and (c) Re²Search.

* **Question:** Each section starts with the same initial question, presented in a light blue rounded box: "What was the father of the last surviving Canadian father of Confederation?"

* **Flow Arrows:** Black arrows indicate the flow of information and processing steps within each approach.

* **Process Steps:** Rectangular boxes represent individual steps in the reasoning and query generation process. Box colors vary by approach:

  * RAG: Orange box for the final query.

  * ReAct/Search-o1: Gray boxes for the reasoning steps, orange box for the final query.

  * Re²Search: Red box for the first answer reasoning step, gray box for the second, and green box for the final query.

* **Step Labels:** Each reasoning step is labeled with "step 1", "step 2", etc.

* **Query Generation Labels:** The ReAct/Search-o1 section labels its stages "query reasoning" and "query generation". The Re²Search section labels its stages "answer reasoning & reflection" and "query generation".

* **Feedback Icons:** Each final query box has a small icon in the bottom-right corner: a thumbs-down icon (red) for RAG and ReAct/Search-o1, and a thumbs-up icon (green) for Re²Search.

### Detailed Analysis

**RAG (Retrieval-Augmented Generation):**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* A "direct pass" arrow leads directly to the query generation stage.

* The generated query (orange box) is the same as the initial question: "What was the father of the last surviving Canadian father of Confederation?".

* A red thumbs-down icon is present.

**ReAct / Search-o1:**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* The process involves "query reasoning" in two steps (gray boxes):

  * Step 1: "Need to identify the last surviving Canadian father of Confederation."

  * Step 2: "Start by searching for the list of Canadian fathers of Confederation."

* The "query generation" stage (orange box) produces the query: "List of Canadian fathers of Confederation".

* A red thumbs-down icon is present.

**Re²Search:**

* The initial question (light blue box) is "What was the father of the last surviving Canadian father of Confederation?".

* The process involves "answer reasoning & reflection" in two steps:

  * Step 1 (red box): "William Lyon Mackenzie King is among the last Canadian father of Confederation".

  * Step 2 (gray box): "Mackenzie King's father was James Mackenzie".

* The "query generation" stage (green box) produces the query: "Who is the last surviving Canadian father of Confederation?".

* A green thumbs-up icon is present.

### Key Observations

* RAG directly uses the initial question as the query, without any intermediate reasoning steps.

* ReAct/Search-o1 performs query reasoning to generate a more specific query aimed at retrieving a list of relevant individuals.

* Re²Search incorporates answer reasoning and reflection, leading to a refined query that directly asks for the last surviving Canadian father of Confederation.

* The thumbs-up/thumbs-down icons give a qualitative verdict on each generated query: Re²Search is the only approach marked with a thumbs-up, while RAG and ReAct/Search-o1 are both marked with a thumbs-down.
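
The three flows described above can be sketched as plain functions. This is only an illustrative sketch of the control flow the diagram shows, not an implementation of any of the three systems: the function names, the `Generate` type, and the `canned` helper are all hypothetical, and the "model" is a stub that replays the text from the diagram's boxes instead of calling a real language model or retriever.

```python
from typing import Callable, Dict

QUESTION = "What was the father of the last surviving Canadian father of Confederation?"

# Hypothetical stand-in for a language-model call: prompt text in, generated text out.
Generate = Callable[[str], str]


def canned(text: str) -> Generate:
    """Return a stub 'model' that ignores its prompt and replays fixed text."""
    return lambda _prompt: text


def rag_query(question: str) -> str:
    """(a) RAG: direct pass, the question itself becomes the search query."""
    return question


def react_query(question: str, reason: Generate, write_query: Generate) -> str:
    """(b) ReAct / Search-o1: reason about what to search for, then write a query."""
    plan = reason(question)        # "query reasoning" (steps 1 and 2)
    return write_query(plan)       # "query generation"


def re2search_query(question: str, draft_answer: Generate, write_query: Generate) -> str:
    """(c) Re²Search: reason about the answer itself first, then write a query."""
    draft = draft_answer(question)  # "answer reasoning & reflection" (steps 1 and 2)
    return write_query(draft)       # "query generation"


# Replay the diagram's example; every string below is copied from the figure.
queries: Dict[str, str] = {
    "RAG": rag_query(QUESTION),
    "ReAct / Search-o1": react_query(
        QUESTION,
        reason=canned(
            "Need to identify the last surviving Canadian father of Confederation. "
            "Start by searching for the list of Canadian fathers of Confederation."
        ),
        write_query=canned("List of Canadian fathers of Confederation"),
    ),
    "Re²Search": re2search_query(
        QUESTION,
        draft_answer=canned(
            "William Lyon Mackenzie King is among the last Canadian father of Confederation. "
            "Mackenzie King's father was James Mackenzie."
        ),
        write_query=canned("Who is the last surviving Canadian father of Confederation?"),
    ),
}

for name, query in queries.items():
    print(f"{name}: {query}")
```

In this sketch (b) and (c) share the same two-stage shape; what differs is what the first stage reasons about (the search plan versus a draft answer), which is the distinction the diagram draws.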

### Interpretation

The diagram illustrates how three question-answering approaches handle a complex question. RAG's direct pass submits the question unchanged as the query, which may be less effective when the question requires reasoning before retrieval. ReAct/Search-o1 adds query reasoning, but its query asks for a list of all Canadian fathers of Confederation, so further processing is still needed to identify the last surviving one. Re²Search reasons about the answer first and then generates a query that targets the intermediate fact directly (who the last surviving Canadian father of Confederation was); it is the only approach the diagram marks as effective.

The thumbs-up/thumbs-down icons visually reinforce this assessment. Overall, the diagram highlights the importance of reasoning and reflection in question answering systems, particularly for complex or nuanced queries.