## Line Chart: LM Loss vs. Compute (PFLOP/s-days)

### Overview

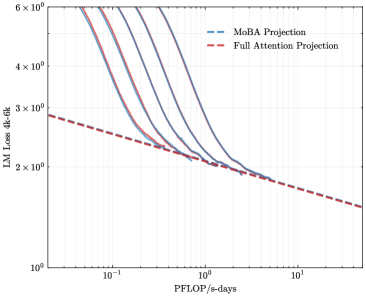

The image is a line chart on log-log axes. It plots the projected language model (LM) loss at a 4k-token context ("4k ctx") against training compute in PFLOP/s-days, where one PFLOP/s-day is the work done by sustaining 10^15 floating-point operations per second for a full day. Two projection lines are shown, one per attention method, illustrating different scaling behaviors.
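As a quick unit check, the sketch below converts the x-axis unit into total floating-point operations. The helper name `pflops_days_to_flop` is an illustrative choice and does not come from the chart.

```python
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400 seconds in one day
FLOP_PER_S_PER_PFLOPS = 1e15     # 1 PFLOP/s = 10^15 FLOP/s


def pflops_days_to_flop(pflops_days: float) -> float:
    """Convert compute in PFLOP/s-days to a total FLOP count."""
    return pflops_days * FLOP_PER_S_PER_PFLOPS * SECONDS_PER_DAY


# The three major x-axis ticks of the chart.
for compute in (0.1, 1.0, 10.0):
    print(f"{compute:>5} PFLOP/s-days = {pflops_days_to_flop(compute):.3e} FLOP")
```

One PFLOP/s-day therefore corresponds to 8.64 x 10^19 floating-point operations, so the x-axis spans roughly two orders of magnitude around that value.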

### Components/Axes

* **Chart Type:** 2D line chart with logarithmic axes.

* **Y-Axis (Vertical):**

    * **Label:** `LM Loss 4k ctx`

    * **Scale:** Logarithmic, ranging from `10^0` (1) to `6 x 10^0` (6).

    * **Major Ticks:** 1, 2, 3, 4, 5, 6.

* **X-Axis (Horizontal):**

    * **Label:** `PFLOP/s-days`

    * **Scale:** Logarithmic, ranging from `10^-1` (0.1) to `10^1` (10).

    * **Major Ticks:** 0.1, 1, 10.

* **Legend:**

    * **Position:** Top-right corner of the chart area.

    * **Entry 1:** `MoBA Projection` - Represented by a blue dashed line (`--`).

    * **Entry 2:** `Full Attention Projection` - Represented by a red dashed line (`--`).

### Detailed Analysis

**1. MoBA Projection (Blue Dashed Line):**

* **Trend:** The line exhibits a steep, downward slope that gradually flattens. It starts at a very high loss value for low compute and decreases rapidly as compute increases, showing strong initial scaling.

* **Approximate Data Points:**

    * At ~0.1 PFLOP/s-days: Loss is off the top of the chart (>> 6).

    * At ~0.3 PFLOP/s-days: Loss ≈ 6.

    * At ~0.5 PFLOP/s-days: Loss ≈ 4.

    * At ~1 PFLOP/s-days: Loss ≈ 2.5.

    * At ~2 PFLOP/s-days: Loss ≈ 2.0.

    * At ~5 PFLOP/s-days: Loss ≈ 1.8 (line ends near this point).
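The flattening described above can be made concrete by computing the local slope on log-log axes between consecutive readings. This is a minimal sketch that uses only the approximate, by-eye values listed above; the function name `local_loglog_slopes` is illustrative.

```python
import math

# Approximate MoBA readings from the list above: (compute in PFLOP/s-days, loss).
moba_points = [(0.3, 6.0), (0.5, 4.0), (1.0, 2.5), (2.0, 2.0), (5.0, 1.8)]


def local_loglog_slopes(points):
    """Return the slope d(log loss) / d(log compute) for each adjacent pair."""
    slopes = []
    for (c0, l0), (c1, l1) in zip(points, points[1:]):
        slopes.append(math.log(l1 / l0) / math.log(c1 / c0))
    return slopes


slopes = local_loglog_slopes(moba_points)
for (c0, _), (c1, _), slope in zip(moba_points, moba_points[1:], slopes):
    print(f"{c0:>4} -> {c1:>4} PFLOP/s-days: slope = {slope:.2f}")
```

Each successive slope is closer to zero than the one before it, which is the numerical counterpart of a curve that bends toward horizontal on a log-log plot.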

**2. Full Attention Projection (Red Dashed Line):**

* **Trend:** The line has a much shallower, consistent downward slope across the entire compute range. It represents a more gradual, power-law scaling trend.

* **Approximate Data Points:**

    * At ~0.1 PFLOP/s-days: Loss ≈ 2.9.

    * At ~1 PFLOP/s-days: Loss ≈ 2.2.

    * At ~10 PFLOP/s-days: Loss ≈ 1.5.
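A power law `L(C) = a * C^b` is a straight line on log-log axes, so its coefficients can be estimated with an ordinary least-squares line fit in log space. The sketch below applies that to the three approximate readings above. The result is only as good as those by-eye values and is not the fit used to draw the chart; `fit_power_law` is an illustrative name.

```python
import math

# Approximate Full Attention readings from the list above.
full_points = [(0.1, 2.9), (1.0, 2.2), (10.0, 1.5)]


def fit_power_law(points):
    """Least-squares fit of log10(loss) = log10(a) + b * log10(compute)."""
    xs = [math.log10(c) for c, _ in points]
    ys = [math.log10(l) for _, l in points]
    n = len(points)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    b = sxy / sxx                      # exponent (slope on log-log axes)
    a = 10 ** (y_mean - b * x_mean)    # prefactor (loss at 1 PFLOP/s-day)
    return a, b


a, b = fit_power_law(full_points)
print(f"L(C) ~ {a:.2f} * C^({b:.3f})")
```

With these readings the fitted exponent is about -0.14, meaning each tenfold increase in compute lowers the projected loss by roughly a quarter.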

**3. Relationship Between Lines:**

* The MoBA line starts significantly above the Full Attention line at low compute (< ~0.8 PFLOP/s-days).

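* The two lines intersect at approximately **0.8 - 1.0 PFLOP/s-days**, where both project a loss of roughly **2.3 - 2.4**. Note that the readings listed above for 1 PFLOP/s-days (MoBA ≈ 2.5, Full Attention ≈ 2.2) do not yet show the lines meeting, so the by-eye values should be treated as rough.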

* For compute greater than ~1 PFLOP/s-days, the MoBA Projection line falls **below** the Full Attention Projection line, indicating a lower projected loss for the same amount of compute in this regime.
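One way to sanity-check the relationship between the two lines is to interpolate the Full Attention readings in log-log space and compare them with the MoBA readings at the same compute. This is a rough sketch built entirely on the approximate values listed earlier; `loglog_interp` is an illustrative helper.

```python
import math

# Approximate readings from the lists above: (compute in PFLOP/s-days, loss).
moba = [(0.3, 6.0), (0.5, 4.0), (1.0, 2.5), (2.0, 2.0), (5.0, 1.8)]
full = [(0.1, 2.9), (1.0, 2.2), (10.0, 1.5)]


def loglog_interp(points, compute):
    """Interpolate the loss at `compute` along straight log-log segments."""
    for (c0, l0), (c1, l1) in zip(points, points[1:]):
        if c0 <= compute <= c1:
            t = math.log(compute / c0) / math.log(c1 / c0)
            return math.exp(math.log(l0) + t * math.log(l1 / l0))
    raise ValueError("compute is outside the range covered by the readings")


# Ratio > 1 means MoBA's reading is above Full Attention at that compute.
ratios = [(c, loss / loglog_interp(full, c)) for c, loss in moba]
for c, ratio in ratios:
    print(f"{c:>4} PFLOP/s-days: MoBA / Full Attention = {ratio:.2f}")
```

With the rounded readings as listed, the ratio narrows from above 2 at 0.3 PFLOP/s-days to within a few percent of 1 at 2 PFLOP/s-days but stays above 1. Pinning down the crossing would therefore need the fitted projection coefficients rather than by-eye values.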

### Key Observations

1. **Crossing Point:** The most significant feature is the intersection of the two projection lines. This suggests a critical compute threshold where the efficiency advantage shifts from one method (Full Attention) to the other (MoBA).

2. **Scaling Behavior:** The MoBA projection shows a "knee" or flattening curve, suggesting diminishing returns at higher compute levels. The Full Attention projection maintains a more constant rate of improvement (on a log-log plot).

3. **Initial Disparity:** At very low compute budgets, the Full Attention model is projected to have a substantially lower loss than the MoBA model.

### Interpretation

This chart presents a comparative scaling-law analysis for two attention mechanisms: "MoBA" (Mixture of Block Attention) and standard "Full Attention."

* **What the data suggests:** The projection implies that MoBA is less compute-efficient at very small scales but improves faster with additional compute over the plotted range. Once a sufficient compute threshold (~1 PFLOP/s-days) is crossed, it is projected to achieve lower loss than a Full Attention model trained with the same compute budget.

* **How elements relate:** The x-axis (compute) is the independent variable driving the reduction in the dependent variable (model loss). The two lines represent two different functions (scaling laws) mapping compute to performance. The crossing point is the key insight, defining the regime where one approach becomes more favorable than the other.

* **Notable implications:** This kind of analysis informs the choice of architecture for a given compute budget. Taken at face value, the projection favors MoBA for budgets above the crossing point and Full Attention below it. These are projections rather than measured results, and the flattening of the MoBA curve hints at a possible performance ceiling, so the advantage may not persist at extreme scales without further architectural changes.
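If both projections are approximated as pure power laws, `L1(C) = a1 * C^b1` and `L2(C) = a2 * C^b2`, the crossing point has a closed form: setting the two equal gives `C* = (a2 / a1)^(1 / (b1 - b2))`. The sketch below implements that formula. The coefficients in the example are hypothetical placeholders chosen only to exercise the function; they are not read from the chart, and the MoBA curve as described here is not a single straight line, so this is an approximation at best.

```python
def crossing_compute(a1: float, b1: float, a2: float, b2: float) -> float:
    """Return C* where a1 * C**b1 equals a2 * C**b2 (requires b1 != b2)."""
    if b1 == b2:
        raise ValueError("parallel power laws never cross")
    return (a2 / a1) ** (1.0 / (b1 - b2))


# Hypothetical coefficients for illustration only (not taken from the chart):
# a steeper curve with a higher prefactor versus a shallower one.
a_steep, b_steep = 2.4, -0.20
a_shallow, b_shallow = 2.2, -0.14

c_star = crossing_compute(a_steep, b_steep, a_shallow, b_shallow)
loss_at_crossing = a_steep * c_star ** b_steep
print(f"crossing at C* = {c_star:.2f} PFLOP/s-days, loss = {loss_at_crossing:.2f}")
```

Below `C*` the curve with the shallower exponent has the lower loss, and above it the steeper curve wins, which is the regime change the crossing point represents.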