## Histogram: Distribution of Human Subjects in Validated XAI Papers

### Overview

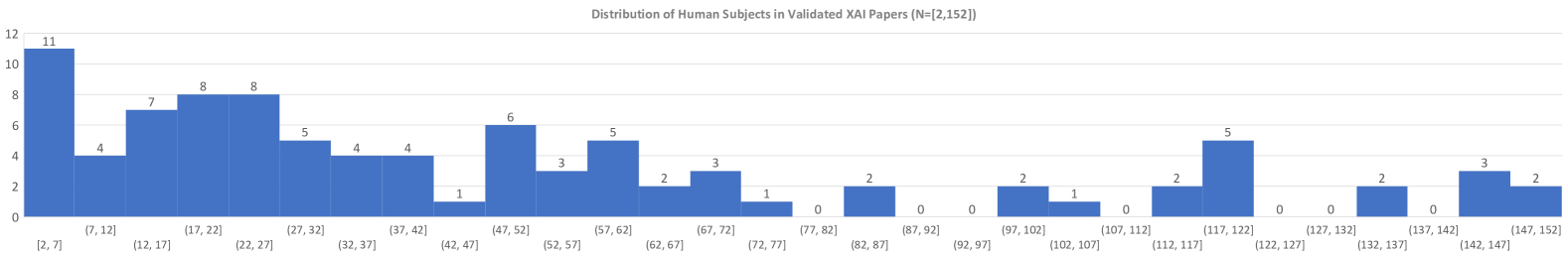

The image displays a histogram titled "Distribution of Human Subjects in Validated XAI Papers (N=[2,152])". It visualizes the frequency distribution of the number of human participants per paper across a set of validated papers in the field of Explainable Artificial Intelligence (XAI). The chart shows a right-skewed distribution: most studies use a small number of human subjects, with a long tail of studies using progressively larger sample sizes.

### Components/Axes

* **Chart Title:** "Distribution of Human Subjects in Validated XAI Papers (N=[2,152])" (Top Center).

* **Y-Axis (Vertical):** Represents the count or frequency of papers. The axis is labeled with numerical markers: 0, 2, 4, 6, 8, 10, 12.

* **X-Axis (Horizontal):** Represents the range (bin) of human subjects per paper. Bin edges step by 5 from 2 to 152, giving 30 bins. The first bin is closed, `[2, 7]`, so it includes the minimum value of 2; every later bin is half-open, `(a, b]`, excluding its lower edge. The bins are, from left to right:

`[2, 7]`, `(7, 12]`, `(12, 17]`, `(17, 22]`, `(22, 27]`, `(27, 32]`, `(32, 37]`, `(37, 42]`, `(42, 47]`, `(47, 52]`, `(52, 57]`, `(57, 62]`, `(62, 67]`, `(67, 72]`, `(72, 77]`, `(77, 82]`, `(82, 87]`, `(87, 92]`, `(92, 97]`, `(97, 102]`, `(102, 107]`, `(107, 112]`, `(112, 117]`, `(117, 122]`, `(122, 127]`, `(127, 132]`, `(132, 137]`, `(137, 142]`, `(142, 147]`, `(147, 152]`.

* **Data Series:** A single series represented by blue vertical bars. The exact count for each bin is annotated directly above its corresponding bar.
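
The bin layout described above can be reproduced with a short sketch. The function name `bin_labels` and its parameters are illustrative assumptions, not something taken from the chart's source; the only inputs from the figure are the first edge (2), the last edge (152), and the bin width (5).

```python
def bin_labels(start=2, stop=152, width=5):
    """Return interval labels: the first bin is closed, the rest are half-open."""
    # Edges run 2, 7, 12, ..., 152 (inclusive of the final edge).
    edges = list(range(start, stop + 1, width))
    labels = []
    for i, (lo, hi) in enumerate(zip(edges[:-1], edges[1:])):
        opener = "[" if i == 0 else "("  # only the first bin includes its lower edge
        labels.append(f"{opener}{lo}, {hi}]")
    return labels


labels = bin_labels()
print(len(labels))            # 30
print(labels[0], labels[-1])  # [2, 7] (147, 152]
```

Closing only the first bin on the left is a common convention: it keeps the minimum observation inside the histogram while every other edge value is counted exactly once.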

### Detailed Analysis

The following table lists each bin (range of human subjects) and the corresponding number of papers (frequency) as labeled on the chart:

| Bin (Range of Human Subjects) | Number of Papers |
| :--- | :--- |
| [2, 7] | 11 |
| (7, 12] | 4 |
| (12, 17] | 7 |
| (17, 22] | 8 |
| (22, 27] | 8 |
| (27, 32] | 5 |
| (32, 37] | 4 |
| (37, 42] | 4 |
| (42, 47] | 1 |
| (47, 52] | 6 |
| (52, 57] | 3 |
| (57, 62] | 5 |
| (62, 67] | 2 |
| (67, 72] | 3 |
| (72, 77] | 1 |
| (77, 82] | 0 |
| (82, 87] | 2 |
| (87, 92] | 0 |
| (92, 97] | 0 |
| (97, 102] | 2 |
| (102, 107] | 1 |
| (107, 112] | 0 |
| (112, 117] | 2 |
| (117, 122] | 5 |
| (122, 127] | 0 |
| (127, 132] | 0 |
| (132, 137] | 2 |
| (137, 142] | 0 |
| (142, 147] | 3 |
| (147, 152] | 2 |

**Trend Verification:** The visual trend is a strong right skew. The tallest bar is the first bin `[2, 7]` with 11 papers. The frequency generally decreases as the number of subjects increases, but with notable fluctuations and a long tail extending to the maximum bin of `(147, 152]`.
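
One rough way to check the skew numerically is to compare an approximate mean and median computed from the binned counts. The sketch below assumes every paper in a bin sits at the bin's midpoint, which is an approximation (the chart does not give per-paper values); the variable names are illustrative.

```python
from statistics import mean, median

# Counts per bin, in the order listed in the table above.
COUNTS = [11, 4, 7, 8, 8, 5, 4, 4, 1, 6, 3, 5, 2, 3, 1,
          0, 2, 0, 0, 2, 1, 0, 2, 5, 0, 0, 2, 0, 3, 2]
FIRST_EDGE, WIDTH = 2, 5

# Midpoint of each bin: 4.5, 9.5, ..., 149.5.
midpoints = [FIRST_EDGE + WIDTH * i + WIDTH / 2 for i in range(len(COUNTS))]

# Expand to one midpoint value per paper (an approximation of the raw data).
approx_sample = [m for m, c in zip(midpoints, COUNTS) for _ in range(c)]

approx_mean = mean(approx_sample)
approx_median = median(approx_sample)

# A mean well above the median is the usual signature of a right-skewed sample.
print(round(approx_mean, 1), approx_median)
```

Under the midpoint assumption the mean lands well above the median, which is consistent with the long right tail seen in the chart.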

### Key Observations

1. **Dominant Small Sample Sizes:** The most frequent category is studies with 2 to 7 human subjects (11 papers). The next most common ranges are 12-27 subjects, with several bins containing 7-8 papers each.

2. **Right-Skewed Distribution:** The histogram has a long tail to the right. Of the 91 papers tallied in the table above, 52 (about 57%) fall in bins up to 47 subjects, while 15 fall in bins above 102 subjects.

3. **Gaps in Distribution:** There are several bins with a frequency of 0, particularly in the higher ranges (e.g., 77-82, 87-97, 107-112, 122-132, 137-142). This suggests a non-uniform, potentially clustered approach to sample size selection in the literature.

4. **Secondary Peaks:** There are minor secondary peaks in frequency around the `(47, 52]` bin (6 papers) and the `(117, 122]` bin (5 papers), which may represent common sample size targets for certain types of user studies.

5. **Total Count Discrepancy:** The title states N=[2,152], which may refer to a total of 2,152 papers analyzed. Summing the frequencies from all bars yields 91 papers represented in this histogram. Under that reading, the histogram shows the distribution for a subset of the total papers: specifically, those that included human subject validation. Because 2 and 152 are also the lowest and highest bin edges, the bracketed value may instead denote the range of subject counts, in which case there is no discrepancy.
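
The tallies behind these observations can be checked directly from the table's counts. This is a minimal sketch; the constant and variable names are illustrative, and the only data used are the per-bin counts and the bin edges (2 to 152 in steps of 5).

```python
# Counts per bin, in the order listed in the table.
COUNTS = [11, 4, 7, 8, 8, 5, 4, 4, 1, 6, 3, 5, 2, 3, 1,
          0, 2, 0, 0, 2, 1, 0, 2, 5, 0, 0, 2, 0, 3, 2]
WIDTH = 5
UPPER_EDGES = [7 + WIDTH * i for i in range(len(COUNTS))]  # 7, 12, ..., 152

# Total number of papers represented by the bars.
total = sum(COUNTS)  # 91

# Papers in bins whose upper edge is at most 47 subjects.
up_to_47 = sum(c for c, hi in zip(COUNTS, UPPER_EDGES) if hi <= 47)  # 52

# Papers in bins that start at 102 subjects or more.
above_102 = sum(c for c, hi in zip(COUNTS, UPPER_EDGES) if hi - WIDTH >= 102)  # 15

# Bins with no papers, as (lower edge, upper edge) pairs.
empty_bins = [(hi - WIDTH, hi) for c, hi in zip(COUNTS, UPPER_EDGES) if c == 0]

print(total, up_to_47, above_102)
print(empty_bins)
```

The empty bins reported by this check are (77, 82], (87, 92], (92, 97], (107, 112], (122, 127], (127, 132], and (137, 142].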

### Interpretation

This histogram provides a quantitative snapshot of experimental practice in validated XAI research. The data suggests that **human-subject validation in XAI is predominantly conducted with small-scale studies**: 38 of the 91 papers tallied above fall in bins of 27 or fewer participants. This is consistent with common practice in HCI and user-study research, where in-depth qualitative insights or pilot studies are valued and large-N studies are resource-intensive.

The right-skewed distribution and the presence of gaps imply that **there is no standard or widely adopted sample size** for XAI validation studies. The secondary peaks at specific ranges (like ~50 and ~120 subjects) might correspond to conventions from related fields (e.g., psychology) or practical constraints of online participant recruitment platforms.

If the title's N=[2,152] is read as a total of 2,152 papers, the difference between that total and the 91 papers with human-subject data shown here is a critical finding. It would imply that **the majority of "validated" XAI papers may not involve direct human evaluation**, relying instead on other forms of validation (e.g., technical metrics, case studies), and would highlight a potential gap in the field between algorithmic development and human-centered evaluation. This reading is uncertain, since the bracketed value also matches the lowest and highest bin edges (2 and 152) and may simply give the range of subject counts. Either way, the chart argues for a need to understand and potentially improve the rigor and scale of human-subject research in XAI.