## Diagram: Multimodal AI Grounding Process

### Overview

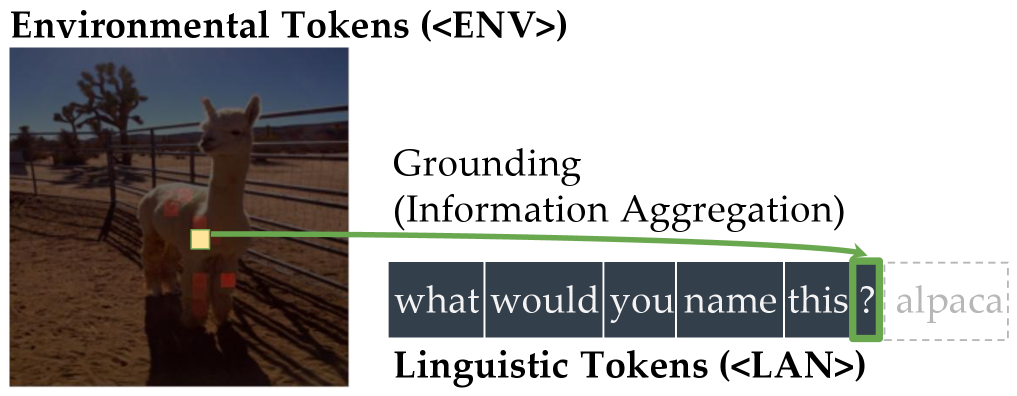

The image is a conceptual diagram illustrating a multimodal AI process where visual information from an image ("Environmental Tokens") is connected to a linguistic query ("Linguistic Tokens") through a process labeled "Grounding (Information Aggregation)". The diagram uses a photograph of an alpaca as the visual input and a text sequence as the linguistic input.

### Components/Axes

The diagram is composed of two primary regions:

1. **Left Region (Environmental Tokens):**

* **Title:** "Environmental Tokens (<ENV>)" is displayed at the top left.

* **Content:** A photograph of a light-colored alpaca standing in a dirt enclosure. A metal fence and Joshua trees are visible in the background under a blue sky.

* **Annotations:** Several small, colored square markers are superimposed on the alpaca's body (red, orange, yellow). A single **yellow square** on the alpaca's side is the origin point for a connecting arrow.

2. **Right Region (Linguistic Tokens & Process):**

* **Process Label:** "Grounding (Information Aggregation)" is written in the upper-middle area.

* **Linguistic Sequence:** A horizontal row of dark blue boxes containing the white text: `what` | `would` | `you` | `name` | `this` | `?`. This is labeled below as "Linguistic Tokens (<LAN>)".

* **Output/Answer:** To the right of the question mark box, there is a dashed-outline box containing the word `alpaca` in light gray text.

* **Connection:** A **green arrow** originates from the yellow square on the alpaca in the photograph and points directly to the question mark (`?`) box in the linguistic token sequence.

### Detailed Analysis

* **Text Transcription:**

* Top Title: `Environmental Tokens (<ENV>)`

* Process Label: `Grounding (Information Aggregation)`

* Linguistic Tokens (in boxes): `what`, `would`, `you`, `name`, `this`, `?`

* Label below tokens: `Linguistic Tokens (<LAN>)`

* Answer in dashed box: `alpaca`

* **Spatial Grounding & Flow:**

* The **yellow square** is positioned on the mid-left side of the alpaca's torso in the photograph.

* The **green arrow** flows from this specific visual point (left side of image) to the linguistic token representing the question (right side of image).

* The answer (`alpaca`) sits in a dashed box immediately to the right of the question-mark box, suggesting it is the generated or retrieved response rather than part of the input.

* **Component Isolation:**

* **Header:** Contains the title "Environmental Tokens (<ENV>)".

* **Main Diagram:** Contains the photograph, the "Grounding" label, the token sequence, and the connecting arrow.

* **Footer:** Contains the label "Linguistic Tokens (<LAN>)".

### Key Observations

1. The diagram explicitly models two input streams, one per modality: visual data (`<ENV>`) and textual data (`<LAN>`).

2. The core operation is "Grounding," glossed in the label as "Information Aggregation," which links a specific region of the visual input to a specific token in the linguistic input.

3. The process is demonstrated with a concrete example: the system is asked to name the subject of the image, and the answer "alpaca" is provided.

4. The colored markers on the alpaca (red, orange, yellow) suggest that multiple visual features or regions can be identified and potentially grounded, though only the yellow one is used in this specific flow.

### Interpretation

This diagram is a schematic representation of a **multimodal grounding mechanism** in an AI system. It visually explains how the model connects raw sensory data (pixels in an image) with symbolic language (words and punctuation).

* **What it demonstrates:** The system doesn't just see an image and read text separately. It performs an active alignment ("grounding") where a specific visual feature (represented by the yellow square on the alpaca) is associated with the conceptual query ("what would you name this ?"). This aggregated information allows the model to produce the correct linguistic token (`alpaca`) as an answer.

* **Relationships:** The green arrow is the most critical element, representing the inference or attention link that bridges the modalities. The dashed box for "alpaca" indicates it is an output derived from the grounding process, not an initial input.

* **Underlying Concept:** The diagram argues that for an AI to understand and respond to a question about an image, it must first "ground" the linguistic query in the relevant parts of the visual scene. The "Information Aggregation" subtitle suggests this involves combining features from the identified visual region with the context of the question to formulate a response. The example is simple (object naming), but the framework implies applicability to more complex visual question-answering tasks.
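
The diagram does not say how the aggregation is computed. One common way to realize such a link is attention, so the sketch below assumes scaled dot-product attention: the `?` token acts as a query, each marked image region acts as both key and value, and the weighted sum of region features is the "aggregated" information. All feature vectors, the `ground` helper, and the marker names are hypothetical and chosen only to mirror the figure.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def ground(query, env_tokens):
    """Aggregate environmental token features for one linguistic query token.

    Assumption: scaled dot-product attention stands in for the diagram's
    "Grounding (Information Aggregation)" step; the figure itself does not
    specify the mechanism.
    """
    dim = len(query)
    # Similarity between the query and each environmental token.
    scores = [
        sum(q * k for q, k in zip(query, tok)) / math.sqrt(dim)
        for tok in env_tokens
    ]
    weights = softmax(scores)
    # Weighted sum of environmental features: the aggregated information.
    aggregated = [
        sum(w * tok[i] for w, tok in zip(weights, env_tokens))
        for i in range(dim)
    ]
    return weights, aggregated


# Hypothetical toy features for the three colored markers on the alpaca.
env_tokens = {
    "red_marker": [1.0, 0.0, 0.0],
    "orange_marker": [0.0, 1.0, 0.0],
    "yellow_marker": [0.0, 0.0, 1.0],
}
# Hypothetical embedding of the "?" token, built to align with the yellow marker.
query = [0.1, 0.2, 3.0]

names = list(env_tokens)
weights, aggregated = ground(query, [env_tokens[n] for n in names])
strongest = names[weights.index(max(weights))]
print(strongest, [round(w, 3) for w in weights])
```

With these toy values the largest weight falls on `yellow_marker`, which corresponds to the single green arrow in the figure. The smaller but non-zero weights on the other markers illustrate why "aggregation" can draw on several regions at once.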