# Technical Document Extraction: Relative Expert Load Heatmaps

## 1. Document Overview

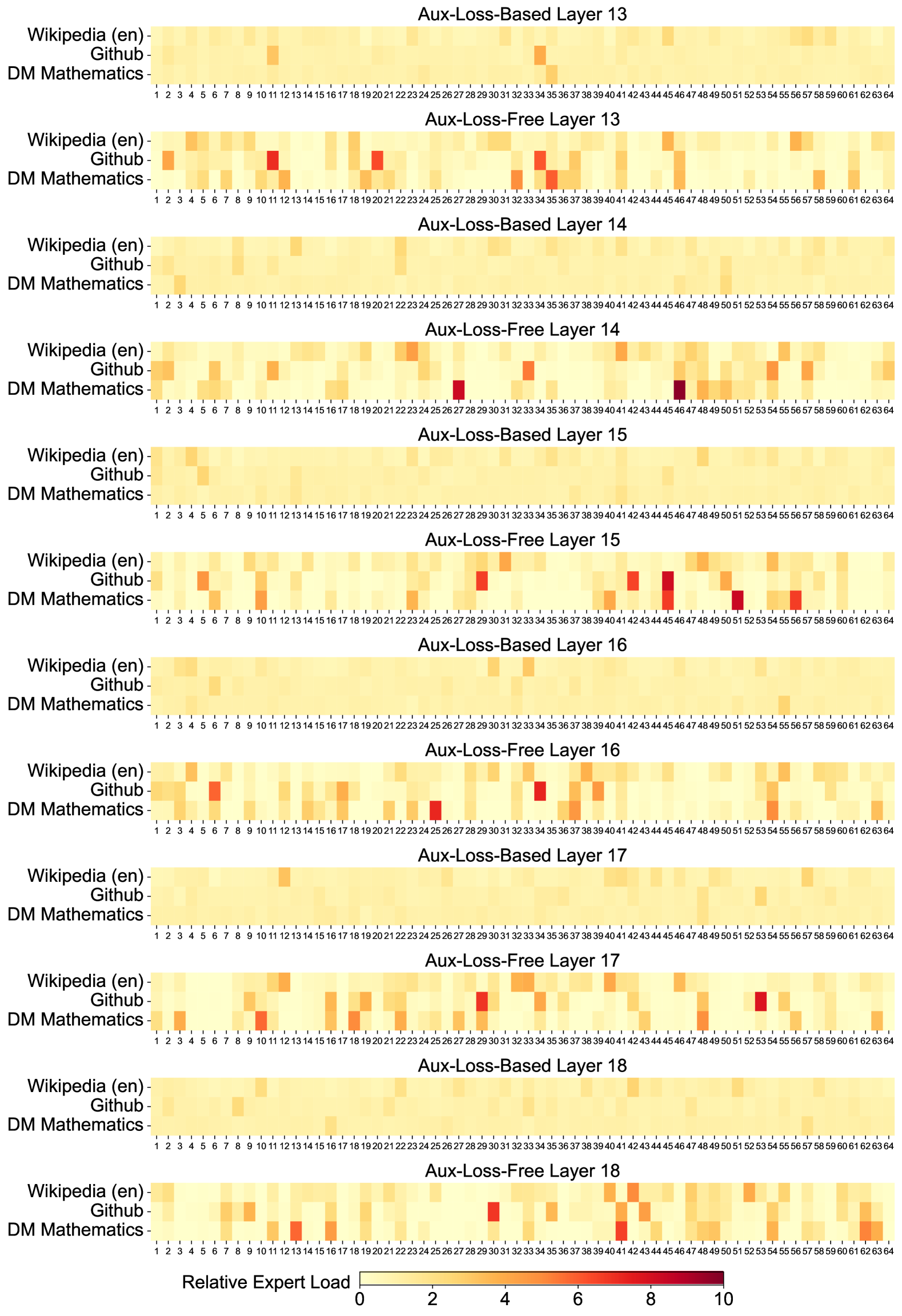

This image contains 12 heatmaps arranged in a vertical stack. The charts compare the "Relative Expert Load" across layers of a Mixture-of-Experts (MoE) neural network, contrasting two load-balancing methods used during training: **Aux-Loss-Based** and **Aux-Loss-Free**.

## 2. Global Metadata and Legend

* **Language:** English (en).

* **Metric:** Relative Expert Load.

* **Color Scale (Legend):** Located at the bottom center.

    * **Range:** 0 to 10.

    * **Gradient:** Light yellow (0) $\rightarrow$ Orange (5) $\rightarrow$ Deep Red (10).

* **X-Axis (Common to all charts):** Expert Index, ranging from **1 to 64**.

* **Y-Axis (Common to all charts):** Data Domains.

    1. Wikipedia (en)

    2. Github

    3. DM Mathematics
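The figure does not define the metric. A common reading, assumed here, is that relative expert load is the ratio of an expert's observed load to the load it would receive if routing were perfectly balanced. The sketch below computes that ratio from a list of routing decisions. The function name `relative_expert_load` and the toy data are hypothetical and not taken from the source.

```python
from collections import Counter

def relative_expert_load(expert_assignments, num_experts=64):
    """Return {expert_index: relative_load} for experts 1..num_experts.

    expert_assignments: iterable of 1-based expert indices, one entry per
    (token, selected expert) routing decision. ASSUMPTION: relative load is
    observed load divided by the perfectly balanced load.
    """
    counts = Counter(expert_assignments)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no routing decisions supplied")
    # Load each expert would carry if all decisions were spread evenly.
    balanced = total / num_experts
    return {e: counts.get(e, 0) / balanced for e in range(1, num_experts + 1)}

# Toy example with 4 experts: expert 1 receives 5 of 8 routing decisions.
toy_assignments = [1, 1, 1, 1, 1, 2, 3, 4]
toy_loads = relative_expert_load(toy_assignments, num_experts=4)
print(toy_loads)
```

Under this assumed definition, a value of 1 means the expert carries exactly its balanced share, and the relative loads always sum to the number of experts.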

## 3. Component Analysis by Layer

The heatmaps are presented in pairs (Aux-Loss-Based vs. Aux-Loss-Free) for Layers 13 through 18.

### Layer 13

* **Aux-Loss-Based Layer 13:** Shows a very uniform, low-intensity distribution (mostly light yellow). A slight increase in load is visible for Github at Expert 34 and DM Mathematics at Expert 35.

* **Aux-Loss-Free Layer 13:** Shows significantly higher variance and specialization.

    * **Wikipedia:** Peaks around Experts 2, 5, 10.

    * **Github:** Strong peak (Red, ~8-9) at Expert 11; secondary peaks at 20, 34, 46.

    * **DM Mathematics:** Peaks at Experts 12, 33, 35, 46, 58, 62.

### Layer 14

* **Aux-Loss-Based Layer 14:** Highly uniform distribution. Almost no visible specialization; all values appear to be $<2$.

* **Aux-Loss-Free Layer 14:**

    * **Wikipedia:** Moderate load at Experts 1, 24, 44, 55.

    * **Github:** Moderate load at Experts 6, 11, 33, 54, 57.

    * **DM Mathematics:** Distinct high-intensity peaks (Deep Red, ~10) at Experts **27** and **46**.

### Layer 15

* **Aux-Loss-Based Layer 15:** Uniform distribution with very slight intensity increases for Github at Expert 5.

* **Aux-Loss-Free Layer 15:**

    * **Wikipedia:** Moderate load at Experts 2, 31.

    * **Github:** Strong peaks at Experts 6, 29, 42, 45 (Red, ~9).

    * **DM Mathematics:** Strong peaks at Experts 11, 23, 41, 45, 52, 57.

### Layer 16

* **Aux-Loss-Based Layer 16:** Uniform distribution. Slight yellow-orange tint at Expert 33 for Wikipedia and Expert 55 for DM Mathematics.

* **Aux-Loss-Free Layer 16:**

    * **Wikipedia:** Peaks at Experts 2, 5, 30, 41.

    * **Github:** Strong peak at Expert 6 (Orange-Red, ~7).

    * **DM Mathematics:** Peaks at Experts 17, 25, 34, 54.

### Layer 17

* **Aux-Loss-Based Layer 17:** Uniform distribution. Slight intensity at Expert 11 for Wikipedia.

* **Aux-Loss-Free Layer 17:**

    * **Wikipedia:** Peaks at Experts 11, 45.

    * **Github:** Strong peak at Expert 29 (Red, ~8).

    * **DM Mathematics:** Peaks at Experts 2, 10, 17, 21, 34, 47, 53 (Red, ~9).

### Layer 18

* **Aux-Loss-Based Layer 18:** Uniform distribution. Minimal variation across all 64 experts.

* **Aux-Loss-Free Layer 18:**

    * **Wikipedia:** Peaks at Experts 1, 40.

    * **Github:** Peaks at Experts 9, 11, 30, 41, 43.

    * **DM Mathematics:** Strong peaks at Experts 15, 41, 54, 63.

## 4. Key Trends and Observations

1. **Methodological Contrast:** The **Aux-Loss-Based** models exhibit a "load balancing" effect where the work is spread almost evenly across all 64 experts. This results in a "washed out" heatmap with very few distinct features.

2. **Expert Specialization:** The **Aux-Loss-Free** models show high levels of specialization. Specific experts (e.g., Expert 46 in Layer 14 for DM Mathematics) take on a disproportionately high relative load (up to 10x the average).

3. **Domain Separation:** In the Aux-Loss-Free charts, different domains (Wikipedia vs. Github vs. Math) often activate different sets of experts within the same layer, indicating the model has learned to route domain-specific information to specialized sub-networks.

4. **Layer-to-Layer Variation:** Specialization is present in every Aux-Loss-Free layer, but the specific "hot" experts differ from layer to layer. This suggests that routing is learned per layer rather than tied to fixed expert indices across the depth of the network.
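The domain-separation observation can be checked roughly against the peak lists transcribed in Section 3. The sketch below computes the Jaccard overlap between the sets of peak experts for each pair of domains in Aux-Loss-Free Layers 14 and 18. This is an illustrative measure chosen here, not one used in the figure, and the peak sets are visual readings rather than thresholded values. The helper names `jaccard` and `domain_overlap` are hypothetical.

```python
from itertools import combinations

# Peak experts as listed in Section 3 for the Aux-Loss-Free panels.
# These are visual readings from the heatmaps, not measured values.
peaks = {
    14: {
        "Wikipedia": {1, 24, 44, 55},
        "Github": {6, 11, 33, 54, 57},
        "DM Mathematics": {27, 46},
    },
    18: {
        "Wikipedia": {1, 40},
        "Github": {9, 11, 30, 41, 43},
        "DM Mathematics": {15, 41, 54, 63},
    },
}

def jaccard(a, b):
    """Size of the intersection divided by size of the union (0.0 if both empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def domain_overlap(layer_peaks):
    """Jaccard overlap for every pair of domains within one layer."""
    return {
        (d1, d2): jaccard(layer_peaks[d1], layer_peaks[d2])
        for d1, d2 in combinations(layer_peaks, 2)
    }

overlap_14 = domain_overlap(peaks[14])
overlap_18 = domain_overlap(peaks[18])

for layer, overlap in ((14, overlap_14), (18, overlap_18)):
    for pair, score in overlap.items():
        print(layer, pair, score)
```

With these transcribed lists, the Layer 14 peak sets are fully disjoint, and in Layer 18 only Expert 41 is shared (between Github and DM Mathematics). Because the lists are read by eye, the scores indicate the trend rather than a precise measurement.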