## Neural Network Diagram: Two-layer DAE with Bottleneck and Skip Connection

### Overview

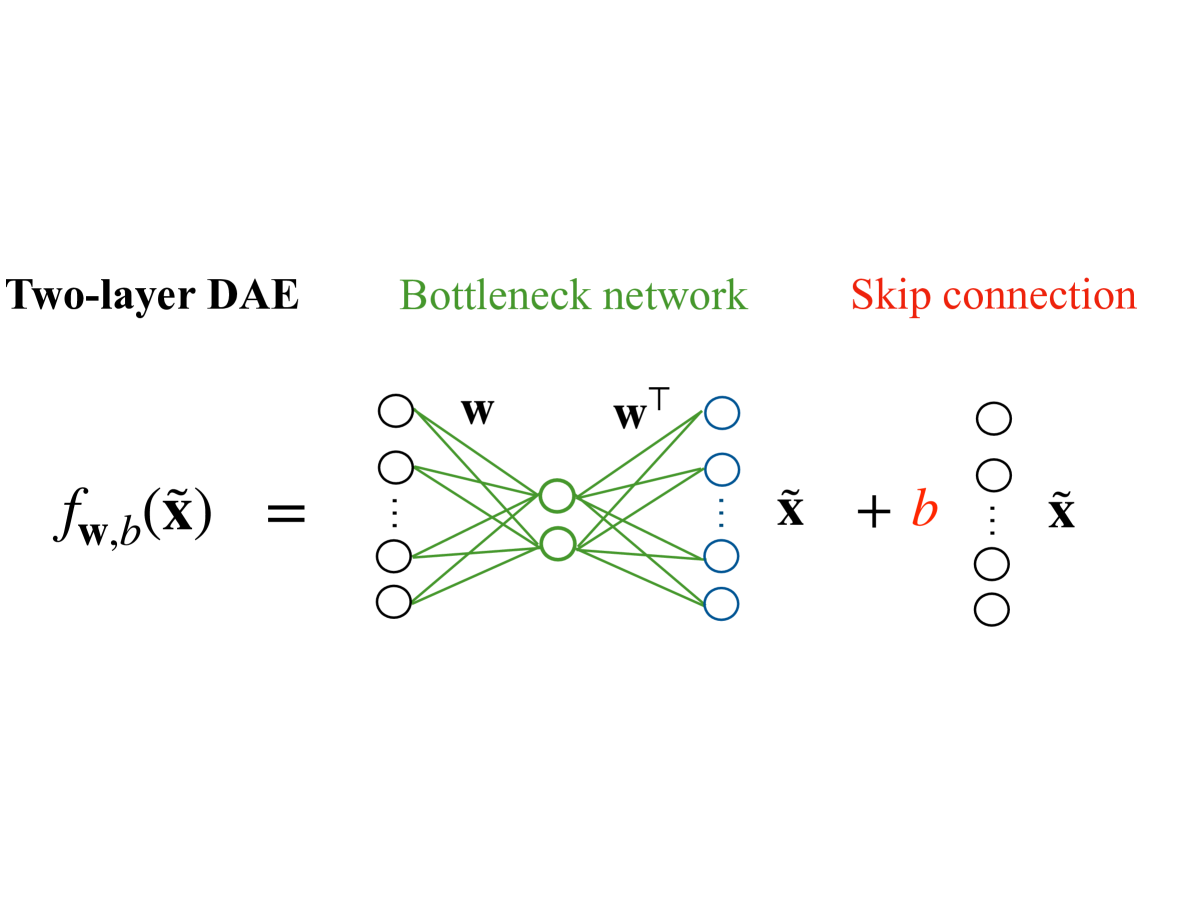

The image is a diagram illustrating the architecture of a two-layer Denoising Autoencoder (DAE) with a bottleneck network and a skip connection. It visually represents the flow of data through the network, highlighting the key components and their interconnections.

### Components/Axes

* **Labels:** Two-layer DAE (left), Bottleneck network (center, green), Skip connection (right, red)

* **Equation:** *f<sub>w,b</sub>(x̃)* = ... *x̃* + *b* ... *x̃*

* **Nodes:**
    * Input Layer: Represented by a column of four white circles on the left, with an ellipsis indicating potentially more nodes.
    * Bottleneck Layer: Represented by two green circles in the center.
    * Output Layer: Represented by a column of four blue circles to the right of the bottleneck layer, with an ellipsis indicating potentially more nodes.
    * Skip Connection Layer: Represented by a column of four white circles on the right, with an ellipsis indicating potentially more nodes.

* **Connections:**
    * Input to Bottleneck: Green lines connecting each node in the input layer to each node in the bottleneck layer. The connections are labeled with "w".
    * Bottleneck to Output: Green lines connecting each node in the bottleneck layer to each node in the output layer. The connections are labeled with "w<sup>T</sup>".
    * Skip Connection: A direct connection from the input (x̃) to the output, bypassing the bottleneck.
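The connectivity listed above can be sketched as a forward pass. This is a minimal, dependency-free illustration rather than the diagram's exact formula: the equation in the figure is elided, so the `tanh` activation, the placement of the bias `b` on the bottleneck layer, and the unscaled addition of x̃ at the output are all assumptions. The names `matvec`, `transpose`, `forward`, and `x_tilde` are hypothetical.

```python
import math

def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in m]

def transpose(m):
    """Return the transpose of matrix m as a list of rows."""
    return [list(col) for col in zip(*m)]

def forward(w, b, x_tilde):
    """Tied-weight bottleneck plus skip connection (assumed form).

    w: k x d weight matrix (k bottleneck units, d inputs)
    b: length-k bias, assumed to act on the bottleneck layer
    x_tilde: length-d input vector
    """
    # Encode: d inputs -> k bottleneck units (edges labeled "w").
    hidden = [math.tanh(z + bi) for z, bi in zip(matvec(w, x_tilde), b)]
    # Decode: k bottleneck units -> d outputs (edges labeled "w^T").
    decoded = matvec(transpose(w), hidden)
    # Skip connection: add the input directly to the decoded output.
    return [o + x for o, x in zip(decoded, x_tilde)]

# Four inputs and two bottleneck units, matching the nodes drawn explicitly.
w = [[0.1, -0.2, 0.3, 0.0],
     [0.0, 0.5, -0.1, 0.2]]
b = [0.0, 0.0]
x_tilde = [1.0, 0.0, -1.0, 0.5]
y = forward(w, b, x_tilde)
```

With all weights and biases set to zero, the bottleneck path contributes nothing and `forward` returns the input unchanged, which makes the role of the skip connection easy to check in isolation.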

### Detailed Analysis

* **Input Layer:** Four nodes are explicitly shown, with an ellipsis suggesting more.

* **Bottleneck Layer:** Two nodes are shown.

* **Output Layer:** Four nodes are explicitly shown, with an ellipsis suggesting more.

* **Skip Connection Layer:** Four nodes are explicitly shown, with an ellipsis suggesting more.

* **Connections:**
    * Each input node connects to both bottleneck nodes.
    * Each bottleneck node connects to each output node.
    * The skip connection directly connects the input to the output.

* **Equation Breakdown:**
    * *f<sub>w,b</sub>(x̃)* represents the function performed by the DAE, where *w* represents the weights and *b* represents the bias. *x̃* represents the input.
    * The equation shows that the output is a function of the input *x̃*, the weights *w*, and the bias *b*. Judging from the visible terms, *b* is added as a bias to the transformed input, and the trailing *x̃* term corresponds to the skip connection, which feeds the input directly to the output.

### Key Observations

* The diagram clearly illustrates the bottleneck architecture, where the input is compressed into a lower-dimensional representation before being reconstructed.

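* The skip connection provides a direct path from the input to the output, so information that does not pass through the bottleneck can still reach the output. This may help reconstruction quality.

* Color distinguishes the parts of the network: green for the bottleneck nodes and their connections, blue for the output nodes, and red for the skip connection label.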

### Interpretation

The diagram represents a two-layer Denoising Autoencoder (DAE) architecture. The network encodes its input into a lower-dimensional space (the bottleneck) and then decodes it back to the original input space, which forces it to keep only the most important features of the data. As the connection labels indicate, the decoder reuses the encoder weights in transposed form (*w* and *w<sup>T</sup>*). The skip connection lets the input bypass the bottleneck, potentially improving reconstruction accuracy by passing through detail that the bottleneck would otherwise discard.

The equation *f<sub>w,b</sub>(x̃)* = ... *x̃* + *b* ... *x̃* summarizes this transformation: the input *x̃* is transformed by the weights *w* and bias *b*, and the result is combined with the original input *x̃* via the skip connection.
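A denoising autoencoder is trained to reconstruct a clean input from a corrupted version of it, and the tilde in x̃ is the usual notation for that corrupted input. The sketch below illustrates this setup only. It assumes additive Gaussian noise and a mean-squared-error loss, neither of which is stated in the diagram, and it uses the identity function as a placeholder for the network. The names `corrupt`, `mse`, `network`, and `sigma` are hypothetical.

```python
import random

def corrupt(x, sigma, rng):
    """Add independent Gaussian noise with std sigma (assumed noise model)."""
    return [xi + rng.gauss(0.0, sigma) for xi in x]

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)

def network(v):
    """Placeholder for the DAE: returns its input unchanged."""
    return list(v)

rng = random.Random(0)            # fixed seed for repeatability
x = [1.0, 0.0, -1.0, 0.5]         # clean input
x_tilde = corrupt(x, sigma=0.1, rng=rng)

# Reconstruction loss is measured against the clean x, not against x_tilde.
loss = mse(network(x_tilde), x)
```

Because the placeholder returns x̃ unchanged, `loss` here is just the average squared noise. A trained network would be expected to drive it lower.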