
## Diagram: Two-layer Denoising Autoencoder (DAE) Architecture

### Overview

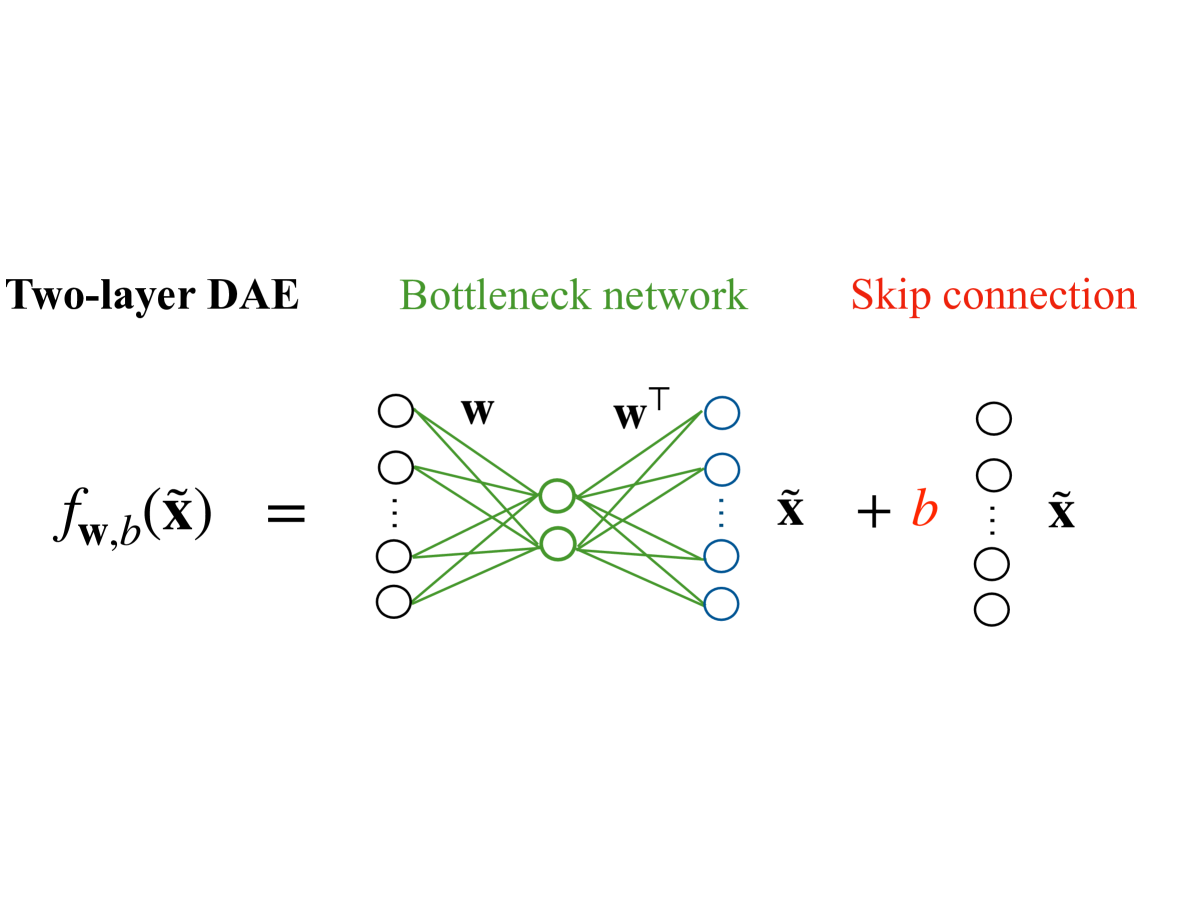

The image depicts the architecture of a two-layer Denoising Autoencoder (DAE). It illustrates the flow of data through a bottleneck network with a skip connection. The diagram is primarily a visual representation of the mathematical function `f_{w,b}(X)`.

### Components

The diagram consists of three main sections:

1. **Left Side:** The mathematical function `f_{w,b}(X)` is displayed.

2. **Center:** A bottleneck network is shown with connections labeled 'w' and 'wᵀ'.

3. **Right Side:** A skip connection is illustrated, adding a bias 'b' to the reconstructed output.

Labels:

* "Two-layer DAE" (top-left, green text)

* "Bottleneck network" (center-top, green text)

* "Skip connection" (top-right, red text)

* `f_{w,b}(X)` (left side, black text)

* 'w' (connections between input and bottleneck layers, black text)

* 'wᵀ' (connections between bottleneck and output layers, black text)

* 'X̃' (output of the bottleneck layer, black text)

* 'b' (bias added in the skip connection, black text)

* 'X̃' (final reconstructed output, black text)

### Detailed Analysis

The diagram shows a neural network with the following structure:

* **Input Layer:** Represented by a series of circles on the left. The number of nodes is not specified; an ellipsis ("...") stands in for an arbitrary number of nodes.

* **Bottleneck Layer:** A smaller set of circles in the center, representing a lower-dimensional representation of the input. The number of nodes is also not explicitly specified.

* **Output Layer:** A series of circles on the right, representing the reconstructed output. The number of nodes appears to match the input layer.

* **Connections:**

    * Connections from the input layer to the bottleneck layer are labeled 'w'.

    * Connections from the bottleneck layer to the output layer are labeled 'wᵀ' (w transpose).

    * A skip connection adds a bias 'b' to the output of the bottleneck network.

* **Mathematical Function:** The entire process is represented by the function `f_{w,b}(X)`, where:

    * `w` represents the weights of the connections.

    * `b` represents the bias.

    * `X` represents the input.

    * `X̃` represents the reconstructed output.
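The structure listed above can be sketched as a forward pass. This is a minimal illustration, not the diagram's exact definition: the `tanh` activation, the layer sizes, the vector shape of `b`, and the name `dae_forward` are all assumptions, since the figure specifies none of them.

```python
import numpy as np

def dae_forward(x, w, b, activation=np.tanh):
    """Tied-weight two-layer DAE forward pass (illustrative sketch).

    x: input vector, shape (d,)
    w: encoder weights, shape (p, d), with p < d for a bottleneck
    b: term added on the skip path, shape (d,) (assumed to be a vector)
    """
    hidden = activation(w @ x)  # encode: d -> p (activation is an assumption)
    decoded = w.T @ hidden      # decode with the transposed weights: p -> d
    return decoded + b          # add b to the bottleneck network's output

rng = np.random.default_rng(0)
d, p = 8, 3                     # hypothetical sizes; the diagram gives none
w = rng.normal(size=(p, d)) / np.sqrt(d)
b = np.zeros(d)
x = rng.normal(size=d)

x_tilde = dae_forward(x, w, b)
print(x_tilde.shape)  # (8,)
```

Because the decoder reuses `w.T`, the sketch has a single weight matrix. If the skip connection in the figure instead carries the input forward scaled by `b`, the final line would change accordingly; the description alone does not settle this.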

### Key Observations

The diagram highlights the key components of a DAE: the bottleneck layer for dimensionality reduction and the skip connection for preserving information. The use of 'wᵀ' on the decoder side indicates tied weights: the decoder reuses the transpose of the encoder weight matrix rather than learning a separate one. The diagram does not provide numerical values, layer sizes, or activation functions.

### Interpretation

The diagram illustrates a two-layer Denoising Autoencoder, a type of neural network used for unsupervised learning and dimensionality reduction. A DAE is trained to reconstruct the clean input from a corrupted copy, so the bottleneck layer must learn a compressed representation that is robust to noise. The skip connection helps preserve information during reconstruction. The function `f_{w,b}(X)` describes the transformation of the input `X` into the reconstructed output `X̃` using weights `w` and bias `b`. The diagram is conceptual: it conveys the general architecture rather than implementation details or performance characteristics.
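The denoising objective behind this architecture can be sketched as follows. The Gaussian noise model, the squared-error loss, the `tanh` activation, and the helper names `reconstruct` and `denoising_loss` are assumptions made for illustration; the diagram does not state a training procedure.

```python
import numpy as np

def reconstruct(x_noisy, w, b):
    # Assumed forward pass: tanh bottleneck, tied decoder weights, additive b.
    return w.T @ np.tanh(w @ x_noisy) + b

def denoising_loss(x_clean, w, b, noise_std, rng):
    # Corrupt the clean input with Gaussian noise (an assumed noise model).
    x_noisy = x_clean + noise_std * rng.normal(size=x_clean.shape)
    # Score the reconstruction against the clean input, not the noisy one.
    return float(np.mean((reconstruct(x_noisy, w, b) - x_clean) ** 2))

rng = np.random.default_rng(0)
d, p = 8, 3                      # hypothetical sizes; the diagram gives none
w = rng.normal(size=(p, d)) / np.sqrt(d)
b = np.zeros(d)
x_clean = rng.normal(size=d)

loss = denoising_loss(x_clean, w, b, noise_std=0.5, rng=rng)
```

Comparing the output with the clean input is what distinguishes a denoising autoencoder from a plain one: the network sees only the corrupted vector but is penalized for deviating from the original.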