## Diagram: Two-layer DAE Architecture with Bottleneck and Skip Connection

### Overview

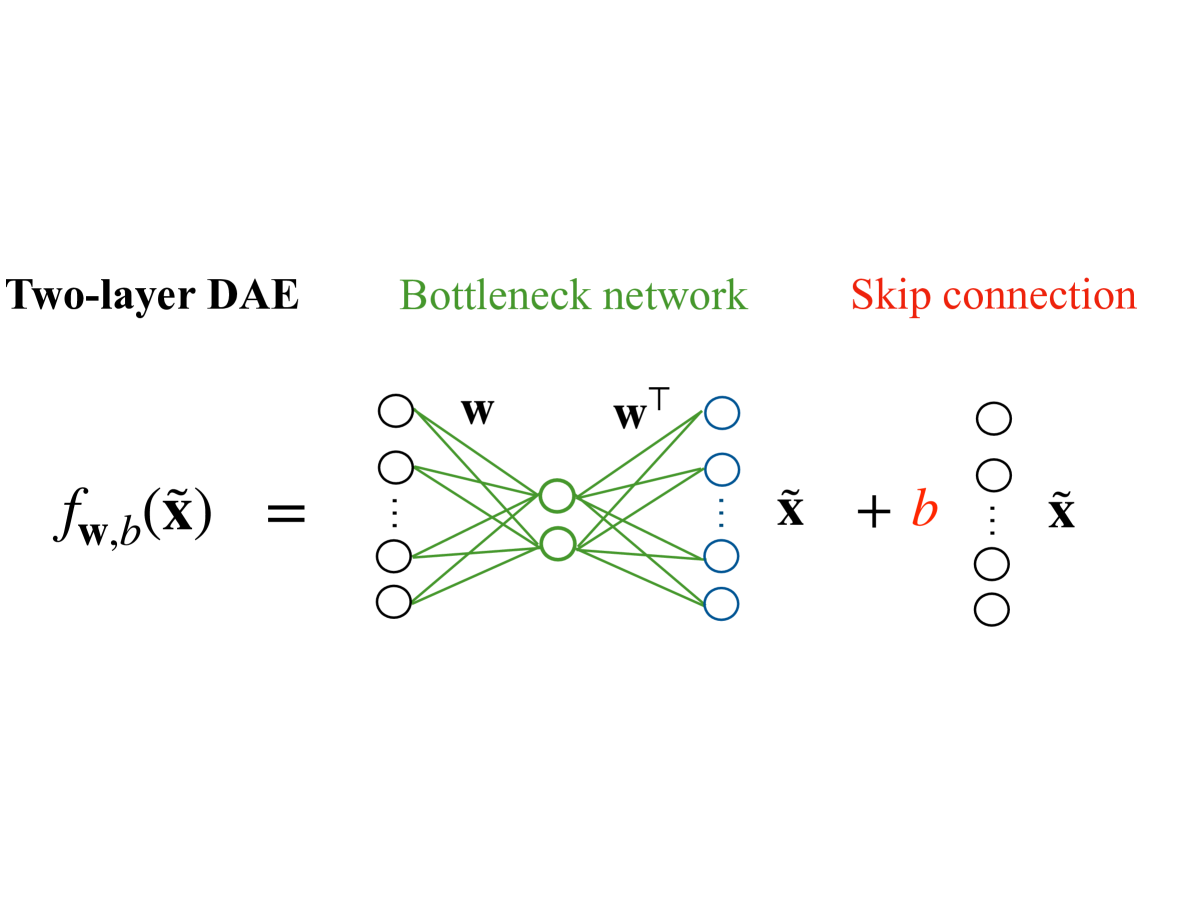

The image illustrates a two-layer DAE, most likely a denoising autoencoder (the tilde in $ \tilde{x} $ conventionally marks a corrupted input), built from a bottleneck network and a skip connection. It pairs an equation for the model's output with a visual representation of the network's structure.

### Components

1. **Left Section**:
   - **Label**: "Two-layer DAE"
   - **Equation**: $ f_{w,b}(\tilde{x}) = \tilde{x} + b $
   - **Description**: Represents the output function of the DAE, where $ \tilde{x} $ is the input and $ b $ is a bias term.

2. **Middle Section**:
   - **Label**: "Bottleneck network"
   - **Visual Elements**:
     - **Input Nodes**: Circles on the left (unlabeled).
     - **Hidden Layer(s)**: Interconnected nodes drawn as green and blue circles. Since only the matrices $ W $ and $ W^T $ appear, these most plausibly form a single bottleneck layer, with "two-layer" counting the two weight layers (encoder and decoder).
     - **Weights**:
       - $ W $: Green lines connecting the input to the bottleneck layer (encoder).
       - $ W^T $: Blue lines connecting the bottleneck layer to the output (decoder).
   - **Description**: The bottleneck compresses the input data into a lower-dimensional representation.

3. **Right Section**:
   - **Label**: "Skip connection"
   - **Visual Elements**:
     - **Input Nodes**: Circles on the left (unlabeled).
     - **Output Nodes**: Circles on the right (unlabeled).
     - **Operation**: $ \tilde{x} + b $ (red text).
   - **Description**: The skip connection adds the original input $ \tilde{x} $ to the output of the bottleneck network, so information that does not fit through the bottleneck still reaches the output.
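The components above can be summarized as a single forward pass. The following is a minimal pure-Python sketch that follows this description's reading of the figure: the output is a bottleneck term $ W^T \sigma(W\tilde{x}) $ plus the skip term $ \tilde{x} + b $. The activation (`tanh`), the treatment of $ b $ as a per-coordinate bias vector, and the helper names `matvec`, `transpose`, and `dae_forward` are assumptions for illustration, not details taken from the figure.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # row-major: Matrix[i][j] is row i, column j


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a row-major matrix by a vector."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def transpose(m: Matrix) -> Matrix:
    """Return the transpose of a row-major matrix."""
    return [list(col) for col in zip(*m)]


def dae_forward(x_tilde: Vector, W: Matrix, b: Vector) -> Vector:
    """Two-layer DAE with tied weights and a skip connection (sketch).

    Assumed form: f(x~) = W^T tanh(W x~) + x~ + b
      - W (k x d) encodes the d-dim input into a k-dim bottleneck.
      - W^T (d x k) decodes back to d dims, reusing W (tied weights).
      - x~ + b is the skip term that bypasses the bottleneck.
    tanh is a hypothetical choice of activation.
    """
    hidden = [math.tanh(h) for h in matvec(W, x_tilde)]  # k-dim bottleneck
    bottleneck_out = matvec(transpose(W), hidden)         # back to d dims
    return [o + x + bi for o, x, bi in zip(bottleneck_out, x_tilde, b)]


# Example with hypothetical values: d = 3 inputs, k = 1 bottleneck unit.
x_tilde = [1.0, 0.0, 2.0]
W = [[0.5, -0.5, 0.0]]
b = [0.1, 0.1, 0.1]
print(dae_forward(x_tilde, W, b))
```

Setting `W` to all zeros makes the bottleneck term vanish (since `tanh(0) = 0`), and `dae_forward` then returns exactly $ \tilde{x} + b $, the form of the transcribed equation.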

### Detailed Analysis

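- **Equation**: $ f_{w,b}(\tilde{x}) = \tilde{x} + b $
  - As transcribed, the output is the input $ \tilde{x} $ shifted by the bias $ b $, an affine map rather than a general linear transformation. The subscript $ w $, however, marks a dependence on the weights that the right-hand side does not show, so the transcription most likely captures only the skip term and omits the bottleneck network's output, which the skip connection is described as being added to.

- **Bottleneck Network**:
  - The network uses the weight matrix $ W $ to encode the input and its transpose $ W^T $ to decode it.
  - The green and blue lines represent the encoder ($ W $) and decoder ($ W^T $) connections, respectively; both belong to the forward pass.

- **Skip Connection**:
  - The red text $ \tilde{x} + b $ shows the input added directly to the output, bypassing the bottleneck.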

### Key Observations

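- The diagram splits the model into two paths whose outputs are summed: the bottleneck (compression) and the skip connection (information preservation).

- Encoding with $ W $ and decoding with its transpose $ W^T $ means the weights are tied: the decoder reuses the encoder's parameters instead of learning a separate matrix, which makes the encoding-decoding process symmetric.

- The skip connection carries the input straight to the output, so the bottleneck only has to learn a correction to $ \tilde{x} $ rather than rebuild it from the compressed code, a useful property when, as in denoising, the target is close to the input.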
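Tying the weights has a direct effect on model size. The sketch below counts weight parameters for a $ d \to k \to d $ autoencoder with and without tying; `weight_params` is a hypothetical helper, and biases (including $ b $) are deliberately excluded to isolate the effect of reusing $ W $ as $ W^T $.

```python
def weight_params(d: int, k: int, tied: bool) -> int:
    """Count weight entries in a d -> k -> d autoencoder (biases excluded).

    tied=True:  a single k x d matrix W, reused as W^T by the decoder.
    tied=False: a k x d encoder plus a separate d x k decoder.
    """
    if d <= 0 or k <= 0:
        raise ValueError("d and k must be positive")
    encoder = k * d                 # W maps R^d -> R^k
    decoder = 0 if tied else d * k  # a tied decoder adds no new weights
    return encoder + decoder


# Hypothetical sizes: a 784-dim input squeezed through a 32-unit bottleneck.
print(weight_params(784, 32, tied=True))   # 25088
print(weight_params(784, 32, tied=False))  # 50176
```

Tying halves the weight count and forces the decoder to mirror the encoder, which is the symmetry the $ W $ / $ W^T $ labels point to.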

### Interpretation

This architecture follows a common autoencoder design in which the bottleneck forces the network to learn a compressed, lower-dimensional representation of the input. The skip connection offsets the information lost in compression by carrying the input directly to the output, a widely used way to improve reconstruction quality. The equation $ f_{w,b}(\tilde{x}) = \tilde{x} + b $ expresses this skip path: the input, shifted only by the bias $ b $, reaches the output intact, which preserves the structure of the original data.
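The claim that the skip connection offsets information lost in compression can be made precise in a simplified setting. The derivation below is a sketch that assumes, beyond what the figure shows, a linear activation, an encoder $ W \in \mathbb{R}^{k \times d} $ with $ k < d $, and the additive skip term $ \tilde{x} + b $ used in this description:

$$
\begin{aligned}
f_{w,b}(\tilde{x}) &= \underbrace{W^{T} W \tilde{x}}_{\text{bottleneck}} + \underbrace{\tilde{x} + b}_{\text{skip}} = \left(I_d + W^{T} W\right)\tilde{x} + b, \\
\operatorname{rank}\left(W^{T} W\right) &\le k < d .
\end{aligned}
$$

Without the skip term, every output $ W^T W \tilde{x} $ lies in the row space of $ W $, a subspace of dimension at most $ k $, so any component of $ \tilde{x} $ orthogonal to it is lost. With the skip term, $ W^T W $ is positive semidefinite, so $ I_d + W^T W $ is positive definite and therefore invertible: distinct inputs always produce distinct outputs.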

No numerical data or trends are present in the image; the focus is on architectural components and their functional relationships.