
## Chart: Attention Matrix / Alignment Plot

### Overview

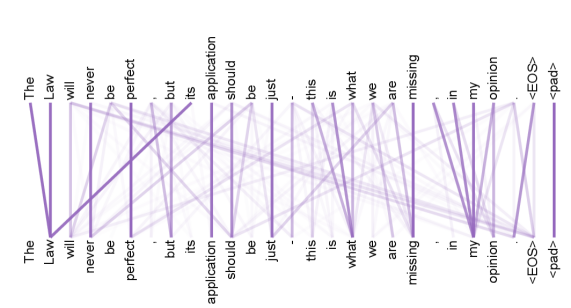

The image shows an attention (alignment) plot for a single sentence. The x-axis and y-axis both display the same 23-token sequence: "The", "Law", "will", "never", "be", "perfect", "but", "its", "application", "should", "be", "just", "this", "is", "what", "we", "are", "missing", "in", "my", "opinion", "<EOS>", "<pad>". Light purple lines connect words on the x-axis to words on the y-axis, and the intensity of each line indicates the strength of the relationship.

### Components/Axes

* **X-axis:** "The", "Law", "will", "never", "be", "perfect", "but", "its", "application", "should", "be", "just", "this", "is", "what", "we", "are", "missing", "in", "my", "opinion", "<EOS>", "<pad>".

* **Y-axis:** "The", "Law", "will", "never", "be", "perfect", "but", "its", "application", "should", "be", "just", "this", "is", "what", "we", "are", "missing", "in", "my", "opinion", "<EOS>", "<pad>".

* **Lines:** Represent attention weights or alignment scores between words. The intensity of the lines is not easily quantifiable from the image.

* **Special Tokens:** "<EOS>" (End of Sentence) and "<pad>" (Padding) are included in the sequence.
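
The image does not say how the weights behind the lines were produced. As a rough illustration only, the sketch below computes scaled dot-product attention weights for three of the tokens. The two-dimensional vectors and the function names `softmax` and `attention_weights` are assumptions made for this example and are not taken from the plotted model.

```python
import math

def softmax(scores):
    """Turn raw scores into non-negative weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(queries, keys):
    """Row i holds the weights with which token i attends to every token j."""
    dim = len(keys[0])
    rows = []
    for q in queries:
        # Scaled dot product between one query and every key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        rows.append(softmax(scores))
    return rows

# Hypothetical vectors for the first three tokens (not from the real model).
tokens = ["The", "Law", "will"]
vectors = [[1.0, 0.0], [0.8, 0.6], [0.0, 1.0]]

# Same sequence on both sides, as in the plot.
weights = attention_weights(vectors, vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row corresponds to the set of lines leaving one word in the plot: a larger weight would be drawn as a more intense line.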

### Detailed Analysis

The chart shows connections between many pairs of words. The most visible ones are listed below; exact weights cannot be read from the image:

* **"The" to "Law":** A strong connection exists between "The" and "Law", indicated by a relatively thick line.

* **"Law" to "will":** A connection exists between "Law" and "will".

* **"will" to "never":** A connection exists between "will" and "never".

* **"never" to "be":** A connection exists between "never" and "be".

* **"be" to "perfect":** A connection exists between "be" and "perfect".

* **"perfect" to "but":** A connection exists between "perfect" and "but".

* **"but" to "its":** A connection exists between "but" and "its".

* **"its" to "application":** A connection exists between "its" and "application".

* **"application" to "should":** A connection exists between "application" and "should".

* **"should" to "be":** A connection exists between "should" and "be".

* **"be" to "just":** A connection exists between "be" and "just".

* **"just" to "this":** A connection exists between "just" and "this".

* **"this" to "is":** A connection exists between "this" and "is".

* **"is" to "what":** A connection exists between "is" and "what".

* **"what" to "we":** A connection exists between "what" and "we".

* **"we" to "are":** A connection exists between "we" and "are".

* **"are" to "missing":** A connection exists between "are" and "missing".

* **"missing" to "in":** A connection exists between "missing" and "in".

* **"in" to "my":** A connection exists between "in" and "my".

* **"my" to "opinion":** A connection exists between "my" and "opinion".

* **"opinion" to "<EOS>":** A connection exists between "opinion" and "<EOS>".

* **"<EOS>" to "<pad>":** A connection exists between "<EOS>" and "<pad>".

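* **Self-connections:** Many words have connections to themselves (e.g., "The" to "The", "Law" to "Law"), meaning a token places weight on its own position. Because both axes carry the same sequence, the plot as a whole depicts self-attention.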

The lines are generally sparse, meaning most words do *not* have strong connections to most other words.
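
If the underlying weights were available, the sparsity noted above could be expressed as a number instead of a visual impression. The following is a minimal sketch under that assumption: the 4x4 matrix is made up, the 0.1 threshold is arbitrary, and `strong_fraction` is a hypothetical helper name.

```python
def strong_fraction(weights, threshold=0.1):
    """Share of matrix entries whose weight exceeds the threshold."""
    cells = [w for row in weights for w in row]
    return sum(1 for w in cells if w > threshold) / len(cells)

# Hypothetical weights for a 4-token sequence; each row sums to 1.
weights = [
    [0.70, 0.30, 0.00, 0.00],
    [0.05, 0.60, 0.35, 0.00],
    [0.00, 0.05, 0.55, 0.40],
    [0.00, 0.00, 0.05, 0.95],
]

print(strong_fraction(weights))  # 7 of 16 entries exceed 0.1 -> 0.4375
```

A lower fraction means fewer visible lines; the choice of threshold determines what counts as a "strong" connection.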

### Key Observations

* The chart appears to represent the attention weights learned by a model (likely a neural network) processing the given sentence.

* The connections are not symmetrical; the attention from "A" to "B" is not necessarily the same as from "B" to "A".

* The presence of "<EOS>" and "<pad>" suggests the sentence was tokenized and padded for a batched sequence model, most likely a sequence-to-sequence model or a transformer.

* The connections are relatively localized, meaning words tend to attend to nearby words in the sequence.
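
Two of the observations above, asymmetry and locality, can be checked numerically when the weight matrix is known. The sketch below uses a made-up matrix and assumes that row `i` holds the weights with which token `i` attends to the other tokens; the image does not confirm that convention. `max_asymmetry` and `mean_attention_distance` are hypothetical helper names.

```python
def max_asymmetry(weights):
    """Largest |w[i][j] - w[j][i]| over all pairs; 0 means a symmetric matrix."""
    n = len(weights)
    return max(abs(weights[i][j] - weights[j][i])
               for i in range(n) for j in range(n))

def mean_attention_distance(weights):
    """Average |i - j| weighted by attention; small values mean local attention."""
    per_row = [
        sum(w * abs(i - j) for j, w in enumerate(row))
        for i, row in enumerate(weights)
    ]
    return sum(per_row) / len(per_row)

# Hypothetical weights for a 4-token sequence; each row sums to 1.
weights = [
    [0.70, 0.30, 0.00, 0.00],
    [0.05, 0.60, 0.35, 0.00],
    [0.00, 0.05, 0.55, 0.40],
    [0.00, 0.00, 0.05, 0.95],
]

print(round(max_asymmetry(weights), 2))            # 0.35
print(round(mean_attention_distance(weights), 2))  # 0.3
```

A mean distance well below the sequence length corresponds to the localized pattern described above, and a non-zero asymmetry corresponds to attention from "A" to "B" differing from attention from "B" to "A".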

### Interpretation

This attention plot visualizes which parts of the input sequence a model weights most heavily when processing each word. Each line represents the model's "attention": how relevant it considers one word when dealing with another. The strong connection between "The" and "Law" may reflect the model linking the determiner to its noun, though a single plot cannot confirm that "Law" is treated as the main subject. The chain of connections between neighboring words indicates that attention is concentrated on adjacent positions; it does not by itself show that the sentence is processed sequentially. "<EOS>" and "<pad>" appear because they mark the end of the sentence and fill the sequence to a fixed length for batch processing; the connection into "<pad>" is worth noting, since padding positions are often masked out of attention.
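
To make the role of "<pad>" concrete, the sketch below pads a small batch to a common length and then computes attention weights that ignore padding positions. Whether the plotted model applies such a mask is not known from the image; the scores, sentences, and the helper names `pad_batch` and `masked_softmax` are assumptions for illustration.

```python
import math

PAD = "<pad>"

def pad_batch(sentences, pad_token=PAD):
    """Append pad tokens so every sentence has the length of the longest one."""
    longest = max(len(s) for s in sentences)
    return [s + [pad_token] * (longest - len(s)) for s in sentences]

def masked_softmax(scores, tokens, pad_token=PAD):
    """Softmax over scores in which padding positions receive zero weight.

    Assumes at least one token in the sequence is not padding.
    """
    keep = [t != pad_token for t in tokens]
    peak = max(s for s, k in zip(scores, keep) if k)
    exps = [math.exp(s - peak) if k else 0.0 for s, k in zip(scores, keep)]
    total = sum(exps)
    return [e / total for e in exps]

batch = pad_batch([["my", "opinion", "<EOS>"], ["just", "<EOS>"]])
print(batch[1])  # ['just', '<EOS>', '<pad>']

# Hypothetical raw scores for one query over the three positions of batch[1].
weights = masked_softmax([0.2, 1.5, 0.7], batch[1])
print([round(w, 2) for w in weights])
```

With a mask like this, no line would reach "<pad>" in the plot, so a visible connection into "<pad>" hints that the weights were plotted without masking or that the mask was applied elsewhere.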

The sparsity of the connections suggests the model concentrates its attention on a few words per position rather than spreading it evenly, which makes the pattern easier to interpret. The pattern offers some insight into how the model relates words to one another, and the links between adjacent words are broadly consistent with human intuition about local sentence structure, although attention weights alone are limited evidence of what the model understands.