## Text Alignment Visualization: Attention Weights Between Two Identical Sequences

### Overview

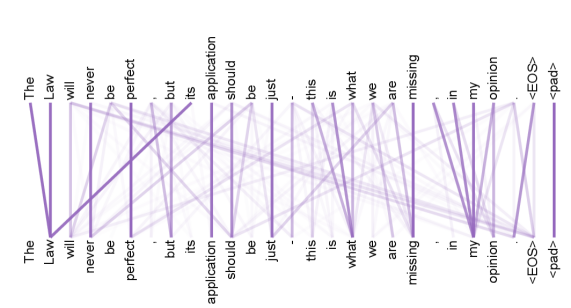

The image is a visualization of attention or alignment weights between two identical sequences of text. It displays two horizontal rows of words, with the top row representing a source sequence and the bottom row representing a target sequence. A network of purple lines of varying opacity connects words from the top row to words in the bottom row, illustrating the strength of association or "attention" between them. The visualization is likely from a natural language processing model, such as a transformer, showing self-attention or alignment between two copies of the same sentence.

### Components/Axes

* **Top Row (Source Sequence):** A horizontal line of text reading: `The Law will never be perfect , but its application should be just . this is what we are missing . in my opinion <EOS> <pad>`

* **Bottom Row (Target Sequence):** An identical horizontal line of text reading: `The Law will never be perfect , but its application should be just . this is what we are missing . in my opinion <EOS> <pad>`

* **Connecting Lines:** A dense web of purple lines connecting each word in the top row to multiple words in the bottom row. The opacity (darkness) of each line varies, indicating the weight or strength of the attention connection. Darker lines represent stronger attention.

* **Special Tokens:** The sequences end with `<EOS>` (End Of Sequence) and `<pad>` (padding token), which are standard in machine learning text processing.

### Detailed Analysis

The visualization shows a dense, many-to-many attention pattern. Each word in the source (top) sequence attends to, or has a connection with, nearly every word in the target (bottom) sequence, but with varying strengths.

**Spatial Grounding & Trend Verification:**

* **Positioning:** The source sequence is aligned along the top edge of the image. The target sequence is aligned along the bottom edge. The connecting lines span the vertical space between them.

* **Connection Pattern:** The strongest (darkest) connections are typically vertical, linking each word to its direct counterpart in the other row (e.g., "Law" top to "Law" bottom). However, there is significant diagonal cross-attention. For example:

* The word "perfect" in the top row has a notably strong diagonal connection to the word "just" in the bottom row.

* The word "just" in the top row has a strong connection back to "perfect" in the bottom row.

* The final tokens (`<EOS>`, `<pad>`) in the top row show a fan of connections to many words in the bottom sequence, particularly to the final tokens and the words "opinion" and "missing".

* **Trend:** The overall visual trend is a complex, interwoven mesh rather than a simple one-to-one mapping. This indicates that the model's internal representation for each word is informed by a broad context from the entire sequence, not just its immediate neighbor.

### Key Observations

1. **Dense Connectivity:** This is not a sparse attention pattern. Almost every source word has a non-zero weight connection to almost every target word.

2. **Strong Diagonal Bias:** Despite the dense connections, the darkest lines form a clear diagonal from top-left to bottom-right, confirming that a word's strongest attention is typically to its aligned counterpart.

3. **Semantic Cross-Attention:** The strong cross-connection between "perfect" and "just" is a key observation. These words are semantically related (both describing a quality of the law or its application), suggesting the model is capturing meaningful relationships beyond simple position.

4. **Special Token Behavior:** The `<EOS>` and `<pad>` tokens exhibit a "global attention" pattern, connecting weakly to many parts of the sequence. This is common, as these tokens often serve as a repository for sequence-level information or padding.

### Interpretation

This visualization provides a window into the "reasoning" of a neural network processing language. It demonstrates that the model does not process words in isolation. Instead, the meaning and representation of each word (like "perfect" or "just") are dynamically constructed based on a weighted combination of all other words in the context.

The strong connection between "perfect" and "just" is particularly insightful. It suggests the model has learned an implicit semantic link: the discussion of the law's *perfection* is contextually tied to the ideal of its *just* application. This goes beyond syntactic parsing and indicates the model is capturing conceptual relationships within the text.

The dense, overlapping lines represent the model's mechanism for building a rich, context-aware understanding of the sentence. Every word's final representation is a blend of its own meaning and the meanings of all the words it attends to, with the line opacity quantifying that blend. This is the fundamental operation that allows such models to perform complex tasks like translation, summarization, and question answering.