## Heatmap: Layer-wise Parameter Importance in a Neural Network

### Overview

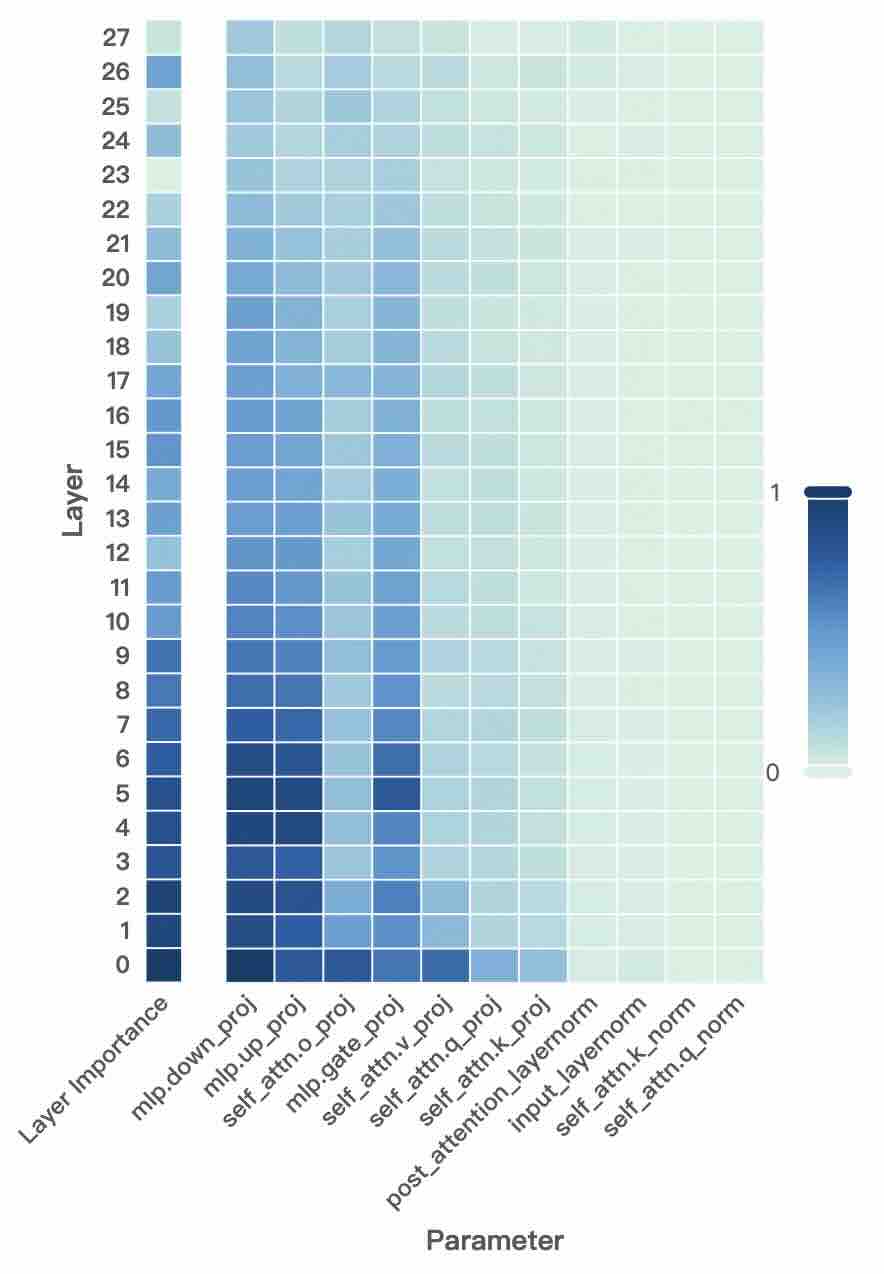

The image is a heatmap visualizing the importance of different parameters across 28 layers (0 to 27) of a neural network, likely a transformer-based model. Importance is encoded as a color gradient: darker blue indicates higher importance (closer to 1) and lighter blue/green indicates lower importance (closer to 0). The clearest pattern is that importance is concentrated in the lower layers and in a few parameter types, chiefly the MLP projections and the attention output projection.

### Components/Axes

* **Y-axis (Vertical):** Labeled "Layer". It lists discrete layer numbers from 0 at the bottom to 27 at the top.

* **X-axis (Horizontal):** Labeled "Parameter". It has 12 columns: an aggregate `Layer Importance` column followed by 11 parameter types, each a component of a transformer block. From left to right:

1. `Layer Importance` (An aggregate column)

2. `mlp.down_proj`

3. `mlp.up_proj`

4. `self_attn.o_proj`

5. `mlp.gate_proj`

6. `self_attn.v_proj`

7. `self_attn.q_proj`

8. `self_attn.k_proj`

9. `post_attention_layernorm`

10. `input_layernorm`

11. `self_attn.k_norm`

12. `self_attn.q_norm`

* **Color Bar/Legend:** Positioned on the right side of the chart. It is a vertical gradient bar labeled from `0` (bottom, light greenish-blue) to `1` (top, dark blue). This defines the scale for interpreting the color of each cell in the heatmap.
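To make the axis layout concrete, here is a minimal sketch of the grid behind a chart like this: one row per layer (0 to 27), one column per label in the X-axis order listed above, and raw scores rescaled onto the 0-1 range of the color bar. The figure does not say how its scores were normalized; a single global min-max scaling is an assumption here, and the `min_max_normalize` helper and the sample values are hypothetical.

```python
# Column order as it appears on the X-axis of the heatmap.
COLUMNS = [
    "Layer Importance",
    "mlp.down_proj",
    "mlp.up_proj",
    "self_attn.o_proj",
    "mlp.gate_proj",
    "self_attn.v_proj",
    "self_attn.q_proj",
    "self_attn.k_proj",
    "post_attention_layernorm",
    "input_layernorm",
    "self_attn.k_norm",
    "self_attn.q_norm",
]
NUM_LAYERS = 28  # rows 0..27; row 0 is drawn at the bottom of the chart


def min_max_normalize(grid):
    """Hypothetical helper: rescale every cell of a 2-D grid into [0, 1].

    Assumes one global min/max over the whole grid, which is only one of
    several plausible normalization schemes (per-column is another).
    """
    flat = [v for row in grid for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # avoid division by zero on a constant grid
        return [[0.0 for _ in row] for row in grid]
    return [[(v - lo) / (hi - lo) for v in row] for row in grid]


# Illustrative raw scores only (not read off the figure).
raw = [[2.0, 4.0], [6.0, 10.0]]
print(min_max_normalize(raw))  # [[0.0, 0.25], [0.5, 1.0]]
```

Per-column scaling would instead make every parameter type span the full color range, which would hide the uniformly low normalization columns; that is one reason a global scale is the more natural assumption for this chart.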

### Detailed Analysis

The heatmap is a grid where each cell's color corresponds to the importance value of a specific parameter at a specific layer.

**Spatial Grounding & Trend Verification:**

* **Aggregate Trend (Leftmost Column - `Layer Importance`):** This column shows a strong vertical gradient. Importance is highest (darkest blue) in the lowest layers (0-6), fades to medium blue in the middle layers (7-19), and is lowest (lightest blue) in the highest layers (20-27). Under this metric, then, the lower layers are the most critical to the model's function.

* **Parameter-Specific Trends:**

    * **High Importance Cluster (Left-Center):** The columns for `mlp.down_proj`, `mlp.up_proj`, `self_attn.o_proj`, and `mlp.gate_proj` show the darkest blue cells, particularly in layers 0 through approximately 12. The darkest cells appear in layers 0-6 for `mlp.down_proj` and `mlp.up_proj`.

    * **Moderate Importance Cluster (Center):** The columns for `self_attn.v_proj`, `self_attn.q_proj`, and `self_attn.k_proj` show medium blue shades, primarily in the lower to middle layers (0-15), fading to light blue in higher layers.

    * **Low Importance Cluster (Right):** The four normalization parameter columns (`post_attention_layernorm`, `input_layernorm`, `self_attn.k_norm`, `self_attn.q_norm`) are consistently very light greenish-blue (near 0) across all 28 layers. This indicates these parameters have uniformly low measured importance.
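The figure does not say how the leftmost `Layer Importance` column is derived from the other eleven. One plausible reading, assumed here, is the per-layer mean of the parameter scores; a sum, a maximum, or a separate layer-level ablation would be equally plausible. The sketch below uses a hypothetical `layer_importance` helper and made-up values to show that aggregation step.

```python
from statistics import fmean


def layer_importance(row_scores):
    """Hypothetical aggregate: mean of one layer's per-parameter scores.

    `row_scores` maps parameter names (e.g. "mlp.down_proj") to importance
    values for a single layer. Using the mean is an assumption.
    """
    return fmean(row_scores.values())


def aggregate_column(grid):
    """Compute the aggregate score for every layer (row) of the grid."""
    return [layer_importance(row) for row in grid]


# Two made-up layers: an early one with strong MLP scores, a late one with weak ones.
grid = [
    {"mlp.down_proj": 0.9, "mlp.up_proj": 0.9, "input_layernorm": 0.0},
    {"mlp.down_proj": 0.3, "mlp.up_proj": 0.3, "input_layernorm": 0.0},
]
print(aggregate_column(grid))
```

Under a mean, the near-zero normalization columns lower every layer's aggregate by a similar proportion, so the ranking of layers is driven by the projection columns.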

**Key Data Points (Approximate):**

* **Highest Importance:** Cells in the `mlp.down_proj` and `mlp.up_proj` columns for layers 0-5 are the darkest, suggesting importance values likely in the range of 0.8 to 1.0.

* **Layer 0 Peak:** Layer 0 shows high importance across almost every parameter type except the normalization layers, making it the most important layer overall.

* **Transition Zone:** Around layers 12-15, there is a visible transition where the blue in the MLP and attention projection columns becomes noticeably lighter.

* **Uniformly Low:** All cells in the four rightmost normalization columns appear to have values < 0.1 across all layers.

### Key Observations

1. **Layer Hierarchy:** There is a clear importance hierarchy by layer depth: Lower Layers > Middle Layers > Higher Layers.

2. **Parameter Type Hierarchy:** MLP projection parameters (`down_proj`, `up_proj`, `gate_proj`) and the attention output projection (`o_proj`) are deemed more important than the attention query/key/value projections (`q_proj`, `k_proj`, `v_proj`), which are in turn more important than all normalization parameters.

3. **Normalization Insignificance:** The normalization parameters (`input_layernorm`, `post_attention_layernorm`, `self_attn.k_norm`, `self_attn.q_norm`) show negligible importance across the entire network depth according to this metric.

4. **Concentrated Criticality:** The most critical parameters for the model's operation appear to be concentrated in the first quarter of the network (layers 0-6), specifically within the MLP blocks.
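The layer hierarchy above amounts to comparing average scores over depth bands, and the "uniformly low" claim amounts to a threshold check. A minimal sketch, assuming the band boundaries used in the Detailed Analysis (0-6, 7-19, 20-27) and a list holding one aggregate score per layer; the helper names and sample scores are hypothetical, not values read off the figure.

```python
from statistics import fmean

# Depth bands as described in the Detailed Analysis (inclusive layer ranges).
BANDS = {"lower": range(0, 7), "middle": range(7, 20), "higher": range(20, 28)}


def band_means(layer_scores):
    """Average the per-layer aggregate scores within each depth band."""
    return {
        name: fmean([layer_scores[i] for i in layers])
        for name, layers in BANDS.items()
    }


def follows_hierarchy(layer_scores):
    """True if the band averages are ordered lower > middle > higher."""
    m = band_means(layer_scores)
    return m["lower"] > m["middle"] > m["higher"]


def all_below(column_scores, threshold=0.1):
    """Check a claim like 'every normalization cell is < 0.1'."""
    return all(v < threshold for v in column_scores)


# Made-up scores that decrease linearly with depth (28 layers).
scores = [1.0 - i / 27 for i in range(28)]
print(follows_hierarchy(scores))  # True
print(all_below([0.02] * 28))     # True
```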

### Interpretation

This heatmap likely visualizes the results of a parameter pruning sensitivity analysis or an attribution method (like integrated gradients) applied to a transformer model. The data suggests:

* **Functional Load Distribution:** The model's core computational "work" or feature transformation is heavily front-loaded. The lower layers are performing the most critical transformations on the input data, with the MLP layers (which typically handle non-linear feature processing) being paramount.

* **Redundancy in Depth:** The higher layers (20+) contribute less to the final output, as measured by this importance metric. This could indicate redundancy, suggesting the model might be pruned or compressed by removing or simplifying these higher layers with minimal performance impact.

* **Normalization as a Stable Framework:** The consistently low importance of normalization parameters aligns with their role as stabilizing, scaling components rather than primary feature extractors. They are necessary for training dynamics but may not be individually critical for the final inference output.

* **Architectural Insight:** The high importance of `mlp.down_proj` and `mlp.up_proj` highlights how much model performance depends on the expansion and contraction of dimensionality within the MLP blocks. That `self_attn.o_proj` (the attention output projection) scores higher than `q_proj`, `k_proj`, and `v_proj` suggests that recombining the outputs of the attention heads is a more critical operation than the initial projections into query, key, and value spaces.
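If the heatmap comes from a pruning-sensitivity analysis, as the opening of this section suggests, each cell would record how much a loss metric degrades when that parameter group is removed. The toy sketch below illustrates that idea with zero-ablation on a two-group linear model and rescales the loss increases to [0, 1]. Everything here (the model, the `ablation_importance` helper, the group names, the data) is hypothetical; the actual metric behind the figure is not stated.

```python
def predict(params, x):
    """Toy linear model: sum over groups of (group weights . group inputs)."""
    return sum(
        sum(w * xi for w, xi in zip(weights, x[name]))
        for name, weights in params.items()
    )


def mse(params, data):
    """Mean squared error of the toy model over (inputs, target) pairs."""
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)


def ablation_importance(params, data):
    """Hypothetical metric: loss increase when a group is zeroed, scaled to [0, 1]."""
    base = mse(params, data)
    deltas = {}
    for name, weights in params.items():
        ablated = dict(params)                # shallow copy; other groups untouched
        ablated[name] = [0.0] * len(weights)  # zero-ablate this group only
        deltas[name] = max(0.0, mse(ablated, data) - base)
    top = max(deltas.values())
    if top == 0.0:
        return {name: 0.0 for name in deltas}
    return {name: d / top for name, d in deltas.items()}


# Two made-up parameter groups: one carries most of the signal, one barely matters.
params = {"big": [2.0], "small": [0.1]}
data = [
    ({"big": [1.0], "small": [1.0]}, 2.1),
    ({"big": [2.0], "small": [2.0]}, 4.2),
]
print(ablation_importance(params, data))
```

Zeroing `big` raises the loss far more than zeroing `small`, so `big` maps to 1.0 and `small` lands near 0, the same kind of contrast the heatmap shows between the MLP projections and the normalization parameters. A real study would ablate groups in a trained network and measure loss on held-out data.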

**In summary, this heatmap provides a diagnostic map of the model's "brain," showing that its foundational processing in early-layer MLPs is most vital, while later layers and normalization functions play a less critical, possibly supportive or redundant, role.**