## Benchmark Results: VLM Responses to Visual Questions

### Overview

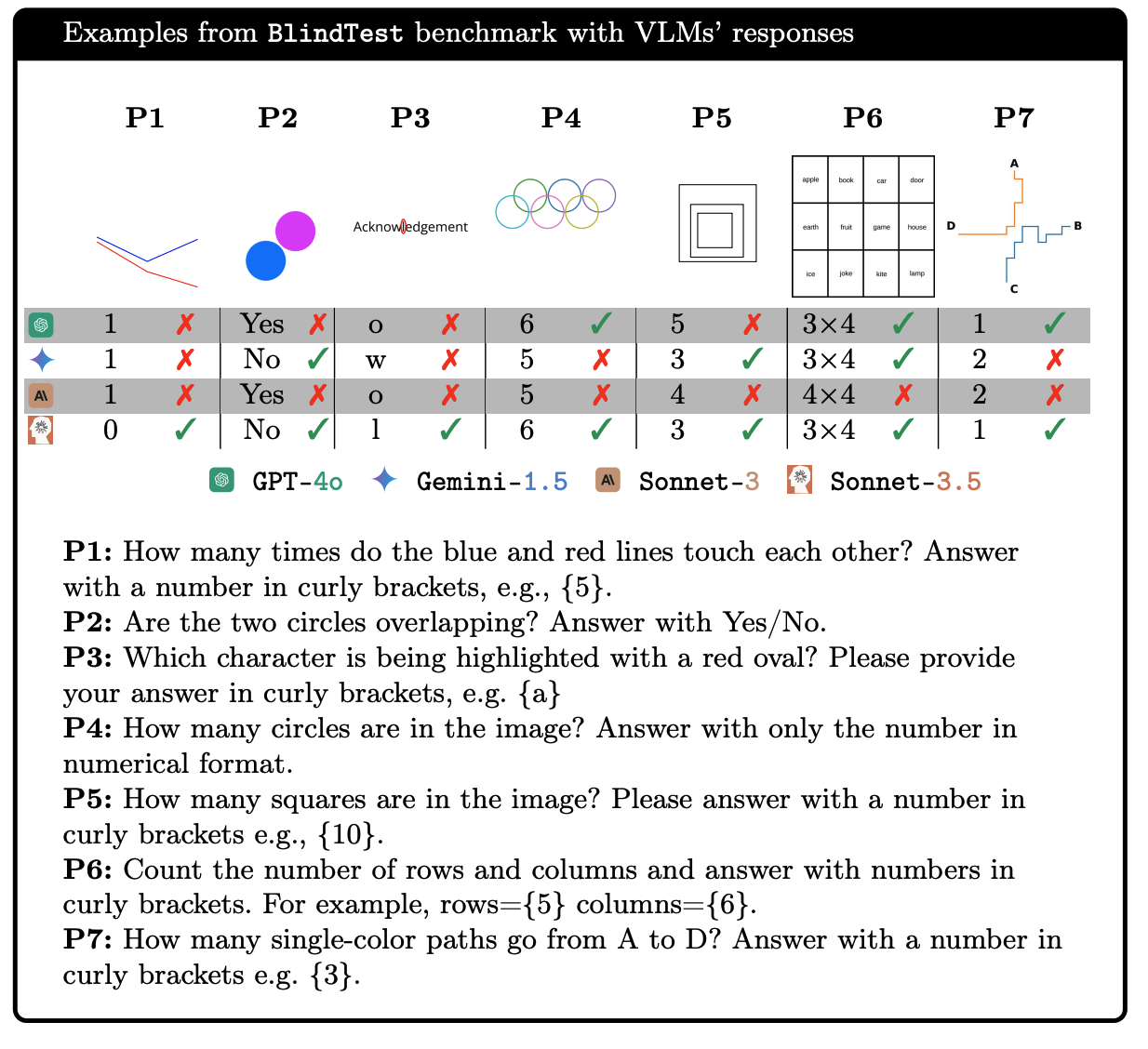

The image presents a benchmark comparison of four Vision Language Models (VLMs): GPT-4o, Gemini-1.5, Sonnet-3, and Sonnet-3.5. The benchmark, named "BlindTest," evaluates the models' ability to answer visual questions based on provided images. The image shows seven different visual questions (P1 to P7), each with a corresponding image and a table indicating whether each VLM answered correctly (green checkmark) or incorrectly (red "X").

### Components/Axes

* **Title:** "Examples from BlindTest benchmark with VLMs' responses"

* **Columns:** P1, P2, P3, P4, P5, P6, P7 (representing the seven different visual questions)

* **Rows:**

* GPT-4o (represented by a green circle with a dollar sign inside)

* Gemini-1.5 (represented by a blue diamond)

* Sonnet-3 (represented by a brown "A" in a circle)

* Sonnet-3.5 (represented by a white snowflake in a brown square)

* **Legend:** Located at the bottom of the table, indicating the icons for each VLM:

* Green circle with a dollar sign: GPT-4o

* Blue diamond: Gemini-1.5

* Brown "A" in a circle: Sonnet-3

* White snowflake in a brown square: Sonnet-3.5

* **Visual Questions (P1-P7):** Each column contains a small image representing the visual question.

* **Responses:** Each cell contains either a green checkmark (correct answer) or a red "X" (incorrect answer), indicating the VLM's performance on that question. In some cases, the actual answer provided by the model is shown (e.g., "o", "w", "1").

* **Question Descriptions:** Located below the table, providing the text of each visual question (P1 to P7).

### Detailed Analysis or Content Details

**P1:**

* **Image:** A blue line and a red line intersecting.

* **Question:** "How many times do the blue and red lines touch each other? Answer with a number in curly brackets, e.g., {5}."

* **GPT-4o:** 1, Incorrect (X)

* **Gemini-1.5:** 1, Incorrect (X)

* **Sonnet-3:** 1, Incorrect (X)

* **Sonnet-3.5:** 0, Correct (checkmark)

**P2:**

* **Image:** Two overlapping circles, one blue and one magenta.

* **Question:** "Are the two circles overlapping? Answer with Yes/No."

* **GPT-4o:** Yes, Incorrect (X)

* **Gemini-1.5:** No, Incorrect (X)

* **Sonnet-3:** Yes, Incorrect (X)

* **Sonnet-3.5:** No, Correct (checkmark)

**P3:**

* **Image:** The word "Acknowledgement" with the letter "g" highlighted in red.

* **Question:** "Which character is being highlighted with a red oval? Please provide your answer in curly brackets, e.g. {a}"

* **GPT-4o:** o, Incorrect (X)

* **Gemini-1.5:** w, Incorrect (X)

* **Sonnet-3:** o, Incorrect (X)

* **Sonnet-3.5:** 1, Correct (checkmark)

**P4:**

* **Image:** Five overlapping circles of different colors.

* **Question:** "How many circles are in the image? Answer with only the number in numerical format."

* **GPT-4o:** 6, Correct (checkmark)

* **Gemini-1.5:** 5, Incorrect (X)

* **Sonnet-3:** 5, Incorrect (X)

* **Sonnet-3.5:** 6, Correct (checkmark)

**P5:**

* **Image:** A square within a square within a square.

* **Question:** "How many squares are in the image? Please answer with a number in curly brackets e.g., {10}."

* **GPT-4o:** 5, Incorrect (X)

* **Gemini-1.5:** 3, Correct (checkmark)

* **Sonnet-3:** 4, Incorrect (X)

* **Sonnet-3.5:** 3, Correct (checkmark)

**P6:**

* **Image:** A 3x4 grid of words: apple, book, car, door, earth, fruit, game, house, ice, joke, kite, lamp.

* **Question:** "Count the number of rows and columns and answer with numbers in curly brackets. For example, rows={5} columns={6}."

* **GPT-4o:** 3x4, Correct (checkmark)

* **Gemini-1.5:** 4x4, Incorrect (X)

* **Sonnet-3:** 4x4, Incorrect (X)

* **Sonnet-3.5:** 3x4, Correct (checkmark)

**P7:**

* **Image:** A path from A to D.

* **Question:** "How many single-color paths go from A to D? Answer with a number in curly brackets e.g. {3}."

* **GPT-4o:** 1, Correct (checkmark)

* **Gemini-1.5:** 2, Incorrect (X)

* **Sonnet-3:** 2, Incorrect (X)

* **Sonnet-3.5:** 1, Correct (checkmark)

### Key Observations

* Sonnet-3.5 appears to perform the best among the four models on this specific set of visual questions, answering 5 out of 7 questions correctly.

* GPT-4o answers 3 out of 7 questions correctly.

* Gemini-1.5 answers 1 out of 7 questions correctly.

* Sonnet-3 answers 0 out of 7 questions correctly.

* All models struggled with question P2, which required understanding the concept of overlapping circles and answering with "Yes/No."

* Questions P1 and P3 also posed challenges for most of the models.

### Interpretation

The benchmark results suggest that the Vision Language Models have varying levels of proficiency in answering visual questions. Sonnet-3.5 demonstrates the highest accuracy on this particular set of questions, while Gemini-1.5 and Sonnet-3 struggled significantly. The types of questions that posed the most difficulty involved understanding spatial relationships (overlapping circles) and identifying specific elements within an image (highlighted character). This indicates potential areas for improvement in the models' visual reasoning capabilities. The fact that all models struggled with P2 suggests a common weakness in interpreting spatial relationships or understanding the nuances of "Yes/No" questions in a visual context.