\n

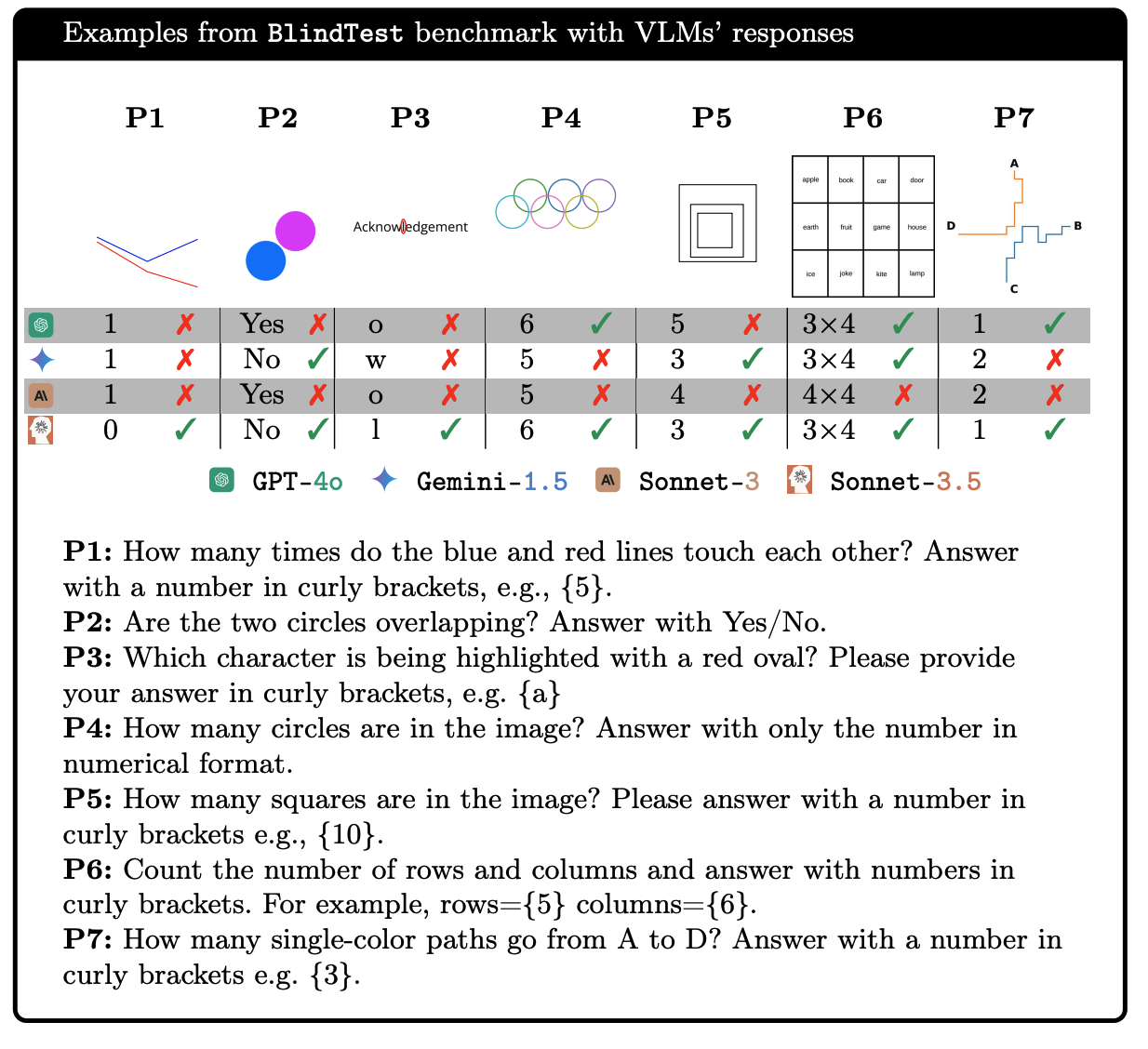

## Table: BlindTest Benchmark with VLMs' Responses

### Overview

This image presents a table comparing the responses of four Visual Language Models (VLMs) – GPT-4o, Gemini-1.5, Sonnet-3, and Sonnet-3.5 – to seven different visual reasoning tasks (P1 through P7). Each task involves a simple image and a question requiring visual understanding. The table shows the responses of each model to each task, marked with symbols like numbers, "Yes/No", letters, and checkmarks/crosses.

### Components/Axes

* **Columns:** Represent the seven visual reasoning tasks (P1 to P7).

* **Rows:** Represent the four VLMs: GPT-4o (green), Gemini-1.5 (blue), Sonnet-3 (orange), and Sonnet-3.5 (purple).

* **Cells:** Contain the responses of each model to each task. The responses are a mix of numerical values, text ("Yes", "No", letters), and symbols (checkmarks, crosses, circles).

* **Legend:** Located at the bottom-center of the image, associating colors with each VLM.

* **Task Descriptions:** Below the table, each task (P1-P7) is described with its question.

### Detailed Analysis or Content Details

Here's a breakdown of the responses for each task:

* **P1: How many times do the blue and red lines touch each other? Answer with a number in curly brackets, e.g., {5}.**

* GPT-4o: 1

* Gemini-1.5: X

* Sonnet-3: 1

* Sonnet-3.5: 0

* **P2: Are the two circles overlapping? Answer with Yes/No.**

* GPT-4o: X

* Gemini-1.5: No

* Sonnet-3: Yes

* Sonnet-3.5: No

* **P3: Which character is being highlighted with a red oval? Please provide your answer in curly brackets, e.g. {a}**

* GPT-4o: X

* Gemini-1.5: o

* Sonnet-3: X

* Sonnet-3.5: 1

* **P4: How many circles are in the image? Answer with only the number in numerical format.**

* GPT-4o: 6

* Gemini-1.5: X

* Sonnet-3: 5

* Sonnet-3.5: 6

* **P5: How many squares are in the image? Please answer with a number in curly brackets e.g., {10}.**

* GPT-4o: X

* Gemini-1.5: 5

* Sonnet-3: 3

* Sonnet-3.5: 3

* **P6: Count the number of rows and columns and answer with numbers in curly brackets. rows={5} columns={6}.**

* GPT-4o: 3x4

* Gemini-1.5: 4x4

* Sonnet-3: 3x4

* Sonnet-3.5: 3x4

* **P7: How many single-color paths go from A to D? Answer with a number in curly brackets e.g. {3}.**

* GPT-4o: 1

* Gemini-1.5: X

* Sonnet-3: 2

* Sonnet-3.5: 1

### Key Observations

* There is significant disagreement among the models on several tasks (e.g., P2, P3, P4).

* GPT-4o and Sonnet-3 often provide numerical answers, while Gemini-1.5 frequently responds with "X", indicating an inability to answer or a failure to understand the task.

* Sonnet-3.5's responses are generally consistent with Sonnet-3.

* The "X" responses are used to indicate incorrect or invalid answers.

### Interpretation

This table demonstrates the varying capabilities of different VLMs in performing basic visual reasoning tasks. The discrepancies in responses highlight the challenges in developing models that can reliably interpret visual information and answer questions accurately. The use of different response formats (numbers, text, symbols) suggests that the models may have different internal representations of the visual data and different strategies for generating answers. The frequent "X" responses from Gemini-1.5 suggest it may struggle with certain types of visual tasks or have a higher threshold for providing an answer. The tasks themselves are designed to test specific aspects of visual understanding, such as counting, spatial reasoning, and object recognition. The results indicate that even relatively simple visual reasoning tasks can be challenging for current VLMs. The tasks are designed to be simple, but require a degree of understanding of the visual scene. The fact that the models disagree on some of the answers suggests that there is still room for improvement in the field of visual language modeling.