## Table: Examples from BlindTest Benchmark with VLMs' Responses

### Overview

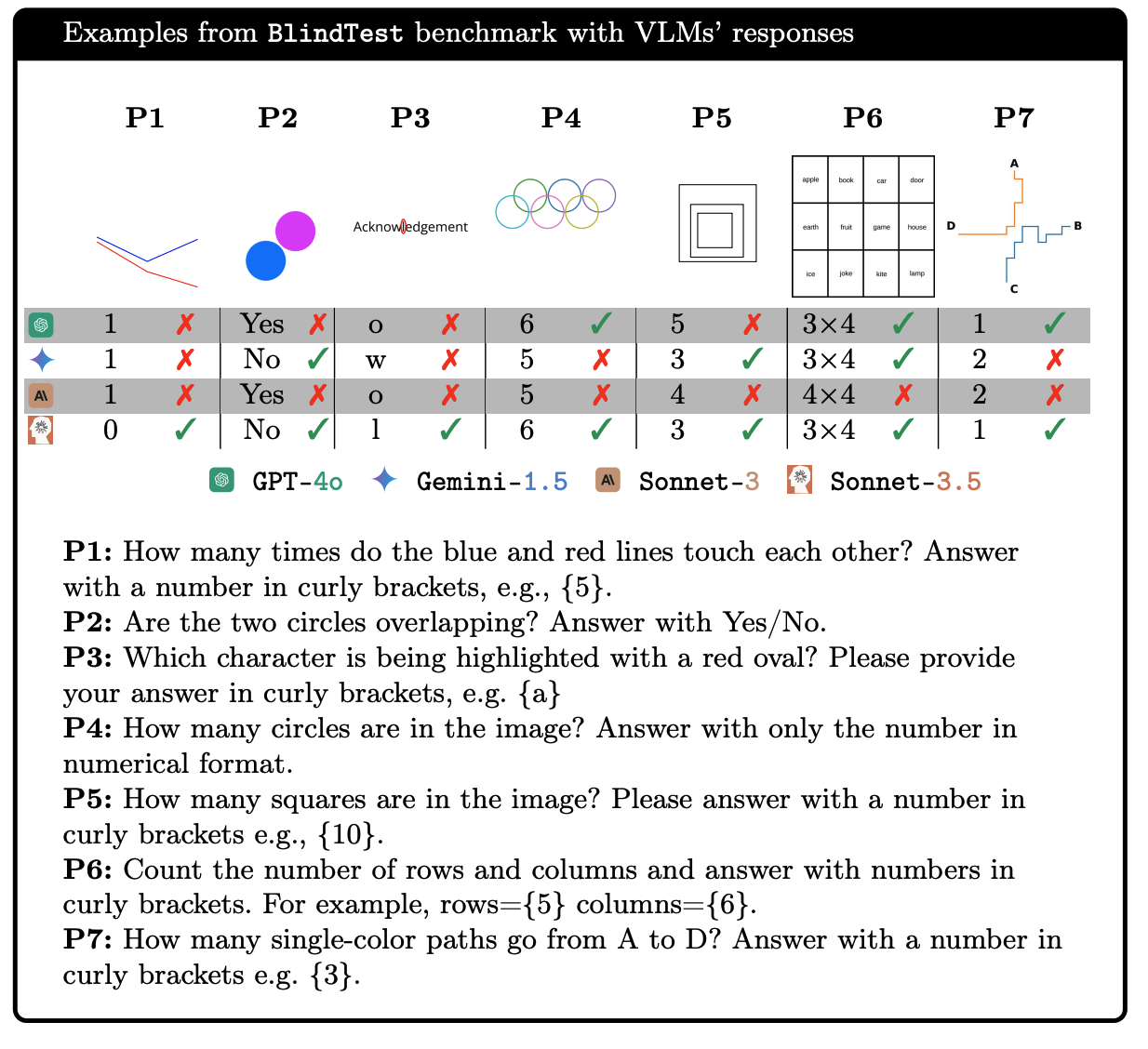

This image is a technical figure presenting a comparative evaluation of four Vision-Language Models (VLMs) on a set of visual reasoning tasks from the "BlindTest" benchmark. It consists of a header, a visual example row, a results table, a legend, and a list of the corresponding questions (P1-P7).

### Components/Axes

* **Header:** "Examples from BlindTest benchmark with VLMs' responses"

* **Visual Example Row:** Seven labeled panels (P1 through P7), each containing a simple visual puzzle.

* **Results Table:** A 4-row by 7-column grid. The rows correspond to different AI models, and the columns correspond to the puzzles (P1-P7). Each cell contains the model's answer and a correctness indicator (✓ for correct, ✗ for incorrect).

* **Legend (Bottom Center):** Maps model icons to names:

* Green hexagon icon: **GPT-4o**

* Blue four-pointed star icon: **Gemini-1.5**

* Brown "AI" icon: **Sonnet-3**

* Orange gear/robot icon: **Sonnet-3.5**

* **Question List (Below Table):** Seven questions (P1-P7) corresponding to the visual puzzles.

### Detailed Analysis

**Visual Puzzles (P1-P7):**

* **P1:** Two intersecting lines, one blue and one red.

* **P2:** Two circles, one blue and one magenta, overlapping.

* **P3:** The word "Acknowledgement" with the letter 'l' highlighted by a red oval.

* **P4:** Five interlocking rings (like the Olympic symbol).

* **P5:** Three concentric squares.

* **P6:** A 3x4 grid of words (apple, book, car, door; earth, fruit, game, house; ice, joke, kite, lamp).

* **P7:** A diagram with points A, B, C, D connected by colored paths (orange, blue, green).

**Model Responses Table:**

The table below lists each model's answer for each puzzle and whether it was correct.

| Model (Legend) | P1 Answer | P1 Correct? | P2 Answer | P2 Correct? | P3 Answer | P3 Correct? | P4 Answer | P4 Correct? | P5 Answer | P5 Correct? | P6 Answer | P6 Correct? | P7 Answer | P7 Correct? |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **GPT-4o** | 1 | ✗ | Yes | ✗ | o | ✗ | 6 | ✓ | 5 | ✗ | 3×4 | ✓ | 1 | ✓ |

| **Gemini-1.5** | 1 | ✗ | No | ✓ | w | ✗ | 5 | ✗ | 3 | ✓ | 3×4 | ✓ | 2 | ✗ |

| **Sonnet-3** | 1 | ✗ | Yes | ✗ | o | ✗ | 5 | ✗ | 4 | ✗ | 4×4 | ✗ | 2 | ✗ |

| **Sonnet-3.5** | 0 | ✓ | No | ✓ | l | ✓ | 6 | ✓ | 3 | ✓ | 3×4 | ✓ | 1 | ✓ |

**Questions (P1-P7):**

* **P1:** How many times do the blue and red lines touch each other? Answer with a number in curly brackets, e.g., {5}.

* **P2:** Are the two circles overlapping? Answer with Yes/No.

* **P3:** Which character is being highlighted with a red oval? Please provide your answer in curly brackets, e.g. {a}

* **P4:** How many circles are in the image? Answer with only the number in numerical format.

* **P5:** How many squares are in the image? Please answer with a number in curly brackets e.g., {10}.

* **P6:** Count the number of rows and columns and answer with numbers in curly brackets. For example, rows={5} columns={6}.

* **P7:** How many single-color paths go from A to D? Answer with a number in curly brackets e.g. {3}.

### Key Observations

1. **Performance Variability:** No single model achieved a perfect score. **Sonnet-3.5** performed best, answering 6 out of 7 questions correctly. **Sonnet-3** performed worst, with only 1 correct answer.

2. **Task Difficulty:** Certain tasks proved consistently challenging.

* **P1 (Line Intersections):** Three models incorrectly answered "1". Only Sonnet-3.5 correctly identified "0" intersections.

* **P3 (Character Highlight):** Three models failed to identify the highlighted letter 'l', with two answering 'o' and one answering 'w'.

* **P5 (Square Count):** Models gave divergent answers (5, 3, 4, 3). The correct answer appears to be 3 (the three concentric squares).

3. **Consensus Errors:** For P1, GPT-4o, Gemini-1.5, and Sonnet-3 all gave the same incorrect answer ("1"). For P3, GPT-4o and Sonnet-3 both gave the same incorrect answer ("o").

4. **Simple Successes:** All models correctly answered P6 (grid dimensions: 3 rows × 4 columns). Most models (3 out of 4) correctly answered P4 (circle count: 6) and P2 (overlap: Yes/No).

### Interpretation

This figure serves as a qualitative benchmark to expose the limitations and strengths of current VLMs on fundamental visual perception and reasoning tasks. The "BlindTest" appears designed to test capabilities that are trivial for humans but can trip up AI models, such as:

* **Precise Spatial Reasoning:** Counting intersections (P1) or nested shapes (P5).

* **Fine-Grained Visual Discrimination:** Identifying a specifically highlighted character within a word (P3).

* **Basic Object Counting:** Counting circles (P4) or determining grid structure (P6).

* **Abstract Path Analysis:** Counting valid paths in a diagram (P7).

The results suggest that while models like Sonnet-3.5 show significant improvement, robust and human-like visual understanding remains a challenge. The consistent errors on P1 and P3 indicate potential systematic weaknesses in how models process certain types of visual relationships or text-in-image recognition. This benchmark provides a valuable diagnostic tool for developers to identify and target these specific failure modes in future model iterations.