TECHNICAL ASSET FINGERPRINT

8a5b6ba35dc4b6ef3e5dbdfd



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

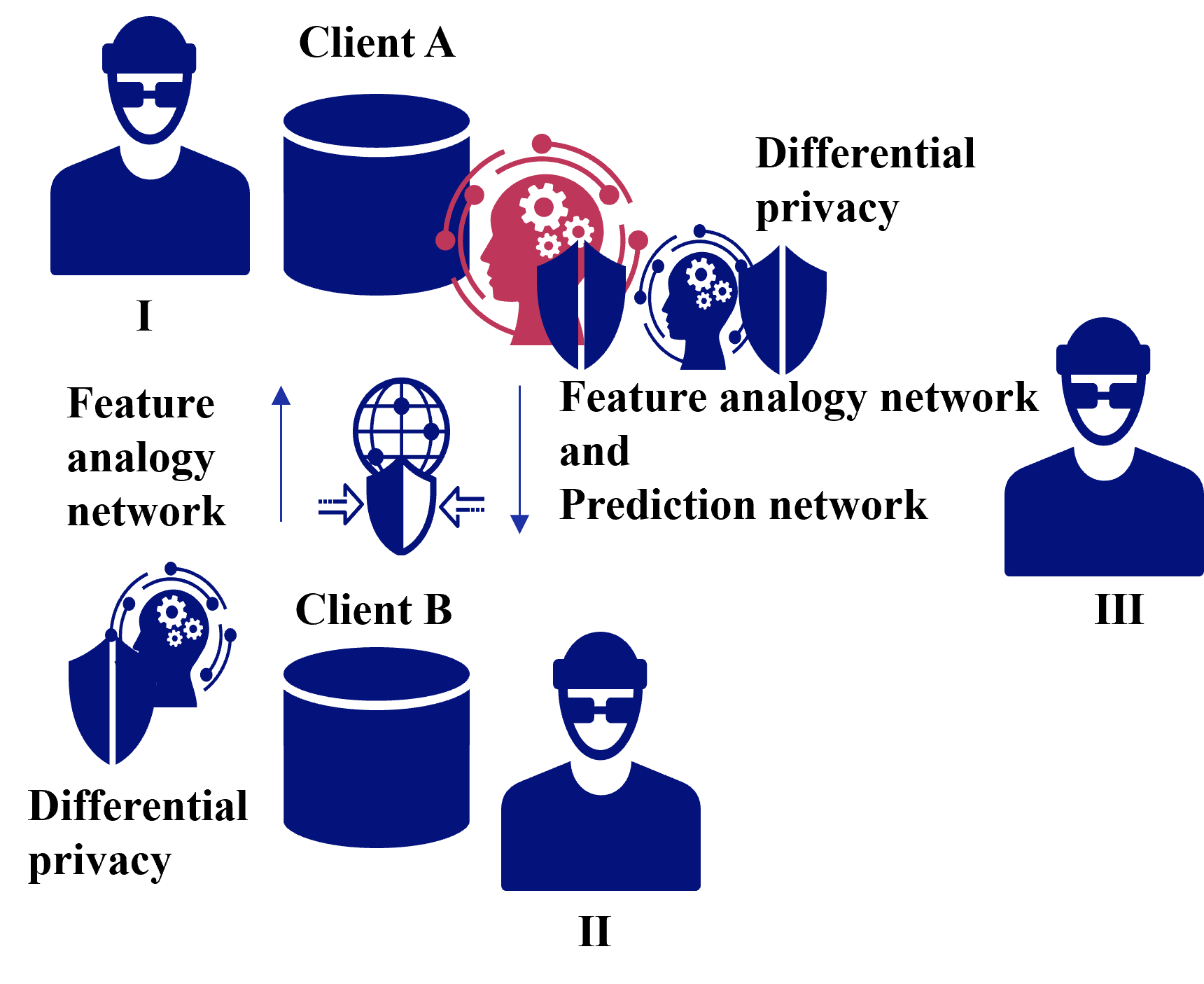

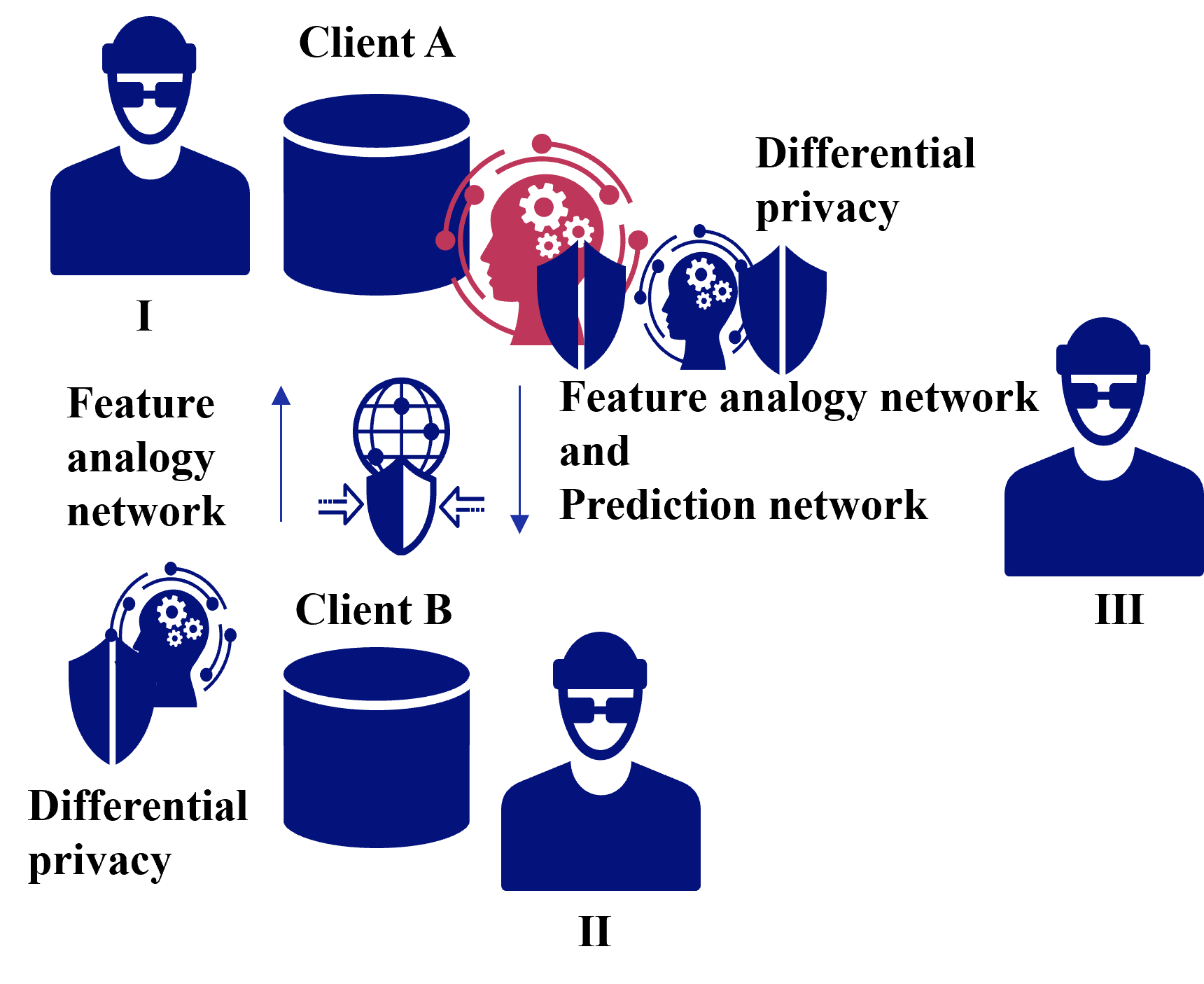

## Data Flow Diagram: Federated Learning with Differential Privacy

### Overview

The image is a data flow diagram illustrating a federated learning system with differential privacy. It depicts two clients (A and B) interacting with a central entity (III) through feature analogy and prediction networks, while incorporating differential privacy mechanisms.

### Components/Axes

* **Clients:** Client A and Client B, represented by data storage icons and user icons labeled I and II respectively.

* **Central Entity:** Represented by a user icon labeled III.

* **Data Flow:** Arrows indicate the direction of data flow between clients and the central entity.

* **Feature Analogy Network:** Text label indicating the network used for feature analogy between Client A and Client B.

* **Prediction Network:** Text label indicating the network used for prediction.

* **Differential Privacy:** Text labels indicating the application of differential privacy at Client A and Client B.

* **Privacy Shields:** Shield icons represent privacy mechanisms.

* **Processing Heads:** Head icons with gears represent data processing.

### Detailed Analysis or Content Details

1. **Client A (Top-Left):**

* Labeled "Client A".

* A user icon labeled "I" is positioned to the left of a cylindrical data storage icon.

* A red head icon with gears inside a red circle and a blue shield is positioned to the right of the data storage.

* "Feature analogy network" text is positioned below the user icon.

* An upward arrow points from a globe icon with a shield towards Client A's data storage.

2. **Client B (Bottom-Left):**

* Labeled "Client B".

* A cylindrical data storage icon is positioned to the left of a user icon labeled "II".

* A blue head icon with gears inside a blue circle and a blue shield is positioned below and to the left of the data storage.

* "Differential privacy" text is positioned below the head icon.

* A downward arrow points from the globe icon with a shield towards Client B's data storage.

3. **Central Entity (Right):**

* Represented by a user icon labeled "III".

* Positioned to the right of the diagram.

4. **Data Flow and Networks:**

* "Feature analogy network" text is positioned between Client A and Client B, with arrows indicating data flow between them via a globe icon with a shield.

* "Feature analogy network and Prediction network" text is positioned between Client A and the central entity.

* "Differential privacy" text is positioned between Client A and the central entity.

### Key Observations

* The diagram illustrates a federated learning setup where Client A and Client B exchange feature information through a feature analogy network.

* Differential privacy is applied at both Client A and Client B to protect data privacy.

* Data from Client A reaches the central entity through a prediction network.

### Interpretation

The diagram depicts a federated learning system designed to protect data privacy. Clients A and B collaborate by sharing feature analogies, while differential privacy mechanisms are implemented to prevent the disclosure of sensitive information. The central entity receives data from Client A through a prediction network, enabling model training without direct access to raw client data. The globe icon with a shield suggests a secure communication channel between the clients. The red color of the head icon with gears and shield near Client A may indicate a potential risk or a different type of privacy mechanism compared to Client B.
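The interpretation leaves the client-side privacy mechanism unspecified. As one illustration, here is a minimal sketch of the Laplace mechanism applied to a client's feature vector before it leaves the client. The function names, the per-coordinate clipping bound, and the choice of the Laplace mechanism are all assumptions for illustration, not details taken from the diagram.

```python
import random

def clip_vector(values, bound):
    """Clamp each coordinate to [-bound, bound] so the sensitivity is known."""
    return [max(-bound, min(bound, v)) for v in values]

def laplace_noise(scale, rng):
    """Draw from Laplace(0, scale) as the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def privatize_features(values, bound, epsilon, rng=None):
    """Hypothetical client-side step: clip, then add Laplace noise calibrated to epsilon.

    Each clipped coordinate can move by at most 2 * bound when the underlying
    record changes, so the L1 sensitivity of the vector is 2 * bound * len(values).
    Laplace noise with scale sensitivity / epsilon then gives epsilon-DP.
    """
    rng = rng or random.Random()
    clipped = clip_vector(values, bound)
    sensitivity = 2.0 * bound * len(clipped)
    scale = sensitivity / epsilon
    return [v + laplace_noise(scale, rng) for v in clipped]

# Hypothetical usage: a three-feature vector, clipping bound 1.0, epsilon 1.0.
rng = random.Random(0)
noisy = privatize_features([0.3, -1.7, 0.9], bound=1.0, epsilon=1.0, rng=rng)
```

Clipping before adding noise is what makes the sensitivity, and therefore the noise scale, well defined; without a bound on the inputs, no finite amount of Laplace noise yields a guarantee.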


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Federated Learning with Differential Privacy

### Overview

The image depicts a diagram illustrating a federated learning system incorporating differential privacy. It shows two clients (A and B) contributing to a central "Feature analogy network and Prediction network" while maintaining differential privacy. The diagram highlights the flow of information and the application of privacy mechanisms.

### Components/Axes

The diagram consists of the following components:

* **Client A:** Represented by a dark blue silhouette wearing a hard hat, a cylindrical database, and a "Differential privacy" shield. Labeled "I".

* **Client B:** Similar to Client A, represented by a dark blue silhouette wearing a hard hat, a cylindrical database, and a "Differential privacy" shield. Labeled "II".

* **Feature analogy network and Prediction network:** A central component depicted as a globe with gears overlaid, and a "Differential privacy" shield.

* **Differential privacy shields:** Blue shields with white gears, indicating the application of differential privacy.

* **Arrows:** Red arrows indicate the flow of information between clients and the central network. Double-sided arrows indicate bidirectional flow.

* **Text Labels:** "Client A", "Client B", "Feature analogy network", "Differential privacy", "Feature analogy network and Prediction network".

* **Roman Numerals:** I, II, and III label the three silhouettes: Client A, Client B, and Client III, respectively.

* **Client III:** A dark blue silhouette wearing a hard hat, labeled "III".

### Detailed Analysis or Content Details

The diagram illustrates the following process:

1. **Client A (I)** sends data to the "Feature analogy network and Prediction network". This is indicated by a red arrow originating from the database associated with Client A.

2. **Client B (II)** sends data to the "Feature analogy network and Prediction network". This is indicated by a red arrow originating from the database associated with Client B.

3. The "Feature analogy network and Prediction network" processes the data from both clients. The globe with gears suggests a complex processing mechanism.

4. The "Feature analogy network and Prediction network" sends information back to both Client A and Client B. This is indicated by double-sided red arrows.

5. Both Client A and Client B utilize "Differential privacy" shields, suggesting that privacy-preserving mechanisms are applied to the data before or after transmission.

6. Client III is present but does not appear to be directly involved in the data flow.

There are no numerical values or specific data points presented in the diagram. It is a conceptual illustration of a system architecture.
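Steps 1 to 4 describe a round in which clients send updates to the central network and receive a combined result back. A common way to realize that combination is federated averaging (FedAvg), in which the server takes a sample-size-weighted mean of the client parameter vectors. The sketch below assumes flat parameter lists and hypothetical client sample counts; the diagram itself does not state which aggregation rule is used.

```python
from typing import List, Sequence

def federated_average(client_params: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Weighted mean of client parameter vectors (the FedAvg aggregation step).

    Each client's vector is weighted by its number of local training examples.
    """
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need at least one client and one size per client")
    total = sum(client_sizes)
    dim = len(client_params[0])
    averaged = [0.0] * dim
    for params, size in zip(client_params, client_sizes):
        if len(params) != dim:
            raise ValueError("all clients must share the same parameter shape")
        weight = size / total
        for i, p in enumerate(params):
            averaged[i] += weight * p
    return averaged

# Hypothetical round: Client A holds 300 examples, Client B holds 100.
global_params = federated_average([[1.0, 2.0], [5.0, 6.0]], [300, 100])
# global_params == [2.0, 3.0]
```

The averaged vector is what the central network would send back to both clients before the next round.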

### Key Observations

* The diagram emphasizes the decentralized nature of federated learning, with data residing on the clients.

* Differential privacy is a key component of the system, indicated by the shields on both clients and the central network.

* The bidirectional arrows suggest an iterative process of model training and refinement.

* The presence of Client III without direct connection suggests a potential role as an observer or a future participant.

### Interpretation

The diagram illustrates a federated learning system designed to protect user privacy. Federated learning allows a model to be trained on decentralized data sources (Client A and Client B) without directly exchanging the data itself. Instead, the clients send model updates or gradients to a central server (Feature analogy network and Prediction network), which aggregates them to improve the global model.

The inclusion of "Differential privacy" shields indicates that noise or other privacy-preserving techniques are applied to the data or model updates to limit what can be inferred about any individual user. This is crucial for protecting sensitive information while still enabling collaborative learning.
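The paragraph mentions noise without saying how much. If the mechanism were the Gaussian mechanism (an assumption; the diagram does not say), the classical calibration sets the noise standard deviation from the L2 sensitivity of the released quantity and the privacy parameters (ε, δ). This sketch only shows that arithmetic; the function name is hypothetical.

```python
import math

def gaussian_sigma(l2_sensitivity: float, epsilon: float, delta: float) -> float:
    """Noise std for the classical Gaussian mechanism.

    sigma = l2_sensitivity * sqrt(2 * ln(1.25 / delta)) / epsilon,
    which yields (epsilon, delta)-DP when 0 < epsilon < 1.
    """
    if not 0 < epsilon < 1:
        raise ValueError("this bound is only valid for 0 < epsilon < 1")
    if not 0 < delta < 1:
        raise ValueError("delta must lie in (0, 1)")
    return l2_sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon

# Example: an update clipped to L2 norm 1.0, with epsilon = 0.5 and delta = 1e-5.
sigma = gaussian_sigma(1.0, 0.5, 1e-5)  # about 9.69
```

Halving ε doubles the required noise, which is the utility cost the shields in the diagram implicitly trade against.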

The diagram suggests a closed-loop system where the central network learns from the clients and provides feedback, iteratively improving the model's performance. The role of Client III is unclear, but it could represent a separate entity that benefits from the trained model or a potential future participant in the federated learning process.

The diagram is a high-level conceptual representation and does not provide details about the specific algorithms or techniques used for federated learning or differential privacy. It serves as a visual aid for understanding the overall architecture and key principles of the system.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Federated Learning System with Differential Privacy and Feature Analogy Networks

### Overview

The image is a technical diagram illustrating a multi-party machine learning system, likely a federated learning framework. It depicts two clients (Client A and Client B) and a central server or aggregator, with components for differential privacy and neural networks (Feature Analogy Network and Prediction Network). The diagram uses icons and text labels to show the flow of data and model components between entities.

### Components/Axes

The diagram is organized into three primary regions, labeled with Roman numerals:

**Region I (Top-Left):**

* **Entity:** A blue human icon labeled "Client A".

* **Data Store:** A blue cylinder icon representing a database, positioned to the right of the Client A icon.

* **Privacy Component:** A red icon depicting a human head silhouette with gears inside, surrounded by a circular network of nodes and lines. This is labeled "Differential privacy" to its right.

* **Network Component:** A blue icon of a human head silhouette with gears inside, protected by a shield. This is positioned to the right of the red differential privacy icon.

**Region II (Bottom-Center):**

* **Entity:** A blue human icon labeled "Client B".

* **Data Store:** A blue cylinder icon representing a database, positioned to the left of the Client B icon.

* **Privacy Component:** A blue icon depicting a human head silhouette with gears inside, protected by a shield. This is positioned to the left of the Client B database and is labeled "Differential privacy" below it.

**Region III (Right):**

* **Entity:** A blue human icon labeled "III". This likely represents a central server, aggregator, or another client.

**Central Network & Flow Components:**

* **Feature Analogy Network Label:** The text "Feature analogy network" appears twice: once on the left side, between Regions I and II, and once on the right side, as part of a longer label.

* **Prediction Network Label:** The text "Prediction network" appears on the right side, below "Feature analogy network".

* **Combined Label:** The full text "Feature analogy network and Prediction network" is positioned in the center-right area.

* **Globe/Network Icon:** A blue globe icon with a grid pattern, overlaid with a shield. It has two horizontal arrows pointing towards it from the left and right, and two vertical arrows (one pointing up, one pointing down) on its left and right sides, respectively. This icon is centrally located between the left and right sides of the diagram.

### Detailed Analysis

The diagram illustrates a data and model flow between clients and a central entity, incorporating privacy-preserving techniques.

1. **Client A (Region I):** Possesses local data (database icon). It applies a "Differential privacy" mechanism (red icon) to its data or model updates. The resulting output is then passed to a "Feature analogy network" (blue shielded head icon).

2. **Client B (Region II):** Also possesses local data (database icon). It applies its own "Differential privacy" mechanism (blue shielded head icon) to its data or updates.

3. **Central Communication Hub:** The globe/shield icon in the center represents a secure communication channel or aggregation server. The horizontal arrows suggest bidirectional data flow between the clients (via their respective networks) and this central hub. The vertical arrows may indicate the upload of local updates and the download of a global model.

4. **Central Entity (Region III):** Labeled "III", this entity is connected to the central hub. The label "Feature analogy network and Prediction network" is placed near it, suggesting this entity hosts or manages these global network components. The flow implies that processed updates from clients are aggregated here, and a global model (incorporating the Feature Analogy and Prediction networks) is distributed back.

**Text Transcription (All text is in English):**

* Client A

* I

* Feature analogy network

* Differential privacy

* Feature analogy network and Prediction network

* Client B

* II

* Differential privacy

* III

### Key Observations

* **Asymmetric Privacy Icons:** The "Differential privacy" component for Client A is red and lacks a shield, while for Client B it is blue and includes a shield. This could indicate different privacy algorithms, different stages of application (e.g., noise addition vs. secure aggregation), or simply a visual distinction.

* **Network Component Placement:** The "Feature analogy network" icon (blue shielded head) is shown as part of Client A's pipeline but is also referenced in the central label associated with Entity III. This suggests it is a shared model component.

* **Centralized Architecture:** The diagram follows a star topology, with clients (I and II) communicating through a central hub (globe icon) to a main server/aggregator (III).

* **Lack of Explicit Data Flow Lines:** While arrows indicate general communication directions, specific lines connecting icons (e.g., from Client A's database to its differential privacy icon) are not drawn, leaving the exact sequence of operations to be inferred.

### Interpretation

This diagram represents a **privacy-preserving federated learning system**. The core idea is to train a machine learning model across multiple decentralized clients (Client A and Client B) holding local data, without sharing the raw data.

* **Differential Privacy (DP):** This is a key privacy technique. The presence of DP icons at each client indicates that local data or model updates are being perturbed with statistical noise before being shared. This protects the confidentiality of individual data points within each client's dataset. The different visual styles for Client A and B might imply heterogeneous privacy requirements or methods.

* **Feature Analogy Network & Prediction Network:** These are likely the core neural network architectures being trained. The "Feature analogy network" may be responsible for learning invariant representations or mappings between features across different clients' data distributions (addressing data heterogeneity). The "Prediction network" performs the final task (e.g., classification). The fact that they are labeled centrally suggests they form the global model that is iteratively updated by aggregating the differentially private updates from all clients.

* **Workflow:** The inferred workflow is: 1) Each client trains the global model locally on its private data. 2) They apply differential privacy to their model updates (gradients). 3) The privatized updates are sent securely (via the globe/shield channel) to the central server (III). 4) The server aggregates these updates (e.g., via federated averaging) to improve the global Feature Analogy and Prediction Networks. 5) The updated global model is sent back to the clients for the next training round.

* **Purpose:** The system aims to achieve two goals simultaneously: **collaborative learning** (improving a shared model by leveraging diverse data from multiple sources) and **privacy preservation** (bounding how much the shared updates can reveal about any individual record in a client's raw data, thanks to differential privacy). This is crucial in domains like healthcare or finance where data sensitivity is paramount.
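Steps 2 to 4 of the inferred workflow (privatize updates, send them to server III, aggregate) can be sketched in the style of DP-FedAvg: clip each client update to a maximum L2 norm, sum the clipped updates, add Gaussian noise scaled to that norm, and average. This is an assumed instantiation of the workflow; the function names, `max_norm`, and `noise_multiplier` values are hypothetical and not taken from the diagram.

```python
import math
import random
from typing import List, Sequence

def clip_l2(update: Sequence[float], max_norm: float) -> List[float]:
    """Scale an update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(u * u for u in update))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [u * factor for u in update]

def dp_aggregate(updates: Sequence[Sequence[float]], max_norm: float,
                 noise_multiplier: float, rng: random.Random) -> List[float]:
    """One server-side aggregation step in the style of DP-FedAvg.

    1. Clip every client update to L2 norm max_norm (bounds each client's influence).
    2. Sum the clipped updates and add Gaussian noise with std noise_multiplier * max_norm.
    3. Divide by the number of clients to get the noisy mean update.
    """
    if not updates:
        raise ValueError("need at least one client update")
    n = len(updates)
    dim = len(updates[0])
    total = [0.0] * dim
    for update in updates:
        for i, value in enumerate(clip_l2(update, max_norm)):
            total[i] += value
    std = noise_multiplier * max_norm
    return [(t + rng.gauss(0.0, std)) / n for t in total]

# Hypothetical round with two clients (A and B).
rng = random.Random(42)
new_delta = dp_aggregate([[0.2, -0.1], [1.5, 2.0]], max_norm=1.0,
                         noise_multiplier=1.1, rng=rng)
```

Dividing by the observed number of clients keeps the sketch short; formal DP-FedAvg analyses typically divide by a fixed expected client count instead, so that the denominator does not itself depend on participation.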


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Federated Learning System with Differential Privacy

### Overview

The diagram illustrates a federated learning architecture involving three entities (Client A, Client B, and Client III) and two data containers (I and II). It emphasizes data privacy through differential privacy mechanisms and feature analogy networks. Arrows indicate data flow, and shields/gears symbolize privacy and processing steps.

### Components/Axes

- **Entities**:
  - Client A (I), Client B (II), Client III (III)
  - Data containers (blue cylinders) labeled I and II

- **Networks**:
  - Feature analogy network (red gears/shield)
  - Prediction network (blue shield)

- **Privacy Mechanisms**:
  - Differential privacy (blue shields with "Differential privacy" labels)

- **Flow Direction**:
  - Data moves from Client A → Feature analogy network → Prediction network → Client B (via differential privacy).
  - Data moves from Client B → Differential privacy → Feature analogy network → Prediction network → Client III.

### Detailed Analysis

1. **Client A (I)**:
   - Data container (blue cylinder) labeled "Client A."
   - Outputs to a **feature analogy network** (red gears/shield), which connects to a **prediction network** (blue shield).
   - Differential privacy is applied before data reaches Client B.

2. **Client B (II)**:
   - Data container (blue cylinder) labeled "Client B."
   - Receives data from Client A’s prediction network via differential privacy.
   - Outputs to a **feature analogy network** and **prediction network**, then to Client III via differential privacy.

3. **Client III (III)**:
   - Receives processed data from Client B’s prediction network after differential privacy.

4. **Key Symbols**:
   - **Shields**: Represent differential privacy (blue) and prediction networks (blue).
   - **Gears**: Represent feature analogy networks (red).
   - **Arrows**: Indicate data flow direction.

### Key Observations

- **Bidirectional Collaboration**: Client A and Client B exchange data through shared networks, suggesting collaborative model training.

- **Privacy Emphasis**: Differential privacy is applied at multiple stages (before data leaves Client A, before data reaches Client B, and before data reaches Client III).

- **Feature Alignment**: The feature analogy network (red gears) likely aligns data features between clients to improve prediction accuracy.
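Applying differential privacy at several handoffs has a cost: if all three stages touch the same underlying records, basic sequential composition says their (ε, δ) parameters add up. The sketch below tallies a total budget for the three stages listed above; the per-stage values and the `PrivacyCost` type are hypothetical, and tighter accountants (advanced composition, Rényi DP) would report smaller totals.

```python
from dataclasses import dataclass
from typing import Iterable

@dataclass(frozen=True)
class PrivacyCost:
    epsilon: float
    delta: float = 0.0

def compose_sequential(costs: Iterable[PrivacyCost]) -> PrivacyCost:
    """Basic sequential composition: epsilons add and deltas add."""
    total_eps = 0.0
    total_delta = 0.0
    for cost in costs:
        total_eps += cost.epsilon
        total_delta += cost.delta
    return PrivacyCost(total_eps, total_delta)

# Hypothetical per-handoff budgets for the three stages named above.
stages = [
    PrivacyCost(epsilon=0.5, delta=1e-6),  # before data leaves Client A
    PrivacyCost(epsilon=0.5, delta=1e-6),  # before data reaches Client B
    PrivacyCost(epsilon=1.0, delta=1e-6),  # before data reaches Client III
]
total = compose_sequential(stages)
# total.epsilon == 2.0
```

Basic composition is the simplest and most conservative accountant, which makes it a reasonable first check on whether "privacy at every handoff" still leaves a meaningful overall guarantee.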

### Interpretation

This diagram represents a **secure, privacy-preserving federated learning system**. Clients (A and B) contribute data without sharing raw information, using differential privacy to perturb data with calibrated noise at each transfer. The feature analogy network ensures compatibility between clients’ data features, while the prediction network aggregates insights. Client III may act as a central server or another participant in the federated system. The use of shields and gears visually reinforces the balance between data utility (gears) and privacy (shields).

**Notable Patterns**:

- Differential privacy is prioritized at every data handoff, minimizing exposure.

- The feature analogy network acts as a bridge between disparate data sources, enabling cross-client collaboration.

- Client III’s role is ambiguous but likely involves receiving finalized, privacy-protected insights.

**Underlying Logic**:

The system prioritizes **data minimization** (only sharing necessary features) and **privacy guarantees** (differential privacy at every stage). The red gears (feature analogy network) suggest a focus on aligning heterogeneous data, while blue shields (prediction/differential privacy) emphasize secure processing.
