## Diagram: Large Language Model Reasoning Process

### Overview

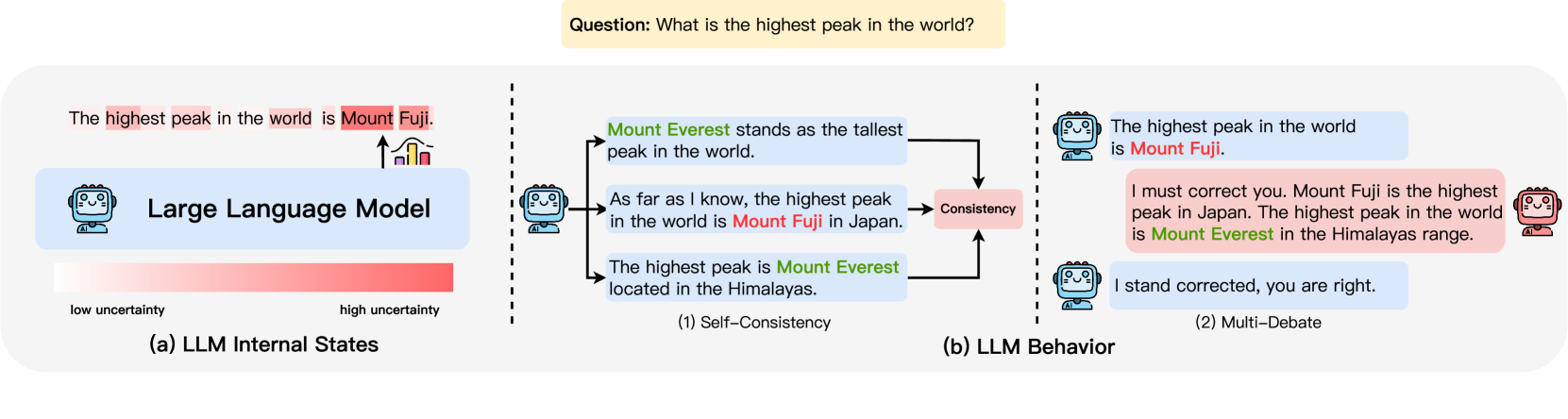

The diagram illustrates how a Large Language Model (LLM) handles uncertainty when answering the question "What is the highest peak in the world?" Panel (a) uses a bar chart to show uncertainty in the model's internal states; panel (b) contrasts two reasoning strategies, **Self-Consistency** and **Multi-Debate**.

---

### Components/Axes

#### (a) LLM Internal States

- **Bar Chart**:
  - **X-axis**: Uncertainty levels (labeled "low uncertainty" and "high uncertainty").
  - **Y-axis**: Labeled "Large Language Model".
  - **Legend**: Implied by bar color (red = high uncertainty).
- **Text**: "The highest peak in the world is Mount Fuji" (highlighted in red, indicating high uncertainty).

#### (b) LLM Behavior

- **Self-Consistency (1)**:
  - **Components**:
    - **Input**: Question: "What is the highest peak in the world?"
    - **Sampled Responses**:
      - "Mount Everest stands as the tallest peak in the world."
      - "As far as I know, the highest peak in the world is Mount Fuji in Japan."
      - "The highest peak is Mount Everest located in the Himalayas."
    - **Output**: Resolves to "Mount Everest" (correct answer), the answer given by two of the three responses.
  - **Flow**: Arrows lead from the independently sampled responses to a single consistent answer.

- **Multi-Debate (2)**:
  - **Components**:
    - **Input**: Question: "What is the highest peak in the world?"
    - **Debate Turns**:
      - "The highest peak in the world is Mount Fuji."
      - "I must correct you. Mount Fuji is the highest peak in Japan. The highest peak in the world is Mount Everest in the Himalayas range."
      - "I stand corrected, you are right."
    - **Output**: Final answer: "Mount Everest."
  - **Flow**: Arrows show the back-and-forth correction between the speakers.
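Self-consistency is conventionally implemented as a majority vote over the final answers of independently sampled responses. The sketch below applies that vote to the three Self-Consistency responses listed above. It assumes the responses have already been sampled, and it uses a hypothetical keyword matcher (`extract_answer` with a fixed `CANDIDATES` tuple) in place of a real answer extractor.

```python
from collections import Counter
from typing import List, Optional, Tuple

# Hypothetical answer vocabulary for this one question; a real pipeline
# would use a task-specific answer extractor instead of keyword matching.
CANDIDATES = ("Mount Everest", "Mount Fuji")


def extract_answer(response: str) -> Optional[str]:
    """Return the first name in CANDIDATES that occurs in the response, if any."""
    for name in CANDIDATES:
        if name in response:
            return name
    return None


def self_consistency(responses: List[str]) -> Tuple[str, int]:
    """Majority vote over the final answers extracted from sampled responses."""
    answers = [a for a in map(extract_answer, responses) if a is not None]
    if not answers:
        raise ValueError("no extractable answer in any response")
    # most_common(1) returns [(answer, count)] for the most frequent answer.
    answer, votes = Counter(answers).most_common(1)[0]
    return answer, votes


samples = [
    "Mount Everest stands as the tallest peak in the world.",
    "As far as I know, the highest peak in the world is Mount Fuji in Japan.",
    "The highest peak is Mount Everest located in the Himalayas.",
]
print(self_consistency(samples))  # ('Mount Everest', 2)
```

`Counter.most_common` orders equal counts by first appearance, so a tie would go to whichever answer was sampled first. A production version might handle ties explicitly, for example by drawing more samples.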

---

### Detailed Analysis

#### (a) LLM Internal States

- The bar chart shows **high uncertainty** (red bar) for the model's initial claim that "Mount Fuji" is the highest peak. In other words, the model's internal states indicate low confidence in this incorrect claim.
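The figure does not say how the uncertainty in (a) is computed. One common family of estimates uses the model's own token probabilities. The minimal sketch below assumes per-token log-probabilities are available for the generated answer. It scores the answer by its mean negative log-likelihood and labels it "high" uncertainty above a threshold. The log-probability values and the `threshold=1.0` default are made up for illustration.

```python
import math


def mean_nll(token_logprobs):
    """Average negative log-likelihood (in nats) of the generated tokens."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    return -sum(token_logprobs) / len(token_logprobs)


def uncertainty_label(token_logprobs, threshold=1.0):
    """Label an answer 'high' or 'low' uncertainty; the threshold is hypothetical."""
    return "high" if mean_nll(token_logprobs) > threshold else "low"


# Made-up per-token log-probabilities, for illustration only.
confident = [math.log(0.9), math.log(0.95), math.log(0.85)]
hesitant = [math.log(0.3), math.log(0.2), math.log(0.4)]

print(uncertainty_label(confident))  # low  (mean NLL ~0.11)
print(uncertainty_label(hesitant))   # high (mean NLL ~1.24)
```

`math.exp(mean_nll(...))` gives the equivalent per-token perplexity if that scale is more convenient.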

#### (b) LLM Behavior

1. **Self-Consistency**:

   - The model samples multiple candidate answers (two naming Everest, one naming Fuji) and selects **Mount Everest** by majority vote.
   - Agreement across samples serves as a confidence signal: the outlying Fuji answer is outvoted rather than explicitly corrected.

2. **Multi-Debate**:

   - The participants engage in a corrective dialogue: one initially asserts "Mount Fuji", another corrects it, and the first accepts "Mount Everest" as the correct answer.
   - Unlike Self-Consistency, the error is explicitly identified and corrected rather than outvoted.
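The debate procedure can be sketched as a loop in which each participant sees the others' latest answers and may revise its own. This version stops when all participants agree or a round limit is reached. It assumes each participant is a plain function from (question, other answers) to a new answer. The stub agents `agent_a` and `agent_b` are hypothetical and hard-coded to replay the exchange in the figure; a real system would call an LLM on each turn.

```python
from typing import Callable, List

# A participant maps (question, other participants' latest answers) to an answer.
Agent = Callable[[str, List[str]], str]


def debate(question: str, agents: List[Agent], max_rounds: int = 3) -> List[str]:
    """Run debate rounds until all agents agree or max_rounds is exhausted."""
    answers = [agent(question, []) for agent in agents]  # independent first round
    for _ in range(max_rounds):
        if len(set(answers)) == 1:  # consensus reached
            break
        # Each agent sees the other agents' answers from the previous round.
        answers = [
            agent(question, answers[:i] + answers[i + 1:])
            for i, agent in enumerate(agents)
        ]
    return answers


# Hypothetical stub agents that replay the exchange shown in the figure.
def agent_a(question: str, others: List[str]) -> str:
    # Starts with Fuji and defers once another agent proposes Everest.
    return "Mount Everest" if "Mount Everest" in others else "Mount Fuji"


def agent_b(question: str, others: List[str]) -> str:
    # Holds the correct answer throughout.
    return "Mount Everest"


print(debate("What is the highest peak in the world?", [agent_a, agent_b]))
# ['Mount Everest', 'Mount Everest']
```

If the round limit is hit without consensus, the function returns the last round's answers unchanged. A caller could then fall back to a majority vote over them.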

---

### Key Observations

1. **Uncertainty Correlation**: The red bar in (a) corresponds to the model's initial, incorrect assertion about Mount Fuji, suggesting that high internal uncertainty accompanies the error.

2. **Resolution Strategies**:

   - **Self-Consistency** resolves ambiguity by selecting the most frequent answer among the samples (Everest).

   - **Multi-Debate** surfaces the disagreement explicitly and resolves it through challenge and correction.

3. **Final Answer Alignment**: Both strategies converge on **Mount Everest**, but Multi-Debate makes the correction explicit, giving a more transparent path to the answer.
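The ambiguity that Self-Consistency resolves can also be quantified from behavior alone. One common measure is the entropy of the distribution of sampled final answers, which is 0 when every sample agrees. The sketch below assumes the answers have already been reduced to canonical strings and uses the three Self-Consistency answers from the figure.

```python
import math
from collections import Counter


def answer_entropy(answers):
    """Shannon entropy (in bits) of the empirical distribution of final answers."""
    if not answers:
        raise ValueError("need at least one answer")
    n = len(answers)
    # Sum of p * log2(1/p) over distinct answers, with p = count / n.
    return sum((c / n) * math.log2(n / c) for c in Counter(answers).values())


samples = ["Mount Everest", "Mount Fuji", "Mount Everest"]
print(round(answer_entropy(samples), 3))      # 0.918
print(answer_entropy(["Mount Everest"] * 3))  # 0.0
```

With two possible answers the maximum is 1 bit. The figure's 2-to-1 split therefore sits fairly close to maximal disagreement, even though the majority vote is correct.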

---

### Interpretation

The diagram highlights how LLMs manage uncertainty and reasoning errors:

- **High Uncertainty States**: Incorrect initial claims (e.g., "Mount Fuji") coincide with high uncertainty in the model's internal states, which could serve as a signal of potential inaccuracy.

- **Self-Consistency**: Acts as a "sanity check" that favors the answer most samples agree on (Everest).

- **Multi-Debate**: Mimics human-like reasoning by simulating counterarguments and corrections, which can lead to more robust conclusions.

- **Practical Implication**: Structured reasoning frameworks (like Multi-Debate) may outperform simpler methods in complex, factually ambiguous tasks by explicitly modeling and resolving contradictions.

The self-correction shown here illustrates how iterative reasoning can reduce uncertainty and improve factual accuracy.