## Heatmap: Average JS Divergence Across Layers and Components

### Overview

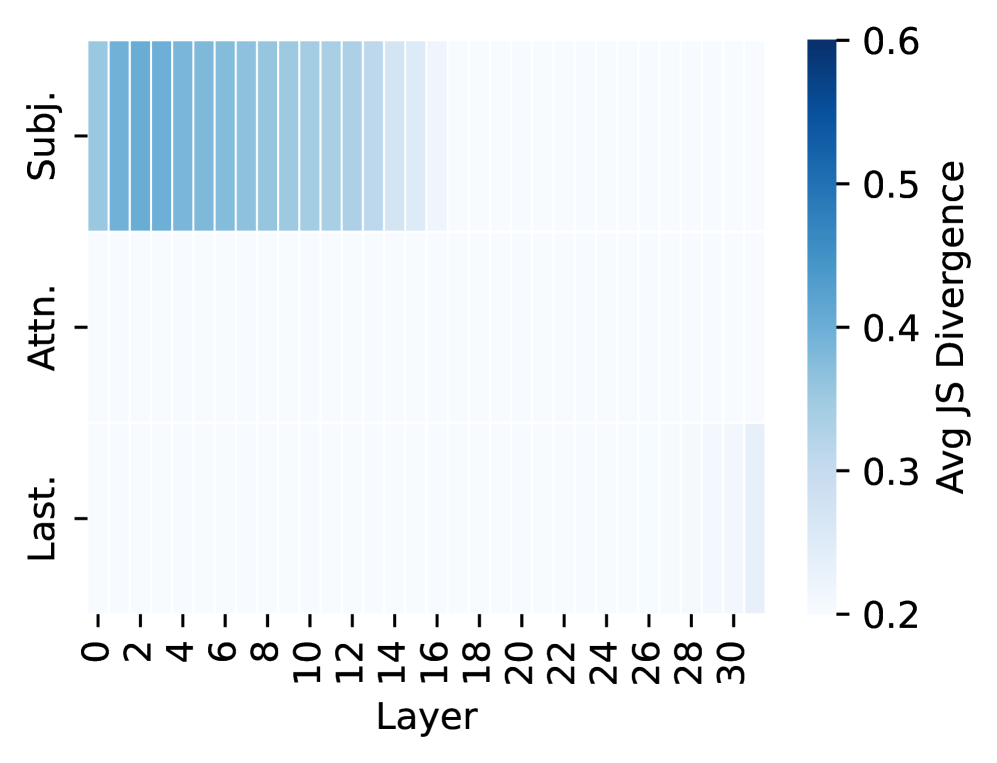

The image is a heatmap visualizing the average Jensen-Shannon (JS) divergence across 31 layers (0–30) for three components: "Subj." (subject), "Attn." (attention), and "Last." (final layer). The color gradient ranges from light blue (low divergence, ~0.2) to dark blue (high divergence, ~0.6), with the legend positioned on the right.

---

### Components/Axes

- **X-axis (Layer)**: Labeled "Layer," with integer increments from 0 to 30.

- **Y-axis (Components)**: Three categories:

  - "Subj." (subject)

  - "Attn." (attention)

  - "Last." (final layer)

- **Color Scale**: "Avg JS Divergence" (0.2–0.6), with darker blue indicating higher divergence.
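
For readers unfamiliar with the metric on the color scale, the following is a minimal sketch of how a Jensen-Shannon divergence and its average over several comparisons could be computed. The figure does not state which distributions are compared or which logarithm base is used, so the function names (`kl_divergence`, `js_divergence`, `average_js`) and the choice of natural logarithm are assumptions made for illustration only. With natural logarithms the value is bounded above by ln 2 ≈ 0.693, which is compatible with the 0.2–0.6 range shown in the legend.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) in nats; terms where p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_divergence(p, q):
    """Jensen-Shannon divergence between two discrete distributions.

    Assumes p and q have equal length and each sums to 1.
    Natural log is an assumption; the figure does not specify the base.
    """
    # Mixture distribution; m_i > 0 wherever p_i > 0 or q_i > 0.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

def average_js(pairs):
    """Mean JS divergence over an iterable of (p, q) distribution pairs."""
    pairs = list(pairs)
    return sum(js_divergence(p, q) for p, q in pairs) / len(pairs)
```

Each heatmap cell would then correspond to one call to `average_js` for a given component and layer, under the assumption that the plotted value is a plain mean over examples.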

---

### Detailed Analysis

1. **Subj. (Subject)**:

   - Dark blue cells dominate the first 15 layers (0–14), indicating high divergence (~0.5–0.6).

   - Gradual lightening from layer 15 onward, with values dropping to ~0.3 by layer 30.

   - Spatial grounding: The darkest cells are clustered in the top-left region.

2. **Attn. (Attention)**:

   - Mostly light blue across all layers (~0.2–0.3).

   - Slightly darker in the first 10 layers (~0.25), but no clear trend.

3. **Last. (Final Layer)**:

   - Light blue throughout, with minimal divergence (~0.2–0.3).

   - No discernible pattern across layers.

---

### Key Observations

- **Highest Divergence**: "Subj." in early layers (0–14) shows the highest divergence (~0.5–0.6).

- **Stability**: "Attn." and "Last." exhibit consistently low divergence, suggesting stability.

- **Transition**: "Subj." divergence declines from layer 15 onward, reaching ~0.3 by layer 30, implying a shift in focus or representation.
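
If the per-layer values behind the heatmap are available, the transition observation can be checked numerically rather than by eye. The sketch below uses a hypothetical helper, `first_layer_below`, and a list of placeholder values invented purely for illustration; they are not read from the figure, and the 0.5 threshold is likewise an assumption.

```python
def first_layer_below(values, threshold):
    """Return the index of the first layer whose value is below threshold.

    Returns None if no layer falls below the threshold.
    """
    for layer, value in enumerate(values):
        if value < threshold:
            return layer
    return None

# Placeholder values, NOT taken from the figure: 15 high layers, then a decline.
placeholder = [0.55] * 15 + [0.45, 0.40, 0.35, 0.30]
print(first_layer_below(placeholder, 0.5))  # prints 15
```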

---

### Interpretation

The data suggests that subject-related features ("Subj.") show high divergence in early layers (0–14) and progressively lower divergence from layer 15 onward, potentially reflecting a model's initial exploration of subject-specific patterns followed by refinement. Attention ("Attn.") and final layer representations ("Last.") stay at low divergence (~0.2–0.3) across all layers, indicating consistent processing or aggregation of information. The decline in "Subj." divergence from layer 15 onward may signal a transition from exploration to exploitation in how the model processes the subject, although the heatmap alone cannot confirm this. This pattern could inform layer-wise analysis of neural network behavior, particularly in tasks requiring subject-specific attention.