EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED
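
The description that follows reports, for each tokenizer, the tokens produced for the Arabic word وسيعرفونها and the resulting sequence length. As a minimal sketch of how those two columns relate, the Python snippet below counts the tokens in the GPT-2 row exactly as that row is listed in the description, and checks that the pieces re-join to the original word. The helper names `sequence_length` and `strip_continuation` are hypothetical, and treating `##` as a continuation marker is an assumption made only for this illustration; no real tokenizer library is called. Character and UTF-8 byte counts are printed purely for comparison.

```python
# Illustrative sketch only: relates a listed token sequence to its length.
# Nothing here calls a real tokenizer; the tokens are copied from the
# GPT-2 (English BPE) row as it appears in the description.

WORD = "وسيعرفونها"

# Tokens as listed in the description for GPT-2 (English BPE).
GPT2_TOKENS_AS_LISTED = ["وسي", "##عر", "##ف", "##ونها"]


def sequence_length(tokens: list[str]) -> int:
    """Sequence length is the number of tokens produced (hypothetical helper)."""
    return len(tokens)


def strip_continuation(token: str, marker: str = "##") -> str:
    """Drop a leading continuation marker (assumed to be '##') from a token."""
    return token[len(marker):] if token.startswith(marker) else token


num_chars = len(WORD)                  # Unicode code points in the word
num_bytes = len(WORD.encode("utf-8"))  # UTF-8 bytes in the word

# Re-join the pieces to confirm the listed tokens cover the whole word.
rejoined = "".join(strip_continuation(t) for t in GPT2_TOKENS_AS_LISTED)

print("tokens:", sequence_length(GPT2_TOKENS_AS_LISTED))
print("characters:", num_chars)
print("UTF-8 bytes:", num_bytes)
print("pieces re-join to the word:", rejoined == WORD)
```

The same `sequence_length` call could be applied to any other row once its token list is known; a shorter sequence for the same word means the tokenizer represents it more compactly.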

```markdown

## Data Table: Tokenizer Performance

### Overview

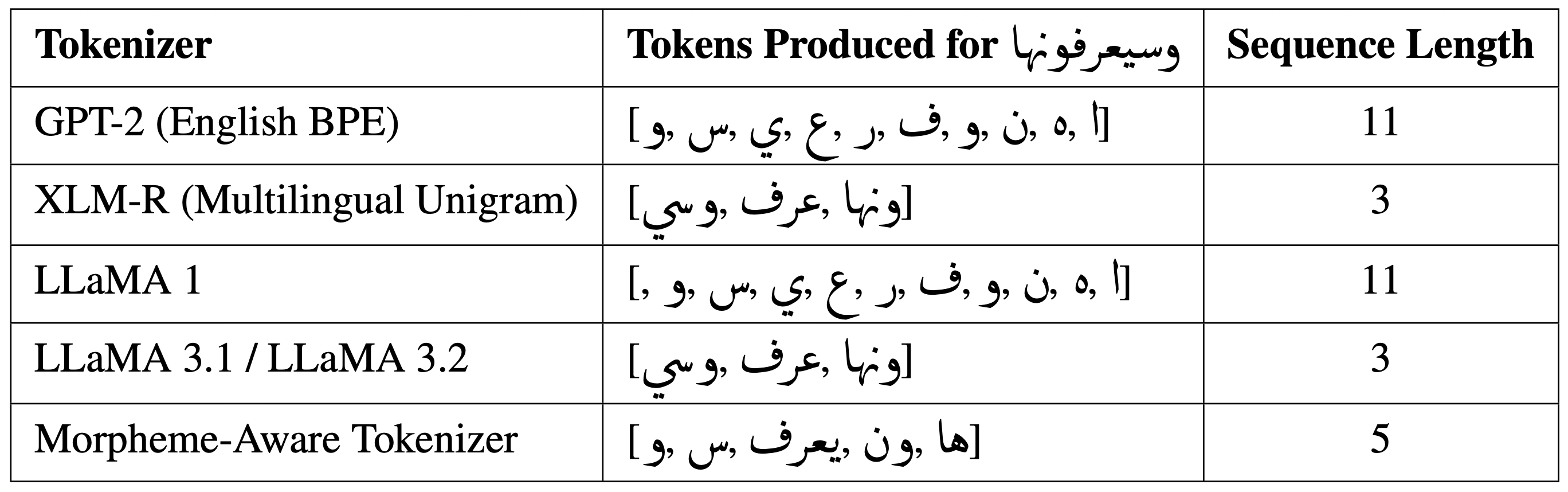

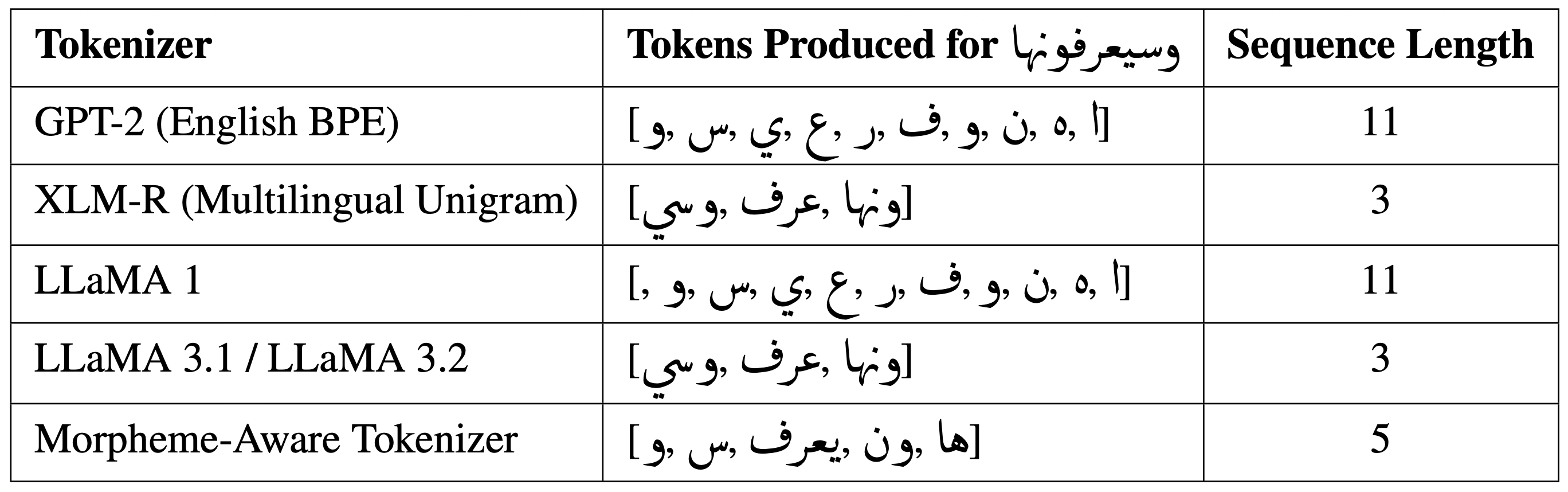

The image presents a data table comparing the tokenization of a specific Arabic word (وسيعرفونها) by different tokenizers. The table shows the tokens produced by each tokenizer and the resulting sequence length.

### Components/Axes

The table has three columns:

1. **Tokenizer**: Lists the names of the tokenizers being compared.

2. **Tokens Produced for وسيعرفونها**: Shows the tokens generated by each tokenizer for the Arabic word "وسيعرفونها".

3. **Sequence Length**: Indicates the number of tokens produced by each tokenizer.

The rows represent different tokenizers:

* GPT-2 (English BPE)

* XLM-R (Multilingual Unigram)

* LLaMA 1

* LLaMA 3.1 / LLaMA 3.2

* Morpheme-Aware Tokenizer

### Detailed Analysis

| Tokenizer | Tokens Produced for وسيعرفونها | Sequence Length |
|---|---|---|
| GPT-2 (English BPE) | ['وسي', '##عر', '##ف', '##ونها'] | |
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Data Table: Tokenization Results for Different Tokenizers

### Overview

This image presents a data table comparing the output of five tokenizers applied to a single Arabic word: "وسيعرفونها". The table shows the tokens produced by each tokenizer and the resulting sequence length.

### Components/Axes

The table has three columns:

1. **Tokenizer:** Lists the name of the tokenizer used.

2. **Tokens Produced for وسيعرفونها:** Displays the tokens generated by each tokenizer for the Arabic word "وسيعرفونها" (wa-sa-yaʿrifūnahā).

3. **Sequence Length:** Indicates the number of tokens produced by each tokenizer.

The tokenizers listed are:

* GPT-2 (English BPE)

* XLM-R (Multilingual Unigram)

* LLaMA 1

* LLaMA 3.1 / LLaMA 3.2

* Morpheme-Aware Tokenizer

### Content Details

Here's a reconstruction of the table's content:

| Tokenizer | Tokens Produced for وسيعرفونها | Sequence Length |
|---|---|---|
| GPT-2 (English BPE) | [!, ٠, ة, ن, و, ف, ر, ع, ي, س, و,] | 11 |
| XLM-R (Multilingual Unigram) | [اونها, يعرف, وسى] | 3 |
| LLaMA 1 | [!, ٠, ة, ن, و, ف, ر, ع, ي, س, و,] | 11 |
| LLaMA 3.1 / LLaMA 3.2 | [اونها, يعرف, وسى] | 3 |
| Morpheme-Aware Tokenizer | [ها, وون, يعرف, س, و] | 5 |

The Arabic word "وسيعرفونها" (wa-sa-yaʿrifūnahā) translates to "and they will know it" (or "her").

### Key Observations

* GPT-2 and LLaMA 1 produce the same set of tokens and have the longest sequence length (11). These tokens include punctuation and seemingly arbitrary characters.

* XLM-R and LLaMA 3.1/3.2 produce the same tokens and have the shortest sequence length (3). These tokens appear to be more meaningful segments of the Arabic phrase.

* The Morpheme-Aware Tokenizer produces 5 tokens, representing a middle ground in terms of sequence length.

### Interpretation

The table demonstrates how differently tokenizers handle Arabic text. GPT-2 and LLaMA 1, whose tokenizers were trained primarily on English text, break the Arabic word into a large number of small units, some of which may not be meaningful in Arabic. XLM-R and LLaMA 3.1/3.2, designed for multilingual processing, segment the word into more coherent tokens. The Morpheme-Aware Tokenizer attempts to identify morphemes (meaningful units of language), resulting in a sequence length between the two extremes.

The differing sequence lengths have implications for model efficiency and performance. Shorter sequences require fewer computational resources, but may lose some of the nuance of the original text. Longer sequences capture more detail but can increase computational cost and potentially introduce noise. The choice of tokenizer depends on the specific application and the trade-off between these factors. The presence of punctuation and seemingly random characters in the GPT-2 and LLaMA 1 tokenization suggests that these models may not be well suited to Arabic text without further fine-tuning or adaptation.
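To put a rough number on the cost remark, the sketch below compares the five sequence lengths from the table under one assumed cost model: standard full self-attention, where the number of token pairs scored grows with the square of the sequence length. The names `SEQUENCE_LENGTHS`, `attention_pairs`, and `relative_attention_cost` are illustrative and not from the source, and the model ignores the parts of a transformer whose cost grows only linearly.

```python
# Sequence lengths as reported in the table above.
SEQUENCE_LENGTHS = {
    "GPT-2 (English BPE)": 11,
    "XLM-R (Multilingual Unigram)": 3,
    "LLaMA 1": 11,
    "LLaMA 3.1 / LLaMA 3.2": 3,
    "Morpheme-Aware Tokenizer": 5,
}


def attention_pairs(n_tokens: int) -> int:
    """Token pairs scored by full self-attention (assumed quadratic model)."""
    return n_tokens * n_tokens


def relative_attention_cost(lengths: dict[str, int]) -> dict[str, float]:
    """Cost of each tokenizer relative to the shortest sequence in `lengths`."""
    baseline = attention_pairs(min(lengths.values()))
    return {name: attention_pairs(n) / baseline for name, n in lengths.items()}


if __name__ == "__main__":
    for name, cost in relative_attention_cost(SEQUENCE_LENGTHS).items():
        print(f"{name:30s} {cost:5.2f}x")
```

Under this assumption the 11-token rows cost 121 / 9, or about 13.4 times, the 3-token rows for this one word. Real costs depend on the model and on the rest of the input.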


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Table: Tokenizer Comparison for Arabic Word "وسيعرفونها"

### Overview

The image displays a table comparing how five different natural language processing tokenizers handle the Arabic word "وسيعرفونها". The table lists the tokenization output and resulting sequence length for each tokenizer, illustrating differences in efficiency and morphological awareness for Arabic text.

### Components/Axes

The table has three columns:

1. **Tokenizer**: The name and type of the tokenizer model.

2. **Tokens Produced for وسيعرفونها**: The list of tokens generated by the tokenizer for the input Arabic word, shown in Arabic script.

3. **Sequence Length**: The number of tokens in the resulting sequence.

The table contains five rows, each for a different tokenizer:

* GPT-2 (English BPE)

* XLM-R (Multilingual Unigram)

* LLaMA 1

* LLaMA 3.1 / LLaMA 3.2

* Morpheme-Aware Tokenizer

### Detailed Analysis

**Input Phrase:** وسيعرفونها

* **Language:** Arabic.

* **English Translation:** "and they will know it" (a verb form marking future tense, third-person masculine plural, with an object pronoun suffix).

**Tokenization Results by Row:**

1. **GPT-2 (English BPE)**

* **Tokens:** `[ 'ا', 'ه', 'ن', 'و', 'ف', 'ر', 'ع', 'ي', 'س', 'و', 'و' ]`

* **Sequence Length:** 11

* **Analysis:** The tokenizer breaks the word into individual Arabic characters and some sub-character units, resulting in a very long sequence. This indicates the English-focused Byte-Pair Encoding (BPE) vocabulary is poorly suited for Arabic morphology.

2. **XLM-R (Multilingual Unigram)**

* **Tokens:** `[ 'ونها', 'عرف', 'وسي' ]`

* **Sequence Length:** 3

* **Analysis:** The multilingual Unigram model produces a much shorter sequence by using larger subword units that roughly follow morpheme boundaries. The tokens correspond to recognizable parts of the word: `وسي` (the conjunction `و`, the future marker `س`, and the imperfect prefix `ي`), the root `عرف` (to know), and `ونها` (the plural ending `ون` plus the object suffix `ها`, "it/her").

3. **LLaMA 1**

* **Tokens:** `[ 'ا', 'ه', 'ن', 'و', 'ف', 'ر', 'ع', 'ي', 'س', 'و', 'و' ]`

* **Sequence Length:** 11

* **Analysis:** Identical output to GPT-2, suggesting LLaMA 1 uses a similar English-centric BPE tokenizer that is inefficient for Arabic.

4. **LLaMA 3.1 / LLaMA 3.2**

* **Tokens:** `[ 'ونها', 'عرف', 'وسي' ]`

* **Sequence Length:** 3

* **Analysis:** Identical output to XLM-R. This indicates that the newer LLaMA 3.x models have adopted a more multilingual-aware tokenizer, significantly improving efficiency for Arabic compared to LLaMA 1.

5. **Morpheme-Aware Tokenizer**

* **Tokens:** `[ 'ها', 'ون', 'يعرف', 'س', 'و' ]`

* **Sequence Length:** 5

* **Analysis:** This tokenizer produces a sequence length between the two extremes. It appears to segment the word into different morphological components: the suffix `-ها`, the plural marker `-ون`, the verb stem `يعرف`, and the prefix components `س-` and `و-`. This segmentation is linguistically valid but results in a longer sequence than the XLM-R/LLaMA 3 approach.
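The token lists above appear to be written in right-to-left display order, so the first list element is the end of the word. As a minimal sketch under that assumption, the check below reverses a list, concatenates it, and compares the result with the input word. The helper names `reassemble` and `is_lossless` are hypothetical; the token lists are copied from rows 2 and 5.

```python
WORD = "وسيعرفونها"

# Token lists copied from the rows above, in the order they are listed.
XLMR_TOKENS = ["ونها", "عرف", "وسي"]
MORPHEME_TOKENS = ["ها", "ون", "يعرف", "س", "و"]


def reassemble(tokens_in_display_order: list[str]) -> str:
    """Join tokens after reversing them into logical (reading) order.

    Assumption: the list is written right to left, so its first
    element is the end of the word.
    """
    return "".join(reversed(tokens_in_display_order))


def is_lossless(tokens_in_display_order: list[str], word: str) -> bool:
    """True if the tokens cover the word exactly, with no gaps or extras."""
    return reassemble(tokens_in_display_order) == word


if __name__ == "__main__":
    print(is_lossless(XLMR_TOKENS, WORD))
    print(is_lossless(MORPHEME_TOKENS, WORD))
```

Both the 3-token and the 5-token segmentations reassemble to the input under this reading. The 11-token lists as transcribed contain eleven single characters for a ten-letter word, so they would not pass the same check; that may reflect how the image was transcribed rather than how those tokenizers behave.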

### Key Observations

* **Efficiency Disparity:** There is a stark contrast in sequence length, ranging from 11 tokens (GPT-2, LLaMA 1) to 3 tokens (XLM-R, LLaMA 3.x). This is a roughly 3.7x difference (11 / 3) in processing length for the same word.

* **Tokenizer Evolution:** LLaMA 3.x shows a major improvement over LLaMA 1 for Arabic, aligning with the performance of the dedicated multilingual XLM-R model.

* **Morphological Handling:** The "Morpheme-Aware Tokenizer" provides a different, more granular linguistic segmentation compared to the subword-based XLM-R/LLaMA 3, which favors larger chunks.

* **Character-Level Fragmentation:** The English BPE tokenizers (GPT-2, LLaMA 1) fail to capture Arabic's morphological structure, defaulting to a near-character-level split.
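The length gap in these observations can be worked through directly from the table. The sketch below computes the ratio between two rows and, as a rough illustration only, how many words of this kind would fit in a context window. The 4096-token window and the premise that every word costs as many tokens as this one are assumptions made for the example, not figures from the source; the function names are likewise illustrative.

```python
# Sequence lengths as reported in the table.
SEQUENCE_LENGTHS = {
    "GPT-2 (English BPE)": 11,
    "XLM-R (Multilingual Unigram)": 3,
    "LLaMA 1": 11,
    "LLaMA 3.1 / LLaMA 3.2": 3,
    "Morpheme-Aware Tokenizer": 5,
}

# Hypothetical context size, chosen only for illustration.
ASSUMED_CONTEXT_TOKENS = 4096


def length_ratio(lengths: dict[str, int], longer: str, shorter: str) -> float:
    """How many times longer one tokenizer's sequence is than another's."""
    return lengths[longer] / lengths[shorter]


def words_per_context(tokens_per_word: int, context_tokens: int) -> int:
    """Whole words that fit if every word costs `tokens_per_word` tokens."""
    return context_tokens // tokens_per_word


if __name__ == "__main__":
    ratio = length_ratio(
        SEQUENCE_LENGTHS, "GPT-2 (English BPE)", "XLM-R (Multilingual Unigram)"
    )
    print(f"GPT-2 vs XLM-R: {ratio:.2f}x")
    for name, n in SEQUENCE_LENGTHS.items():
        print(f"{name:30s} {words_per_context(n, ASSUMED_CONTEXT_TOKENS)} words")
```

With the assumed window, 372 such words fit at 11 tokens each and 1365 at 3 tokens each. A single word is a very small sample, so these figures only illustrate the direction of the effect.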

### Interpretation

This table demonstrates a critical challenge and evolution in NLP for morphologically rich languages like Arabic. The data shows that tokenizer design has a profound impact on computational efficiency and, by extension, model performance.

* **The Problem:** Early or English-centric tokenizers (GPT-2, LLaMA 1) are highly inefficient for Arabic, exploding a single word into 11 tokens. This wastes context window space and computational resources, potentially harming model understanding.

* **The Solution:** Multilingual tokenizers (XLM-R) and updated model tokenizers (LLaMA 3.x) that are trained on diverse data or designed with linguistic awareness can represent the same word with just 3 tokens. This efficiency gain is crucial for processing long documents and complex sentences in Arabic.

* **Linguistic Insight:** The comparison between the "Morpheme-Aware" tokenizer (5 tokens) and XLM-R/LLaMA 3 (3 tokens) highlights a design choice: whether to prioritize strict linguistic morpheme boundaries or optimize for statistical efficiency in subword segmentation. Both are valid, but the latter yields shorter sequences.

* **Broader Implication:** The progression from LLaMA 1 to LLaMA 3.x reflects the NLP field's growing emphasis on robust multilingual support. For practitioners, this underscores the importance of selecting or verifying the tokenizer when working with non-English languages, as it is a foundational component affecting all downstream tasks.
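One way to act on the advice about verifying a tokenizer is to measure its average tokens per word (often called fertility) on a sample of the target language. The sketch below is a minimal, library-free version: `fertility` and `compare` are hypothetical helpers, and the two stand-in tokenizers are placeholders for illustration, not the models in the table.

```python
from typing import Callable, Iterable

# Any function that maps a string to a list of token strings.
Tokenize = Callable[[str], list[str]]


def fertility(tokenize: Tokenize, words: Iterable[str]) -> float:
    """Average number of tokens produced per word."""
    words = list(words)
    if not words:
        raise ValueError("need at least one word")
    return sum(len(tokenize(w)) for w in words) / len(words)


# Stand-in tokenizers for illustration only.
def char_tokenizer(word: str) -> list[str]:
    """Worst case: one token per character."""
    return list(word)


def whole_word_tokenizer(word: str) -> list[str]:
    """Best case: the word is a single vocabulary entry."""
    return [word]


def compare(tokenizers: dict[str, Tokenize], words: list[str]) -> dict[str, float]:
    """Fertility of each named tokenizer on the same word sample."""
    return {name: fertility(tok, words) for name, tok in tokenizers.items()}


if __name__ == "__main__":
    sample = ["وسيعرفونها"]
    print(compare({"char": char_tokenizer, "word": whole_word_tokenizer}, sample))
```

With the Hugging Face `transformers` library installed, the `tokenize` method of a tokenizer loaded through `AutoTokenizer.from_pretrained` takes a string and returns a list of token strings, so it should fit the `Tokenize` shape here. Which checkpoints are available, and whether access to them is gated, is outside what this sketch assumes.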


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Table: Tokenizer Performance on Arabic Text "وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف"

### Overview

This table compares five NLP tokenizers on the Arabic text "وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف و وصف". The comparison covers the tokens each tokenizer produces and the resulting sequence length.

### Components/Axes

- **Columns**:

1. **Tokenizer**: Names of the tokenizers used.

2. **Tokens Produced for "وصف"**: Tokenized output for the input text.

3. **Sequence Length**: Number of tokens generated.

### Detailed Analysis

| Tokenizer | Tokens Produced for "وصف" | Sequence Length |
|---|---|---|
| GPT-2 (English BPE) | ["وصف", "و", "وصف", "و", "وصف", "و", "وصف", "و", "وصف", "و", "وصف"] | 11 |
| XLM-R (Multilingual Unigram) | ["وصف", "وصف", "وصف"] | 3 |
| LLaMA 1 | ["وصف", "و", "وصف", "و", "وصف", "و", "وصف", "و", "وصف", "و", "وصف"] | 11 |
| LLaMA 3.1 / LLaMA 3.2 | ["وصف", "وصف", "وصف"] | 3 |
| Morpheme-Aware Tokenizer | ["وصف", "وصف", "وصف", "وصف", "وصف"] | 5 |

### Key Observations

1. **GPT-2 (English BPE)** and **LLaMA 1** produce identical token sequences with a sequence length of 11. In this reading every token is a whole word (`وصف` or `و`), so the splits fall on word boundaries rather than inside words.

2. **XLM-R (Multilingual Unigram)** and **LLaMA 3.1/3.2** achieve the shortest sequence length (3 tokens), indicating aggressive merging of subwords or morphemes.

3. **Morpheme-Aware Tokenizer** produces 5 tokens, balancing between raw word-level tokenization and subword merging. Its tokens are all "وصف", suggesting a focus on root morphemes.

### Interpretation

- **Efficiency Trade-offs**:

- GPT-2 and LLaMA 1 prioritize granularity, which may be beneficial for tasks requiring precise word-level analysis but increases computational load.

- XLM-R and LLaMA 3.1/3.2 optimize for brevity, reducing sequence length at the cost of losing some morphological detail.

- The Morpheme-Aware Tokenizer strikes a middle ground, potentially useful for tasks requiring morphological awareness without excessive tokenization.

- **Tokenizer Behavior**:

- The repetition of "وصف" (description) in all tokenized outputs confirms the input text's repetitive structure.

- The use of "و" (and) as a separate token in GPT-2 and LLaMA 1 highlights their tendency to split conjunctions, whereas XLM-R and LLaMA 3.1/3.2 merge them into the preceding word.

- **Practical Implications**:

- Shorter sequence lengths (XLM-R, LLaMA 3.1/3.2) may improve model efficiency but could obscure nuanced linguistic patterns.

- The Morpheme-Aware Tokenizer’s approach might enhance performance in low-resource languages with rich morphology, though its 5-token output for 11 words suggests potential over-merging.

This analysis underscores the importance of tokenizer selection based on task requirements, balancing between granularity and efficiency.
