## System Architecture Diagram: Memory-Augmented Parallel Processing Pipeline

### Overview

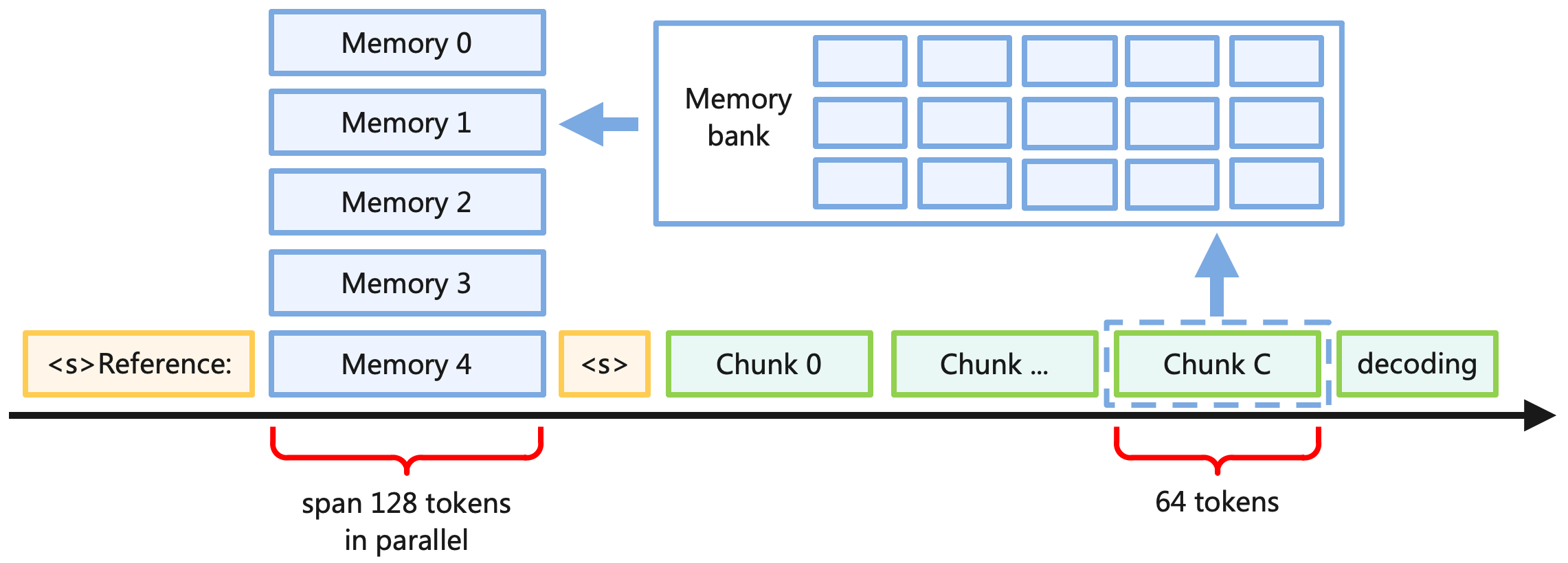

The diagram shows a memory-augmented processing pipeline. A "Reference" input is processed in parallel against multiple memory slots, followed by a sequential, chunk-based decoding stage that interacts with a larger "Memory bank." Color-coding and spatial arrangement distinguish the parallel phase from the sequential one.

### Components/Axes

The diagram is organized into three main spatial regions:

1. **Top Region (Memory Bank & Slots):**

* **Memory bank:** A large, light-blue rectangular container positioned in the top-right quadrant. It contains a 3x5 grid of 15 smaller, empty, light-blue rectangular slots.

* **Memory Slots:** A vertical stack of five light-blue rectangles on the top-left, labeled from top to bottom:

* `Memory 0`

* `Memory 1`

* `Memory 2`

* `Memory 3`

* `Memory 4`

* **Relationship Arrow:** A solid blue arrow points from the `Memory bank` leftwards to the `Memory 1` slot, indicating a data flow or retrieval operation.

2. **Bottom Region (Processing Timeline):**

* A thick black horizontal arrow runs across the bottom, representing a timeline or processing sequence from left to right.

* **Reference Input (Left):** A yellow-outlined box labeled `<s>Reference:` is positioned at the start of the timeline.

* **Parallel Processing Span:** A red curly brace underneath the timeline spans from the `Reference` box to the `Memory 4` slot. The annotation below it reads: `span 128 tokens in parallel`.

* **Chunk Sequence (Right):** Following the parallel span, a series of boxes is placed sequentially on the timeline. All are green-outlined except the leading `<s>` marker:

* `<s>` (a yellow-outlined box, matching the reference marker)

* `Chunk 0`

* `Chunk ...`

* `Chunk C` (This box is highlighted with a dashed blue outline)

* `decoding`

* **Chunk Interaction:** A solid blue arrow points upwards from the `Chunk C` box to the `Memory bank`, indicating that this specific chunk interacts with or retrieves data from the memory bank.

* **Chunk Size Annotation:** A second red curly brace underneath the timeline spans the `Chunk C` and `decoding` boxes. The annotation below it reads: `64 tokens`.
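The components listed above can be captured as plain data, which makes the counts and annotations easy to check. This is a minimal sketch, not something the diagram specifies: the class names `MemoryBank` and `DiagramLayout` are hypothetical, and reading the 3x5 grid as 3 rows by 5 columns is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryBank:
    """Hypothetical model of the large memory bank (assumed 3 rows x 5 columns)."""
    rows: int = 3
    cols: int = 5
    slots: list = field(init=False)

    def __post_init__(self):
        # The diagram draws every slot empty, so each cell starts as None.
        self.slots = [[None] * self.cols for _ in range(self.rows)]

    @property
    def capacity(self) -> int:
        return self.rows * self.cols


@dataclass
class DiagramLayout:
    """Hypothetical container for the labels and annotations in the diagram."""
    bank: MemoryBank = field(default_factory=MemoryBank)
    memory_slots: list = field(
        default_factory=lambda: [f"Memory {i}" for i in range(5)]
    )
    timeline: list = field(
        default_factory=lambda: [
            "<s>Reference:", "<s>", "Chunk 0", "Chunk ...", "Chunk C", "decoding",
        ]
    )
    parallel_span_tokens: int = 128  # "span 128 tokens in parallel"
    chunk_span_tokens: int = 64      # "64 tokens"


layout = DiagramLayout()
print(layout.bank.capacity, layout.memory_slots)
```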

### Detailed Analysis

* **Color Coding:**

* **Light Blue:** Used for all memory-related components (`Memory bank`, `Memory 0-4` slots).

* **Yellow:** Used for sequence start markers (`<s>Reference:`, `<s>`).

* **Green:** Used for the boxes in the sequential processing phase (`Chunk 0`, `Chunk ...`, `Chunk C`, `decoding`).

* **Red:** Used for annotations describing token spans.

* **Black:** Used for the main timeline arrow.

* **Spatial Flow:** The process flows from left to right along the timeline. The initial `Reference` and the five `Memory` slots are processed in parallel (as indicated by the brace). The process then shifts to a sequential, chunk-by-chunk phase (`Chunk 0` to `decoding`).

* **Key Relationships:**

1. The `Memory bank` feeds data into the parallel processing stage (arrow to `Memory 1`).

2. The sequential processing stage, specifically `Chunk C`, feeds back into or queries the `Memory bank` (arrow from `Chunk C`).

3. The parallel phase is annotated with a `128 tokens` span, twice the `64 tokens` span annotated for the final chunk and decoding step in the sequential phase.
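The token annotations suggest simple chunking arithmetic. The sketch below assumes that `64 tokens` is a fixed chunk size, which the diagram does not state outright. The function name `split_into_chunks` and the 200-token input are hypothetical.

```python
def split_into_chunks(tokens, chunk_size=64):
    """Split a token list into consecutive chunks of at most chunk_size tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


# Hypothetical input: 200 placeholder token ids.
tokens = list(range(200))
chunks = split_into_chunks(tokens)

# Index of the last chunk, playing the role of "Chunk C" in the diagram.
last_chunk_index = len(chunks) - 1
print([len(chunk) for chunk in chunks], last_chunk_index)
```

Under this assumption the last chunk may be shorter than 64 tokens whenever the sequence length is not a multiple of the chunk size.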

### Key Observations

1. **Hybrid Processing Model:** The system combines parallel processing of a reference against multiple memory slots with a subsequent sequential, chunk-based decoding process.

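2. **Asymmetric Memory Interaction:** The `Memory bank` is connected to the pipeline in both directions, but at different stages: one arrow runs from the bank to the parallel stage (`Memory 1`), and another runs from the sequential stage (`Chunk C`) up to the bank, which may represent either a write or a query.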

3. **Token Span Discrepancy:** The parallel phase operates on a 128-token span, while the annotated sequential phase (specifically the final chunk and decoding) is associated with a 64-token span, suggesting a reduction in context window or a more focused operation during decoding.

4. **Highlighted Element:** `Chunk C` is the only box with a dashed blue outline, which suggests it is the point where the sequential processing connects to the memory bank.

### Interpretation

This diagram illustrates a memory-augmented neural network architecture, plausibly for tasks such as language modeling or machine translation. The design suggests a two-stage process:

1. **Contextualization Stage (Parallel):** A source "Reference" (e.g., a sentence to translate) is compared or attended against a set of memory slots (`Memory 0-4`) in parallel. This could represent retrieving relevant context or information from a fixed-size memory. The 128-token span indicates this stage handles a relatively broad context.

2. **Generation/Decoding Stage (Sequential):** The system then generates output sequentially in chunks. The interaction between `Chunk C` and the `Memory bank` implies that during generation, the model can dynamically access a larger, external memory store (`Memory bank`) to inform its predictions, moving beyond the limited slots used in the first stage. The 64-token annotation may indicate the size of the generation window or the granularity at which memory is accessed during decoding.
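The two stages above can be sketched as a toy pipeline. Everything here is an assumption made for illustration: `score` is a hypothetical token-overlap stand-in for whatever attention or comparison the real system uses, and the read-then-write behavior at each chunk is only one possible reading of the arrow between `Chunk C` and the `Memory bank`.

```python
from concurrent.futures import ThreadPoolExecutor


def score(query, memory):
    """Toy relevance score: number of distinct tokens shared by the two lists."""
    return len(set(query) & set(memory))


def parallel_stage(reference, memory_slots):
    """Score the reference against every memory slot independently."""
    # Slots do not depend on each other, so the scoring can run concurrently.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda slot: score(reference, slot), memory_slots))


def sequential_stage(chunks, bank):
    """Process chunks one at a time, reading from and writing to the bank."""
    outputs = []
    for chunk in chunks:
        # Read: retrieve the bank entry with the highest overlap (empty if none).
        best = max(bank, key=lambda entry: score(chunk, entry), default=[])
        outputs.append((chunk, best))
        # Write: store the chunk so later chunks can retrieve it.
        bank.append(chunk)
    return outputs


reference = ["a", "b", "c"]
memory_slots = [["a"], ["a", "b"], ["x"], ["c", "b", "a"], []]
scores = parallel_stage(reference, memory_slots)

bank = [["a", "b"]]
decoded = sequential_stage([["a", "z"], ["z", "q"]], bank)
print(scores, decoded)
```

The second chunk retrieves the first chunk from the bank, which shows how a sequential stage can reuse what earlier chunks stored.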

The architecture appears to balance efficient parallel processing of the input with memory-aware generation of the output, which would help maintain long-range dependencies in sequence-to-sequence tasks. The separation of a small set of memory slots (`Memory 0-4`) from a larger bank resembles the cache-plus-backing-store pattern often used to reduce memory access latency, although the diagram itself does not state this purpose.
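A small-slots-over-large-bank arrangement can be illustrated with a two-tier lookup. This is a sketch under stated assumptions, not the diagram's actual mechanism: the class `TieredMemory`, the least-recently-used eviction policy, and the `entry-N` values are all hypothetical. Only the sizes (5 slots, 15 bank entries) come from the diagram.

```python
from collections import OrderedDict


class TieredMemory:
    """Hypothetical two-tier store: a few fast slots in front of a larger bank."""

    def __init__(self, bank, num_slots=5):
        self.bank = dict(bank)       # large backing store (15 entries in the diagram)
        self.slots = OrderedDict()   # small working set (5 slots in the diagram)
        self.num_slots = num_slots

    def get(self, key):
        if key in self.slots:
            # Hit: mark the slot as most recently used.
            self.slots.move_to_end(key)
            return self.slots[key]
        # Miss: load from the bank (raises KeyError if the key is absent).
        value = self.bank[key]
        self.slots[key] = value
        if len(self.slots) > self.num_slots:
            # Evict the least recently used slot (assumed policy).
            self.slots.popitem(last=False)
        return value


memory = TieredMemory({i: f"entry-{i}" for i in range(15)})
for key in range(6):
    memory.get(key)
print(list(memory.slots))
```

After six distinct lookups the first key has been evicted, so only five entries remain in the slots while all fifteen stay available in the bank.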