## Diagram: Reasoning Chain Evaluation Process

### Overview

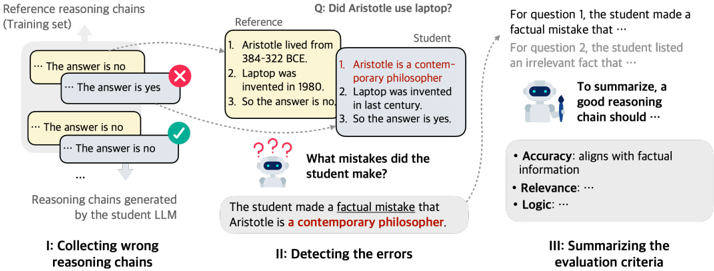

The image is a technical diagram illustrating a three-stage process for evaluating the reasoning chains generated by a student Large Language Model (LLM). It visually explains how errors are identified and summarized. The diagram is divided into three sequential sections labeled I, II, and III, flowing from left to right.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Left Region (I: Collecting wrong reasoning chains):** Shows a "Reference reasoning chains (Training set)" box and a "Reasoning chains generated by the student LLM" box. Arrows connect these to example reasoning chains.

2. **Center Region (II: Detecting the errors):** Features a "Reference" box and a "Student" box side-by-side, with a question above them. Below, a robot icon and a text box explain the detected error.

3. **Right Region (III: Summarizing the evaluation criteria):** Contains a text block explaining the student's mistakes and a final summary box with bullet points.

**Key Text Labels & Annotations:**

* **Top Center Question:** "Q: Did Aristotle use laptop?"

* **Section I Labels:** "Reference reasoning chains (Training set)", "Reasoning chains generated by the student LLM".

* **Section II Labels:** "Reference", "Student", "What mistakes did the student make?".

* **Section III Labels:** "To summarize, a good reasoning chain should ...".

* **Evaluation Criteria Bullets:** "Accuracy: ...", "Relevance: ...", "Logic: ...".

* **Visual Indicators:** A red "X" marks an incorrect reasoning chain. A green checkmark (✓) marks a correct reasoning chain.

### Detailed Analysis / Content Details

**Section I: Collecting wrong reasoning chains**

* **Reference Reasoning Chain (Correct):**

    1. Aristotle lived from 384–322 BCE.

    2. Laptop was invented in 1980.

    3. So the answer is no.

* **Student LLM Reasoning Chains (Examples):**

    * Chain 1 (Marked with Red X): "... The answer is no ..." / "... The answer is yes ..." (Inconsistent).

    * Chain 2 (Marked with Green ✓): "... The answer is no ..." / "... The answer is no ..." (Consistent, but may be based on flawed logic).
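The collection step can be sketched in Python. The diagram does not say how a chain is judged wrong, so this sketch assumes a chain counts as wrong when its final yes/no verdict differs from the reference verdict. The names `final_answer` and `collect_wrong_chains`, the dictionary keys, and the second student chain are hypothetical.

```python
import re
from typing import Optional

# Assumption: each chain states its verdict as "the answer is yes/no".
ANSWER_RE = re.compile(r"the answer is (yes|no)", re.IGNORECASE)


def final_answer(chain: str) -> Optional[str]:
    """Return the last yes/no verdict stated in a chain, or None if absent."""
    verdicts = ANSWER_RE.findall(chain)
    return verdicts[-1].lower() if verdicts else None


def collect_wrong_chains(examples: list[dict]) -> list[dict]:
    """Keep examples whose student verdict differs from the reference verdict."""
    return [
        ex for ex in examples
        if final_answer(ex["student"]) != final_answer(ex["reference"])
    ]


reference = (
    "Aristotle lived from 384–322 BCE. "
    "Laptop was invented in 1980. So the answer is no."
)
examples = [
    {   # Student chain taken from Section II of the diagram.
        "question": "Did Aristotle use laptop?",
        "reference": reference,
        "student": "Aristotle is a contemporary philosopher. "
                   "Laptop was invented in last century. So the answer is yes.",
    },
    {   # Hypothetical student chain that reaches the reference verdict.
        "question": "Did Aristotle use laptop?",
        "reference": reference,
        "student": "Aristotle lived in ancient Greece. So the answer is no.",
    },
]

wrong_chains = collect_wrong_chains(examples)
print(len(wrong_chains))
```

Comparing only the final verdict is a deliberately crude filter: a chain that reaches the right answer through flawed steps would pass it, which is the caveat noted for Chain 2.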

**Section II: Detecting the errors**

* **Reference Box:** Contains the same correct 3-step reasoning as above.

* **Student Box:** Contains a flawed 3-step reasoning chain:

    1. **Aristotle is a contemporary philosopher.** (This line is highlighted in red text).

    2. Laptop was invented in last century.

    3. So the answer is yes.

* **Error Identification Text:** "The student made a **factual mistake** that Aristotle is a **contemporary philosopher**."
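The comparison in this section can be approximated by assembling the question, both chains, and the closing question into one prompt. This is a minimal sketch: only the labels "Reference", "Student", and "What mistakes did the student make?" come from the diagram, while the function name `build_detection_prompt` and the exact layout are assumptions. No model call is shown, since the diagram does not identify the model behind the robot icon.

```python
def build_detection_prompt(question: str,
                           reference: list[str],
                           student: list[str]) -> str:
    """Lay out the question, both chains, and the error-detection question."""
    lines = [f"Q: {question}", "", "Reference:"]
    lines += [f"{i}. {step}" for i, step in enumerate(reference, start=1)]
    lines += ["", "Student:"]
    lines += [f"{i}. {step}" for i, step in enumerate(student, start=1)]
    lines += ["", "What mistakes did the student make?"]
    return "\n".join(lines)


prompt = build_detection_prompt(
    "Did Aristotle use laptop?",
    reference=[
        "Aristotle lived from 384–322 BCE.",
        "Laptop was invented in 1980.",
        "So the answer is no.",
    ],
    student=[
        "Aristotle is a contemporary philosopher.",
        "Laptop was invented in last century.",
        "So the answer is yes.",
    ],
)
print(prompt)
```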

**Section III: Summarizing the evaluation criteria**

* **Explanatory Text:** "For question 1, the student made a factual mistake that ... For question 2, the student listed an irrelevant fact that ..."

* **Summary Box:** "To summarize, a good reasoning chain should ..."

    * **Accuracy:** aligns with factual information

    * **Relevance:** ... (elided in the diagram)

    * **Logic:** ... (elided in the diagram)
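The summarization step appears to join the per-question error descriptions and end with the lead-in "To summarize, a good reasoning chain should". The sketch below builds such a prompt. The function name `build_summary_prompt` is hypothetical, and the second mistake keeps the "..." exactly as the diagram elides it.

```python
def build_summary_prompt(mistakes: list[str]) -> str:
    """Prefix each detected mistake with its question number, then add the lead-in."""
    parts = []
    for i, mistake in enumerate(mistakes, start=1):
        # Lowercase the first letter so the sentence reads on after the prefix.
        parts.append(f"For question {i}, {mistake[:1].lower()}{mistake[1:]}")
    parts.append("To summarize, a good reasoning chain should")
    return " ".join(parts)


summary_prompt = build_summary_prompt([
    "The student made a factual mistake that Aristotle is a contemporary philosopher.",
    "The student listed an irrelevant fact that ...",  # elided in the diagram
])
print(summary_prompt)
```

Ending the prompt on an unfinished sentence leaves the model to complete the list of criteria, which matches the open-ended "should ..." shown in the summary box.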

### Key Observations

1. **Error Type:** The primary error highlighted is a **factual inaccuracy** (claiming Aristotle is contemporary), which directly leads to an incorrect conclusion.

2. **Process Flow:** The diagram shows a clear pipeline: 1) Collect reasoning outputs, 2) Compare against a reference to detect specific errors (factual, relevance, logical), 3) Synthesize the findings into general evaluation criteria.

3. **Visual Coding:** Color is used strategically: red for errors/incorrect elements, green for correct elements, and black for neutral explanatory text.

4. **Spatial Layout:** The reference chain is consistently placed to the left of or above the student's chain for direct comparison. The final summary is isolated on the right as the output of the process.

### Interpretation

This diagram demonstrates a methodology for **automated or semi-automated evaluation of LLM reasoning**. It moves beyond simple answer correctness to analyze the *process* of reasoning.

* **What it suggests:** The system evaluates reasoning chains on three dimensions: **Accuracy** (factual correctness), **Relevance** of the facts cited, and **Logic** (coherence of the argument). In the example shown, a single factual error is enough to produce the wrong answer.

* **Relationships:** The "Reference" serves as the ground truth. The "Student" chain is the artifact under test. The evaluation criteria in Section III are derived from the types of errors detected in Section II.

* **Underlying Message:** The goal is not just to mark an answer wrong, but to **diagnose why** the reasoning failed. This is crucial for improving LLM training, as it pinpoints whether the model states incorrect facts (accuracy), cites facts that do not bear on the question (relevance), or cannot structure arguments properly (logic). The process transforms specific error instances into generalizable quality criteria for reasoning.