## Flowchart: Process for Evaluating Reasoning Chains in Student Responses

### Overview

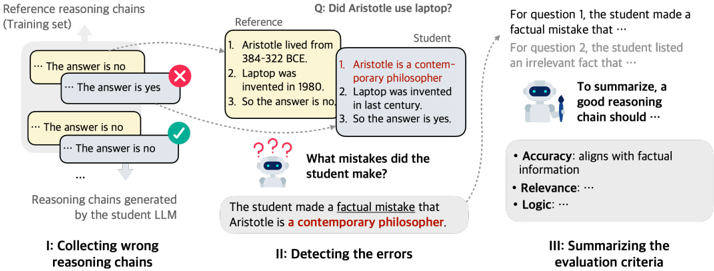

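The image depicts a three-stage flowchart for analyzing incorrect reasoning chains produced by a "student" and deriving evaluation criteria from them. Robot icons in the second and third stages suggest that error detection and summarization are carried out by a large language model (LLM). The three stages are collecting wrong reasoning chains, detecting their errors, and summarizing the evaluation criteria.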

### Components

1. **Sections**:

   - **I: Collecting wrong reasoning chains** (left)

   - **II: Detecting the errors** (center)

   - **III: Summarizing the evaluation criteria** (right)

2. **Visual Elements**:

   - Text boxes with labels like "Reference reasoning chains (Training set)", "Reference", "Student", and "What mistakes did the student make?"

   - Arrows indicating flow direction (left → center → right)

   - Icons:

     - Green checkmark (correct answer)

     - Red X (incorrect answer)

     - Robot with question marks (error detection)

     - Robot holding a paintbrush (summarization)

3. **Text Content**:

   - **Question**: "Did Aristotle use laptop?"

   - **Reference Answer**:

     1. Aristotle lived from 384-322 BCE.

     2. Laptop was invented in 1980.

     3. So the answer is no.

   - **Student's Incorrect Reasoning**:

     1. Aristotle is a contemporary philosopher.

     2. Laptop was invented in the last century.

     3. So the answer is yes.

   - **Error Identification**: "The student made a factual mistake that Aristotle is a contemporary philosopher."

   - **Evaluation Criteria**:

     - Accuracy: aligns with factual information

     - Relevance: ...

     - Logic: ...

### Detailed Analysis

1. **Section I: Collecting wrong reasoning chains**

   - Shows reference reasoning chains drawn from the training set.

   - Displays student-generated chains whose final answers conflict (e.g., "The answer is yes" vs. "The answer is no").

   - Marks the incorrect chains with a red X.

2. **Section II: Detecting the errors**

   - Compares the student chain with the reference chain and asks "What mistakes did the student make?"

   - Explicitly calls out the error: "Aristotle is a contemporary philosopher", which contradicts step 1 of the reference answer (Aristotle lived from 384-322 BCE).

3. **Section III: Summarizing the evaluation criteria**

   - Lists three criteria for valid reasoning chains:

     - Accuracy (factual alignment, as stated in the figure)

     - Relevance (definition elided in the figure; presumably contextual appropriateness)

     - Logic (definition elided in the figure; presumably coherent structure)
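The three stages above can be sketched in code. This is a minimal illustration under stated assumptions, not the method behind the figure: the `Chain` class and the functions `collect_wrong_chains`, `detection_prompt`, and `summary_prompt` are hypothetical names, and the model calls themselves are left out so that the sketch only builds the text a model would be shown.

```python
from dataclasses import dataclass


@dataclass
class Chain:
    """A reasoning chain: a question, its steps, and a final answer."""
    question: str
    steps: list[str]
    answer: str


def collect_wrong_chains(references, students):
    """Stage I: pair each student chain with its reference, keep mismatches."""
    ref_by_question = {ref.question: ref for ref in references}
    wrong = []
    for student in students:
        ref = ref_by_question.get(student.question)
        if ref is not None and student.answer != ref.answer:
            wrong.append((ref, student))
    return wrong


def _numbered(steps):
    """Render steps as a numbered list, one per line."""
    return "\n".join(f"{i}. {step}" for i, step in enumerate(steps, 1))


def detection_prompt(ref, student):
    """Stage II: build the text for an error-detecting model (assumed format)."""
    return (
        f"Question: {ref.question}\n"
        f"Reference:\n{_numbered(ref.steps)}\n"
        f"Student:\n{_numbered(student.steps)}\n"
        "What mistakes did the student make?"
    )


def summary_prompt(error_descriptions):
    """Stage III: ask for criteria that cover the detected mistakes."""
    listed = "\n".join(f"- {error}" for error in error_descriptions)
    return f"Detected mistakes:\n{listed}\nSummarize these into evaluation criteria."


# The example from the figure.
reference = Chain(
    "Did Aristotle use laptop?",
    ["Aristotle lived from 384-322 BCE.",
     "Laptop was invented in 1980.",
     "So the answer is no."],
    "no",
)
student = Chain(
    "Did Aristotle use laptop?",
    ["Aristotle is a contemporary philosopher.",
     "Laptop was invented in the last century.",
     "So the answer is yes."],
    "yes",
)

pairs = collect_wrong_chains([reference], [student])
print(detection_prompt(*pairs[0]))
```

Comparing only final answers in Stage I is a simplification chosen for the sketch; it mirrors the check and X marks in the figure but would miss chains that reach the right answer through faulty steps.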

### Key Observations

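- The flowchart emphasizes **factual accuracy**: it is the only criterion whose definition is spelled out, and the one detected error is a factual error about history.

- The student's reasoning chain contains a **temporal error**: it treats Aristotle as a contemporary philosopher. The second step ("Laptop was invented in the last century") is vaguer than the reference but consistent with it, since 1980 falls in the last century. The error-identification box accordingly flags only the first step.

- Only **accuracy** is defined in the figure; relevance and logic are elided ("..."). The figure therefore does not establish a ranking among the three criteria, although listing accuracy first may suggest that factual correctness is treated as foundational.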

### Interpretation

This flowchart outlines a framework for evaluating reasoning chains, such as those generated by LLMs, through structured error analysis. The process has three steps:

1. Collecting correct (reference) and incorrect (student) reasoning chains

2. Identifying the specific errors in each incorrect chain

3. Summarizing those errors into evaluation criteria

The example demonstrates how a single **factual error about time** (placing Aristotle in the present day) can cascade into an incorrect conclusion even when the remaining steps are plausible, underscoring the need for rigorous fact-checking in automated reasoning systems. The emphasis on accuracy is consistent with the view that premises should be verified before conclusions are drawn from them.
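The cascade from a wrong premise to a wrong conclusion can be illustrated with a short sketch. The function `could_have_used` is a hypothetical helper, and because the student's chain gives no date for Aristotle, the year 2020 is an assumed stand-in for "contemporary"; the 1980 date is taken from the figure's reference chain as given.

```python
def could_have_used(death_year, invention_year):
    """Return True if the invention existed before the person died.

    Years are signed integers: BCE years are negative, CE years positive.
    """
    return invention_year <= death_year


LAPTOP_INVENTED = 1980  # date as given in the figure's reference chain

# Reference premise: Aristotle lived from 384-322 BCE, so he died in -322.
reference_answer = could_have_used(death_year=-322, invention_year=LAPTOP_INVENTED)

# Student premise: "Aristotle is a contemporary philosopher". The figure gives
# no date, so 2020 is a hypothetical stand-in for "alive in recent times".
student_answer = could_have_used(death_year=2020, invention_year=LAPTOP_INVENTED)

print("reference:", "yes" if reference_answer else "no")  # reference: no
print("student:", "yes" if student_answer else "no")      # student: yes
```

The comparison is identical in both cases; only the premise about Aristotle differs, and that alone flips the answer from "no" to "yes".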