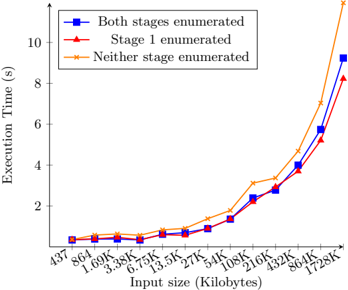

## Line Chart: Execution Time vs. Input Size for Different Enumeration Strategies

### Overview

This is a line chart comparing the execution time (in seconds) of three different computational strategies as the input size (in Kilobytes) increases. The chart demonstrates how performance scales with input size for methods that enumerate different stages of a process.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **Y-Axis:** Labeled "Execution Time (s)". Scale is linear, ranging from 0 to 10 seconds, with major tick marks every 2 seconds.

* **X-Axis:** Labeled "Input size (Kilobytes)". The scale is logarithmic (base 2), with labeled tick marks at: 437, 864, 1.69K, 3.3K, 6.63K, 13.5K, 27K, 54K, 108K, 216K, 432K, 864K, 1728K.

* **Legend:** Located in the top-left corner of the chart area. It defines three data series:

1. **Blue line with square markers (■):** "Both stages enumerated"

2. **Red line with triangle markers (▲):** "Stage I enumerated"

3. **Orange line with cross markers (×):** "Neither stage enumerated"

### Detailed Analysis

The chart plots three data series, each showing an increasing, non-linear trend. The execution time grows slowly for small input sizes and then accelerates dramatically after approximately 108K.

**1. "Neither stage enumerated" (Orange line, × markers):**

* **Trend:** This line shows the most severe exponential growth. It starts as the slowest method for small inputs but becomes the slowest (highest execution time) by a significant margin for large inputs.

* **Approximate Data Points:**

* Input ~437B to 27KB: Execution time is near 0s.

* Input 54KB: ~0.5s

* Input 108KB: ~1.5s

* Input 216KB: ~3.2s

* Input 432KB: ~5.0s

* Input 864KB: ~8.0s

* Input 1728KB: ~10.0s (exceeds the chart's upper bound)

**2. "Both stages enumerated" (Blue line, ■ markers):**

* **Trend:** This line shows strong exponential growth but is consistently faster than the "Neither stage enumerated" method for larger inputs. It tracks closely with, but is slightly slower than, the "Stage I enumerated" method.

* **Approximate Data Points:**

* Input ~437B to 27KB: Execution time is near 0s.

* Input 54KB: ~0.4s

* Input 108KB: ~1.2s

* Input 216KB: ~2.5s

* Input 432KB: ~4.0s

* Input 864KB: ~6.0s

* Input 1728KB: ~9.2s

**3. "Stage I enumerated" (Red line, ▲ markers):**

* **Trend:** This line demonstrates the best performance (lowest execution time) for larger inputs, showing a similar exponential curve but with a slightly lower slope than the blue line.

* **Approximate Data Points:**

* Input ~437B to 27KB: Execution time is near 0s.

* Input 54KB: ~0.3s

* Input 108KB: ~1.0s

* Input 216KB: ~2.2s

* Input 432KB: ~3.8s

* Input 864KB: ~5.5s

* Input 1728KB: ~8.5s

### Key Observations

1. **Performance Crossover:** For very small inputs (< ~27KB), all three methods have negligible and nearly identical execution times. The performance differences become pronounced only as input size grows.

2. **Exponential Scaling:** All three strategies exhibit exponential time complexity relative to input size, as evidenced by the near-linear rise on this semi-log plot.

3. **Clear Performance Hierarchy:** For inputs larger than ~54KB, a consistent performance order is established: "Stage I enumerated" (fastest) < "Both stages enumerated" < "Neither stage enumerated" (slowest).

4. **Divergence Rate:** The gap between the best ("Stage I") and worst ("Neither") methods widens significantly as input size increases. At 1728KB, the "Neither" method is approximately 18% slower than the "Stage I" method.

### Interpretation

This chart likely illustrates the performance trade-offs in a compiler, query optimizer, or similar system where "enumerating stages" refers to performing optimizations or analysis upfront.

* **The data suggests** that performing some enumeration ("Stage I") provides a significant and growing performance benefit as problem size increases compared to doing no enumeration ("Neither").

* **The relationship** between the lines indicates that the overhead of enumerating *both* stages is slightly higher than enumerating only Stage I, but it is still vastly preferable to enumerating nothing for large inputs. This implies that the cost of the second stage's enumeration is not fully offset by its benefits in this specific workload, or that Stage I captures the most critical optimizations.

* **The notable anomaly** is the very tight clustering of all three lines below 27KB. This indicates that for small problems, the fixed overhead of any enumeration strategy dominates the variable cost, making the choice of strategy irrelevant. The strategic choice only matters for scaling to larger datasets.

* **In essence, the chart argues for the necessity of at least partial (Stage I) enumeration to achieve scalable performance,** with full enumeration being a slightly less optimal but still effective choice. Doing no enumeration leads to poor scalability.