## Diagram: Illustration of EWC, L2, and No Penalty in Relation to Error Landscapes

### Overview

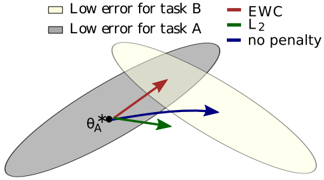

The diagram contrasts Elastic Weight Consolidation (EWC), L2 regularization, and no penalty for a model that has learned task A and is then trained on task B. It shows two overlapping regions of low error, one per task, along with three arrows indicating the direction of parameter updates under each scheme.

### Components/Axes

* **Regions:**

  * Light gray region: "Low error for task A"

  * Light yellow region: "Low error for task B"

* **Arrows:**

  * Red arrow: "EWC"

  * Green arrow: "L2"

  * Blue arrow: "no penalty"

* **Point:**

  * Black asterisk labeled "ΘA*"

### Detailed Analysis

The diagram shows two overlapping elliptical regions, representing areas of low error for task A (light gray) and task B (light yellow). Their intersection is the part of parameter space where both tasks can be performed with relatively low error.

The black asterisk labeled "ΘA*" is located within the "Low error for task A" region, near the intersection with the "Low error for task B" region. This point likely represents the parameters that are optimal for task A, found before task B is considered.

The arrows emanating from "ΘA*" represent the direction of parameter updates under different regularization schemes:

* **EWC (Red Arrow):** The red arrow points towards the intersection of the two regions, suggesting that EWC encourages parameter updates that maintain performance on both tasks.

* **L2 (Green Arrow):** The green arrow points in a direction that compromises between task A and task B, but it heads less directly towards the intersection than the EWC arrow.

* **No Penalty (Blue Arrow):** The blue arrow points away from the intersection, suggesting that with no penalty the parameters may drift away from ΘA* while task B is being learned.
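The three arrows can be read as three objectives for training on task B. The diagram itself shows no formulas, so the following is only a sketch under the usual EWC formulation. The symbols are assumptions introduced here: $\mathcal{L}_B$ is the task-B loss, $\lambda$ is a penalty strength, $F_i$ is the diagonal Fisher information of parameter $i$ estimated on task A, and the L2 penalty is assumed to be anchored at $\theta^*_A$ rather than at zero.

```latex
\begin{aligned}
\text{no penalty:}\quad & \mathcal{L}(\theta) = \mathcal{L}_B(\theta) \\
\text{L2:}\quad & \mathcal{L}(\theta) = \mathcal{L}_B(\theta)
    + \frac{\lambda}{2} \sum_i \left(\theta_i - \theta^*_{A,i}\right)^2 \\
\text{EWC:}\quad & \mathcal{L}(\theta) = \mathcal{L}_B(\theta)
    + \frac{\lambda}{2} \sum_i F_i \left(\theta_i - \theta^*_{A,i}\right)^2
\end{aligned}
```

In this form the only difference between the L2 and EWC objectives is the per-parameter weight $F_i$: L2 resists movement equally in every direction, while EWC resists it mainly along the directions that task A is sensitive to.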

### Key Observations

* EWC appears to guide the parameter updates towards the region where both tasks can be performed well.

* L2 regularization, which penalizes movement in every parameter direction equally, yields a compromise between the two tasks.

* With no penalty, nothing ties the parameters to ΘA*, so the model may drift away from the optimal parameters for the initial task.

### Interpretation

The diagram illustrates how different penalties affect learning in a sequential setting, where task B is learned after task A. EWC penalizes changes to the parameters that matter most for the previous task, which encourages the model to find a solution that performs well on both tasks. L2 regularization is a less selective penalty that treats every parameter as equally important, while no penalty can lead to catastrophic forgetting of the initial task. The diagram visually represents the trade-off between maintaining performance on previous tasks and learning new ones.
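To make the trade-off concrete, here is a minimal toy sketch in plain Python. It is not taken from the diagram: both tasks are modelled as axis-aligned quadratic losses in two dimensions, task A's curvature stands in for the Fisher information that EWC would normally estimate, and every number (`THETA_A`, `H_A`, `THETA_B`, `H_B`, `LAM_L2`, `LAM_EWC`) is a hypothetical value chosen for illustration. For quadratics, the minimiser of the task-B loss plus a quadratic penalty has a closed form, so no optimiser is needed.

```python
# Toy 2-D illustration; every number below is an assumption, not read off the diagram.

def quad_loss(theta, centre, curvature):
    """Axis-aligned quadratic loss: 0.5 * sum_i h_i * (theta_i - centre_i)^2."""
    return 0.5 * sum(h * (t - c) ** 2 for t, c, h in zip(theta, centre, curvature))


def train_on_b(theta_a, theta_b, h_b, penalty):
    """Closed-form minimiser of
    loss_B(theta) + 0.5 * sum_i penalty_i * (theta_i - theta_a_i)^2
    for a diagonal quadratic loss_B centred at theta_b with curvature h_b."""
    return [(hb * tb + p * ta) / (hb + p)
            for ta, tb, hb, p in zip(theta_a, theta_b, h_b, penalty)]


THETA_A = [0.0, 0.0]   # parameters found by training on task A (hypothetical)
H_A = [10.0, 0.1]      # task A is sensitive to theta[0], nearly indifferent to theta[1]
THETA_B = [1.0, 3.0]   # optimum of task B (hypothetical)
H_B = [1.0, 1.0]       # task B curvature (hypothetical)
LAM_L2 = 10.0          # assumed L2 strength
LAM_EWC = 1.0          # assumed EWC strength

# Per-parameter penalty weights for each scheme.
schemes = {
    "no penalty": [0.0, 0.0],
    "L2": [LAM_L2, LAM_L2],               # same weight for every parameter
    "EWC": [LAM_EWC * h for h in H_A],    # weight follows task A's sensitivity
}

results = {}
for name, penalty in schemes.items():
    theta = train_on_b(THETA_A, THETA_B, H_B, penalty)
    loss_a = quad_loss(theta, THETA_A, H_A)
    loss_b = quad_loss(theta, THETA_B, H_B)
    results[name] = (loss_a, loss_b)
    print(f"{name:>10}: theta={theta}, loss_A={loss_a:.3f}, loss_B={loss_b:.3f}")
```

With these assumed settings, training with no penalty reaches task B's optimum but incurs a large task-A loss, the strong L2 penalty keeps the task-A loss small but barely reduces the task-B loss, and the EWC-style penalty ends with a low loss on both. A weaker `LAM_L2` would shift the L2 result towards task B, so the outcome for L2 depends on the chosen strength.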