
## Diagram: Catastrophic Forgetting Visualization

### Overview

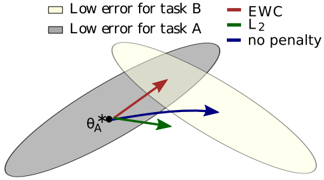

The image is a diagram illustrating catastrophic forgetting in machine learning, specifically how three training strategies (EWC, an L2 penalty, and no penalty) affect the weight updates when a model learns a new task. It uses overlapping ellipses to represent regions of low error for two tasks (A and B) and arrows to show the direction of the weight updates.

### Components/Axes

* **Ellipses:**
  * Light yellow: "Low error for task B"
  * Gray: "Low error for task A"
* **Arrows:**
  * Red: "EWC" (Elastic Weight Consolidation)
  * Dashed red: "L2"
  * Green: "L2"
  * Blue: "no penalty"
* **Label:** θ<sub>A</sub>
* **Origin:** Marked with a black circle.

### Detailed Analysis

The diagram shows the following:

* The ellipses representing "Low error for task A" and "Low error for task B" overlap significantly, indicating that achieving low error on both tasks simultaneously is possible, but requires a specific region of the weight space.

* The black circle at the origin represents a starting point or current weight configuration.

* **EWC (Red Arrow):** The red arrow points diagonally upwards and to the right, indicating a weight update that moves away from the origin and towards the intersection of the two ellipses. It appears to be a constrained update that stays relatively close to the origin.

* **L2 (Dashed Red Arrow):** The dashed red arrow points slightly upwards and to the right, but is shorter than the EWC arrow. It represents a smaller weight update.

* **L2 (Green Arrow):** The green arrow points directly to the right, indicating a weight update that moves primarily in the direction of improving performance on the new task (B) without much consideration for the old task (A).

* **no penalty (Blue Arrow):** The blue arrow points diagonally upwards and to the right, and is the longest arrow, indicating the largest weight update. It moves furthest away from the origin and towards task B, potentially forgetting task A.

* The label θ<sub>A</sub> is placed near the origin. In the EWC literature θ denotes a parameter vector, so the label most plausibly marks the weights found after training on task A (the starting point of the arrows) rather than an angle.

### Key Observations

* The "no penalty" update (blue arrow) results in the largest change in weights and moves furthest away from the region of low error for task A.

* EWC (red arrow) yields a constrained update that heads towards the overlap of the two ellipses, attempting to balance performance on both tasks.

* L2 regularization (dashed red and green arrows) offers intermediate levels of constraint, with the green arrow prioritizing the new task.

* The diagram visually demonstrates how regularization techniques can mitigate catastrophic forgetting by limiting the magnitude and direction of weight updates.

### Interpretation

This diagram illustrates the trade-off between learning new tasks and retaining knowledge of old tasks in machine learning. Catastrophic forgetting occurs when learning a new task causes a significant drop in performance on previously learned tasks. The diagram shows how different regularization techniques attempt to address this problem.

* **EWC** aims to consolidate important weights for the old task, preventing them from being drastically changed during learning of the new task.

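* **L2 regularization** penalizes weight changes uniformly. In continual-learning comparisons of this kind the penalty is usually applied to the distance from the task-A weights, so every weight is held back equally regardless of how much it matters for task A. This preserves some knowledge of the old task but can also restrict learning of the new one.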

* **No penalty** allows the model to freely adapt to the new task, potentially leading to catastrophic forgetting.
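The difference between the three strategies can be written as the extra term each one adds to the task-B loss. The following is a minimal sketch, not the diagram authors' code: the function names, the `lam` strength parameter, and the use of a diagonal Fisher estimate `fisher_diag` as the per-weight importance are assumptions made for illustration.

```python
import numpy as np

def no_penalty(theta, theta_a):
    """No regularization: task B is learned with nothing anchoring task A."""
    return 0.0

def l2_penalty(theta, theta_a, lam=1.0):
    """Uniform quadratic pull back towards the task-A weights."""
    diff = np.asarray(theta, dtype=float) - np.asarray(theta_a, dtype=float)
    return 0.5 * lam * float(np.sum(diff ** 2))

def ewc_penalty(theta, theta_a, fisher_diag, lam=1.0):
    """Quadratic pull weighted by a per-weight importance estimate.

    fisher_diag is assumed to be a diagonal Fisher information estimate
    computed on task A; large entries mark weights that task A depends on.
    """
    diff = np.asarray(theta, dtype=float) - np.asarray(theta_a, dtype=float)
    return 0.5 * lam * float(np.sum(np.asarray(fisher_diag) * diff ** 2))

# Illustrative values only: weight 0 matters a lot for task A, weight 1 barely.
theta_a = np.array([0.0, 0.0])
fisher_diag = np.array([10.0, 0.1])
move_important = np.array([1.0, 0.0])
move_unimportant = np.array([0.0, 1.0])

print(l2_penalty(move_important, theta_a), l2_penalty(move_unimportant, theta_a))
print(ewc_penalty(move_important, theta_a, fisher_diag),
      ewc_penalty(move_unimportant, theta_a, fisher_diag))
```

Under these assumed values the L2 term charges both moves the same amount, while the EWC term makes a move along the important weight far more expensive than a move along the unimportant one, which is why its arrow can bend towards the task-B region without leaving the task-A region.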

The overlap of the two ellipses is the region of weight space where both tasks can be performed well, and the arrows show where each method ends up relative to it. The diagram suggests that EWC is the most effective at staying within the overlapping region, while "no penalty" is the most likely to move outside of it, leading to forgetting. The label θ<sub>A</sub> more plausibly denotes the weights learned for task A than an angle, in which case forgetting is indicated by how far an arrow leaves the gray ellipse, not by an angular measure.
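The geometry described above can be reproduced on a toy problem. The sketch below assumes two weights and quadratic losses for both tasks, so the minimizer of "task-B loss plus penalty" has a closed form. All numbers are hypothetical, and the task-A curvature is used as a stand-in for the importance estimate that EWC would obtain from the Fisher information.

```python
import numpy as np

# Hypothetical two-weight problem; every number below is illustrative.
theta_a = np.array([0.0, 0.0])   # weights after training on task A
theta_b = np.array([1.0, 3.0])   # minimizer of task B on its own
h_a = np.array([10.0, 0.1])      # task-A curvature: steep in w0, flat in w1
h_b = np.array([1.0, 1.0])       # task-B curvature

def task_loss(theta, centre, curvature):
    """Quadratic bowl: 0.5 * sum(curvature * (theta - centre)^2)."""
    return 0.5 * float(np.sum(curvature * (theta - centre) ** 2))

def solve_task_b(stiffness):
    """Minimize task-B loss + 0.5 * sum(stiffness * (theta - theta_a)^2).

    For diagonal quadratics the minimizer is a per-weight weighted average
    of theta_b and theta_a.
    """
    return (h_b * theta_b + stiffness * theta_a) / (h_b + stiffness)

solutions = {
    "no penalty": solve_task_b(np.zeros(2)),  # nothing anchors task A
    "L2": solve_task_b(np.ones(2)),           # same stiffness for every weight
    "EWC": solve_task_b(h_a),                 # stiffness follows task-A curvature
}

for name, theta in solutions.items():
    loss_a = task_loss(theta, theta_a, h_a)
    loss_b = task_loss(theta, theta_b, h_b)
    print(f"{name:10s} theta={theta} task-A loss={loss_a:.3f} task-B loss={loss_b:.3f}")
```

With these assumed values the unpenalized solution lands exactly on the task-B minimizer and has the highest task-A loss, while the curvature-weighted solution moves mostly along the flat task-A direction and ends with a lower loss on both tasks than the uniform L2 solution. Different curvatures or penalty strengths would change the numbers, so this is an illustration of the mechanism, not a general result.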