TECHNICAL ASSET FINGERPRINT

8bdc7ad276af09ff8bf93e49

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

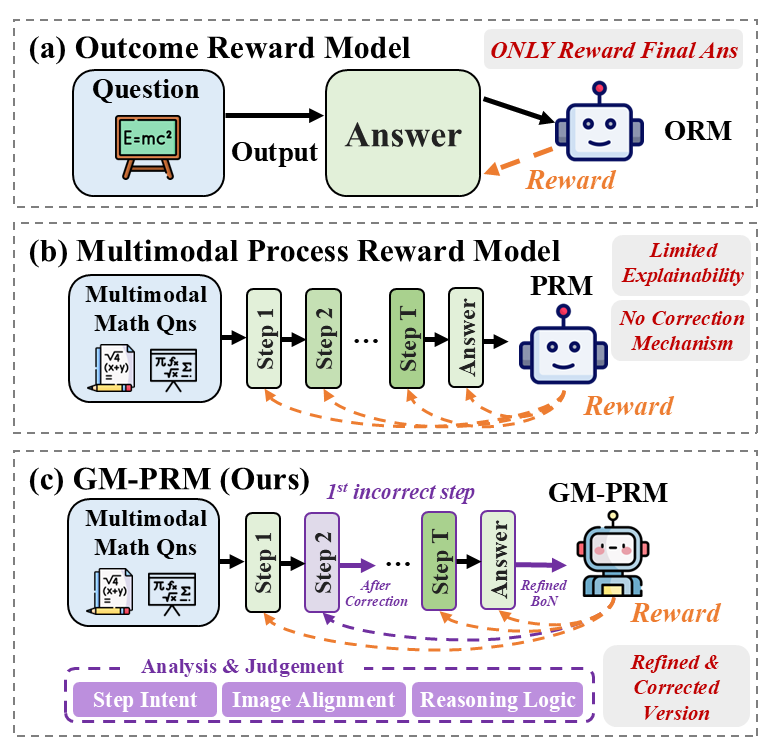

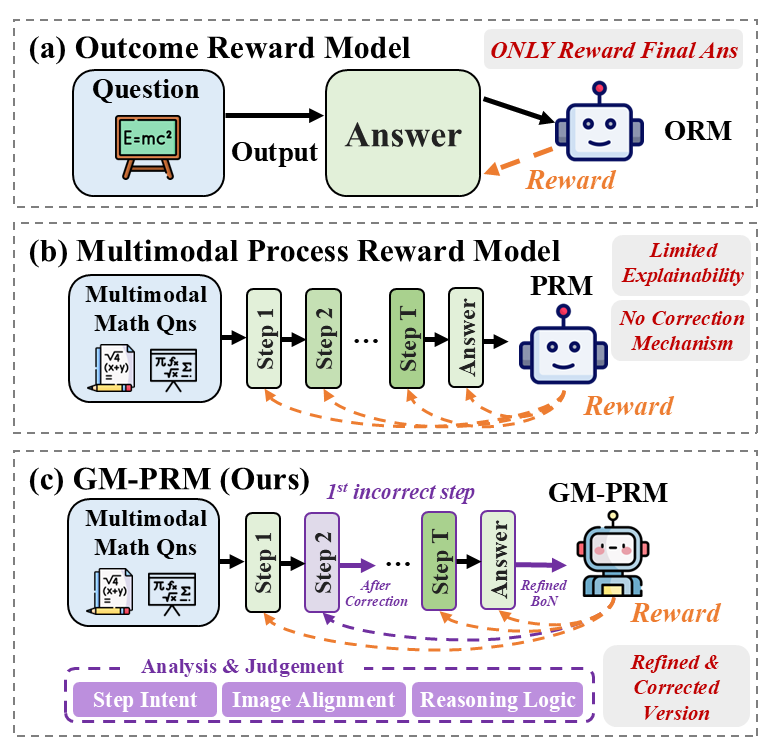

## Diagram: Comparison of Reward Models

### Overview

The image presents a comparative diagram illustrating three different reward models: Outcome Reward Model (ORM), Multimodal Process Reward Model (PRM), and GM-PRM (a proposed model). The diagram highlights the flow of information and the reward mechanisms associated with each model.

### Components/Axes

* **Title:** The image is divided into three sections, each representing a different reward model.

    * (a) Outcome Reward Model

    * (b) Multimodal Process Reward Model

    * (c) GM-PRM (Ours)

* **Input:** Each model starts with an input.

    * ORM: "Question" (represented by a blue box containing the equation E=mc²)

    * PRM and GM-PRM: "Multimodal Math Qns" (represented by a blue box containing math equations)

* **Process:** The models process the input through a series of steps.

    * ORM: A single "Output" arrow leading to "Answer" (green box).

    * PRM and GM-PRM: A series of steps labeled "Step 1", "Step 2", ..., "Step T" (green boxes) leading to "Answer" (green box).

* **Reward:** Each model includes a reward mechanism.

    * ORM, PRM, and GM-PRM: A robot icon labeled "ORM", "PRM", and "GM-PRM" respectively, receiving a "Reward" (orange dashed arrow).

* **Annotations:** Additional text annotations provide context and highlight key differences.

    * ORM: "ONLY Reward Final Ans" (red text)

    * PRM: "Limited Explainability", "No Correction Mechanism" (red text)

    * GM-PRM: "1st incorrect step" (purple text), "After Correction" (purple arrow), "Refined BoN" (green text), "Refined & Corrected Version" (purple text)

* **Analysis & Judgement (GM-PRM):** A purple box at the bottom of the GM-PRM section contains the labels "Step Intent", "Image Alignment", and "Reasoning Logic".

### Detailed Analysis

**Outcome Reward Model (ORM):**

* The model takes a "Question" as input.

* The question is processed to produce an "Answer".

* The "ORM" agent receives a "Reward" based only on the final answer.

* The annotation "ONLY Reward Final Ans" emphasizes that the reward is solely based on the outcome.

**Multimodal Process Reward Model (PRM):**

* The model takes "Multimodal Math Qns" as input.

* The input is processed through a series of steps: "Step 1", "Step 2", ..., "Step T".

* The final step leads to an "Answer".

* The "PRM" agent receives a "Reward" based on the process.

* The annotations "Limited Explainability" and "No Correction Mechanism" highlight the limitations of this model.

**GM-PRM (Ours):**

* The model takes "Multimodal Math Qns" as input.

* The input is processed through a series of steps: "Step 1", "Step 2" (purple), ..., "Step T".

* The annotation "1st incorrect step" indicates a point where a correction mechanism is applied.

* The "After Correction" arrow shows the flow after a correction.

* The final step leads to an "Answer".

* The "GM-PRM" agent receives a "Reward" based on the refined process.

* The "Refined BoN" annotation most likely refers to a refined Best-of-N (BoN) procedure, in which the answer is selected from N candidate solutions after correction.

* The "Refined & Corrected Version" annotation emphasizes the improvements made in this model.

* The "Analysis & Judgement" box indicates the model's ability to analyze and judge the steps involved.

### Key Observations

* The ORM is the simplest model, focusing only on the final outcome.

* The PRM considers the process but lacks explainability and a correction mechanism.

* The GM-PRM builds upon the PRM by incorporating a correction mechanism and analysis/judgment capabilities.
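The difference between rewarding only the outcome and rewarding each step can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption rather than the figure's actual method: the function names, the exact-match answer check, and the toy step judge that flags steps carrying a `[bad]` marker are all hypothetical.

```python
from typing import Callable, List


def outcome_reward(answer: str, reference: str) -> float:
    """ORM-style: a single scalar that looks at the final answer only."""
    return 1.0 if answer.strip() == reference.strip() else 0.0


def process_rewards(
    steps: List[str],
    step_judge: Callable[[List[str], str], float],
) -> List[float]:
    """PRM-style: one scalar per step, each judged given the steps before it."""
    return [step_judge(steps[:i], step) for i, step in enumerate(steps)]


def toy_step_judge(prefix: List[str], step: str) -> float:
    # Hypothetical stand-in for a learned judge: a step is wrong
    # if it carries the "[bad]" marker.
    return 0.0 if "[bad]" in step else 1.0


steps = [
    "read the side lengths from the figure",
    "[bad] add the sides instead of multiplying",
    "state the area",
]
orm = outcome_reward("12", "12")              # final answer happens to match
prm = process_rewards(steps, toy_step_judge)  # per-step view exposes the flaw
print(orm, prm)
```

In this toy case the outcome reward is maximal even though the second step is flawed, which is the gap the "ONLY Reward Final Ans" annotation points at.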

### Interpretation

The diagram illustrates the evolution of reward models for problem-solving, particularly for multimodal math questions. GM-PRM is presented as an improvement over the ORM and the PRM because it adds a correction mechanism and analysis/judgement capabilities, which the diagram frames as making it better suited to multi-step problems. The "Refined BoN" annotation suggests that Best-of-N selection is applied to refined (corrected) solutions rather than only to the original candidates. Overall, the diagram emphasizes evaluating the reasoning process and feeding corrections back into it, not just scoring the final answer.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Model Comparison for Multimodal Question Answering

### Overview

The image presents a comparative diagram illustrating three different reward models for multimodal question answering, specifically focusing on mathematical questions. The models are labeled as (a) Outcome Reward Model (ORM), (b) Multimodal Process Reward Model (PRM), and (c) GM-PRM (the authors' proposed model). The diagram highlights the flow of information, the reward mechanisms, and the key differences in their approaches.

### Components/Axes

The diagram is structured into three horizontal sections, each representing a different model. Each section contains the following components:

* **Input:** Multimodal Math Questions or a single Question.

* **Process:** A series of steps (Step 1, Step 2, ... Step T) leading to an Answer.

* **Model Representation:** A stylized robot icon representing the reward model.

* **Reward:** An arrow indicating the reward signal.

* **Annotations:** Text labels describing the model's characteristics and limitations.

### Detailed Analysis or Content Details

**(a) Outcome Reward Model (ORM)**

* **Input:** A single question represented by the equation "E=mc²" within a rectangular box.

* **Process:** The question flows directly to an "Answer" box.

* **Model:** A robot icon labeled "ORM".

* **Reward:** A yellow arrow labeled "Reward" points from the "Answer" box to the "ORM".

* **Annotation:** "ONLY Reward Final Ans" is written next to the reward arrow.

**(b) Multimodal Process Reward Model (PRM)**

* **Input:** Multiple multimodal math questions are shown within rectangular boxes: "√4", "(x+y)²", "πr²/π", "m/v".

* **Process:** The questions flow through a series of "Step 1", "Step 2", ... "Step T" boxes, culminating in an "Answer" box. The steps are connected by purple arrows.

* **Model:** A robot icon labeled "PRM".

* **Reward:** A yellow arrow labeled "Reward" points from the "Answer" box to the "PRM".

* **Annotations:** "Limited Explainability" and "No Correction Mechanism" are written to the right of the "PRM".

**(c) GM-PRM (Ours)**

* **Input:** Similar to (b), multiple multimodal math questions are shown: "√4", "(x+y)²", "πr²/π", "m/v".

* **Process:** The questions flow through a series of "Step 1", "Step 2", ... "Step T" boxes. A dashed red arrow labeled "After Correction" indicates a feedback loop from a later step to an earlier step, suggesting a correction mechanism.

* **Model:** A robot icon labeled "GM-PRM", accompanied by the label "Refined BoN" (Best-of-N).

* **Reward:** A yellow arrow labeled "Reward" points from the "Answer" box to the "GM-PRM".

* **Annotations:** "Refined & Corrected Version" is written to the right of the "GM-PRM".

* **Bottom Legend:** A colored legend is present:

    * **Step Intent:** Purple

    * **Image Alignment:** Teal

    * **Reasoning Logic:** Orange

### Key Observations

* The ORM is the simplest model, only rewarding the final answer.

* The PRM introduces a process reward but lacks explainability and a correction mechanism.

* The GM-PRM builds upon the PRM by adding a correction mechanism and incorporating analysis and judgement based on step intent, image alignment, and reasoning logic.

* The dashed red arrow in the GM-PRM indicates a key difference: the ability to revisit and correct earlier steps.

* The legend at the bottom suggests that the GM-PRM utilizes these three components (Step Intent, Image Alignment, Reasoning Logic) during the process.
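The three components named in the diagram can be pictured as fields of a per-step judgement record. The sketch below is only one possible reading: the `StepJudgement` class, its field types, and the rule that a step counts as correct when both the image-alignment and reasoning-logic checks pass are assumptions, since the figure does not say how the three analyses are combined.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class StepJudgement:
    step_intent: str        # free-text summary of what the step is trying to do
    image_alignment: bool   # does the step agree with the visual input?
    reasoning_logic: bool   # is the inference in the step valid?

    @property
    def correct(self) -> bool:
        # Assumed combination rule (not given in the diagram):
        # both checks must pass for the step to count as correct.
        return self.image_alignment and self.reasoning_logic


def first_incorrect_step(judgements: List[StepJudgement]) -> Optional[int]:
    """Return the 0-based index of the first failing step, or None if all pass."""
    for i, judgement in enumerate(judgements):
        if not judgement.correct:
            return i
    return None


judgements = [
    StepJudgement("read the base and height from the figure", True, True),
    StepJudgement("compute the area", True, False),
    StepJudgement("report the result", True, True),
]
idx = first_incorrect_step(judgements)
print(idx)  # 0-based, so index 1 corresponds to "Step 2"
```

Keeping the intent as text alongside the two checks is what would let such a judge explain a verdict instead of emitting a bare score.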

### Interpretation

The diagram illustrates the evolution of reward models for multimodal question answering. The ORM represents a basic approach, while the PRM attempts to improve upon it by rewarding intermediate steps. However, the GM-PRM, proposed by the authors, addresses the limitations of the PRM by incorporating a correction mechanism and leveraging step intent, image alignment, and reasoning logic. This suggests that a more nuanced approach, which considers the entire reasoning process and allows for self-correction, is crucial for achieving better performance in multimodal question answering tasks. The use of color-coding in the GM-PRM section highlights the importance of these three components in the model's decision-making process. The diagram effectively communicates the advantages of the GM-PRM over its predecessors, positioning it as a more sophisticated and effective solution.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Reward Models for Multimodal Math Reasoning

### Overview

The image is a technical diagram comparing three different reward model architectures for evaluating and improving multimodal mathematical reasoning. It is divided into three horizontal panels, labeled (a), (b), and (c), each illustrating a distinct model: the Outcome Reward Model (ORM), the Multimodal Process Reward Model (PRM), and the proposed GM-PRM (Ours). The diagram uses a flowchart style with boxes, arrows, and icons to depict the process flow, inputs, outputs, and feedback mechanisms for each model.

### Components/Axes

The diagram is structured into three distinct sections, each with its own title and flow.

**Panel (a): Outcome Reward Model**

* **Title:** `(a) Outcome Reward Model`

* **Input:** A box labeled `Question` containing a chalkboard icon with the equation `E=mc²`.

* **Process:** An arrow labeled `Output` points to a box labeled `Answer`.

* **Evaluation:** An arrow points from the `Answer` box to a blue robot icon labeled `ORM`.

* **Feedback:** A dashed orange arrow labeled `Reward` points from the `ORM` robot back to the `Answer` box.

* **Annotation:** Red text in the top-right corner states: `ONLY Reward Final Ans`.

**Panel (b): Multimodal Process Reward Model**

* **Title:** `(b) Multimodal Process Reward Model`

* **Input:** A box labeled `Multimodal Math Qns` containing icons of a math problem sheet and a pencil.

* **Process:** A sequence of boxes connected by arrows: `Step 1` -> `Step 2` -> `...` -> `Step T` -> `Answer`.

* **Evaluation:** An arrow points from the `Answer` box to a blue robot icon labeled `PRM`.

* **Feedback:** Multiple dashed orange arrows labeled `Reward` point from the `PRM` robot back to each intermediate step (`Step 1`, `Step 2`, `Step T`) and the final `Answer`.

* **Annotations:** Two gray boxes on the right list limitations: `Limited Explainability` and `No Correction Mechanism`.

**Panel (c): GM-PRM (Ours)**

* **Title:** `(c) GM-PRM (Ours)`

* **Input:** A box labeled `Multimodal Math Qns` (identical to panel b).

* **Process:** A sequence of boxes: `Step 1` -> `Step 2` -> `...` -> `Step T` -> `Answer`.

* **Key Modification:** `Step 2` is highlighted in purple. A purple arrow labeled `1st incorrect step` points from `Step 2` to a subsequent purple arrow labeled `After Correction` that points back into the process flow between `Step 2` and the `...` box.

* **Evaluation:** An arrow points from the `Answer` box to a purple robot icon labeled `GM-PRM`. The arrow is labeled `Refined BoN`.

* **Feedback:** Dashed orange arrows labeled `Reward` point from the `GM-PRM` robot back to each step and the answer.

* **Analysis Module:** A large purple dashed box at the bottom is labeled `Analysis & Judgement`. Inside, three connected purple boxes are labeled: `Step Intent`, `Image Alignment`, and `Reasoning Logic`.

* **Annotation:** A gray box on the right states: `Refined & Corrected Version`.

### Detailed Analysis

The diagram presents a clear evolution of model sophistication:

1. **Outcome Reward Model (ORM):** This is the simplest model. It takes a question, generates an answer, and the ORM provides a reward signal based **only** on the final answer's correctness. There is no evaluation of the reasoning process.

2. **Multimodal Process Reward Model (PRM):** This model introduces step-wise evaluation. It breaks down the solution into discrete steps (`Step 1` to `Step T`). The PRM provides reward signals for each intermediate step and the final answer. However, the diagram notes two critical flaws: it offers **limited explainability** for its rewards and has **no mechanism to correct** an identified incorrect step.

3. **GM-PRM (Ours):** This is the proposed, advanced model. It builds upon the PRM framework but introduces two major enhancements:

    * **Correction Mechanism:** It can identify the `1st incorrect step` (shown as `Step 2` in purple) and initiate a correction process (`After Correction` arrow), leading to a `Refined BoN` (Best-of-N) answer.

    * **Analysis & Judgement Module:** A dedicated component performs deep analysis based on three criteria: `Step Intent` (understanding the goal of the step), `Image Alignment` (ensuring the step correctly uses visual information), and `Reasoning Logic` (validating the logical flow). This module directly informs the GM-PRM's reward and correction process, addressing the "limited explainability" issue of the standard PRM. The resulting model is described as a `Refined & Corrected Version`.
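A rough Python sketch of how correcting the first incorrect step could feed a Best-of-N selection is given below. It is a sketch under stated assumptions, not the authors' procedure: the `judge` and `repair` callables, the 0.5 threshold, the choice to repair only the first failing step while keeping later steps unchanged, and the use of the minimum step score as the chain score are all hypothetical.

```python
from typing import Callable, List, Optional

Judge = Callable[[List[str], str], float]     # (previous steps, step) -> score
Repair = Callable[[List[str], int], List[str]]  # (chain, bad index) -> new chain


def step_scores(steps: List[str], judge: Judge) -> List[float]:
    return [judge(steps[:i], step) for i, step in enumerate(steps)]


def first_incorrect(scores: List[float], threshold: float) -> Optional[int]:
    for i, score in enumerate(scores):
        if score < threshold:
            return i
    return None


def refined_best_of_n(
    candidates: List[List[str]],
    judge: Judge,
    repair: Repair,
    threshold: float = 0.5,  # assumed cut-off for "incorrect"
) -> List[str]:
    refined = []
    for steps in candidates:
        idx = first_incorrect(step_scores(steps, judge), threshold)
        # Only the first failing step is repaired (assumption).
        refined.append(steps if idx is None else repair(steps, idx))
    # Assumed aggregation: a chain is only as good as its weakest step.
    return max(refined, key=lambda s: min(step_scores(s, judge), default=0.0))


def toy_judge(prefix: List[str], step: str) -> float:
    return 0.0 if "[bad]" in step else 1.0  # hypothetical marker-based judge


def toy_repair(steps: List[str], idx: int) -> List[str]:
    fixed = list(steps)
    fixed[idx] = fixed[idx].replace("[bad] ", "")  # pretend the step was rewritten
    return fixed


candidates = [
    ["read figure", "[bad] compute area", "state answer"],
    ["[bad] read figure", "[bad] compute area"],
]
best = refined_best_of_n(candidates, toy_judge, toy_repair)
print(best)
```

In the toy run the first candidate becomes fully correct after one repair, while the second still contains a flawed step and loses the selection.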

### Key Observations

* **Visual Coding:** The diagram uses color consistently to denote the proposed model's components. Purple is used for the GM-PRM robot, the incorrect step, the correction flow, and the entire Analysis & Judgement module, visually distinguishing it from the blue ORM/PRM robots and orange reward arrows.

* **Flow Complexity:** The process flow increases in complexity from (a) to (c). Panel (a) is a simple loop, (b) adds parallel reward loops, and (c) adds a corrective branch and a parallel analysis subsystem.

* **Spatial Layout:** The three models are stacked vertically for direct comparison. The "Analysis & Judgement" module in (c) is placed at the bottom, acting as a foundational support for the GM-PRM process above it.

* **Iconography:** Simple icons (chalkboard, math sheet, pencil, robot) are used to represent concepts, making the diagram accessible. The robot's expression changes from a simple smile (ORM, PRM) to a more focused, determined look (GM-PRM), subtly implying greater capability.

### Interpretation

This diagram argues for a paradigm shift in reward modeling for multimodal reasoning tasks. It posits that evaluating only the final outcome (ORM) is insufficient. While evaluating each process step (PRM) is better, it remains a passive evaluator that cannot explain its judgments or intervene when errors occur.

The **GM-PRM** is presented as a solution that transforms the reward model from a passive judge into an active tutor. By integrating a structured `Analysis & Judgement` module that scrutinizes intent, visual grounding, and logic, it gains the explainability the PRM lacks. More importantly, by incorporating a `Correction Mechanism`, it can actively repair flawed reasoning chains. This suggests the GM-PRM is designed not just to score performance, but to **improve** the reasoning process itself, leading to more reliable and refined outputs. The diagram effectively communicates that the key innovation is the closed-loop system of analysis, judgment, reward, and correction.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Analysis: Multimodal Math Problem-Solving Reward Models

## Overview

The image presents a comparative analysis of three reward models for evaluating multimodal math problem-solving processes. The diagram uses flowcharts with labeled components, directional arrows, and annotations to illustrate differences in reward mechanisms and correction capabilities.

---

## Diagram Components

### (a) Outcome Reward Model

**Title**: Outcome Reward Model

**Key Components**:

- **Question** (Input: Math equation "E=mc²")

- **Output** (Intermediate processing step)

- **Answer** (Final response)

- **ORM** (Robot icon with "Reward" arrow)

**Flow**:

```
Question → Output → Answer → Reward (only for final answers)
```

**Annotations**:

- Red text: "ONLY Reward Final Ans"

- ORM robot has a smiley face

---

### (b) Multimodal Process Reward Model

**Title**: Multimodal Process Reward Model

**Key Components**:

- **Multimodal Math Qns** (Input: Math problems with equations and diagrams)

- **Steps 1, 2, ..., T** (Intermediate reasoning steps)

- **Answer** (Final response)

- **PRM** (Robot icon with "Reward" arrow)

**Flow**:

```
Multimodal Math Qns → Step 1 → Step 2 → ... → Step T → Answer → Reward
```

**Annotations**:

- Red text box: "Limited Explainability"

- Red text box: "No Correction Mechanism"

- PRM robot has a neutral expression

---

### (c) GM-PRM (Ours)

**Title**: GM-PRM (Ours)

**Key Components**:

- **Multimodal Math Qns** (Input: Math problems with equations and diagrams)

- **Steps 1, 2, ..., T** (Intermediate reasoning steps)

- **1st incorrect step** (Highlighted with dashed arrow)

- **After Correction** (Dashed arrow indicating refinement)

- **Refined BoN** (Refined version of the answer)

- **GM-PRM** (Robot icon with "Reward" arrow)

**Flow**:

```
Multimodal Math Qns → Step 1 → Step 2 → ... → Step T → Answer → Reward
```

**Sub-process**:

```
Analysis & Judgement → Step Intent → Image Alignment → Reasoning Logic
```

**Annotations**:

- Red text: "Refined & Corrected Version"

- Dashed arrows indicate iterative refinement

- GM-PRM robot has a smiling face with cheeks

---

## Key Differences Between Models

| Feature | Outcome Reward Model (a) | Multimodal Process Reward Model (b) | GM-PRM (c) |
|---|---|---|---|
| **Reward Scope** | Final answer only | All steps | All steps + corrections |
| **Explainability** | Not specified | Limited | Improved through analysis |
| **Correction** | No | No | Yes (via "After Correction") |
| **Robot Icon** | ORM (smiley) | PRM (neutral) | GM-PRM (smiling with cheeks) |

---

## Spatial Grounding & Component Isolation

1. **Header**: Each section title (a, b, c) is bolded and positioned at the top of its respective flowchart.

2. **Main Chart**:

    - Components are arranged left-to-right with vertical stacking for sub-processes (e.g., "Analysis & Judgement" in c).

    - Arrows indicate sequential flow (solid for primary flow, dashed for corrections).

3. **Footer**: No explicit footer, but annotations are placed in red text boxes near relevant components.

---

## Trend Verification

- **No numerical data** present; trends are represented through structural differences in component connectivity and annotation emphasis.

---

## Conclusion

The diagram demonstrates a progression from simple outcome-based evaluation (a) to more sophisticated process-aware models (b and c). The proposed GM-PRM (c) introduces explicit correction mechanisms and multi-stage analysis, addressing limitations in explainability and adaptability observed in prior models.
