TECHNICAL ASSET FINGERPRINT

8bdcf27230ab46385aaa6faa

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

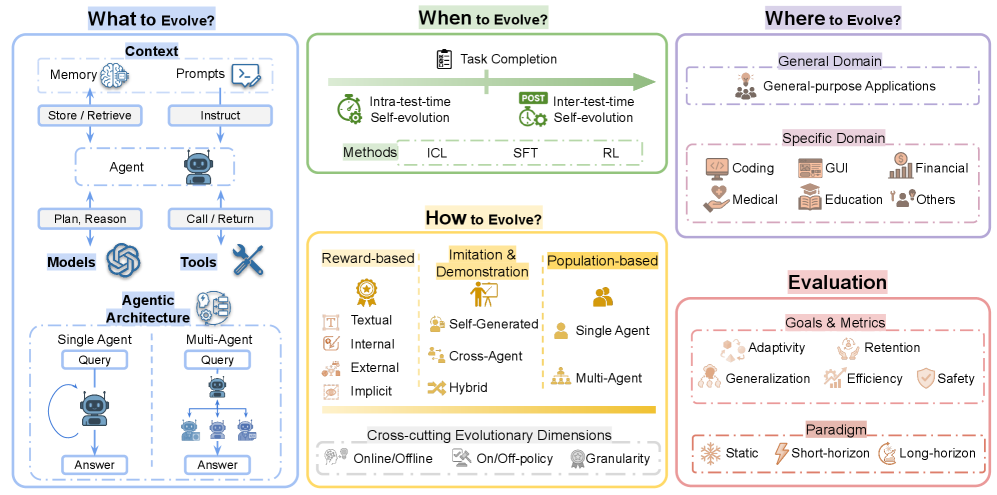

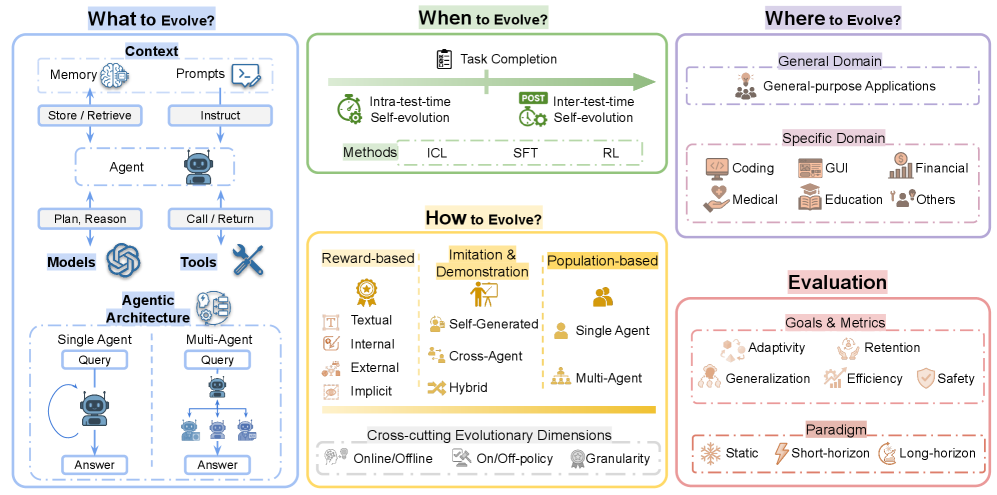

## Diagram: Dimensions of Evolving Agents

### Overview

The image is a diagram outlining key dimensions to consider when evolving agents. It is structured around four central questions: "What to Evolve?", "When to Evolve?", "Where to Evolve?", and "How to Evolve?". Each question is associated with a set of related concepts and categories, providing a framework for understanding the evolution of agents. The diagram also includes a section on "Evaluation" to assess the results of the evolution.

### Components/Axes

* **Main Titles:**

* What to Evolve? (Top-left, blue box)

* When to Evolve? (Top-center, green box)

* Where to Evolve? (Top-right, purple box)

* How to Evolve? (Center, yellow box)

* Evaluation (Bottom-right, pink box)

* **"What to Evolve?" (Blue Box):**

* Context: Memory, Prompts

* Agent

* Models, Tools

* Agentic Architecture: Single Agent, Multi-Agent

* Flow: Store/Retrieve (from Memory to Agent), Instruct (from Prompts to Agent), Plan/Reason (from Agent to Models), Call/Return (from Agent to Tools), Query (to Agent), Answer (from Agent)

* **"When to Evolve?" (Green Box):**

* Task Completion (represented by a checklist icon)

* Intra-test-time Self-evolution (represented by a clock icon)

* Inter-test-time Self-evolution (represented by a clock icon)

* Methods: ICL, SFT, RL

* **"Where to Evolve?" (Purple Box):**

* General Domain: General-purpose Applications

* Specific Domain: Coding, GUI, Financial, Medical, Education, Others

* **"How to Evolve?" (Yellow Box):**

* Reward-based: Textual, Internal, External, Implicit

* Imitation & Demonstration: Self-Generated, Cross-Agent, Hybrid

* Population-based: Single Agent, Multi-Agent

* Cross-cutting Evolutionary Dimensions: Online/Offline, On/Off-policy, Granularity

* **"Evaluation" (Pink Box):**

* Goals & Metrics: Adaptivity, Retention, Generalization, Efficiency, Safety

* Paradigm: Static, Short-horizon, Long-horizon

### Detailed Analysis or Content Details

* **"What to Evolve?" (Blue Box):**

* The "Context" section includes "Memory" (represented by a brain icon) and "Prompts" (represented by a text input icon).

* The "Agent" is represented by a robot icon.

* "Models" is represented by a swirling pattern icon, and "Tools" is represented by a wrench and screwdriver icon.

* The "Agentic Architecture" section shows a single agent querying and answering, and a multi-agent system with a central agent distributing queries to multiple agents and collecting answers.

* **"When to Evolve?" (Green Box):**

* The timeline progresses from "Intra-test-time Self-evolution" to "Inter-test-time Self-evolution".

* Methods listed are ICL, SFT, and RL.

* **"Where to Evolve?" (Purple Box):**

* The "Specific Domain" includes icons representing coding, GUI, financial, medical, education, and a generic "others" category.

* **"How to Evolve?" (Yellow Box):**

* "Reward-based" includes "Textual" (represented by a text icon), "Internal" (represented by a document icon), "External" (represented by a globe icon), and "Implicit" (represented by a speech bubble icon).

* "Imitation & Demonstration" includes "Self-Generated" (represented by a person icon), "Cross-Agent" (represented by multiple people icon), and "Hybrid" (represented by a wrench icon).

* "Population-based" includes "Single Agent" (represented by a single person icon) and "Multi-Agent" (represented by multiple people icon).

* "Cross-cutting Evolutionary Dimensions" includes "Online/Offline" (represented by a computer icon), "On/Off-policy" (represented by a switch icon), and "Granularity" (represented by a badge icon).

* **"Evaluation" (Pink Box):**

* "Goals & Metrics" includes "Adaptivity" (represented by a gear icon), "Retention" (represented by hands holding an object icon), "Generalization" (represented by a network icon), "Efficiency" (represented by a graph icon), and "Safety" (represented by a shield icon).

* "Paradigm" includes "Static" (represented by a snowflake icon), "Short-horizon" (represented by a lightning bolt icon), and "Long-horizon" (represented by a clock icon).

### Key Observations

* The diagram provides a structured overview of the different dimensions involved in evolving agents.

* Each dimension is broken down into specific categories and concepts, providing a detailed framework for analysis.

* The use of icons helps to visually represent the different concepts and categories.

* The flow diagrams in the "What to Evolve?" section illustrate the interactions between different components of the agentic architecture.

### Interpretation

The diagram serves as a comprehensive guide for researchers and practitioners interested in evolving agents. It highlights the key considerations and dimensions that need to be taken into account when designing and implementing evolutionary algorithms for agents. The framework encourages a holistic approach, considering not only the "how" but also the "what," "when," and "where" of agent evolution. The inclusion of an "Evaluation" section emphasizes the importance of assessing the performance and characteristics of evolved agents. The diagram suggests that successful agent evolution requires careful consideration of the context, methods, domains, and evaluation metrics.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Agent Evolution Framework

### Overview

The image presents a diagram outlining a framework for agent evolution. It's structured around four key questions: "What to Evolve?", "When to Evolve?", "Where to Evolve?", and "How to Evolve?". The diagram uses a combination of icons, text, and arrows to illustrate the relationships between different components and concepts within this framework. The bottom section details "Cross-cutting Evolutionary Dimensions" and "Evaluation" metrics.

### Components/Axes

The diagram is organized around four central questions, supplemented by a set of cross-cutting dimensions and an evaluation section.

* **What to Evolve?**: Includes components like "Context" (Memory, Prompts), "Agent" (Plan, Reason, Call/Return), "Models", and "Tools".

* **When to Evolve?**: Focuses on "Task Completion" and "Self-evolution" with methods like "ICL", "SFT", and "RL".

* **Where to Evolve?**: Categorizes evolution domains into "General Domain" and "Specific Domain" (Coding, GUI, Financial, Medical, Education, Others).

* **How to Evolve?**: Presents "Reward-based", "Imitation & Demonstration", and "Population-based" approaches.

* **Cross-cutting Evolutionary Dimensions**: Includes "Online/Offline", "On/Off-policy", and "Granularity".

* **Evaluation**: Lists "Goals & Metrics" such as Adaptivity, Retention, Generalization, Efficiency, and Safety, and "Paradigm" (Static, Short-horizon, Long-horizon).

### Detailed Analysis or Content Details

**What to Evolve?**

* **Context**: A light blue box with two sub-components: "Memory" (Store/Retrieve) and "Prompts" (Instruct). An arrow points from "Prompts" to "Agent".

* **Agent**: A robot icon representing the agent. It has two actions: "Plan, Reason" and "Call/Return". Arrows connect "Agent" to "Models" and "Tools".

* **Models**: A box labeled "Models".

* **Tools**: A box labeled "Tools" with a crossed-out icon.

* **Agentic Architecture**: Two sub-sections: "Single Agent Query" and "Multi-Agent Query", each with a robot icon and an "Answer" output.
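The flow described above (Store/Retrieve, Instruct, Plan/Reason, Call/Return, Query, Answer) can be sketched as a toy single-agent loop. This is only an illustration of how the labeled arrows might fit together: the `Agent` class, `toy_model`, and the `"tool_name:argument"` plan format are hypothetical and do not come from the diagram.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Toy agent wiring together the four evolvable parts named in the diagram."""
    prompt: str                                       # Context: Prompts (Instruct)
    model: Callable[[str], str]                       # Models (Plan, Reason)
    tools: Dict[str, Callable[[str], str]]            # Tools (Call/Return)
    memory: List[str] = field(default_factory=list)   # Context: Memory (Store/Retrieve)

    def answer(self, query: str) -> str:
        # Retrieve: pull everything stored so far (a real system would rank or filter).
        recalled = " | ".join(self.memory)
        # Instruct + Plan/Reason: the model sees the prompt, recalled memory, and the query.
        plan = self.model(f"{self.prompt}\nmemory: {recalled}\nquery: {query}")
        # Call/Return: if the plan looks like "tool_name:argument", invoke that tool.
        name, _, arg = plan.partition(":")
        result = self.tools[name](arg) if name in self.tools else plan
        # Store: record the exchange so later queries can retrieve it.
        self.memory.append(f"{query} -> {result}")
        return result


def toy_model(text: str) -> str:
    """Hypothetical stand-in for a model: always plans to call the 'upper' tool."""
    query = text.rsplit("query: ", 1)[-1]
    return f"upper:{query}"


agent = Agent(prompt="Be brief.", model=toy_model, tools={"upper": str.upper})
print(agent.answer("hello"))  # prints "HELLO"
```

Each field of `Agent` corresponds to one item the panel lists as evolvable, so "evolving" the agent in this sketch would mean replacing or updating `prompt`, `memory`, `model`, or `tools`.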

**When to Evolve?**

* **Task Completion**: A green box with an arrow pointing to "Self-evolution".

* **Self-evolution**: A light green box with two sub-categories: "Intra-test-time" and "Inter-test-time". A "POST" label is placed between them.

* **Methods**: Three boxes: "ICL", "SFT", and "RL".

**Where to Evolve?**

* **General Domain**: A light purple box containing "General-purpose Applications".

* **Specific Domain**: A purple box containing icons and labels for: "Coding", "GUI", "Financial", "Medical", "Education", and "Others".

**How to Evolve?**

* **Reward-based**: A pink box with sub-categories: "Textual", "Internal", "External", and "Implicit".

* **Imitation & Demonstration**: An orange box with sub-categories: "Self-Generated", "Cross-Agent", and "Hybrid".

* **Population-based**: A yellow box with sub-categories: "Single Agent", "Multi-Agent".

**Cross-cutting Evolutionary Dimensions**:

* "Online/Offline"

* "On/Off-policy"

* "Granularity"

**Evaluation**:

* **Goals & Metrics**: Icons representing: "Adaptivity", "Retention", "Generalization", "Efficiency", and "Safety".

* **Paradigm**: "Static", "Short-horizon", and "Long-horizon".

### Key Observations

* The diagram emphasizes a cyclical process of agent evolution, starting with context and leading to evaluation.

* The "Self-evolution" component is highlighted as a key aspect of the "When to Evolve?" quadrant.

* The "Where to Evolve?" quadrant demonstrates the versatility of agent evolution across various domains.

* The "How to Evolve?" quadrant presents different strategies for driving agent improvement.

* The "Cross-cutting Evolutionary Dimensions" suggest considerations that apply across all stages of the evolution process.

### Interpretation

This diagram illustrates a comprehensive framework for developing and improving intelligent agents. It moves beyond simple task completion to incorporate continuous self-evolution, suggesting a focus on agents that can learn and adapt over time. The categorization of evolution domains ("Where to Evolve?") highlights the potential for applying this framework to a wide range of applications. The inclusion of "Cross-cutting Evolutionary Dimensions" and "Evaluation" metrics emphasizes the importance of considering broader factors and measuring progress effectively.

The diagram suggests a shift towards more sophisticated agent architectures ("Agentic Architecture") capable of handling complex queries and providing meaningful answers. The different "How to Evolve?" approaches (Reward-based, Imitation, Population-based) represent different learning paradigms, offering flexibility in how agents are trained and improved.

The "POST" label between "Intra-test-time" and "Inter-test-time" self-evolution suggests a potential iterative process of testing and refinement. The diagram doesn't provide specific data or numerical values, but rather a conceptual model for understanding the key components and relationships involved in agent evolution. It's a high-level overview intended to guide research and development in this field.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Conceptual Diagram: A Framework for AI Agent Evolution

### Overview

This image presents a comprehensive conceptual framework for the evolution of AI agents. It is structured as a multi-panel diagram that categorizes the key dimensions and considerations involved in evolving AI systems. The framework is divided into five main sections, each addressing a fundamental question: "What to Evolve?", "When to Evolve?", "Where to Evolve?", "How to Evolve?", and "Evaluation". The diagram uses a combination of text labels, icons, and flow arrows to illustrate relationships and hierarchies.

### Components/Axes

The diagram is organized into five distinct, color-coded panels:

1. **Top-Left Panel (Blue Border): "What to Evolve?"**

* **Main Header:** "What to Evolve?"

* **Sub-sections & Labels:**

* **Context:** Contains "Memory" (brain icon) and "Prompts" (document icon). Arrows point from these to "Store / Retrieve" and "Instruct" respectively.

* **Agent:** A central robot icon labeled "Agent". It receives input from "Instruct" and "Store / Retrieve". It has outputs labeled "Plan, Reason" and "Call / Return".

* **Models & Tools:** "Models" (brain network icon) and "Tools" (wrench icon) are shown as resources the Agent uses.

* **Agentic Architecture:** This subsection is split into two columns:

* **Single Agent:** Shows a robot icon with a "Query" input and an "Answer" output, with a circular arrow indicating a loop.

* **Multi-Agent:** Shows a hierarchy with one robot at the top labeled "Query", connected to three subordinate robots, all leading to a final "Answer".

2. **Top-Center Panel (Green Border): "When to Evolve?"**

* **Main Header:** "When to Evolve?"

* **Central Element:** A horizontal timeline arrow labeled "Task Completion".

* **Phases:**

* **Left of center:** "Intra-test-time" with a sub-label "Self-evolution" and a stopwatch icon.

* **Right of center:** "Inter-test-time" with a sub-label "Self-evolution" and a "POST" stamp icon.

* **Methods Bar:** Below the timeline, a bar lists "Methods" with three entries: "ICL", "SFT", "RL".

3. **Top-Right Panel (Purple Border): "Where to Evolve?"**

* **Main Header:** "Where to Evolve?"

* **Categories:**

* **General Domain:** Contains "General-purpose Applications" (group of people icon).

* **Specific Domain:** Lists several domains with icons: "Coding" (computer), "GUI" (window), "Financial" (chart), "Medical" (heart with cross), "Education" (graduation cap), "Others" (tools).

4. **Bottom-Center Panel (Yellow Border): "How to Evolve?"**

* **Main Header:** "How to Evolve?"

* **Three Evolutionary Strategies:**

* **Reward-based:** Lists types: "Textual", "Internal", "External", "Implicit".

* **Imitation & Demonstration:** Lists sources: "Self-Generated", "Cross-Agent", "Hybrid".

* **Population-based:** Lists scales: "Single Agent", "Multi-Agent".

* **Cross-cutting Evolutionary Dimensions:** A bar at the bottom lists three dimensions: "Online/Offline", "On/Off-policy", "Granularity".

5. **Bottom-Right Panel (Red Border): "Evaluation"**

* **Main Header:** "Evaluation"

* **Two Sections:**

* **Goals & Metrics:** Lists five metrics with icons: "Adaptivity", "Retention", "Generalization", "Efficiency", "Safety".

* **Paradigm:** Lists three paradigms with icons: "Static", "Short-horizon", "Long-horizon".

### Detailed Analysis

* **Flow in "What to Evolve?":** The diagram suggests a flow where an Agent, situated within a Context (using Memory and Prompts), utilizes Models and Tools to perform actions (Plan, Reason; Call, Return). This agent can be architected as a Single Agent or a Multi-Agent system.

* **Temporal Framework in "When to Evolve?":** Evolution can occur during a task ("Intra-test-time") or after task completion ("Inter-test-time"). The listed methods (ICL, SFT, RL) are presented as techniques applicable across this timeline.

* **Domain Specificity in "Where to Evolve?":** The framework distinguishes between evolving for broad, general-purpose applications versus specialized domains like coding, finance, or medicine.

* **Methodological Approaches in "How to Evolve?":** Three primary strategies are outlined: learning from rewards, learning from demonstrations, and evolving populations of agents. These strategies are further characterized by cross-cutting dimensions like whether they are online/offline.

* **Assessment Criteria in "Evaluation":** The success of evolution is measured against goals like adaptivity and safety, and can be assessed under different paradigms (static, short-horizon, or long-horizon).
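The distinction between intra-test-time and inter-test-time evolution can be made concrete with a small sketch. The diagram only names the two phases; the mechanism used here (hints added to a scratch list during a task, then folded into persistent lessons after it) is an assumption for illustration, and `run_task`, `consolidate`, and `act` are hypothetical names.

```python
from typing import Callable, List


def run_task(steps: List[str], lessons: List[str],
             act: Callable[[str, List[str]], bool]) -> List[str]:
    """Run one task, adapting during it (intra-test-time).

    Returns the notes gathered while solving, which the caller may
    consolidate after the task ends (inter-test-time).
    """
    scratch = list(lessons)      # start from lessons carried over from earlier tasks
    notes: List[str] = []
    for step in steps:
        if not act(step, scratch):
            # Intra-test-time: react immediately, within the same task.
            hint = f"avoid:{step}"
            scratch.append(hint)
            notes.append(hint)
    return notes


def consolidate(lessons: List[str], notes: List[str]) -> List[str]:
    """Inter-test-time: fold notes from a finished task into persistent lessons."""
    return lessons + [n for n in notes if n not in lessons]


def act(step: str, known: List[str]) -> bool:
    """Hypothetical environment: a 'risky' step fails unless a matching hint is known."""
    return f"avoid:{step}" in known or not step.startswith("risky")


lessons: List[str] = []
for task in (["a", "risky-b"], ["risky-b", "c"]):
    lessons = consolidate(lessons, run_task(task, lessons, act))
print(lessons)  # prints ['avoid:risky-b']
```

In this toy setup the second task succeeds on `risky-b` only because the lesson from the first task was consolidated between tasks, which is the kind of carry-over the "Inter-test-time" label suggests.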

### Key Observations

1. **Holistic Framework:** The diagram is designed to be a comprehensive taxonomy, covering the *what, when, where, how,* and *assessment* of AI agent evolution.

2. **Hierarchical and Relational Structure:** It uses clear visual hierarchies (main headers, sub-sections) and directional arrows to imply relationships and processes, particularly in the "What to Evolve?" panel.

3. **Iconography:** Each concept is paired with a simple, representative icon (e.g., brain for memory, wrench for tools, stopwatch for intra-test time), aiding quick visual parsing.

4. **Color-Coding:** Each major question is assigned a distinct border color (blue, green, purple, yellow, red), creating clear visual separation between the framework's pillars.

5. **Abstraction Level:** The diagram is highly abstract and conceptual. It does not contain numerical data, specific algorithms, or implementation details, but rather outlines a high-level research or design space.

### Interpretation

This diagram serves as a **conceptual map for the field of evolving AI agents**. It suggests that advancing agent capabilities is not a single problem but a multi-faceted challenge requiring simultaneous consideration of:

* **The Agent's Composition:** Its architecture, memory, use of tools, and models.

* **The Evolutionary Process:** The timing (during/after tasks) and techniques (like Reinforcement Learning or Imitation Learning) used.

* **The Operational Environment:** The breadth of tasks and domains the agent must handle.

* **The Evaluation Framework:** The metrics and paradigms used to define and measure "improvement."

The framework implies that progress in AI agents is systemic. For instance, evolving a "Multi-Agent" system (from "What") for "Financial" domains (from "Where") using "Population-based" methods (from "How") would require evaluation against "Efficiency" and "Safety" metrics (from "Evaluation"). It provides a structured vocabulary and checklist for researchers and engineers to position their work within the broader pursuit of more capable and adaptive AI systems. The inclusion of "Self-evolution" in both temporal phases points towards a goal of creating agents that can autonomously improve their own performance.
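The worked example in the paragraph above can be written down as a small data structure. This is a sketch of one possible encoding, not something the diagram prescribes: the enum values are the labels from the figure, while the class names and the `EvolutionSetup` record are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Architecture(Enum):   # "What to Evolve?": agentic architecture
    SINGLE_AGENT = "Single Agent"
    MULTI_AGENT = "Multi-Agent"


class Domain(Enum):         # "Where to Evolve?"
    GENERAL = "General-purpose Applications"
    CODING = "Coding"
    GUI = "GUI"
    FINANCIAL = "Financial"
    MEDICAL = "Medical"
    EDUCATION = "Education"
    OTHERS = "Others"


class Strategy(Enum):       # "How to Evolve?"
    REWARD_BASED = "Reward-based"
    IMITATION_DEMONSTRATION = "Imitation & Demonstration"
    POPULATION_BASED = "Population-based"


class Metric(Enum):         # "Evaluation": goals and metrics
    ADAPTIVITY = "Adaptivity"
    RETENTION = "Retention"
    GENERALIZATION = "Generalization"
    EFFICIENCY = "Efficiency"
    SAFETY = "Safety"


@dataclass(frozen=True)
class EvolutionSetup:
    """One point in the design space: a choice along each dimension."""
    architecture: Architecture
    domain: Domain
    strategy: Strategy
    metrics: FrozenSet[Metric]

    def describe(self) -> str:
        names = ", ".join(sorted(m.value for m in self.metrics))
        return (f"{self.architecture.value} / {self.domain.value} / "
                f"{self.strategy.value}, evaluated on {names}")


setup = EvolutionSetup(
    architecture=Architecture.MULTI_AGENT,
    domain=Domain.FINANCIAL,
    strategy=Strategy.POPULATION_BASED,
    metrics=frozenset({Metric.EFFICIENCY, Metric.SAFETY}),
)
print(setup.describe())
# prints "Multi-Agent / Financial / Population-based, evaluated on Efficiency, Safety"
```

Using enums keeps each choice restricted to the categories the figure actually names, which matches the "structured vocabulary and checklist" role described above.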

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Framework for Agent Evolution

### Overview

The image presents a structured framework for agent evolution, divided into five interconnected sections: "What to Evolve?", "When to Evolve?", "Where to Evolve?", "How to Evolve?", and "Evaluation". Each section outlines key components, methods, and considerations for evolving agents in technical systems.

---

### Components/Axes

#### Section 1: What to Evolve?

- **Context**:

- Memory (brain icon)

- Prompts (speech bubble)

- Store/Retrieve (file icon)

- Instruct (arrow)

- **Agent**:

- Plan, Reason, Call/Return (robot icon)

- **Models & Tools**:

- Models (cogwheel icon)

- Tools (wrench icon)

- **Agentic Architecture**:

- Single Agent (robot with query/answer flow)

- Multi-Agent (hierarchical robots with query/answer flow)

#### Section 2: When to Evolve?

- **Task Completion**:

- Intra-test-time Self-evolution (clock icon)

- Inter-test-time Self-evolution (clock with arrow)

- **Methods**:

- ICL (In-Context Learning)

- SFT (Supervised Fine-Tuning)

- RL (Reinforcement Learning)

#### Section 3: Where to Evolve?

- **General Domain**:

- General-purpose Applications (lightbulb icon)

- **Specific Domain**:

- Coding (computer icon)

- GUI (monitor icon)

- Financial (currency icon)

- Medical (heart icon)

- Education (book icon)

- Others (person icon)

#### Section 4: How to Evolve?

- **Reward-based**:

- Textual (trophy icon)

- Internal (checkmark icon)

- External (camera icon)

- Implicit (question mark icon)

- **Imitation & Demonstration**:

- Self-Generated (person icon)

- Cross-Agent (two people icon)

- Hybrid (person with gear icon)

- **Population-based**:

- Single Agent (person icon)

- Multi-Agent (group of people icon)

- **Cross-cutting Evolutionary Dimensions**:

- Online/Offline (clock icon)

- On/Off-Policy (clock with arrow)

- Granularity (magnifying glass icon)

#### Section 5: Evaluation

- **Goals & Metrics**:

- Adaptivity (person with gear icon)

- Retention (person with checkmark icon)

- Generalization (person with globe icon)

- Efficiency (clock icon)

- Safety (shield icon)

- **Paradigm**:

- Static (snowflake icon)

- Short-horizon (clock icon)

- Long-horizon (clock with arrow icon)

---

### Detailed Analysis

1. **What to Evolve?**

- Focuses on **contextual elements** (memory, prompts), the **models and tools** the agent draws on, and **agentic capabilities** (planning, reasoning).

- Distinguishes between **single-agent** and **multi-agent architectures**, emphasizing hierarchical interactions.

2. **When to Evolve?**

- Highlights **evolution timing** (intra-test vs. inter-test) and **methods** (ICL, SFT, RL) for iterative improvement.

3. **Where to Evolve?**

- Categorizes domains into **general-purpose** and **domain-specific** applications (e.g., coding, finance).

4. **How to Evolve?**

- Outlines **evolution strategies**:

- **Reward-based**: Textual, internal/external feedback.

- **Imitation**: Self-generated, cross-agent, hybrid approaches.

- **Population-based**: Single/multi-agent collaboration.

- Cross-cutting dimensions (online/offline, policy, granularity) suggest adaptability across environments.

5. **Evaluation**

- Metrics prioritize **adaptivity, retention, generalization, efficiency, and safety**.

- Paradigms (static, short/long-horizon) define temporal and operational constraints.

---

### Key Observations

- **Hierarchical Structure**: The framework progresses from defining **what** to evolve (context/agent) to **how** and **where** to evolve, culminating in **evaluation**.

- **Cross-cutting Dimensions**: Online/offline and policy considerations imply flexibility in deployment and learning strategies.

- **Domain Specificity**: Specific domains (medical, education) suggest tailored evolution paths for specialized applications.

- **Evaluation Emphasis**: Safety and efficiency are critical, indicating a focus on robust, real-world applicability.

---

### Interpretation

This framework provides a **comprehensive roadmap** for evolving agents, balancing technical rigor (e.g., ICL, RL) with practical considerations (e.g., safety, domain specificity). The integration of **reward-based and population-based methods** suggests a hybrid approach to optimization, while cross-cutting dimensions (online/offline, granularity) address scalability and adaptability. The emphasis on **evaluation metrics** (safety, efficiency) underscores the importance of real-world performance over theoretical gains. Notably, the absence of explicit numerical data implies a conceptual rather than empirical framework, prioritizing strategic alignment over quantitative validation.
