## Diagram: Framework for Agent Evolution

### Overview

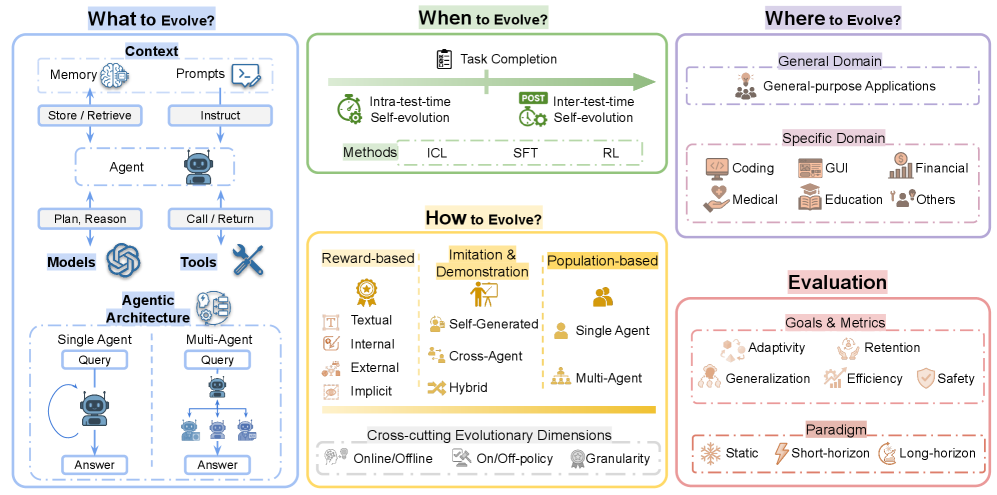

The image presents a structured framework for agent evolution, divided into five interconnected sections: "What to Evolve?", "When to Evolve?", "Where to Evolve?", "How to Evolve?", and "Evaluation". Each section lists the components, methods, and considerations relevant to that aspect of evolving agents.

---

### Components/Axes

#### Section 1: What to Evolve?

- **Context**:
  - Memory (brain icon)
  - Prompts (speech bubble)
  - Store/Retrieve (file icon)
  - Instruct (arrow)
- **Agent**:
  - Plan, Reason, Call/Return (robot icon)
- **Models & Tools**:
  - Models (cogwheel icon)
  - Tools (wrench icon)
- **Agentic Architecture**:
  - Single Agent (robot with query/answer flow)
  - Multi-Agent (hierarchical robots with query/answer flow)

#### Section 2: When to Evolve?

- **Task Completion**:
  - Intra-test-time Self-evolution (clock icon)
  - Inter-test-time Self-evolution (clock with arrow)
- **Methods**:
  - ICL (In-Context Learning)
  - SFT (Supervised Fine-Tuning)
  - RL (Reinforcement Learning)

#### Section 3: Where to Evolve?

- **General Domain**:
  - General-purpose Applications (lightbulb icon)
- **Specific Domain**:
  - Coding (computer icon)
  - GUI (monitor icon)
  - Financial (currency icon)
  - Medical (heart icon)
  - Education (book icon)
  - Others (person icon)

#### Section 4: How to Evolve?

- **Reward-based**:
  - Textual (trophy icon)
  - Internal (checkmark icon)
  - External (camera icon)
  - Implicit (question mark icon)
- **Imitation & Demonstration**:
  - Self-Generated (person icon)
  - Cross-Agent (two people icon)
  - Hybrid (person with gear icon)
- **Population-based**:
  - Single Agent (person icon)
  - Multi-Agent (group of people icon)
- **Cross-cutting Evolutionary Dimensions**:
  - Online/Offline (clock icon)
  - On/Off-Policy (clock with arrow)
  - Granularity (magnifying glass icon)

#### Section 5: Evaluation

- **Goals & Metrics**:
  - Adaptivity (person with gear icon)
  - Retention (person with checkmark icon)
  - Generalization (person with globe icon)
  - Efficiency (clock icon)
  - Safety (shield icon)
- **Paradigm**:
  - Static (snowflake icon)
  - Short-horizon (clock icon)
  - Long-horizon (clock with arrow icon)
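
The five sections listed above can also be captured as a small nested data structure, which makes the taxonomy easy to query or validate. The following is a minimal sketch: the labels are copied from the figure, while the dictionary layout and the helper names `leaf_paths` and `find` are illustrative assumptions, not part of the diagram.

```python
# Hypothetical encoding of the diagram: section -> group -> leaf labels.
# Labels come from the figure; the structure itself is an assumption.
FRAMEWORK = {
    "What to Evolve?": {
        "Context": ["Memory", "Prompts", "Store/Retrieve", "Instruct"],
        "Agent": ["Plan", "Reason", "Call/Return"],
        "Models & Tools": ["Models", "Tools"],
        "Agentic Architecture": ["Single Agent", "Multi-Agent"],
    },
    "When to Evolve?": {
        "Task Completion": [
            "Intra-test-time Self-evolution",
            "Inter-test-time Self-evolution",
        ],
        "Methods": ["ICL", "SFT", "RL"],
    },
    "Where to Evolve?": {
        "General Domain": ["General-purpose Applications"],
        "Specific Domain": [
            "Coding", "GUI", "Financial", "Medical", "Education", "Others",
        ],
    },
    "How to Evolve?": {
        "Reward-based": ["Textual", "Internal", "External", "Implicit"],
        "Imitation & Demonstration": ["Self-Generated", "Cross-Agent", "Hybrid"],
        "Population-based": ["Single Agent", "Multi-Agent"],
        "Cross-cutting Evolutionary Dimensions": [
            "Online/Offline", "On/Off-Policy", "Granularity",
        ],
    },
    "Evaluation": {
        "Goals & Metrics": [
            "Adaptivity", "Retention", "Generalization", "Efficiency", "Safety",
        ],
        "Paradigm": ["Static", "Short-horizon", "Long-horizon"],
    },
}


def leaf_paths(framework):
    """Yield (section, group, item) for every leaf label in the taxonomy."""
    for section, groups in framework.items():
        for group, items in groups.items():
            for item in items:
                yield section, group, item


def find(label, framework=FRAMEWORK):
    """Return every (section, group) pair under which a label appears."""
    return [(s, g) for s, g, item in leaf_paths(framework) if item == label]
```

Calling `find("Multi-Agent")` returns two locations, because the figure uses that label both as an architecture under "What to Evolve?" and as a population-based strategy under "How to Evolve?".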

---

### Detailed Analysis

1. **What to Evolve?**
   - Covers **context** (memory, prompts), the **agent** itself (plan, reason, call/return), and the underlying **models and tools**.
   - Distinguishes **single-agent** from **multi-agent architectures**; the multi-agent case is drawn as a hierarchy of agents.
2. **When to Evolve?**
   - Separates **evolution timing** (intra-test-time vs. inter-test-time) and lists the **methods** (ICL, SFT, RL) through which evolution is carried out.
3. **Where to Evolve?**
   - Divides applications into **general-purpose** and **domain-specific** (coding, GUI, financial, medical, education, others).
4. **How to Evolve?**
   - Outlines three families of **evolution strategies**:
     - **Reward-based**: textual, internal, external, and implicit rewards.
     - **Imitation & Demonstration**: self-generated, cross-agent, and hybrid.
     - **Population-based**: single-agent and multi-agent.
   - Three cross-cutting dimensions (online/offline, on/off-policy, granularity) apply across all of these strategies.
5. **Evaluation**
   - Goals and metrics: **adaptivity, retention, generalization, efficiency, and safety**.
   - Paradigms (static, short-horizon, long-horizon) distinguish evaluation settings by time horizon.
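
One way to read the analysis above is that any concrete evolution approach picks a value on each axis. The sketch below expresses that as a typed record. The class and field names (`EvolutionProfile`, `Timing`, `Method`, `Strategy`) are hypothetical, the `granularity` field is free-form because the figure does not enumerate its levels, and the example values describe an invented approach rather than one taken from the diagram.

```python
from dataclasses import dataclass
from enum import Enum


class Timing(Enum):
    INTRA_TEST_TIME = "intra-test-time"
    INTER_TEST_TIME = "inter-test-time"


class Method(Enum):
    ICL = "in-context learning"
    SFT = "supervised fine-tuning"
    RL = "reinforcement learning"


class Strategy(Enum):
    REWARD_BASED = "reward-based"
    IMITATION = "imitation & demonstration"
    POPULATION_BASED = "population-based"


@dataclass(frozen=True)
class EvolutionProfile:
    """Hypothetical record placing one approach on the framework's axes."""
    target: str        # what to evolve, e.g. "Prompts" or "Memory"
    timing: Timing     # when to evolve
    method: Method     # ICL, SFT, or RL
    domain: str        # where to evolve, e.g. "Coding"
    strategy: Strategy # how to evolve
    online: bool       # cross-cutting: online vs. offline
    on_policy: bool    # cross-cutting: on-policy vs. off-policy
    granularity: str   # cross-cutting: free-form, levels not given in figure

    def describe(self) -> str:
        mode = "online" if self.online else "offline"
        policy = "on-policy" if self.on_policy else "off-policy"
        return (
            f"evolve {self.target} ({self.domain}) at {self.timing.value} "
            f"via {self.method.name}, {self.strategy.value}, {mode}, {policy}, "
            f"granularity={self.granularity}"
        )


# Invented example: an agent that refines its prompts during a coding task.
example = EvolutionProfile(
    target="Prompts",
    timing=Timing.INTRA_TEST_TIME,
    method=Method.ICL,
    domain="Coding",
    strategy=Strategy.REWARD_BASED,
    online=True,
    on_policy=True,
    granularity="step",
)
```

Using enums for the closed sets (timing, method, strategy) and plain strings for the open ones (target, domain, granularity) mirrors how the figure itself presents them: some axes have a fixed list of options, others end in "Others" or are left unspecified.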

---

### Key Observations

- **Sequential Structure**: The framework progresses from **what** to evolve (context, agent, models and tools, architecture), through **when**, **where**, and **how** to evolve, and ends with **evaluation**.

- **Cross-cutting Dimensions**: The online/offline, on/off-policy, and granularity dimensions are shown separately from the three strategy families, which implies they can be combined with any of them.

- **Domain Specificity**: Specific domains (medical, education) suggest tailored evolution paths for specialized applications.

- **Evaluation Emphasis**: Safety and efficiency are listed alongside adaptivity, retention, and generalization, which points to a concern with robust, real-world applicability and not only task performance.

---

### Interpretation

This framework organizes agent evolution around four questions and an evaluation component, pairing learning methods (e.g., ICL, SFT, RL) with practical considerations (e.g., safety, domain specificity). Placing **reward-based**, **imitation-based**, and **population-based** methods side by side suggests that they can be combined rather than treated as mutually exclusive, while the cross-cutting dimensions (online/offline, on/off-policy, granularity) apply regardless of which strategy is chosen. The **evaluation** goals (adaptivity, retention, generalization, efficiency, safety) extend beyond raw task performance. The diagram contains no numerical data, so it should be read as a conceptual taxonomy, not an empirical result.