TECHNICAL ASSET FINGERPRINT

8c1358515cf271b457c1b067


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


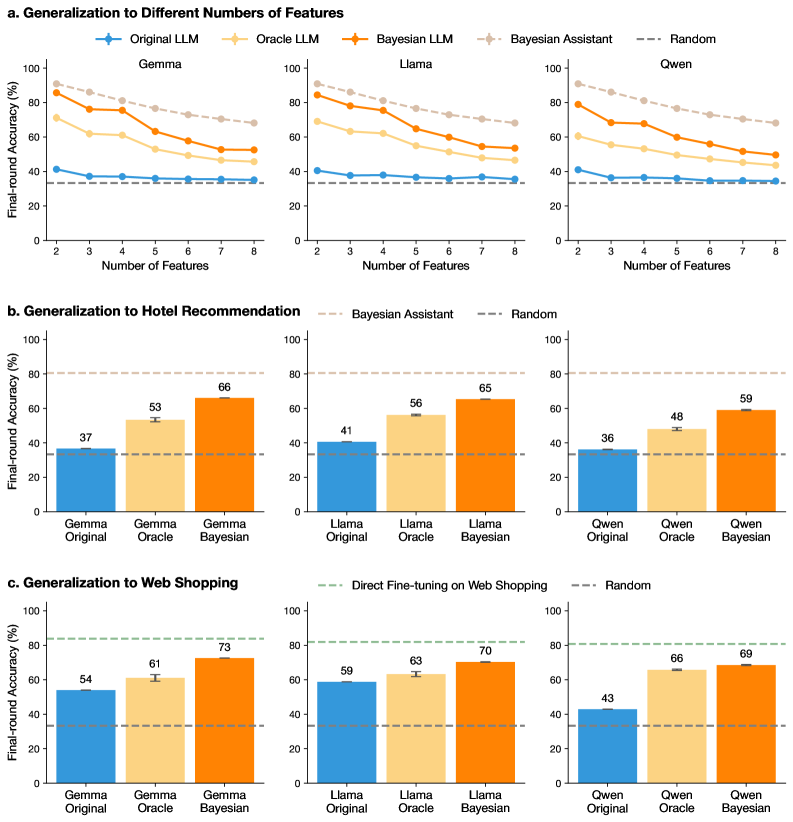

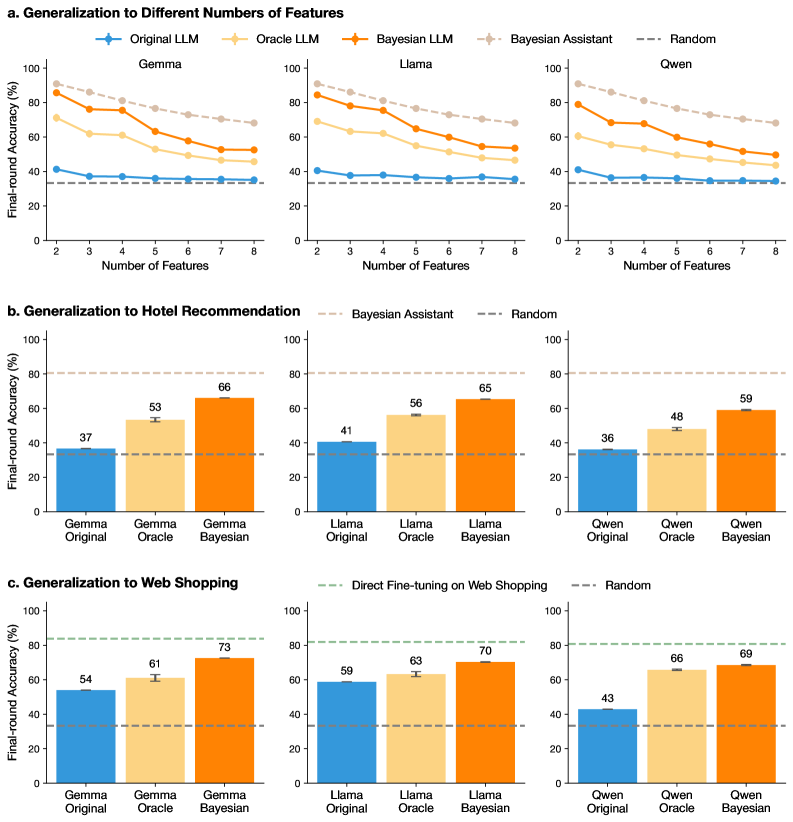

## Chart Type: Performance Comparison of Language Models

### Overview

The image presents a series of bar and line charts comparing the performance of different language models (Gemma, Llama, and Qwen) under various conditions. The charts are organized into three sections: generalization to different numbers of features, generalization to hotel recommendation, and generalization to web shopping. Each section compares the "Original," "Oracle," and "Bayesian" versions of each language model, along with baselines like "Bayesian Assistant" and "Random."

### Components/Axes

**General Components:**

* **Title:** The image is divided into three sections, each with a title: "a. Generalization to Different Numbers of Features," "b. Generalization to Hotel Recommendation," and "c. Generalization to Web Shopping."

* **Y-axis:** All charts share a common Y-axis labeled "Final-round Accuracy (%)" ranging from 0 to 100.

* **Legend:** Located at the top of the image, the legend identifies the different language model versions and baselines using color-coded lines and bars:

* Original LLM (Blue)

* Oracle LLM (Light Orange)

* Bayesian LLM (Orange)

* Bayesian Assistant (Light Gray, dashed line)

* Random (Dark Gray, dashed line)

**Section a: Generalization to Different Numbers of Features**

* **X-axis:** Labeled "Number of Features," ranging from 2 to 8.

* **Subplots:** Three subplots, one for each language model: Gemma, Llama, and Qwen.

* **Data Series:** Each subplot contains line plots for "Original LLM," "Oracle LLM," "Bayesian LLM," "Bayesian Assistant," and "Random."

**Section b: Generalization to Hotel Recommendation**

* **X-axis:** Categorical, with three categories for each language model: "Original," "Oracle," and "Bayesian."

* **Subplots:** Three subplots, one for each language model: Gemma, Llama, and Qwen.

* **Data Series:** Each subplot contains bar plots for "Original," "Oracle," and "Bayesian" versions of the language model.

**Section c: Generalization to Web Shopping**

* **X-axis:** Categorical, with three categories for each language model: "Original," "Oracle," and "Bayesian."

* **Subplots:** Three subplots, one for each language model: Gemma, Llama, and Qwen.

* **Data Series:** Each subplot contains bar plots for "Original," "Oracle," and "Bayesian" versions of the language model.

### Detailed Analysis

**Section a: Generalization to Different Numbers of Features**

* **Gemma:**

* Original LLM (Blue): Relatively flat line around 37% accuracy.

* Oracle LLM (Light Orange): Decreases from approximately 90% at 2 features to 55% at 8 features.

* Bayesian LLM (Orange): Decreases from approximately 95% at 2 features to 60% at 8 features.

* Bayesian Assistant (Light Gray, dashed line): Flat line around 80% accuracy.

* Random (Dark Gray, dashed line): Flat line around 37% accuracy.

* **Llama:**

* Original LLM (Blue): Relatively flat line around 41% accuracy.

* Oracle LLM (Light Orange): Decreases from approximately 80% at 2 features to 50% at 8 features.

* Bayesian LLM (Orange): Decreases from approximately 90% at 2 features to 55% at 8 features.

* Bayesian Assistant (Light Gray, dashed line): Flat line around 80% accuracy.

* Random (Dark Gray, dashed line): Flat line around 37% accuracy.

* **Qwen:**

* Original LLM (Blue): Relatively flat line around 36% accuracy.

* Oracle LLM (Light Orange): Decreases from approximately 75% at 2 features to 45% at 8 features.

* Bayesian LLM (Orange): Decreases from approximately 85% at 2 features to 50% at 8 features.

* Bayesian Assistant (Light Gray, dashed line): Flat line around 80% accuracy.

* Random (Dark Gray, dashed line): Flat line around 37% accuracy.

**Section b: Generalization to Hotel Recommendation**

* **Gemma:**

* Original (Blue): 37% accuracy.

* Oracle (Light Orange): 53% accuracy.

* Bayesian (Orange): 66% accuracy.

* **Llama:**

* Original (Blue): 41% accuracy.

* Oracle (Light Orange): 56% accuracy.

* Bayesian (Orange): 65% accuracy.

* **Qwen:**

* Original (Blue): 36% accuracy.

* Oracle (Light Orange): 48% accuracy.

* Bayesian (Orange): 59% accuracy.

**Section c: Generalization to Web Shopping**

* **Gemma:**

* Original (Blue): 54% accuracy.

* Oracle (Light Orange): 61% accuracy.

* Bayesian (Orange): 73% accuracy.

* **Llama:**

* Original (Blue): 59% accuracy.

* Oracle (Light Orange): 63% accuracy.

* Bayesian (Orange): 70% accuracy.

* **Qwen:**

* Original (Blue): 43% accuracy.

* Oracle (Light Orange): 66% accuracy.

* Bayesian (Orange): 69% accuracy.
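As a quick consistency check on the bar-chart readings in sections b and c, the sketch below stores the approximate values transcribed above and verifies the Bayesian > Oracle > Original ordering for every model. It then computes the Bayesian variant's gain over the Original one in percentage points. The dictionary layout and the function names are illustrative assumptions, not part of the source figure.

```python
"""Consistency check on approximate bar-chart readings (sections b and c)."""

# Approximate final-round accuracy (%) read off the bar charts above.
# The nested-dict layout is an illustrative choice, not taken from the source.
READINGS = {
    "Hotel Recommendation": {
        "Gemma": {"Original": 37, "Oracle": 53, "Bayesian": 66},
        "Llama": {"Original": 41, "Oracle": 56, "Bayesian": 65},
        "Qwen": {"Original": 36, "Oracle": 48, "Bayesian": 59},
    },
    "Web Shopping": {
        "Gemma": {"Original": 54, "Oracle": 61, "Bayesian": 73},
        "Llama": {"Original": 59, "Oracle": 63, "Bayesian": 70},
        "Qwen": {"Original": 43, "Oracle": 66, "Bayesian": 69},
    },
}


def ordering_holds(scores: dict[str, int]) -> bool:
    """Return True if Bayesian > Oracle > Original for one model on one task."""
    return scores["Bayesian"] > scores["Oracle"] > scores["Original"]


def gain_over_original(readings: dict) -> dict[tuple[str, str], int]:
    """Percentage-point gain of the Bayesian variant over the Original variant."""
    return {
        (task, model): scores["Bayesian"] - scores["Original"]
        for task, models in readings.items()
        for model, scores in models.items()
    }


if __name__ == "__main__":
    for task, models in READINGS.items():
        for model, scores in models.items():
            print(f"{task:<20} {model:<6} ordering holds: {ordering_holds(scores)}")
    for (task, model), gain in gain_over_original(READINGS).items():
        print(f"{task:<20} {model:<6} Bayesian gain: +{gain} points")
```

With these readings the ordering holds in all six model/task pairs, and the Bayesian gain over the Original variant ranges from 11 points (Llama, Web Shopping) to 29 points (Gemma, Hotel Recommendation).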

### Key Observations

* In the "Generalization to Different Numbers of Features" section, the "Original LLM" models maintain a relatively constant accuracy regardless of the number of features, while the "Oracle LLM" and "Bayesian LLM" models show a decreasing trend in accuracy as the number of features increases.

* In both the "Hotel Recommendation" and "Web Shopping" sections, the "Bayesian" versions of each language model consistently outperform the "Original" and "Oracle" versions.

* The "Bayesian Assistant" baseline in the "Generalization to Different Numbers of Features" section consistently achieves around 80% accuracy.

* The "Random" baseline consistently achieves around 37% accuracy across all sections.

* The "Direct Fine-tuning on Web Shopping" baseline in the "Web Shopping" section achieves around 80% accuracy.

### Interpretation

The data suggests that the "Oracle" and "Bayesian" versions of the language models are more sensitive to the number of features, as their accuracy decreases as the number of features increases. This could indicate that these models are more prone to overfitting or that they require more data to generalize effectively with a larger number of features.

The "Bayesian" versions of the language models consistently outperform the "Original" and "Oracle" versions in the "Hotel Recommendation" and "Web Shopping" tasks, suggesting that the Bayesian approach is more effective for these specific tasks.

The baselines provide a point of reference for evaluating the performance of the language models. The "Random" baseline indicates the expected accuracy of a random guess, while the "Bayesian Assistant" and "Direct Fine-tuning on Web Shopping" baselines represent the performance of alternative approaches.

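The error bars on the bar charts indicate the variability in the accuracy of the models. The error bars are relatively small, suggesting low variability across evaluation runs; on their own, however, they do not establish that the differences between variants are statistically significant.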


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Charts: Generalization Performance of LLMs

### Overview

The image presents three sets of charts comparing the performance of several Large Language Models (LLMs), namely Gemma, Llama, and Qwen, against baselines such as Random across different generalization tasks. The first set (a) examines performance as a function of the number of features used. The second (b) focuses on generalization to Hotel Recommendation, and the third (c) on Web Shopping. All charts use Final-round Accuracy (%) as the y-axis. Error bars are present in the bar charts.

### Components/Axes

* **X-axis (Chart a):** Number of Features (ranging from 2 to 8).

* **Y-axis (All Charts):** Final-round Accuracy (%) (ranging from 0 to 100).

* **Legends (Chart a):**

* Original LLM (Red Line)

* Oracle LLM (Blue Line)

* Bayesian Assistant (Purple Line)

* Random (Gray Dashed Line)

* **X-axis Labels (Charts b & c):** Gemma Original, Gemma Oracle, Gemma Bayesian, Llama Original, Llama Oracle, Llama Bayesian, Qwen Original, Qwen Oracle, Qwen Bayesian.

* **Legends (Charts b & c):**

* Bayesian Assistant (Charts b & c)

* Random (Charts b & c)

* Direct Fine-tuning on Web Shopping (Chart c)

### Detailed Analysis or Content Details

**a. Generalization to Different Numbers of Features**

* **Original LLM (Red):** Starts at approximately 70% accuracy with 2 features, dips to around 50% with 4 features, then rises to approximately 75% with 8 features.

* **Oracle LLM (Blue):** Starts at approximately 60% accuracy with 2 features, decreases to around 40% with 5 features, and then plateaus around 45% with 8 features.

* **Bayesian Assistant (Purple):** Starts at approximately 50% accuracy with 2 features, rises to a peak of around 70% with 6 features, and then declines to approximately 60% with 8 features.

* **Random (Gray Dashed):** Remains relatively constant around 20% accuracy across all feature numbers.

**b. Generalization to Hotel Recommendation**

* **Gemma Original:** Approximately 37% accuracy.

* **Gemma Oracle:** Approximately 53% accuracy.

* **Gemma Bayesian:** Approximately 66% accuracy.

* **Llama Original:** Approximately 41% accuracy.

* **Llama Oracle:** Approximately 56% accuracy.

* **Llama Bayesian:** Approximately 65% accuracy.

* **Qwen Original:** Approximately 36% accuracy.

* **Qwen Oracle:** Approximately 48% accuracy.

* **Qwen Bayesian:** Approximately 59% accuracy.

**c. Generalization to Web Shopping**

* **Gemma Original:** Approximately 54% accuracy.

* **Gemma Oracle:** Approximately 61% accuracy.

* **Gemma Bayesian:** Approximately 73% accuracy.

* **Llama Original:** Approximately 59% accuracy.

* **Llama Oracle:** Approximately 63% accuracy.

* **Llama Bayesian:** Approximately 70% accuracy.

* **Qwen Original:** Approximately 43% accuracy.

* **Qwen Oracle:** Approximately 66% accuracy.

* **Qwen Bayesian:** Approximately 69% accuracy.

### Key Observations

* The Bayesian Assistant consistently outperforms the Original and Oracle LLMs across all tasks.

* Performance does not change monotonically with the number of features (Chart a): the Original LLM dips at 4 features before rising again, the Oracle LLM declines and then plateaus, and the Bayesian Assistant peaks at 6 features before declining.

* The Random baseline consistently performs poorly, indicating that the LLMs are learning something beyond chance.

* The Oracle LLM generally performs better than the Original LLM, but not as well as the Bayesian Assistant.

* Gemma Bayesian consistently achieves the highest accuracy in the Hotel Recommendation and Web Shopping tasks.

### Interpretation

The data suggests that the Bayesian Assistant approach is the most effective for generalization in these tasks. The improvement over the Original and Oracle LLMs indicates that the Bayesian method is better at incorporating prior knowledge and handling uncertainty. The varying performance with different numbers of features (Chart a) suggests that there is an optimal level of complexity for each model and task. The consistent poor performance of the Random baseline validates the effectiveness of the LLMs. The fact that the Oracle LLM performs better than the Original LLM suggests that providing access to additional information (the "oracle") can improve performance, but the Bayesian Assistant is still superior. The differences in performance between the models on different tasks (Hotel Recommendation vs. Web Shopping) suggest that the optimal model architecture and training data may vary depending on the specific application. The error bars in charts b and c indicate the variability of the results, and further statistical analysis would be needed to determine the significance of the observed differences.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Multi-Chart Figure: Generalization Performance of LLM Variants

### Overview

The image is a composite figure containing three distinct sections (labeled a, b, and c), each presenting performance comparisons of three Large Language Models (Gemma, Llama, Qwen) across different tasks. The charts evaluate "Final-round Accuracy (%)" for different model variants: Original, Oracle, and Bayesian. The overall theme is assessing how these models generalize to tasks with varying feature counts and to new domains (Hotel Recommendation, Web Shopping).

### Components/Axes

**Common Elements Across All Charts:**

* **Y-Axis:** "Final-round Accuracy (%)" ranging from 0 to 100.

* **Models Compared:** Gemma, Llama, Qwen (each in its own sub-chart within a section).

* **Model Variants (Legend):**

* **Original LLM** (Blue line/bar)

* **Oracle LLM** (Light yellow/beige line/bar)

* **Bayesian LLM** (Orange line/bar)

* **Baselines (Dashed Lines):**

* **Random** (Gray dashed line, ~33% accuracy)

* **Bayesian Assistant** (Light brown dashed line; ~80% accuracy in section b, a declining line in section a)

* **Direct Fine-tuning on Web Shopping** (Green dashed line, ~82% accuracy in section c only)

**Section-Specific Components:**

**a. Generalization to Different Numbers of Features**

* **Chart Type:** Line charts.

* **X-Axis:** "Number of Features" with discrete markers at 2, 3, 4, 5, 6, 7, 8.

* **Legend:** Located at the top of the section, spanning all three sub-charts. Contains five entries: Original LLM, Oracle LLM, Bayesian LLM, Bayesian Assistant, Random.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

**b. Generalization to Hotel Recommendation**

* **Chart Type:** Bar charts.

* **X-Axis Categories (per sub-chart):** "[Model] Original", "[Model] Oracle", "[Model] Bayesian".

* **Legend:** Located at the top of the section. Contains two entries: Bayesian Assistant, Random.

* **Data Labels:** Numerical accuracy values are printed directly above each bar.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

**c. Generalization to Web Shopping**

* **Chart Type:** Bar charts.

* **X-Axis Categories (per sub-chart):** "[Model] Original", "[Model] Oracle", "[Model] Bayesian".

* **Legend:** Located at the top of the section. Contains two entries: Direct Fine-tuning on Web Shopping, Random.

* **Data Labels:** Numerical accuracy values are printed directly above each bar.

* **Spatial Layout:** Three sub-charts arranged horizontally for Gemma (left), Llama (center), Qwen (right).

### Detailed Analysis

**a. Generalization to Different Numbers of Features (Line Charts)**

* **Trend Verification:** For all models and variants, accuracy generally **slopes downward** as the number of features increases from 2 to 8. The Bayesian Assistant line stays highest throughout, declining from ~90% to ~68%.

* **Gemma (Left Sub-chart):**

* **Original LLM (Blue):** Starts at ~41% (2 features), declines slightly to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~68% (2 features), declines to ~46% (8 features).

* **Bayesian LLM (Orange):** Starts at ~85% (2 features), declines to ~52% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.

* **Llama (Center Sub-chart):**

* **Original LLM (Blue):** Starts at ~40% (2 features), declines to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~68% (2 features), declines to ~46% (8 features).

* **Bayesian LLM (Orange):** Starts at ~84% (2 features), declines to ~53% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.

* **Qwen (Right Sub-chart):**

* **Original LLM (Blue):** Starts at ~41% (2 features), declines to ~35% (8 features).

* **Oracle LLM (Light Yellow):** Starts at ~60% (2 features), declines to ~44% (8 features).

* **Bayesian LLM (Orange):** Starts at ~78% (2 features), declines to ~49% (8 features).

* **Bayesian Assistant (Light Brown Dashed):** Starts at ~90% (2 features), declines to ~68% (8 features).

* **Random (Gray Dashed):** Constant at ~33%.
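The start and end readings for section (a) above can be reduced to a per-variant decline, both in percentage points and as a fraction of the starting accuracy. The values below are the approximate readings listed above; the helper name `decline` is an illustrative assumption.

```python
"""Per-variant accuracy decline from 2 to 8 features (approximate readings)."""

# (accuracy at 2 features, accuracy at 8 features), in percent.
ENDPOINTS = {
    "Gemma": {"Original LLM": (41, 35), "Oracle LLM": (68, 46),
              "Bayesian LLM": (85, 52), "Bayesian Assistant": (90, 68)},
    "Llama": {"Original LLM": (40, 35), "Oracle LLM": (68, 46),
              "Bayesian LLM": (84, 53), "Bayesian Assistant": (90, 68)},
    "Qwen": {"Original LLM": (41, 35), "Oracle LLM": (60, 44),
             "Bayesian LLM": (78, 49), "Bayesian Assistant": (90, 68)},
}


def decline(start: int, end: int) -> tuple[int, float]:
    """Return (drop in percentage points, drop as a fraction of the start value)."""
    drop = start - end
    return drop, drop / start


if __name__ == "__main__":
    for model, variants in ENDPOINTS.items():
        for variant, (start, end) in variants.items():
            points, fraction = decline(start, end)
            print(f"{model:<6} {variant:<18} -{points:>2} points ({fraction:.0%} of start)")
```

On these readings the Bayesian LLM has the largest absolute drop of the three LLM variants (29 to 33 points), yet it still ends above the Oracle and Original LLMs at 8 features for every model.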

**b. Generalization to Hotel Recommendation (Bar Charts)**

* **Gemma (Left Sub-chart):**

* Original: 37%

* Oracle: 53%

* Bayesian: 66%

* **Llama (Center Sub-chart):**

* Original: 41%

* Oracle: 56%

* Bayesian: 65%

* **Qwen (Right Sub-chart):**

* Original: 36%

* Oracle: 48%

* Bayesian: 59%

* **Baselines:** Bayesian Assistant (~80%) and Random (~33%) are shown as horizontal dashed lines across all sub-charts.

**c. Generalization to Web Shopping (Bar Charts)**

* **Gemma (Left Sub-chart):**

* Original: 54%

* Oracle: 61%

* Bayesian: 73%

* **Llama (Center Sub-chart):**

* Original: 59%

* Oracle: 63%

* Bayesian: 70%

* **Qwen (Right Sub-chart):**

* Original: 43%

* Oracle: 66%

* Bayesian: 69%

* **Baselines:** Direct Fine-tuning on Web Shopping (~82%) and Random (~33%) are shown as horizontal dashed lines across all sub-charts.

### Key Observations

1. **Consistent Hierarchy:** Across all tasks and models, the performance hierarchy is consistent: **Bayesian LLM > Oracle LLM > Original LLM**. All variants outperform the Random baseline.

2. **Task Difficulty:** The "Number of Features" task (section a) shows a clear negative correlation between feature count and accuracy for all model variants. The "Hotel Recommendation" task (section b) appears more challenging than "Web Shopping" (section c), as indicated by lower overall accuracy scores.

3. **Model Comparison:** Gemma and Llama generally show similar performance patterns. Qwen's Original model often starts lower but its Oracle and Bayesian variants show significant gains, particularly in the Web Shopping task.

4. **Baseline Comparison:** The specialized baselines (Bayesian Assistant, Direct Fine-tuning) consistently achieve the highest accuracy (~80-82%), setting an upper benchmark that the Bayesian LLM variants approach but do not surpass in these evaluations.
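Observation 4 can be quantified by subtracting each Bayesian LLM bar from the dashed baseline it is compared with. The sketch below uses the approximate levels stated above (Bayesian Assistant ~80% in section b, Direct Fine-tuning on Web Shopping ~82% in section c, Random ~33%); treating those dashed lines as exact constants is an assumption, as are the function names.

```python
"""Distance of each Bayesian LLM bar from the dashed baselines (sections b and c)."""

# Approximate dashed-line levels (%), treated here as exact constants (an assumption).
UPPER_BASELINE = {
    "Hotel Recommendation": ("Bayesian Assistant", 80),
    "Web Shopping": ("Direct Fine-tuning on Web Shopping", 82),
}
RANDOM = 33

# Approximate Bayesian LLM bar heights (%).
BAYESIAN_LLM = {
    "Hotel Recommendation": {"Gemma": 66, "Llama": 65, "Qwen": 59},
    "Web Shopping": {"Gemma": 73, "Llama": 70, "Qwen": 69},
}


def gap_to_upper(task: str) -> dict[str, int]:
    """Points by which the upper baseline exceeds each model's Bayesian LLM bar."""
    _, level = UPPER_BASELINE[task]
    return {model: level - acc for model, acc in BAYESIAN_LLM[task].items()}


def margin_over_random(task: str) -> dict[str, int]:
    """Points by which each model's Bayesian LLM bar exceeds the Random baseline."""
    return {model: acc - RANDOM for model, acc in BAYESIAN_LLM[task].items()}


if __name__ == "__main__":
    for task, (name, level) in UPPER_BASELINE.items():
        print(f"{task}: below {name} (~{level}%) by {gap_to_upper(task)}")
        print(f"{task}: above Random (~{RANDOM}%) by {margin_over_random(task)}")
```

With these numbers the Bayesian LLM sits 14 to 21 points below the Bayesian Assistant on Hotel Recommendation and 9 to 13 points below direct fine-tuning on Web Shopping, while staying 26 to 40 points above Random.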

### Interpretation

The data demonstrates the effectiveness of Bayesian methods in improving the generalization capability of LLMs. The **Bayesian LLM** variant consistently provides a substantial accuracy boost over the **Original LLM** and even the **Oracle LLM** (which likely has access to some privileged information). This suggests that incorporating Bayesian principles helps models better handle uncertainty and adapt to new tasks or more complex feature spaces.

The downward trend in section (a) indicates that all models struggle as the decision problem becomes more complex (more features). The Bayesian LLM shows the largest absolute drop of the three LLM variants but remains the most accurate of them at every feature count. The strong performance of the "Bayesian Assistant" and "Direct Fine-tuning" baselines highlights that task-specific optimization yields the best results, but the Bayesian LLM offers a powerful general-purpose improvement without such specialized tuning.

The variation between models (e.g., Qwen's lower Original score in Web Shopping) suggests that the base model's pre-training or architecture influences its starting point, but the relative gains from the Oracle and Bayesian methods are robust across different model families. This implies the Bayesian framework is a broadly applicable technique for enhancing LLM performance in decision-making and generalization tasks.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Generalization to Different Numbers of Features

### Overview

Three line graphs, one each for Gemma, Llama, and Qwen, compare final-round accuracy as the number of features increases. The series shown are Original LLM, Oracle LLM, Bayesian LLM, Bayesian Assistant, and a Random baseline.

### Components/Axes

- **X-axis**: Number of Features (2–8)

- **Y-axis**: Final-round Accuracy (%)

- **Legends**:

- Blue: Original LLM

- Yellow: Oracle LLM

- Orange: Bayesian LLM

- Pink: Bayesian Assistant

- Dashed Gray: Random

### Detailed Analysis

1. **Gemma (Top-Left Graph)**

- **Trend**: All models decline in accuracy as features increase. Bayesian LLM (orange) starts highest (~85%) and declines to ~60% at 8 features, while Original LLM (blue) starts at ~40% and plateaus.

- **Data Points**:

- 2 features: Bayesian LLM 85%, Oracle LLM 70%, Original LLM 40%

- 8 features: Bayesian LLM 60%, Oracle LLM 50%, Original LLM 35%

2. **Llama (Top-Center Graph)**

- **Trend**: Similar decline, but Bayesian LLM maintains higher accuracy (~80% at 2 features vs. ~38% for Original LLM).

- **Data Points**:

- 2 features: Bayesian LLM 80%, Oracle LLM 65%, Original LLM 38%

- 8 features: Bayesian LLM 65%, Oracle LLM 55%, Original LLM 37%

3. **Qwen (Top-Right Graph)**

- **Trend**: Bayesian LLM starts at ~85% (2 features) and declines to ~60% (8 features). Original LLM starts at ~40% and drops to ~30%.

- **Data Points**:

- 2 features: Bayesian LLM 85%, Oracle LLM 70%, Original LLM 40%

- 8 features: Bayesian LLM 60%, Oracle LLM 50%, Original LLM 30%

### Key Observations

- Bayesian models consistently outperform the other variants at every feature count.

- Oracle LLM performs better than Original LLM but lags behind Bayesian models.

- Random baseline remains flat (~30–40%) across all three models.

### Interpretation

Bayesian models demonstrate superior generalization, maintaining the highest accuracy at every feature count. Oracle models trail Bayesian models throughout; both decline by 10 to 25 points between 2 and 8 features, with Bayesian models losing about 5 points more, so the Bayesian lead narrows slightly (from about 15 to about 10 points) but never disappears. The Random baseline’s flat performance indicates that all models outperform chance.

---

## Bar Charts: Generalization to Hotel Recommendation & Web Shopping

### Overview

Bar charts compare model performance (Gemma, Llama, Qwen) in specific tasks (Hotel Recommendation, Web Shopping) using Original, Oracle, and Bayesian variants.

### Components/Axes

- **X-axis**: Model Variants (Original, Oracle, Bayesian)

- **Y-axis**: Final-round Accuracy (%)

- **Legends**:

- Blue: Original

- Yellow: Oracle

- Orange: Bayesian

### Detailed Analysis

1. **Hotel Recommendation (Bottom-Left Graph)**

- **Trend**: Bayesian models dominate:

- Gemma Bayesian: 66%

- Llama Bayesian: 65%

- Qwen Bayesian: 59%

- **Original/Oracle**:

- Gemma Original: 37%, Oracle: 53%

- Llama Original: 41%, Oracle: 56%

- Qwen Original: 36%, Oracle: 48%

2. **Web Shopping (Bottom-Center Graph)**

- **Trend**: Bayesian models again lead:

- Gemma Bayesian: 73%

- Llama Bayesian: 70%

- Qwen Bayesian: 69%

- **Original/Oracle**:

- Gemma Original: 54%, Oracle: 61%

- Llama Original: 59%, Oracle: 63%

- Qwen Original: 43%, Oracle: 66%

### Key Observations

- Bayesian models achieve **3–12 percentage points** higher accuracy than Oracle models in Web Shopping.

- In Hotel Recommendation, Bayesian models outperform Oracle by **9–13 percentage points**.

- Original models perform poorly compared to Oracle and Bayesian variants.
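The size of the Bayesian-over-Oracle advantage depends on whether it is expressed in percentage points or relative to the Oracle score. The sketch below computes both from the bar values listed above; the function name `advantage` and the tuple layout are illustrative assumptions.

```python
"""Bayesian vs. Oracle advantage in percentage points and in relative terms."""

# (Oracle accuracy, Bayesian accuracy) in percent, approximate bar readings.
HOTEL = {"Gemma": (53, 66), "Llama": (56, 65), "Qwen": (48, 59)}
WEB_SHOPPING = {"Gemma": (61, 73), "Llama": (63, 70), "Qwen": (66, 69)}


def advantage(task: dict[str, tuple[int, int]]) -> dict[str, tuple[int, float]]:
    """Map model -> (Bayesian minus Oracle in points, that gap divided by Oracle)."""
    return {
        model: (bayes - oracle, (bayes - oracle) / oracle)
        for model, (oracle, bayes) in task.items()
    }


if __name__ == "__main__":
    for name, task in [("Hotel Recommendation", HOTEL), ("Web Shopping", WEB_SHOPPING)]:
        for model, (points, relative) in advantage(task).items():
            print(f"{name:<20} {model:<6} +{points:>2} points ({relative:.1%} relative)")
```

Here the Web Shopping advantage is 3 to 12 points (about 5% to 20% relative), and the Hotel Recommendation advantage is 9 to 13 points (about 16% to 25% relative).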

### Interpretation

Bayesian models excel in domain-specific tasks (Hotel Recommendation, Web Shopping), likely due to their probabilistic reasoning. Oracle models act as strong baselines but lack the adaptability of Bayesian approaches. Because the Original and Oracle variants share the same base model, the gap between them points to the effect of how the model is trained rather than to model architecture.

---

## General Trends Across All Charts

1. **Bayesian Superiority**: Bayesian models consistently outperform others, suggesting their probabilistic framework enhances generalization.

2. **Feature Complexity Trade-off**: Accuracy declines as features increase; Bayesian models lose slightly more in absolute terms than Oracle models but remain the most accurate at every feature count.

3. **Oracle as Midpoint**: Oracle models bridge the gap between Original and Bayesian but fail to match Bayesian adaptability.

4. **Random Baseline**: All models outperform chance (30–40%), validating their utility.

## Conclusion

Among the variants tested, Bayesian models demonstrate the best generalization across tasks and feature complexity, making them promising candidates for dynamic, real-world applications. Oracle models serve as strong benchmarks, while Original models require significant improvement for practical use. The data underscores the value of probabilistic reasoning in machine learning systems.
