## Diagram: Convolutional Layer Configuration

### Overview

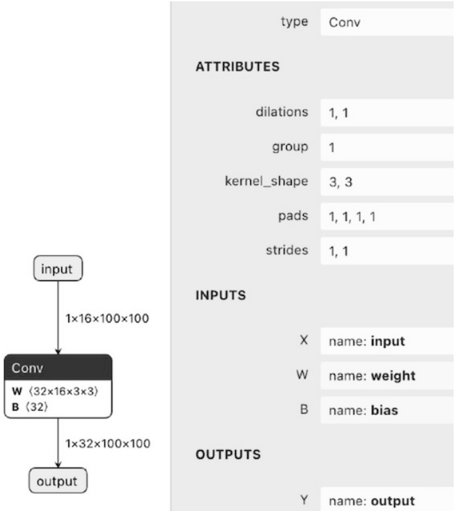

The image displays a technical diagram of a convolutional layer (Conv) within a neural network architecture. It consists of two primary sections: a visual flowchart on the left illustrating the data flow and tensor dimensions, and a detailed attribute table on the right specifying the layer's parameters and I/O definitions.

### Components/Axes

**Left Section - Flowchart:**

* **Nodes:** Three rectangular nodes connected by directional arrows.

    * Top Node: Labeled `input`.

    * Middle Node: Labeled `Conv` with sub-labels `W (32×16×3×3)` and `B (32)`.

    * Bottom Node: Labeled `output`.

* **Connections & Labels:**

    * Arrow from `input` to `Conv`: Labeled with tensor dimensions `1×16×100×100`.

    * Arrow from `Conv` to `output`: Labeled with tensor dimensions `1×32×100×100`.

**Right Section - Attribute Table:**

The table consists of a header row followed by three sections, each labeled in bold uppercase.

* **Header:** `type` with value `Conv`.

* **Section 1: ATTRIBUTES**

    * `dilations`: `1, 1`

    * `group`: `1`

    * `kernel_shape`: `3, 3`

    * `pads`: `1, 1, 1, 1`

    * `strides`: `1, 1`

* **Section 2: INPUTS**

    * `X`: `name: input`

    * `W`: `name: weight`

    * `B`: `name: bias`

* **Section 3: OUTPUTS**

    * `Y`: `name: output`

### Detailed Analysis

The diagram provides a complete specification for a 2D convolution operation.

**Flowchart Analysis:**

* The input tensor has a shape of `(batch_size=1, channels=16, height=100, width=100)`.

* The convolutional layer (`Conv`) applies a weight kernel (`W`) of shape `(output_channels=32, input_channels=16, kernel_height=3, kernel_width=3)` and a bias vector (`B`) of length `32`.

* The output tensor shape is `(1, 32, 100, 100)`. The spatial dimensions (height and width) remain unchanged at 100x100, which is consistent with the provided padding and stride parameters.

**Attribute Table Analysis:**

* **Kernel & Stride:** The convolution uses a `3x3` kernel (`kernel_shape`) with a stride of `1` in both dimensions (`strides: 1, 1`).

* **Padding:** Padding of `1` is applied to all four sides of the input (`pads: 1, 1, 1, 1`), which explains why the output spatial dimensions (100x100) match the input dimensions despite the 3x3 kernel.

* **Dilation & Groups:** Dilation is `1` in both dimensions (`dilations: 1, 1`), so the kernel taps are contiguous. The operation uses a single group (`group: 1`), so every output channel is computed from all 16 input channels. Together these describe an ordinary dense convolution rather than a dilated, grouped, or depthwise one.

* **I/O Mapping:** The table maps the operator's formal parameter names (`X`, `W`, `B`, `Y`) to the named tensors shown in the flowchart:

    * `input` (X) corresponds to the input feature map.

    * `weight` (W) corresponds to the convolutional kernel.

    * `bias` (B) corresponds to the additive bias term.

    * `output` (Y) corresponds to the output feature map.
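The shape and attribute relationships described above can be checked mechanically. The following is a minimal sketch, not part of the diagram: the `ConvSpec` class and `check_spec` function are hypothetical names introduced here for illustration, and the weight layout `(C_out, C_in / group, kH, kW)` is the convention implied by the shapes shown in the flowchart.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvSpec:
    """Attributes and tensor shapes transcribed from the diagram (hypothetical container)."""
    dilations: tuple = (1, 1)
    group: int = 1
    kernel_shape: tuple = (3, 3)
    pads: tuple = (1, 1, 1, 1)
    strides: tuple = (1, 1)
    input_shape: tuple = (1, 16, 100, 100)  # N, C_in, H, W
    weight_shape: tuple = (32, 16, 3, 3)    # C_out, C_in / group, kH, kW (assumed layout)
    bias_shape: tuple = (32,)


def check_spec(spec: ConvSpec) -> list:
    """Return a list of human-readable inconsistencies (empty if none are found)."""
    problems = []
    c_out, c_in_per_group, k_h, k_w = spec.weight_shape
    c_in = spec.input_shape[1]
    if c_in_per_group * spec.group != c_in:
        problems.append("weight input channels do not match input channels")
    if (k_h, k_w) != tuple(spec.kernel_shape):
        problems.append("kernel_shape does not match weight spatial dims")
    if c_out % spec.group != 0:
        problems.append("output channels are not divisible by group")
    if spec.bias_shape != (c_out,):
        problems.append("bias length does not match output channels")
    return problems


print(check_spec(ConvSpec()))  # → []
```

An empty list means the weight, bias, and attribute values agree with one another; changing `group` to `2` without halving the weight's second dimension, for example, would be reported as a channel mismatch.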

### Key Observations

1. **Dimensional Consistency:** The output spatial size (100x100) is preserved from the input. This is a direct result of applying symmetric padding (`pads: 1, 1, 1, 1`) with a 3x3 kernel and stride 1. For dilation 1, the formula `output_size = floor((input_size - kernel_size + 2*padding) / stride) + 1` yields `(100 - 3 + 2*1)/1 + 1 = 100`.

2. **Channel Transformation:** The layer transforms the input from 16 channels to 32 channels, as indicated by the weight kernel's first dimension (`32`) and the output tensor's second dimension (`32`).

3. **Parameter Count:** The total number of trainable parameters for this layer can be calculated from the provided shapes: `(32 * 16 * 3 * 3) + 32 = 4,640` parameters (weights + biases).
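The arithmetic in the observations above can be reproduced with a short sketch. The function names `conv_output_size` and `conv_param_count` are illustrative, not taken from any library. The size formula is written in its general form, with dilation and separate begin/end padding, which reduces to the formula quoted above when dilation is 1 and padding is symmetric.

```python
def conv_output_size(size, kernel, pad_begin, pad_end, stride=1, dilation=1):
    """Output length along one spatial axis of a convolution."""
    # A dilated kernel spans dilation * (kernel - 1) + 1 input positions.
    effective_kernel = dilation * (kernel - 1) + 1
    return (size + pad_begin + pad_end - effective_kernel) // stride + 1


def conv_param_count(c_out, c_in, k_h, k_w, group=1, bias=True):
    """Number of trainable values in the weight tensor plus the optional bias."""
    weights = c_out * (c_in // group) * k_h * k_w
    return weights + (c_out if bias else 0)


print(conv_output_size(100, kernel=3, pad_begin=1, pad_end=1))  # → 100
print(conv_param_count(32, 16, 3, 3))                           # → 4640
```

Dropping the padding (`pad_begin=0, pad_end=0`) gives 98 instead of 100, which shows how the `pads` attribute is what keeps the spatial size unchanged.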

### Interpretation

This diagram is a precise technical specification for a single convolutional layer, likely taken from a model definition in an exchange format such as ONNX (Open Neural Network Exchange), whose `Conv` operator uses these same attribute names. It serves as a blueprint for implementation.

* **Function:** The layer produces 32 output feature maps from the 16-channel input using 3x3 spatial filters. The padding keeps the feature map's spatial resolution unchanged, which is common in VGG-style stacks, in many U-Net implementations, and wherever layers are stacked without immediate downsampling.

* **Relationship Between Elements:** The flowchart provides a high-level, intuitive view of data transformation (tensor shapes), while the attribute table contains the exact, low-level parameters required to compute that transformation. They are two representations of the same operation.

* **Notable Implication:** The use of `group=1` and `dilations=1` indicates this is a standard, non-specialized convolution. The configuration is straightforward and represents a fundamental building block in convolutional neural networks (CNNs) for tasks like image processing.
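To make the operation concrete, here is a deliberately naive reference sketch in pure Python. It assumes the configuration shown in the diagram (stride 1, dilation 1, one group, symmetric zero padding) and omits the batch dimension. The function name `conv2d_naive` and the nested-list tensor layout are illustrative choices, and a real implementation would use an optimized library. Like most deep learning operators called "convolution", it does not flip the kernel, so it is technically a cross-correlation.

```python
def conv2d_naive(x, w, b, pad=1):
    """Naive 2D convolution for one sample.

    x: [C_in][H][W], w: [C_out][C_in][kH][kW], b: [C_out].
    Assumes stride 1, dilation 1, group 1, and symmetric zero padding.
    """
    c_in, h, wd = len(x), len(x[0]), len(x[0][0])
    k_h, k_w = len(w[0][0]), len(w[0][0][0])
    out_h = h + 2 * pad - k_h + 1
    out_w = wd + 2 * pad - k_w + 1
    y = []
    for o in range(len(w)):
        # Start each output plane from the bias for that channel.
        plane = [[b[o]] * out_w for _ in range(out_h)]
        for c in range(c_in):
            for i in range(out_h):
                for j in range(out_w):
                    acc = 0.0
                    for di in range(k_h):
                        for dj in range(k_w):
                            r, s = i + di - pad, j + dj - pad
                            # Positions outside the input count as zero padding.
                            if 0 <= r < h and 0 <= s < wd:
                                acc += x[c][r][s] * w[o][c][di][dj]
                    plane[i][j] += acc
        y.append(plane)
    return y


# Tiny stand-in for the diagram's tensors: 2 input channels, 3 output channels, 4x4 input.
x = [[[1.0] * 4 for _ in range(4)] for _ in range(2)]
w = [[[[1.0] * 3 for _ in range(3)] for _ in range(2)] for _ in range(3)]
b = [0.0, 0.0, 0.0]
y = conv2d_naive(x, w, b)
print(len(y), len(y[0]), len(y[0][0]))  # → 3 4 4
print(y[0][1][1], y[0][0][0])           # → 18.0 8.0
```

The output keeps the 4x4 spatial size, mirroring how the diagram's layer keeps 100x100. An interior value sums all 9 taps over 2 channels (18), while a corner value sees only 4 non-padded taps per channel (8).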