## Line Chart: Maze - State Prediction Accuracy by Layer Index

### Overview

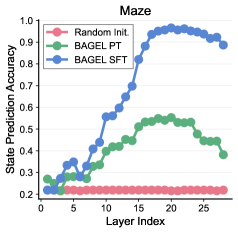

The image is a line chart titled "Maze" that plots "State Prediction Accuracy" against "Layer Index" for three different model initialization or training methods. The chart compares the performance of a randomly initialized model against two variants of a model named "BAGEL" (FT and SFT) across 26 layers (indexed 0-25).

### Components/Axes

* **Chart Title:** "Maze" (centered at the top).

* **Y-Axis:**

    * **Label:** "State Prediction Accuracy" (vertical, left side).

    * **Scale:** Linear, ranging from 0.2 to 1.0, with major tick marks at 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **X-Axis:**

    * **Label:** "Layer Index" (horizontal, bottom).

    * **Scale:** Linear, ranging from 0 to 25, with major tick marks at intervals of 5 (0, 5, 10, 15, 20, 25).

* **Legend:** Located in the top-left corner of the plot area. It contains three entries:

    1. **Random Init.** - Represented by a red line with circular markers.

    2. **BAGEL FT** - Represented by a green line with circular markers.

    3. **BAGEL SFT** - Represented by a blue line with circular markers.

### Detailed Analysis

The chart displays three distinct data series, each showing a different trend in accuracy across the layers.

1. **Random Init. (Red Line):**

    * **Trend:** The line is essentially flat, showing no meaningful improvement across layers.

    * **Data Points:** The accuracy starts at approximately 0.2 at Layer Index 0 and remains constant at ~0.2 for all layers up to 25. This serves as a baseline.

2. **BAGEL FT (Green Line):**

    * **Trend:** The line rises gradually and modestly, peaks in the later layers (18-20), and then declines.

    * **Data Points:**

        * Starts at ~0.25 at Layer 0.

        * Increases slowly, reaching ~0.4 at Layer 10.

        * Peaks at approximately 0.55 between Layers 18-20.

        * Declines after Layer 20, ending at approximately 0.4 at Layer 25.

3. **BAGEL SFT (Blue Line):**

    * **Trend:** The line shows a strong, sigmoidal (S-shaped) growth pattern. It starts low, experiences a period of rapid increase, and then plateaus at a high accuracy level.

    * **Data Points:**

        * Starts at ~0.2 at Layer 0, similar to the random baseline.

        * Begins a steep ascent around Layer 5.

        * Crosses 0.5 accuracy near Layer 10.

        * Reaches a plateau near its peak accuracy of approximately 0.95 around Layer 18.

        * Maintains this high accuracy (~0.95) through Layer 25, with a very slight downward trend in the final layers.
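
The approximate readings listed above can be recorded as sparse anchor points and compared numerically. The following is a minimal sketch, not the chart's underlying data: the values are visual estimates taken from this description, the straight-line interpolation between anchors is an assumption (the BAGEL SFT curve is S-shaped, so values between anchors are rough), and the names `READINGS`, `interpolate`, and `gap` are hypothetical.

```python
# Approximate (layer, accuracy) readings quoted in the description.
# These are visual estimates, NOT exact values from the plotted data.
READINGS = {
    "Random Init.": [(0, 0.20), (25, 0.20)],
    "BAGEL FT": [(0, 0.25), (10, 0.40), (18, 0.55), (20, 0.55), (25, 0.40)],
    "BAGEL SFT": [(0, 0.20), (10, 0.50), (18, 0.95), (25, 0.95)],
}


def interpolate(points, layer):
    """Piecewise-linear estimate of accuracy at `layer` (an assumption)."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= layer <= x1:
            t = (layer - x0) / (x1 - x0)
            return y0 + t * (y1 - y0)
    raise ValueError(f"layer {layer} is outside the range of the readings")


def gap(series_a, series_b, layer):
    """Estimated accuracy difference between two series at one layer."""
    return interpolate(READINGS[series_a], layer) - interpolate(READINGS[series_b], layer)


if __name__ == "__main__":
    # Gap between the two BAGEL variants at the start of the SFT plateau:
    # 0.95 - 0.55, i.e. approximately 0.40.
    print(round(gap("BAGEL SFT", "BAGEL FT", 18), 2))
```

Only layers with an accuracy stated in the description are used as anchors; Layer 5, where the SFT ascent begins, has no stated value and is therefore left out.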

### Key Observations

* **Performance Hierarchy:** There is a clear and significant performance gap. BAGEL SFT dramatically outperforms both BAGEL FT and the Random Init. baseline, especially in deeper layers (index >10).

* **Layer Sensitivity:** The effectiveness of the BAGEL models is highly dependent on the layer index. The most substantial gains for BAGEL SFT occur between layers 5 and 15.

* **Peak Performance Layer:** Both BAGEL variants achieve their peak accuracy in the later layers (18-20), but BAGEL SFT's peak is much higher and more sustained.

* **Baseline Comparison:** The red line stays flat at ~0.2, so the randomly initialized model shows no gain in state prediction accuracy at any depth. The accuracy the BAGEL models reach above this level is therefore attributable to their training/fine-tuning rather than to the architecture alone.

### Interpretation

This chart likely visualizes the internal representational quality of different neural network models (or different training stages of the same model) on a "Maze" state prediction task. The "Layer Index" suggests we are looking at the output or intermediate representations from successive layers of a deep network.

* **What the data suggests:** The "BAGEL SFT" (likely Supervised Fine-Tuning) method is highly effective at teaching the model to predict maze states, with representations becoming progressively more informative through the network's depth until they saturate at a high accuracy. "BAGEL FT" (likely Fine-Tuning) provides only a modest improvement over random, suggesting this training method is less effective for this specific task or may be optimizing for a different objective.

* **How elements relate:** The layer-wise progression shows how information is transformed and refined within the network. All three models have low accuracy in the early layers (0-5), which suggests that these layers encode mostly basic features. Task-specific, high-level representations form in the mid-to-late layers, most successfully for BAGEL SFT.

* **Notable anomalies/trends:** Both BAGEL curves decline in the final layers, but to different degrees: BAGEL FT falls from ~0.55 to ~0.4 after Layer 20, while BAGEL SFT dips only slightly. This could indicate over-smoothing, a degradation of specialized features, or final layers that are optimized for a part of the overall model pipeline that this state prediction probe does not measure. The large gap between the FT and SFT curves suggests that the training methodology strongly affects how well maze state can be read out of the representations.
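
The interpretation above assumes that each point on the chart is the accuracy of a probe trained on one layer's representations. The chart does not say how the probe was built, so the following is only an illustrative sketch of layer-wise probing in general: it uses synthetic features instead of real hidden states, a nearest-centroid classifier as a stand-in for whatever probe was actually used, and hypothetical constants (`NUM_CLASSES = 5` is chosen only because it gives a 0.2 chance level).

```python
import random

NUM_CLASSES = 5   # hypothetical: 5 classes gives a chance level of 0.2
NUM_LAYERS = 26   # layers indexed 0-25, as on the chart's x-axis
DIM = 8           # hypothetical feature width (must be >= NUM_CLASSES)


def make_features(rng, label, strength):
    """Synthetic 'hidden state': one-hot class signal scaled by
    `strength`, plus unit Gaussian noise on every dimension."""
    return [strength * (1.0 if d == label else 0.0) + rng.gauss(0.0, 1.0)
            for d in range(DIM)]


def probe_accuracy(rng, strength, n_train=200, n_test=200):
    """Fit a nearest-centroid probe on synthetic features and return
    its accuracy on freshly sampled test features."""
    sums = [[0.0] * DIM for _ in range(NUM_CLASSES)]
    counts = [0] * NUM_CLASSES
    for i in range(n_train):
        y = i % NUM_CLASSES  # balanced labels
        x = make_features(rng, y, strength)
        counts[y] += 1
        for d in range(DIM):
            sums[y][d] += x[d]
    centroids = [[s / c for s in row] for row, c in zip(sums, counts)]

    correct = 0
    for i in range(n_test):
        y = i % NUM_CLASSES
        x = make_features(rng, y, strength)
        # Predict the class whose centroid is closest in squared distance.
        pred = min(range(NUM_CLASSES),
                   key=lambda k: sum((a - b) ** 2 for a, b in zip(x, centroids[k])))
        if pred == y:
            correct += 1
    return correct / n_test


def layerwise_accuracy(seed=0):
    """One probe per layer. The linear growth of signal strength with
    depth is an assumption made purely for illustration."""
    rng = random.Random(seed)
    return [probe_accuracy(rng, strength=6.0 * layer / (NUM_LAYERS - 1))
            for layer in range(NUM_LAYERS)]


if __name__ == "__main__":
    for layer, acc in enumerate(layerwise_accuracy()):
        print(layer, round(acc, 2))
```

With real models, `make_features` would be replaced by hidden states extracted at each layer, and a separate probe would be fit per layer on held-out data. A flat curve at chance level then indicates that the probe cannot read the state out of those representations.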