## Violin Plot: Elementary Math Accuracy by LLM Configuration

### Overview

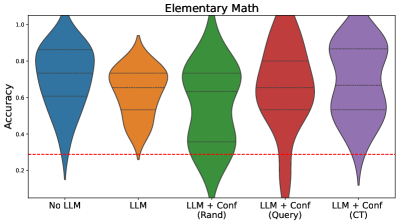

The image displays a violin plot titled "Elementary Math," comparing the distribution of accuracy scores across five different experimental conditions involving Large Language Models (LLMs). The plot visualizes the probability density of the data at different values, with wider sections representing a higher frequency of data points.

### Components/Axes

* **Chart Title:** "Elementary Math" (centered at the top).

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Contains five categorical labels corresponding to the experimental conditions:

1. No LLM

2. LLM

3. LLM + Conf (Rand)

4. LLM + Conf (Query)

5. LLM + Conf (CT)

* **Data Series:** Five distinct colored violin plots, one for each category on the x-axis. The colors are (from left to right): blue, orange, green, red, and purple.

* **Reference Line:** A horizontal red dashed line is drawn across the plot at approximately y = 0.3.

* **Internal Markers:** Each violin contains three horizontal black lines, representing the quartiles (25th, 50th/median, and 75th percentiles) of the distribution.
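The three internal lines can be reproduced from raw scores with a quartile computation. The following is a minimal sketch using Python's standard library. The `scores` list is made up for illustration and is not data from the figure, and the plotting tool's exact quantile method is unknown, so small differences are possible.

```python
import statistics

# Hypothetical accuracy scores (illustrative only, not read from the figure).
scores = [0.35, 0.50, 0.55, 0.60, 0.70, 0.70, 0.75, 0.85, 0.90, 1.00]

# n=4 returns the three cut points that split the data into quarters:
# the 25th percentile (Q1), the median, and the 75th percentile (Q3).
# method="inclusive" interpolates linearly between the sorted sample values.
q1, median, q3 = statistics.quantiles(scores, n=4, method="inclusive")

# The interquartile range is the vertical distance between the outer lines.
iqr = q3 - q1

print(f"Q1 = {q1:.4f}, median = {median:.4f}, Q3 = {q3:.4f}, IQR = {iqr:.4f}")
```

In a violin drawn from these scores, the bottom, middle, and top lines would sit at Q1, the median, and Q3 respectively.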

### Detailed Analysis

The analysis proceeds from left to right, matching the x-axis order.

1. **No LLM (Blue Violin):**

* **Trend/Shape:** The distribution is broad and somewhat symmetric, with the widest section (highest density) centered around the median. It tapers smoothly towards both the high and low accuracy extremes.

* **Key Values:** The median (middle black line) is approximately **0.70**. The interquartile range (IQR, distance between the top and bottom black lines) spans roughly from **0.55 to 0.85**. The full range extends from near **0.15** to **1.0**.

2. **LLM (Orange Violin):**

* **Trend/Shape:** This distribution is narrower and more concentrated than the "No LLM" case. It is slightly skewed towards lower accuracy, with a longer tail extending downward.

* **Key Values:** The median is lower, at approximately **0.60**. The IQR is tighter, spanning from about **0.50 to 0.75**. The range extends from approximately **0.25** to **0.95**.

3. **LLM + Conf (Rand) (Green Violin):**

* **Trend/Shape:** This distribution is distinctly **bimodal**, with two clear peaks (widest sections). One peak is in the lower accuracy range (~0.4), and another is in the higher range (~0.8). This suggests two subgroups within the data.

* **Key Values:** The median line sits between the two modes, at approximately **0.65**. The IQR is wide, from about **0.45 to 0.85**. The overall range is very broad, from near **0.10** to **1.0**.

4. **LLM + Conf (Query) (Red Violin):**

* **Trend/Shape:** This violin has the most compact vertical extent of the five, indicating a highly concentrated distribution. The density is sharply peaked around the median, with very thin tails.

* **Key Values:** The median is high, at approximately **0.70**. The IQR is very narrow, spanning from about **0.65 to 0.75**. The range is also constrained, from roughly **0.30** to **0.90**.

5. **LLM + Conf (CT) (Purple Violin):**

* **Trend/Shape:** This distribution is broad and appears left-skewed (longer tail towards lower accuracy). It has a wide, dense central region.

* **Key Values:** This condition shows the highest median, at approximately **0.80**. The IQR spans from about **0.70 to 0.90**. The range extends from near **0.20** to **1.0**.
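The spread of each condition can be compared directly from the values listed above. The sketch below is a small illustration, assuming the approximate readings in this section are taken at face value. The `readings` dictionary and the `spread_summary` helper are names introduced here for illustration, and the numbers are visual estimates rather than exact data.

```python
# Approximate (min, Q1, median, Q3, max) for each condition, copied from the
# "Key Values" entries above. These are visual estimates, not exact data.
readings = {
    "No LLM":             (0.15, 0.55, 0.70, 0.85, 1.00),
    "LLM":                (0.25, 0.50, 0.60, 0.75, 0.95),
    "LLM + Conf (Rand)":  (0.10, 0.45, 0.65, 0.85, 1.00),
    "LLM + Conf (Query)": (0.30, 0.65, 0.70, 0.75, 0.90),
    "LLM + Conf (CT)":    (0.20, 0.70, 0.80, 0.90, 1.00),
}


def spread_summary(readings):
    """Return {condition: (iqr_width, full_range)}, rounded to two decimals."""
    summary = {}
    for name, (lo, q1, _median, q3, hi) in readings.items():
        summary[name] = (round(q3 - q1, 2), round(hi - lo, 2))
    return summary


summary = spread_summary(readings)

# Conditions with the narrowest and widest interquartile range.
tightest = min(summary, key=lambda name: summary[name][0])
widest = max(summary, key=lambda name: summary[name][0])

for name, (iqr, full) in summary.items():
    print(f"{name:<20} IQR width = {iqr:.2f}   range = {full:.2f}")
print("Tightest IQR:", tightest)
print("Widest IQR:  ", widest)
```

Under these readings, "LLM + Conf (Query)" has the narrowest IQR (0.10) and "LLM + Conf (Rand)" the widest (0.40).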

### Key Observations

* **Baseline Reference:** The red dashed line at **Accuracy ≈ 0.3** likely represents a baseline performance level, such as random guessing or a simple heuristic. All distributions are predominantly above this line.

* **Impact of Confidence Calibration:** Adding confidence calibration ("Conf") shifts the median accuracy upward relative to the base "LLM" condition (~0.60) in all three variants: Rand (~0.65), Query (~0.70), and CT (~0.80). The "Rand" gain is the smallest and comes with the widest spread.

* **Highest & Most Consistent Performance:** The **"LLM + Conf (CT)"** condition achieves the highest median accuracy (~0.80) and maintains a broad, high-performing distribution. The **"LLM + Conf (Query)"** condition shows the most consistent (least variable) performance, with the narrowest spread.

* **Bimodal Anomaly:** The **"LLM + Conf (Rand)"** condition is a clear outlier in shape, exhibiting a bimodal distribution. This indicates that the random confidence calibration method produces highly inconsistent results, splitting performance into a low-accuracy group and a high-accuracy group.

* **Performance Hierarchy (by Median):** LLM + Conf (CT) > LLM + Conf (Query) ≈ No LLM > LLM + Conf (Rand) > LLM.
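The ordering above can be checked mechanically. The sketch below assumes the approximate medians read off the plot; `medians`, `ranking`, and `gain_over_llm` are illustrative names, and the values are estimates rather than measured results.

```python
# Approximate medians read off the plot, listed in x-axis order.
medians = {
    "No LLM": 0.70,
    "LLM": 0.60,
    "LLM + Conf (Rand)": 0.65,
    "LLM + Conf (Query)": 0.70,
    "LLM + Conf (CT)": 0.80,
}

# Sort conditions from highest to lowest median. Python's sort is stable,
# so tied conditions keep their x-axis order.
ranking = sorted(medians.items(), key=lambda item: item[1], reverse=True)

# Difference in median relative to the base "LLM" condition.
gain_over_llm = {
    name: round(value - medians["LLM"], 2)
    for name, value in medians.items()
    if name != "LLM"
}

for name, value in ranking:
    print(f"{value:.2f}  {name}")
print(gain_over_llm)
```

With these estimates, "No LLM" and "LLM + Conf (Query)" tie at 0.70, which is why the hierarchy lists them as approximately equal.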

### Interpretation

This chart compares the effect of different LLM augmentation strategies on performance in elementary math tasks. The core finding is that **structured confidence calibration methods (Query and CT) improve median accuracy and tighten the interquartile range** relative to the base LLM, whereas the unstructured (Random) method raises the median only slightly and widens the spread.

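* The **"No LLM"** baseline performs surprisingly well: its median (~0.70) exceeds that of the base "LLM" condition (~0.60) and matches "LLM + Conf (Query)". This suggests the task may have patterns that are accessible without model assistance, or that the "LLM" being tested is not specialized for this domain.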

* The inconsistent performance of **"LLM + Conf (Rand)"**, with a median (~0.65) only slightly above the base LLM and the widest IQR of the five conditions, highlights that the *method* of confidence calibration is critical; a naive random approach introduces noise and bifurcates outcomes.

* The tight distribution of **"LLM + Conf (Query)"** suggests it makes the model's performance highly predictable, which is valuable for deployment where reliability is key.

* The high median of **"LLM + Conf (CT)"** suggests it is the most effective method for boosting raw accuracy, though with slightly more variability than the Query method.

* The red baseline at 0.3 provides useful context: even the weakest cluster of results (the lower mode of the Rand method, ~0.4) still sits above this minimal baseline.

In summary, the plot supports the use of specific, structured confidence calibration techniques (particularly the CT and Query variants) to enhance and stabilize LLM performance on elementary math problems, compared with both unaugmented models and simplistic calibration approaches.