## Diagram: Privacy Layer for LLM/Gen AI Tools

### Overview

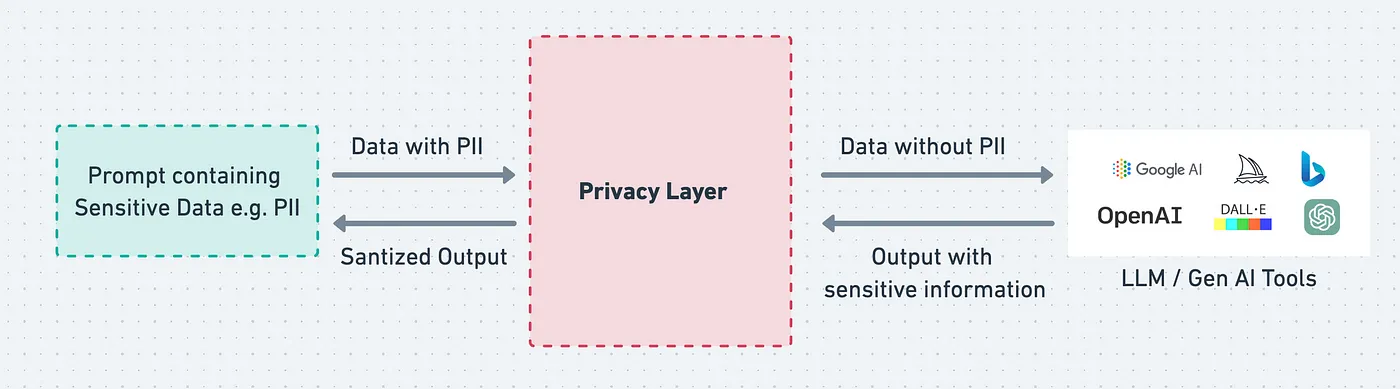

The image is a diagram illustrating how a privacy layer can be used to sanitize data containing Personally Identifiable Information (PII) before it is processed by Large Language Models (LLMs) or Generative AI tools. The diagram shows the flow of data from a prompt containing sensitive data, through a privacy layer, and then to AI tools, with a corresponding flow of output back.

### Components/Axes

* **Left Box (Input):** A dashed green box labeled "Prompt containing Sensitive Data e.g. PII".

* **Right Box (LLM / Gen AI Tools):** A white box containing logos for Google AI, DALL-E, and OpenAI, labeled "OpenAI" above and "LLM / Gen AI Tools" below.

* **Center Box (Privacy Layer):** A dashed red box labeled "Privacy Layer".

* **Arrows:**

    * A gray arrow from the left box to the center box, labeled "Data with PII".

    * A gray arrow from the center box to the right box, labeled "Data without PII".

    * A gray arrow from the right box to the center box, labeled "Output with sensitive information".

    * A gray arrow from the center box to the left box, labeled "Santized Output".

### Detailed Analysis

* **Input (Left Box):** The green box represents the initial prompt that contains sensitive data, such as PII.

* **Privacy Layer (Center Box):** The red box represents the privacy layer, which is responsible for sanitizing the input data and removing PII. It also receives potentially sensitive output from the AI tools and sanitizes it before sending it back.

* **LLM/Gen AI Tools (Right Box):** The white box represents the LLMs or Generative AI tools, such as Google AI, DALL-E, and OpenAI. These tools receive data without PII from the privacy layer and generate output, which may contain sensitive information.

* **Data Flow:**

    * Data with PII flows from the input prompt to the privacy layer.

    * Data without PII flows from the privacy layer to the LLM/Gen AI tools.

    * Output with sensitive information flows from the LLM/Gen AI tools to the privacy layer.

    * Sanitized output flows from the privacy layer back to the origin of the prompt.
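The four-step flow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the mechanism the diagram's author has in mind: the diagram does not say how PII is detected, so the regular expressions in `PII_PATTERNS`, the placeholder tags, and the names `sanitize`, `privacy_layer`, and `fake_model` are all hypothetical. A real privacy layer would need much broader detection than two regexes.

```python
import re
from typing import Callable

# Hypothetical patterns for illustration only; real PII detection
# needs far broader coverage (names, addresses, IDs, and so on).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def sanitize(text: str) -> str:
    """Replace each match of a known PII pattern with a placeholder tag."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def privacy_layer(prompt: str, model: Callable[[str], str]) -> str:
    """Run one round trip through the layer, in both directions."""
    clean_prompt = sanitize(prompt)    # "Data with PII" -> "Data without PII"
    raw_output = model(clean_prompt)   # output may still contain sensitive data
    return sanitize(raw_output)        # sanitized output back to the caller


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call (assumption): echoes the prompt and leaks an address."""
    return f"Received: {prompt} Contact admin@example.org for help."


if __name__ == "__main__":
    print(privacy_layer("Email jane.doe@example.com or call +1 555-123-4567.", fake_model))
```

Passing the model in as a plain callable keeps the layer independent of any particular AI tool, which matches the diagram's placement of the layer between the prompt and several different tools.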

### Key Observations

* The diagram highlights the importance of a privacy layer in protecting sensitive data when using LLMs or Generative AI tools.

* The privacy layer acts as an intermediary between the input prompt and the AI tools, ensuring that PII is removed before processing and that sensitive information in the output is sanitized.

* The diagram shows a closed-loop system, where the privacy layer handles both input and output data.
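The two structural observations, that the privacy layer is an intermediary and that the flow forms a closed loop, can be checked mechanically by treating the diagram as a small directed graph. The sketch below is illustrative: the edge list is transcribed from the four arrows described earlier, while the helper names `is_intermediary` and `is_closed_loop` are hypothetical.

```python
# Nodes and labelled edges transcribed from the diagram's four arrows.
PROMPT, LAYER, TOOLS = "Prompt", "Privacy Layer", "LLM / Gen AI Tools"

EDGES = [
    (PROMPT, LAYER, "Data with PII"),
    (LAYER, TOOLS, "Data without PII"),
    (TOOLS, LAYER, "Output with sensitive information"),
    (LAYER, PROMPT, "Sanitized output"),
]


def is_intermediary(edges, node):
    """True if every edge has `node` as its source or its destination."""
    return all(node in (src, dst) for src, dst, _ in edges)


def is_closed_loop(edges, start):
    """True if following edges from `start` can lead back to `start`."""
    frontier, seen = [start], set()
    while frontier:
        current = frontier.pop()
        for src, dst, _ in edges:
            if src != current:
                continue
            if dst == start:
                return True
            if dst not in seen:
                seen.add(dst)
                frontier.append(dst)
    return False


if __name__ == "__main__":
    print(is_intermediary(EDGES, LAYER))   # no arrow bypasses the privacy layer
    print(is_closed_loop(EDGES, PROMPT))   # data can return to the prompt's origin
```

Because every arrow touches the privacy layer, there is no direct path between the prompt and the AI tools, which is the property the diagram depends on.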

### Interpretation

The diagram illustrates a common approach to addressing privacy concerns when using LLMs and Generative AI. With a privacy layer in place, organizations can reduce the risk of exposing sensitive data to AI models and of violating privacy regulations. The layer sanitizes input prompts to remove PII before the AI tools process them, and it sanitizes the output to remove any sensitive information the tools may have generated. This reduces the chance that the AI models are trained on, or used to generate, data that could compromise individual privacy. The logos for Google AI, DALL-E, and OpenAI suggest that the approach applies to a variety of AI tools.