## System Architecture Diagram: Privacy Layer for AI Interactions

### Overview

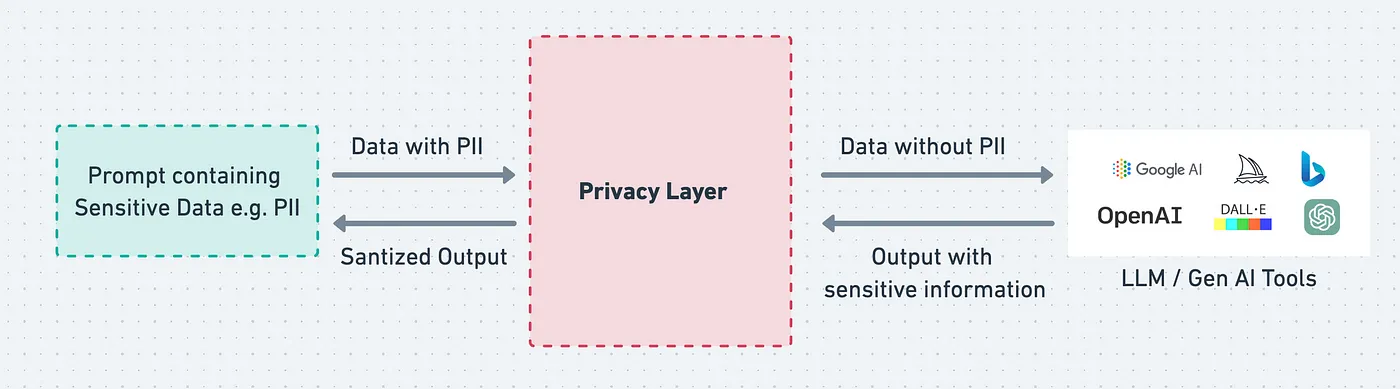

This image is a system architecture diagram illustrating a privacy-preserving data flow between a user prompt containing sensitive information and external Large Language Model (LLM) or Generative AI tools. The diagram depicts a central "Privacy Layer" that sanitizes data before it leaves the user's environment and sanitizes the tools' output before it is returned to the user.

### Components

The diagram is composed of three primary rectangular components arranged horizontally, connected by directional arrows indicating data flow.

1. **Left Component (Source):**

* **Shape:** Rectangle with a dashed, teal-colored border.

* **Label Text:** "Prompt containing Sensitive Data e.g. PII"

* **Position:** Left side of the diagram.

2. **Central Component (Processing Layer):**

* **Shape:** Rectangle with a dashed, red-colored border.

* **Label Text:** "Privacy Layer"

* **Position:** Center of the diagram.

3. **Right Component (External Service):**

* **Shape:** Rectangle with a solid, light gray border.

* **Label Text:** "LLM / Gen AI Tools"

* **Logos/Icons:** Contains logos for:

    * Google AI (text and multicolored dot logo)

    * OpenAI (text and black sailboat logo)

    * DALL-E (text and multicolored square logo)

    * A blue "b" logo (likely Bing)

    * A green circular logo with a white symbol (likely ChatGPT)

* **Position:** Right side of the diagram.

**Data Flow Arrows & Labels:**

* **Top Arrow (Left to Center):** Labeled "Data with PII". Originates from the left component, points to the central Privacy Layer.

* **Top Arrow (Center to Right):** Labeled "Data without PII". Originates from the central Privacy Layer, points to the right component.

* **Bottom Arrow (Right to Center):** Labeled "Output with sensitive information". Originates from the right component, points to the central Privacy Layer.

* **Bottom Arrow (Center to Left):** Labeled "Sanitized Output". Originates from the central Privacy Layer, points to the left component.
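The four arrows can be written down as a small edge list, which makes it easy to check that no arrow connects the prompt source directly to the external tools. This is only an illustrative sketch: the node names (`prompt`, `privacy_layer`, `llm_tools`) and the helper `direct_links` are hypothetical shorthand, not names taken from the diagram.

```python
# Arrows of the diagram as (source, target, label), listed in data-flow order.
# Node names are shorthand chosen for this sketch; labels are quoted from the diagram.
EDGES = [
    ("prompt", "privacy_layer", "Data with PII"),
    ("privacy_layer", "llm_tools", "Data without PII"),
    ("llm_tools", "privacy_layer", "Output with sensitive information"),
    ("privacy_layer", "prompt", "Sanitized Output"),
]


def direct_links(edges, a, b):
    """Return every edge that connects nodes a and b directly, in either direction."""
    return [edge for edge in edges if {edge[0], edge[1]} == {a, b}]


def labels_in_order(edges):
    """Return the arrow labels in the order the data traverses them."""
    return [label for _, _, label in edges]
```

Calling `direct_links(EDGES, "prompt", "llm_tools")` returns an empty list, which mirrors the diagram: every path between the user side and the external tools passes through the Privacy Layer.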

### Detailed Analysis

The diagram explicitly defines a bidirectional data sanitization process:

1. **Outbound Flow (User to AI):**

* A user's prompt, which may contain Personally Identifiable Information (PII) or other sensitive data, is sent to the Privacy Layer.

* The Privacy Layer processes this input, removing or obfuscating the sensitive elements.

* The resulting "Data without PII" is then transmitted to the external LLM/Gen AI tools.

2. **Inbound Flow (AI to User):**

* The external AI tools generate a response, which the diagram labels "Output with sensitive information." The diagram does not say why the output is sensitive; one plausible reason is that the model could echo or infer sensitive data from the prompt, or reproduce it from its training data.

* This raw output is sent back to the Privacy Layer.

* The Privacy Layer performs a second sanitization pass on the AI's response.

* The final "Sanitized Output" is delivered back to the user's environment (the original prompt source).
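The two flows above can be sketched as a single function that sanitizes on the way out and again on the way back. This is a minimal sketch under stated assumptions, not the mechanism the diagram's authors use: the diagram does not specify how PII is detected, so the two regular expressions, the placeholder tags, and the stand-in `fake_llm` are all hypothetical.

```python
import re
from typing import Callable

# Illustrative patterns only (an assumption of this sketch);
# real PII detection needs far broader coverage than two regexes.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def sanitize(text: str) -> str:
    """Replace matches of the illustrative patterns with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def privacy_layer(prompt: str, call_llm: Callable[[str], str]) -> str:
    """Run one round trip: sanitize outbound, call the tool, sanitize inbound."""
    outbound = sanitize(prompt)   # "Data with PII" -> "Data without PII"
    raw = call_llm(outbound)      # "Output with sensitive information"
    return sanitize(raw)          # -> "Sanitized Output"


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for an external tool; it leaks an address on purpose."""
    return f"Received: {prompt} Contact support@example.org for help."
```

Passing the tool in as a callable keeps the sketch independent of any real provider SDK; `privacy_layer("Email jane.doe@example.com about the invoice.", fake_llm)` redacts both the address in the prompt and the one the stub adds to its response.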

### Key Observations

* **Bidirectional Sanitization:** The Privacy Layer is not a one-way filter. It actively scrubs data both on the way out to the AI service and on the way back from it.

* **Explicit PII Handling:** The diagram specifically names "PII" as the type of sensitive data being protected, which suggests a focus on privacy regulations such as GDPR or CCPA, although the diagram itself names no regulation.

* **Third-Party Service Agnosticism:** The right-hand box groups multiple, distinct AI service providers (Google, OpenAI, Microsoft/Bing) under a single label, suggesting the Privacy Layer is designed to work as a gateway to various external tools.

* **Visual Coding:** The use of dashed borders for the user-side components (source and privacy layer) versus a solid border for the external service visually distinguishes the trusted internal environment from the external, untrusted one.
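The gateway reading of the diagram, one Privacy Layer in front of several interchangeable tools, might look like the following sketch. Everything here is an assumption made for illustration: the `Gateway` class, the `Provider` callable interface, the toy `redact_id` sanitizer, and the two stub providers are hypothetical and do not correspond to any vendor API.

```python
from typing import Callable, Dict

Provider = Callable[[str], str]  # hypothetical interface: prompt in, response out


class Gateway:
    """Routes every call through the same sanitizer, whichever provider is used."""

    def __init__(self, sanitize: Callable[[str], str]) -> None:
        self._sanitize = sanitize
        self._providers: Dict[str, Provider] = {}

    def register(self, name: str, provider: Provider) -> None:
        self._providers[name] = provider

    def ask(self, name: str, prompt: str) -> str:
        provider = self._providers[name]        # raises KeyError for unknown names
        raw = provider(self._sanitize(prompt))  # provider sees only sanitized text
        return self._sanitize(raw)              # the response is sanitized as well


def redact_id(text: str) -> str:
    """Toy sanitizer: hides one hard-coded, made-up identifier."""
    return text.replace("123-45-6789", "[ID]")


seen = []  # records what each stub provider actually received


def provider_a(prompt: str) -> str:
    seen.append(prompt)
    return "A: " + prompt


def provider_b(prompt: str) -> str:
    seen.append(prompt)
    return "B: my records show 123-45-6789"


gateway = Gateway(redact_id)
gateway.register("a", provider_a)
gateway.register("b", provider_b)
```

Because the sanitizer is applied inside `ask`, adding another provider does not require touching the sanitization logic, which is the property the grouped right-hand box appears to suggest.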

### Interpretation

This diagram represents a **privacy-by-design architecture** for leveraging commercial AI services. Its core purpose is to enable the use of powerful, external LLMs while mitigating the risk of exposing sensitive user data.

* **What it demonstrates:** It shows a technical solution to the conflict between data privacy and AI utility. Organizations can harness advanced AI capabilities without directly feeding raw, sensitive information into third-party systems.

* **How elements relate:** The Privacy Layer acts as a mandatory proxy: every arrow between the prompt source and the AI tools passes through it. This decouples the user's data environment from the AI service environment, which supports compliance and reduces the opportunities for data leaks.

* **Notable implications:**

    * **Compliance:** This architecture is likely a response to strict data protection laws. The diagram shows no logging component, but a layer in this position could record what it removed and so support an audit trail showing that PII was stripped before leaving the organization.

    * **Trust Model:** It shifts trust from the external AI provider to the internal Privacy Layer. The security and effectiveness of the entire system depend on the robustness of this layer's sanitization algorithms.

    * **Potential Limitation:** The diagram implies the Privacy Layer can perfectly identify and remove all sensitive data ("Data without PII") and all sensitive information in the output. In practice, this is a complex challenge, and the system's efficacy would depend on the sophistication of its detection and redaction mechanisms. The label "Output with sensitive information" acknowledges that the raw AI response is not inherently safe.
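The limitation noted above can be made concrete with a small check that compares a list of known sensitive terms against redacted text. This is a sketch under assumptions, not a description of any real Privacy Layer: it assumes a purely pattern-based redactor that only knows about email addresses, and the prompt, the names, and the helper `leaked` are invented for illustration.

```python
import re

# Assumption for this sketch: the redactor only recognizes email addresses.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL.sub("[EMAIL]", text)


def leaked(terms, text):
    """Return the sensitive terms that still appear verbatim in the text."""
    return [term for term in terms if term in text]


# Made-up example data.
prompt = "Jane Doe (jane@example.com) lives at 12 Elm Street."
sensitive = ["Jane Doe", "jane@example.com", "12 Elm Street"]

remaining = leaked(sensitive, redact_emails(prompt))
```

Here `remaining` still contains the name and the street address, because neither follows a pattern the redactor knows. A check of this kind, run against labeled test prompts, is one way to estimate how much a given detection approach misses.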