## Diagram: Data Flow Through Privacy Layer

### Overview

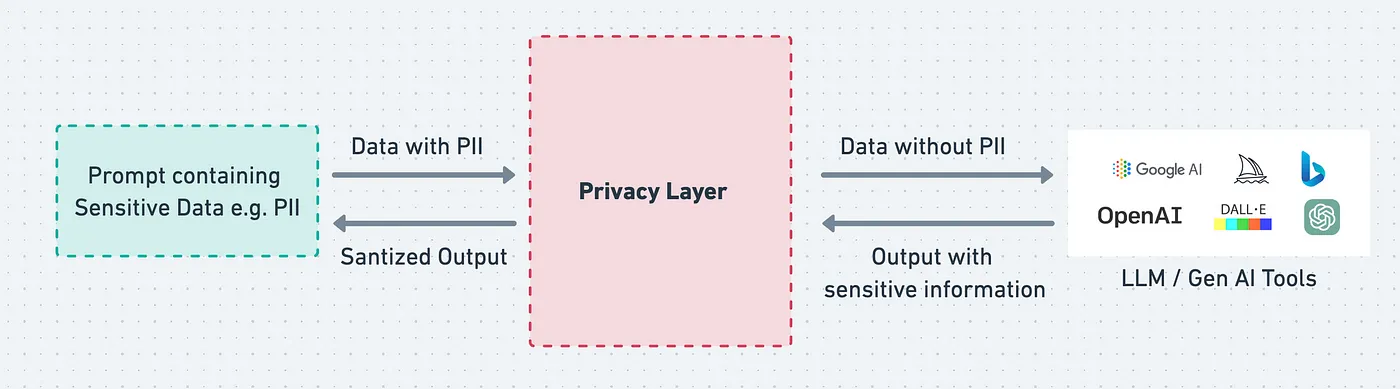

The diagram shows how a privacy layer sanitizes prompts that contain sensitive data before they reach AI tools. It traces the transformation of data containing Personally Identifiable Information (PII) into sanitized output, and it acknowledges that some sensitive information may remain in what comes out.

### Components

1. **Central Component**:
   - **Privacy Layer** (pink dashed box)
   - Acts as the core processing unit for data sanitization
2. **Input Side**:
   - **Prompt containing Sensitive Data e.g. PII** (green dashed box)
   - Arrows indicate bidirectional flow between raw data and sanitized output
3. **Output Side**:
   - **Data without PII** (left arrow from Privacy Layer)
   - **Output with sensitive information** (right arrow from Privacy Layer)
4. **External Tools**:
   - **LLM/Gen AI Tools** (right panel)
   - Includes logos of:
     - Google AI (grid icon)
     - OpenAI (sailboat icon)
     - DALL-E (color blocks)
     - Bloomberg (B icon)
     - Other tools (green circular icon)

### Detailed Analysis

- **Data Flow**:
  - Sensitive data enters the Privacy Layer from the left
  - Two output paths emerge:
    - Sanitized output (left arrow)
    - Potentially compromised output (right arrow)
- **Tool Integration**:
  - Multiple AI platforms receive processed data
  - Tools include both text (Google AI, OpenAI) and image generation (DALL-E) systems
- **Color Coding**:
  - Privacy Layer: Pink (dashed border)
  - Input/Output: Gray arrows
  - Tools: Color-coded icons matching legend
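
The sanitization step in the data flow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram does not say how the Privacy Layer detects PII, so the regex patterns, the `PII_PATTERNS` table, and the `sanitize` function are all hypothetical names chosen for this example, and real PII detection would need far broader coverage.

```python
import re

# Hypothetical detectors (an assumption, not taken from the diagram).
# Two simple patterns stand in for a real PII detection component.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def sanitize(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace each detected PII value with a numbered placeholder.

    Returns the sanitized prompt plus a placeholder -> original value
    mapping that stays inside the privacy layer.
    """
    mapping: dict[str, str] = {}

    for label, pattern in PII_PATTERNS.items():
        # Bind `label` as a default argument so each callback keeps its own label.
        def replace(match: re.Match, label: str = label) -> str:
            placeholder = f"<{label}_{len(mapping) + 1}>"
            mapping[placeholder] = match.group(0)
            return placeholder

        prompt = pattern.sub(replace, prompt)

    return prompt, mapping


clean, kept = sanitize("Email jane.doe@example.com or call 555-123-4567.")
print(clean)  # Email <EMAIL_1> or call <PHONE_2>.
```

Only `clean` would be forwarded to the external tools in this sketch. Anything the patterns miss passes through unchanged, which is one way the residual risk shown in the diagram can arise.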

### Key Observations

1. **Bidirectional Data Flow**: The Privacy Layer processes data in both directions, suggesting iterative refinement

2. **Residual Risk**: Explicit acknowledgment that some sensitive information may persist in outputs

3. **Tool Diversity**: Represents both general-purpose (Google AI, OpenAI) and specialized (DALL-E) AI systems

4. **Visual Hierarchy**: Privacy Layer dominates central position, emphasizing its critical role

### Interpretation

This diagram reveals a fundamental tension in AI data processing: the need to balance data utility with privacy protection. The Privacy Layer's central position underscores its role as a critical control point, while the dual output arrows highlight the imperfect nature of current sanitization methods. The inclusion of multiple AI tools suggests this system is designed for enterprise environments requiring integration with various machine learning platforms. The persistent risk of sensitive information leakage (right arrow) implies that organizations must implement additional safeguards beyond basic PII removal, particularly when handling highly regulated data types. The bidirectional flow between raw data and sanitized output suggests an iterative approach to privacy management, where outputs may be re-evaluated and reprocessed as needed.
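
One possible form of the "additional safeguards" mentioned above is a second check that scans output for residual PII and reprocesses it when something is found. The sketch below is a minimal illustration, not the method used by the system in the diagram: `DETECTORS`, `find_residual_pii`, and `reprocess` are hypothetical names, and the two regex detectors are assumed stand-ins for a real detection component.

```python
import re

# Hypothetical residual-PII detectors (assumptions for illustration only).
DETECTORS = [
    re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),  # email-like strings
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),         # US SSN-like numbers
]


def find_residual_pii(text: str) -> list[str]:
    """Return every substring that any detector still flags."""
    hits: list[str] = []
    for detector in DETECTORS:
        hits.extend(detector.findall(text))
    return hits


def reprocess(text: str, max_passes: int = 3) -> tuple[str, bool]:
    """Redact flagged values, re-scanning up to `max_passes` times.

    Returns the processed text and a flag that is True only when the
    final scan finds nothing.
    """
    for _ in range(max_passes):
        hits = find_residual_pii(text)
        if not hits:
            return text, True
        for hit in hits:
            text = text.replace(hit, "[REDACTED]")
    return text, not find_residual_pii(text)


result, is_clean = reprocess("Contact bob@example.org, SSN 123-45-6789")
print(result, is_clean)  # Contact [REDACTED], SSN [REDACTED] True
```

With deterministic patterns like these, one redaction pass is enough and the second pass only confirms the result. The loop matters more when the detector is probabilistic or when redaction itself can expose new matches. A `True` flag means only that these particular detectors found nothing, not that the text is free of sensitive information.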