## [Dual Line Charts]: Performance Comparison of Multi-Armed Bandit Agents

### Overview

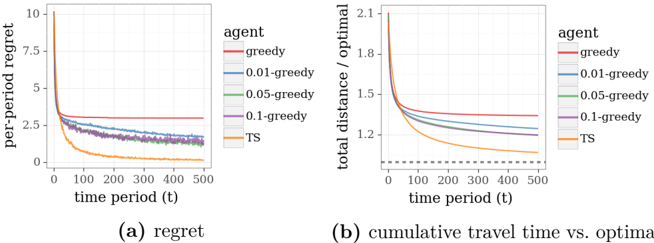

The image displays two side-by-side line charts comparing the performance of five different agent algorithms over 500 time periods. The charts are labeled (a) and (b) and share a common legend. The overall theme is the evaluation of algorithmic efficiency, likely in a routing or resource allocation context, measuring "regret" and "travel time" against an optimal baseline.

### Components/Axes

* **Chart (a) - Left:**

* **Title/Label:** `(a) regret` (centered below the chart).

* **Y-axis:** Label is `per-period regret`. Scale runs from 0 to 10 with major ticks at 0, 2.5, 5, 7.5, and 10.

* **X-axis:** Label is `time period (t)`. Scale runs from 0 to 500 with major ticks at 0, 100, 200, 300, 400, and 500.

* **Chart (b) - Right:**

* **Title/Label:** `(b) cumulative travel time vs. optimal` (centered below the chart).

* **Y-axis:** Label is `total distance / optimal`. Scale runs from 1.0 to 2.1 with major ticks at 1.0, 1.2, 1.5, 1.8, and 2.1.

* **X-axis:** Label is `time period (t)`. Scale runs from 0 to 500 with major ticks at 0, 100, 200, 300, 400, and 500.

* **Legend (Positioned to the right of both charts, applying to both):**

* **Header:** `agent`

* **Entries (with corresponding line colors):**

1. `greedy` - Red line

2. `0.01-greedy` - Blue line

3. `0.05-greedy` - Green line

4. `0.1-greedy` - Purple line

5. `TS` - Orange line

* **Additional Element in Chart (b):** A horizontal dashed black line at `y = 1.0`, representing the optimal baseline (total distance / optimal = 1).

### Detailed Analysis

**Chart (a): Per-Period Regret**

* **Trend Verification:** All five lines show a decreasing trend, starting high and converging towards lower values as time period `t` increases. The rate of decrease and final asymptotic value differ significantly between agents.

* **Data Series & Approximate Values:**

* **`greedy` (Red):** Starts near 10. Drops rapidly until ~t=50, then plateaus at a relatively high level. At t=500, regret is approximately **2.8**.

* **`0.01-greedy` (Blue):** Starts near 10. Drops more slowly than `greedy`. At t=500, regret is approximately **1.8**.

* **`0.05-greedy` (Green):** Starts near 10. Follows a path very close to, but slightly below, the `0.01-greedy` line. At t=500, regret is approximately **1.7**.

* **`0.1-greedy` (Purple):** Starts near 10. Follows a path very close to, but slightly below, the `0.05-greedy` line. At t=500, regret is approximately **1.6**.

* **`TS` (Orange):** Starts near 10. Shows the steepest initial decline, dropping below all other lines by t=50. Continues to decrease steadily. At t=500, regret is the lowest, approximately **0.2**.

**Chart (b): Cumulative Travel Time vs. Optimal**

* **Trend Verification:** All five agent lines show a decreasing trend, starting above the optimal baseline (dashed line at 1.0) and converging towards it. The `TS` agent approaches the baseline most closely.

* **Data Series & Approximate Values:**

* **`greedy` (Red):** Starts above 2.1. Drops quickly initially, then flattens. At t=500, the ratio is approximately **1.35**.

* **`0.01-greedy` (Blue):** Starts above 2.1. Decreases steadily. At t=500, the ratio is approximately **1.22**.

* **`0.05-greedy` (Green):** Starts above 2.1. Follows a path slightly below `0.01-greedy`. At t=500, the ratio is approximately **1.18**.

* **`0.1-greedy` (Purple):** Starts above 2.1. Follows a path slightly below `0.05-greedy`. At t=500, the ratio is approximately **1.15**.

* **`TS` (Orange):** Starts above 2.1. Shows the most rapid convergence. By t=200, it is already below 1.2. At t=500, it is the closest to optimal, with a ratio of approximately **1.05**.

* **Optimal Baseline (Dashed Black):** Constant at **1.0**.

### Key Observations

1. **Clear Performance Hierarchy:** There is a consistent performance order across both metrics: `TS` (best) > `0.1-greedy` > `0.05-greedy` > `0.01-greedy` > `greedy` (worst).

2. **Thompson Sampling (TS) Dominance:** The `TS` agent significantly outperforms all epsilon-greedy variants. Its regret approaches near-zero, and its cumulative travel time is within ~5% of optimal by t=500.

3. **Effect of Epsilon:** Among the greedy agents, a higher exploration rate (epsilon) correlates with better long-term performance. `0.1-greedy` consistently outperforms `0.05-greedy`, which outperforms `0.01-greedy`.

4. **Convergence Behavior:** All algorithms show learning (improving performance over time), but their convergence rates and final asymptotes differ markedly. The `greedy` algorithm (with no exploration) gets stuck at a suboptimal performance level.

5. **Metric Correlation:** The trends in per-period regret (a) are mirrored in the cumulative travel time ratio (b), suggesting regret is a good proxy for overall inefficiency in this context.

### Interpretation

This data demonstrates a classic exploration-exploitation trade-off in multi-armed bandit or reinforcement learning problems.

* **What the data suggests:** The pure `greedy` algorithm, which always exploits the current best-known option, fails to discover better alternatives, leading to high permanent regret and inefficiency (~35% worse than optimal). Introducing a small probability of random exploration (`0.01-greedy`) improves performance, and increasing this probability further (`0.1-greedy`) yields better results, as the agent gathers more information about its environment.

* **Why TS excels:** Thompson Sampling (`TS`) employs a more sophisticated, probabilistic approach to exploration. It maintains a belief distribution over which options are best and samples from this distribution to make decisions. This allows it to explore more intelligently and efficiently than fixed-rate epsilon-greedy strategies, leading to dramatically faster learning and near-optimal performance.

* **Practical Implication:** For the problem modeled here (likely a dynamic routing or task assignment problem), employing a Thompson Sampling agent would result in significantly lower cumulative costs (travel time) compared to simpler heuristic strategies. The charts provide strong empirical evidence for the value of Bayesian exploration methods over naive epsilon-greedy approaches in this domain.

* **Underlying Assumption:** The "optimal" baseline (dashed line) represents the performance of an agent with perfect knowledge. The goal of the learning agents is to approach this line. The `TS` agent's trajectory shows it is successfully converging towards this ideal.