TECHNICAL ASSET FINGERPRINT

8da947248519ce32a3935372



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


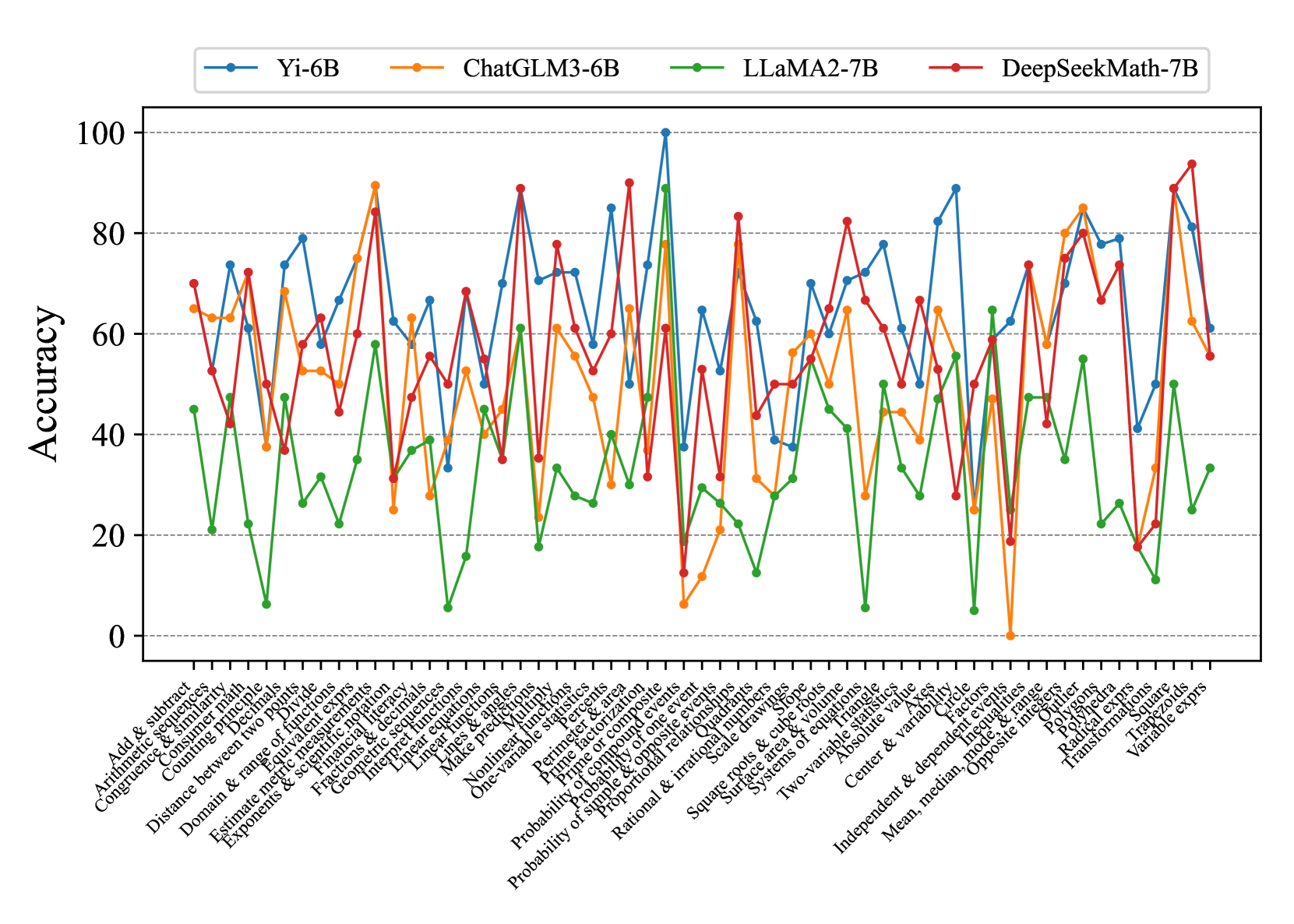

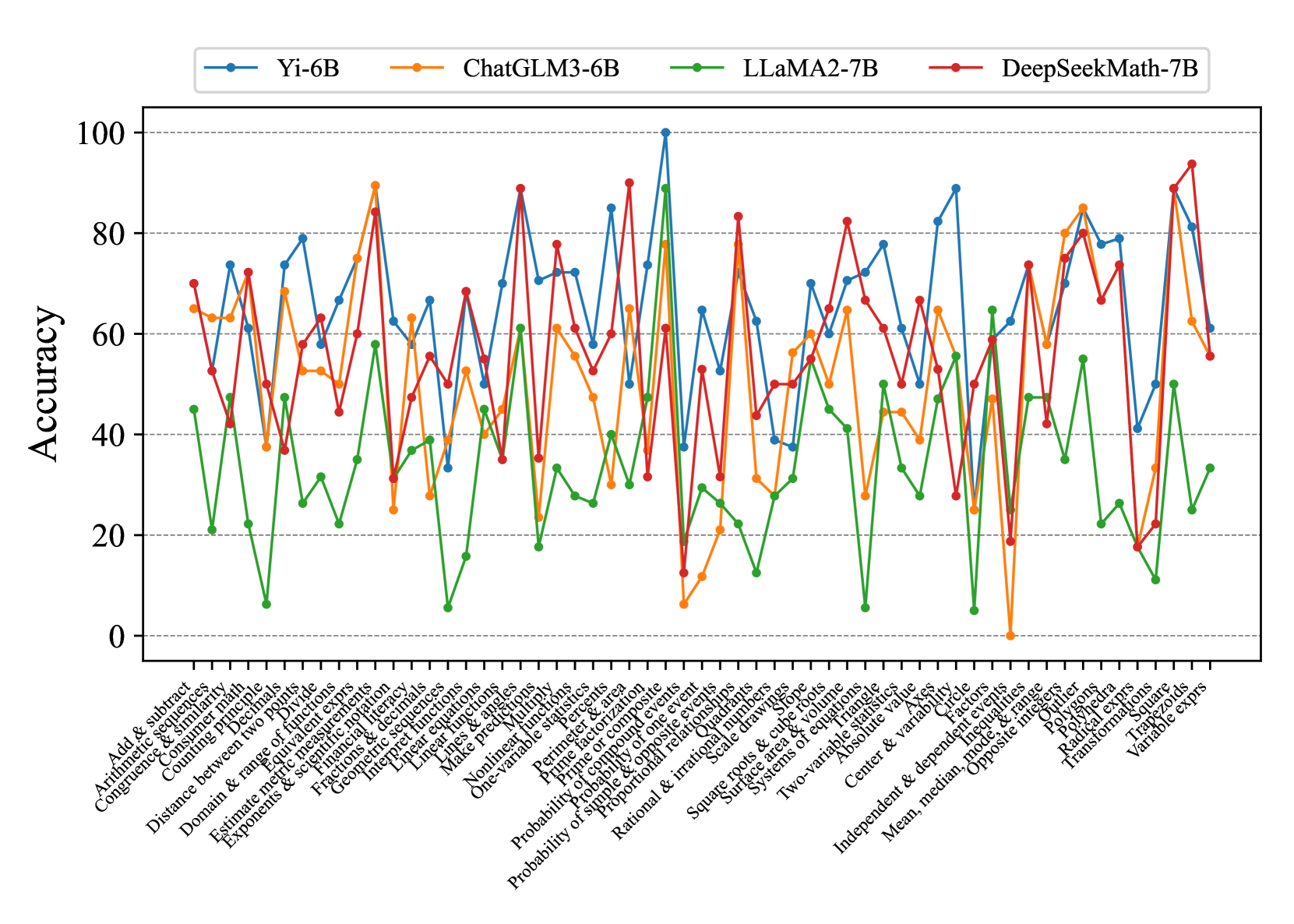

## Line Chart: Model Accuracy on Math Problems

### Overview

The image is a line chart comparing the accuracy of four different language models (Yi-6B, ChatGLM3-6B, LLaMA2-7B, and DeepSeekMath-7B) on a variety of math problem types. The x-axis represents different math topics, and the y-axis represents the accuracy score, ranging from 0 to 100.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different math problem types. The labels are densely packed and rotated for readability. The labels are:

    * Add & subtract

    * Arithmetic sequences

    * Congruence & similarity

    * Consumer math

    * Counting principle

    * Distance between two points

    * Domain & range of functions

    * Estimate metric measurements

    * Exponents & scientific notation

    * Financial literacy

    * Geometric sequences

    * Interpret functions

    * Linear equations

    * Linear functions

    * Lines & angles

    * Make predictions

    * Multiply

    * Nonlinear functions

    * One-variable statistics

    * Percents

    * Perimeter & area

    * Prime factorization

    * Prime or composite

    * Probability of compound events

    * Probability of one event

    * Probability of simple & opposite events

    * Proportional relationships

    * Quadrants

    * Radical expressions

    * Rational & irrational numbers

    * Scale drawings

    * Slope

    * Square

    * Square roots & cube roots

    * Surface area & volume

    * Systems of equations

    * Transformations

    * Trapezoids

    * Triangle

    * Two-variable statistics

    * Variable expressions

    * Absolute value

    * Center & variability

    * Circle

    * Divide

    * Equivalent expressions

    * Factors

    * Inequalities

    * Independent & dependent events

    * Mean, median, mode, & range

    * Opposite integers

    * Outliers

    * Polygons

    * Polyhedra

* **Y-axis:** Represents "Accuracy" and ranges from 0 to 100, with tick marks at intervals of 20.

* **Legend:** Located at the top of the chart.

    * **Blue:** Yi-6B

    * **Orange:** ChatGLM3-6B

    * **Green:** LLaMA2-7B

    * **Red:** DeepSeekMath-7B

### Detailed Analysis

* **Yi-6B (Blue):** Generally fluctuates between 50 and 80 accuracy, with some dips and peaks.

* **ChatGLM3-6B (Orange):** Shows a similar trend to Yi-6B, but often with slightly lower accuracy on many problem types.

* **LLaMA2-7B (Green):** Consistently has the lowest accuracy across almost all problem types, often below 40, and sometimes near 0.

* **DeepSeekMath-7B (Red):** Appears to have the highest accuracy overall, frequently reaching above 70 and sometimes peaking near 90.

**Specific Data Points (Approximate):**

It's difficult to provide exact values without a grid, but here are some approximate data points for a few problem types:

* **Add & Subtract:**

    * Yi-6B: ~70

    * ChatGLM3-6B: ~65

    * LLaMA2-7B: ~45

    * DeepSeekMath-7B: ~70

* **Exponents & Scientific Notation:**

    * Yi-6B: ~80

    * ChatGLM3-6B: ~70

    * LLaMA2-7B: ~30

    * DeepSeekMath-7B: ~75

* **Prime Factorization:**

    * Yi-6B: ~50

    * ChatGLM3-6B: ~40

    * LLaMA2-7B: ~20

    * DeepSeekMath-7B: ~50

* **Absolute Value:**

    * Yi-6B: ~70

    * ChatGLM3-6B: ~70

    * LLaMA2-7B: ~60

    * DeepSeekMath-7B: ~80
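To make the comparison across these four problem types concrete, the approximate values listed above can be averaged per model. The following is a minimal sketch, not part of the original analysis: the dictionary name `APPROX_POINTS` and the helper `mean_by_model` are hypothetical, and the numbers are the visual estimates quoted above rather than measured results.

```python
from statistics import mean

# Approximate accuracies (0-100) copied from the data points listed above.
# These are visual estimates from the chart, not measured values.
APPROX_POINTS = {
    "Add & subtract": {
        "Yi-6B": 70, "ChatGLM3-6B": 65, "LLaMA2-7B": 45, "DeepSeekMath-7B": 70,
    },
    "Exponents & scientific notation": {
        "Yi-6B": 80, "ChatGLM3-6B": 70, "LLaMA2-7B": 30, "DeepSeekMath-7B": 75,
    },
    "Prime factorization": {
        "Yi-6B": 50, "ChatGLM3-6B": 40, "LLaMA2-7B": 20, "DeepSeekMath-7B": 50,
    },
    "Absolute value": {
        "Yi-6B": 70, "ChatGLM3-6B": 70, "LLaMA2-7B": 60, "DeepSeekMath-7B": 80,
    },
}


def mean_by_model(points):
    """Average each model's accuracy over the topics in `points` (hypothetical helper)."""
    models = list(next(iter(points.values())).keys())
    return {m: mean(scores[m] for scores in points.values()) for m in models}


averages = mean_by_model(APPROX_POINTS)

# Print models from highest to lowest average accuracy.
for model, avg in sorted(averages.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model}: {avg:.2f}")
```

On these four problem types alone, the averages are 68.75 for DeepSeekMath-7B, 67.5 for Yi-6B, 61.25 for ChatGLM3-6B, and 38.75 for LLaMA2-7B, so the gap between the top two models is small in this sample.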

### Key Observations

* DeepSeekMath-7B generally outperforms the other models.

* LLaMA2-7B consistently underperforms compared to the other models.

* Yi-6B and ChatGLM3-6B have similar performance, with Yi-6B often slightly better.

* There is significant variance in accuracy across different problem types for all models.

### Interpretation

The chart demonstrates the varying capabilities of different language models in solving math problems. DeepSeekMath-7B appears to be the most proficient, suggesting it may have been specifically trained or optimized for mathematical reasoning. LLaMA2-7B's lower performance indicates it may not be as well-suited for these types of tasks. The fluctuations in accuracy across different problem types highlight the challenges that even the best models face in handling the diverse range of mathematical concepts. The data suggests that model architecture and training data play a crucial role in determining a language model's ability to solve math problems accurately.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Model Accuracy on Math Problems

### Overview

This line chart compares the accuracy of four different language models – Yi-6B, ChatGLM3-6B, LLaMA2-7B, and DeepSeekMath-7B – across a range of mathematical problem types. The x-axis represents the problem type, and the y-axis represents the accuracy, ranging from 0 to 100. The chart displays the performance of each model as a line, allowing for a visual comparison of their strengths and weaknesses.

### Components/Axes

* **X-axis Title:** Problem Type (Categorical)

* **Y-axis Title:** Accuracy (Numerical, 0-100)

* **Legend:** Located at the top-center of the chart.

    * Yi-6B (Blue Line)

    * ChatGLM3-6B (Orange Line)

    * LLaMA2-7B (Green Line)

    * DeepSeekMath-7B (Black Line)

* **Problem Types (X-axis labels):**

    1. Arithmetic & significant figures

    2. Add & subtract

    3. Arithmetic & similar triangles

    4. Congruence

    5. Combining like terms

    6. Distance between two points

    7. Domain & range

    8. Estimate medical measurements

    9. Exponents & radicals

    10. Fractional exponents

    11. Integer exponents

    12. Linear functions

    13. Make inequalities

    14. Nonlinear multiple choice

    15. One variable equations

    16. Perimeter & area

    17. Prime factorization

    18. Probability of a single event

    19. Probability of compound events

    20. Probability of independent events

    21. Rational & irrational numbers

    22. Square roots & cube roots

    23. Systems of equations

    24. Two-variable equations

    25. Absolute value

    26. Center & variability

    27. Independent & dependent variables

    28. Mean, median, mode

    29. Polynomials

    30. Transformations

    31. Variable exponents

### Detailed Analysis

Here's a breakdown of each model's performance, based on the visual trends and approximate data points:

* **Yi-6B (Blue Line):** This line generally fluctuates between 20 and 80 accuracy. It shows a peak of approximately 85 accuracy around the "Linear functions" problem type. It dips to around 10-20 accuracy for "Rational & irrational numbers" and "Square roots & cube roots". The line exhibits significant volatility across different problem types.

* **ChatGLM3-6B (Orange Line):** This model demonstrates the lowest overall accuracy, consistently staying below 40. It has a slight peak around 35-40 for "Arithmetic & significant figures" and "Add & subtract". It reaches its lowest point, near 0, for "Square roots & cube roots". The line is relatively flat, indicating consistent low performance.

* **LLaMA2-7B (Green Line):** This model shows a moderate level of accuracy, generally between 30 and 70. It has a peak of approximately 70 accuracy around "Polynomials". It dips to around 20-30 for "Rational & irrational numbers" and "Square roots & cube roots". The line is more stable than Yi-6B, but less consistently high-performing than DeepSeekMath-7B.

* **DeepSeekMath-7B (Black Line):** This model consistently achieves the highest accuracy, frequently exceeding 80. It reaches a peak of approximately 90 accuracy around "Linear functions" and "Polynomials". It dips to around 50-60 for "Square roots & cube roots" and "Variable exponents". The line is generally smooth, indicating robust performance across most problem types.

### Key Observations

* DeepSeekMath-7B consistently outperforms the other models across almost all problem types.

* ChatGLM3-6B consistently underperforms, exhibiting the lowest accuracy.

* All models struggle with "Rational & irrational numbers" and "Square roots & cube roots", showing a significant drop in accuracy for these problem types.

* Yi-6B and LLaMA2-7B show more variability in their performance, with larger fluctuations in accuracy depending on the problem type.

* "Linear functions" and "Polynomials" appear to be the easiest problem types for the models, as they consistently achieve higher accuracy on these.

### Interpretation

The data suggests that DeepSeekMath-7B is the most capable model for solving a wide range of mathematical problems, likely due to its specialized training or architecture. ChatGLM3-6B appears to be the least effective, potentially indicating a lack of mathematical reasoning capabilities. The consistent struggles with "Rational & irrational numbers" and "Square roots & cube roots" across all models suggest these concepts are particularly challenging for language models, possibly due to the need for precise numerical manipulation and understanding of abstract mathematical principles. The variability in Yi-6B and LLaMA2-7B's performance highlights the importance of problem-specific expertise; these models may excel in certain areas but struggle in others. The chart demonstrates a clear hierarchy of performance among the models, with DeepSeekMath-7B setting a high benchmark for mathematical problem-solving. The differences in performance could be attributed to differences in model size, training data, and architectural choices. Further investigation into the training data and model architectures could provide insights into the reasons for these performance disparities.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Multi-Line Chart: Accuracy of Four AI Models Across Mathematical Topics

### Overview

This image is a multi-line chart comparing the performance (accuracy) of four different large language models (LLMs) across a wide range of mathematical problem categories. The chart visualizes how each model's accuracy fluctuates significantly depending on the specific mathematical topic.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **Y-Axis:**

    * **Label:** "Accuracy"

    * **Scale:** Linear, from 0 to 100.

    * **Major Ticks:** 0, 20, 40, 60, 80, 100.

* **X-Axis:**

    * **Label:** Not explicitly labeled, but contains a dense list of mathematical topics.

    * **Content:** A series of 52 distinct mathematical categories, listed from left to right. The text is rotated approximately 45 degrees for readability.

    * **Language:** All x-axis labels are in English.

* **Legend:**

    * **Position:** Top-center, above the plot area.

    * **Content:** Four entries, each associating a color and marker style with a model name.

        1. **Blue line with circle markers:** Yi-6B

        2. **Orange line with circle markers:** ChatGLM3-6B

        3. **Green line with circle markers:** LLaMA2-7B

        4. **Red line with circle markers:** DeepSeekMath-7B

### Detailed Analysis

The chart shows high variability in model performance. Below is an analysis grouped by general mathematical domain, listing approximate accuracy values (to the nearest 5%) for key points. Values are approximate due to visual estimation from the chart.

**1. Arithmetic & Basic Operations (Leftmost section):**

* **Trend:** Models show moderate to high accuracy, with significant divergence.

* **Data Points (Approx.):**

    * *Add & subtract:* Yi-6B ~70%, ChatGLM3-6B ~65%, LLaMA2-7B ~45%, DeepSeekMath-7B ~70%.

    * *Arithmetic sequences:* All models dip, with LLaMA2-7B lowest (~20%).

    * *Consumer math:* Yi-6B peaks (~75%), others are lower (30-50%).

**2. Algebra & Equations (Left-center section):**

* **Trend:** Extreme volatility. Some models achieve near-perfect scores on specific topics while failing on others.

* **Data Points (Approx.):**

    * *Linear equations:* Yi-6B ~70%, ChatGLM3-6B ~55%, LLaMA2-7B ~45%, DeepSeekMath-7B ~70%.

    * *Nonlinear functions:* A notable peak for DeepSeekMath-7B (~90%) and Yi-6B (~85%).

    * *Probability of compound events:* A major low point for all models. Yi-6B ~35%, ChatGLM3-6B ~10%, LLaMA2-7B ~15%, DeepSeekMath-7B ~15%.

**3. Geometry & Measurement (Center section):**

* **Trend:** Mixed performance. LLaMA2-7B consistently underperforms in this domain.

* **Data Points (Approx.):**

    * *Perimeter & area:* DeepSeekMath-7B ~90%, Yi-6B ~60%, ChatGLM3-6B ~65%, LLaMA2-7B ~40%.

    * *Pythagorean theorem:* DeepSeekMath-7B ~85%, others between 40-65%.

    * *Surface area & volume:* All models show a dip, with LLaMA2-7B near 0%.

**4. Advanced & Applied Topics (Right-center section):**

* **Trend:** Continued high variance. Some advanced topics see strong performance from specialized models.

* **Data Points (Approx.):**

    * *Two-variable statistics:* Yi-6B ~80%, DeepSeekMath-7B ~65%, others lower.

    * *Systems of equations:* DeepSeekMath-7B ~80%, Yi-6B ~70%, ChatGLM3-6B ~65%, LLaMA2-7B ~45%.

    * *Independent & dependent events:* A catastrophic drop for ChatGLM3-6B to ~0%. Others range from 20-75%.

**5. Calculus & Functions (Rightmost section):**

* **Trend:** DeepSeekMath-7B and Yi-6B show strong, leading performance.

* **Data Points (Approx.):**

    * *Transformations:* DeepSeekMath-7B ~90%, Yi-6B ~80%, ChatGLM3-6B ~60%, LLaMA2-7B ~25%.

    * *Variable exprs (final point):* DeepSeekMath-7B ~55%, Yi-6B ~60%, ChatGLM3-6B ~55%, LLaMA2-7B ~35%.

### Key Observations

1. **Model Specialization:** DeepSeekMath-7B (red) frequently achieves the highest peaks, especially in geometry, algebra, and calculus topics, suggesting strong mathematical specialization. Yi-6B (blue) is a consistent high performer across many domains.

2. **General Weakness:** LLaMA2-7B (green) is consistently the lowest or among the lowest performers across nearly all categories, rarely exceeding 50% accuracy.

3. **Topic-Specific Failures:** All models exhibit severe performance drops on specific topics. Notable universal low points include "Probability of compound events" and "Surface area & volume." ChatGLM3-6B has an extreme outlier near 0% on "Independent & dependent events."

4. **High Volatility:** No model demonstrates smooth, consistent performance. Accuracy is highly dependent on the specific mathematical concept being tested.
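The topic-specific low points noted above can be checked by averaging the approximate accuracies across the four models for each topic. The sketch below uses only those topics in this description for which all four values are quoted. The names `APPROX_ACCURACY` and `topics_by_difficulty` are illustrative assumptions, and the figures are visual estimates rather than benchmark results.

```python
from statistics import mean

# Approximate accuracies (%) quoted in the Detailed Analysis above.
# Value order: Yi-6B, ChatGLM3-6B, LLaMA2-7B, DeepSeekMath-7B.
APPROX_ACCURACY = {
    "Add & subtract": (70, 65, 45, 70),
    "Linear equations": (70, 55, 45, 70),
    "Probability of compound events": (35, 10, 15, 15),
    "Perimeter & area": (60, 65, 40, 90),
    "Systems of equations": (70, 65, 45, 80),
    "Transformations": (80, 60, 25, 90),
}


def topics_by_difficulty(table):
    """Return (topic, cross-model mean accuracy) pairs, hardest topic first."""
    pairs = ((topic, mean(values)) for topic, values in table.items())
    return sorted(pairs, key=lambda pair: pair[1])


ranked = topics_by_difficulty(APPROX_ACCURACY)
for topic, avg in ranked:
    print(f"{topic}: {avg:.2f}")
```

With these estimates, "Probability of compound events" has by far the lowest cross-model mean (18.75), while the other five topics fall between 60 and 65.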

### Interpretation

This chart demonstrates that mathematical reasoning in LLMs is not a monolithic capability but a highly fragmented one. Performance is exquisitely sensitive to the specific sub-domain of mathematics.

* **What the data suggests:** The models likely have uneven coverage in their training data or differing architectural biases for handling symbolic vs. numerical reasoning. The near-perfect scores on some topics (e.g., DeepSeekMath-7B on "Perimeter & area") contrasted with near-zero scores on others indicate brittle knowledge rather than robust, generalized mathematical understanding.

* **How elements relate:** The x-axis represents a spectrum of mathematical complexity and abstraction. The jagged, non-parallel lines show that model capabilities are not simply "better" or "worse" in a linear fashion; they are incomparable across different topics. A model strong in algebra may be weak in geometry.

* **Notable anomalies:** The catastrophic failure of ChatGLM3-6B on "Independent & dependent events" is a critical outlier. It suggests a potential fundamental gap in its training or reasoning approach for probabilistic concepts involving dependency, which is a core statistical idea. The universal struggle with "Probability of compound events" highlights a common challenge area for current LLMs in handling layered probabilistic logic.

**Conclusion for a Technical Document:** The chart provides compelling evidence that evaluating LLMs on "math" as a single category is insufficient. A granular, topic-by-topic analysis is required to understand a model's true capabilities and limitations. For applications requiring mathematical reasoning, model selection must be tightly coupled to the specific mathematical domain of the task.
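One way to read the point about coupling model selection to the mathematical domain is as a per-topic lookup rather than a single overall ranking. The following is a minimal sketch under that assumption. `MODELS`, `APPROX_ACCURACY`, and `best_models` are hypothetical names, and the values are the approximate chart readings quoted earlier in this description.

```python
# Model order used for every tuple of scores below.
MODELS = ("Yi-6B", "ChatGLM3-6B", "LLaMA2-7B", "DeepSeekMath-7B")

# Approximate accuracies (%) read from the chart description; visual estimates only.
APPROX_ACCURACY = {
    "Linear equations": (70, 55, 45, 70),
    "Perimeter & area": (60, 65, 40, 90),
    "Variable exprs": (60, 55, 35, 55),
}


def best_models(topic, table=APPROX_ACCURACY):
    """Return every model that ties for the highest accuracy on `topic`."""
    scores = table[topic]
    top = max(scores)
    return [model for model, score in zip(MODELS, scores) if score == top]


for topic in APPROX_ACCURACY:
    print(topic, "->", best_models(topic))
```

Returning a list rather than a single name keeps ties visible: on "Linear equations" both Yi-6B and DeepSeekMath-7B are estimated at about 70%, so neither is preferred on this evidence alone.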


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Chart: Model Accuracy Across Math Topics

### Overview

The chart compares the accuracy of four AI models (Yi-6B, ChatGLM3-6B, LLaMA2-7B, DeepSeekMath-7B) across 30+ math topics. Accuracy is measured on a 0–100% scale, with each model represented by a distinct colored line. The x-axis lists math topics, while the y-axis shows accuracy percentages.

### Components/Axes

- **Legend**: Top-left corner, with four entries:

    - **Yi-6B** (blue line)

    - **ChatGLM3-6B** (orange line)

    - **LLaMA2-7B** (green line)

    - **DeepSeekMath-7B** (red line)

- **X-axis**: Labeled "Math Topics," listing 30+ categories (e.g., "Add & subtract," "Probability & statistics," "Geometry & range").

- **Y-axis**: Labeled "Accuracy," with ticks at 0, 20, 40, 60, 80, 100.

### Detailed Analysis

1. **Yi-6B (Blue)**:

    - Peaks at ~95% in "Probability & statistics" and "Geometry & range."

    - Dips below 40% in "Linear equations" and "Nonlinear functions."

    - Average accuracy: ~65–75% across most topics.

2. **ChatGLM3-6B (Orange)**:

    - Strong performance in "Exponents & scientific notation" (~85%).

    - Struggles in "Linear equations" (~30%) and "Systems of equations" (~40%).

    - Average accuracy: ~55–70%.

3. **LLaMA2-7B (Green)**:

    - Consistently mid-range (40–60%) across most topics.

    - Peaks at ~70% in "Probability & statistics" and "Geometry & range."

    - Lowest accuracy: ~10% in "Linear equations."

4. **DeepSeekMath-7B (Red)**:

    - Highest overall accuracy (~85–95%) in "Probability & statistics," "Geometry & range," and "Exponents & scientific notation."

    - Dips below 40% in "Linear equations" and "Nonlinear functions."

    - Average accuracy: ~70–85%.

### Key Observations

- **Outliers**:

    - LLaMA2-7B (green) has the lowest accuracy in "Linear equations" (~10%).

    - DeepSeekMath-7B (red) achieves the highest accuracy in "Probability & statistics" (~95%).

- **Trends**:

    - Yi-6B and DeepSeekMath-7B show the most variability, with sharp peaks and troughs.

    - ChatGLM3-6B and LLaMA2-7B exhibit more stable but lower performance.

### Interpretation

The data highlights model-specific strengths and weaknesses:

- **DeepSeekMath-7B** excels in advanced topics like probability and geometry, suggesting robust training in these areas.

- **LLaMA2-7B** underperforms in linear equations, indicating potential gaps in foundational math training.

- **Yi-6B** and **ChatGLM3-6B** show mixed results, with Yi-6B performing better in high-variability topics and ChatGLM3-6B struggling in linear systems.

The chart underscores the importance of model selection based on the target math domain. For example, DeepSeekMath-7B would be preferable for probability tasks, while LLaMA2-7B might be avoided for linear equations.
