## Diagram: LLM Prompt Processing for Sentiment Classification

### Overview

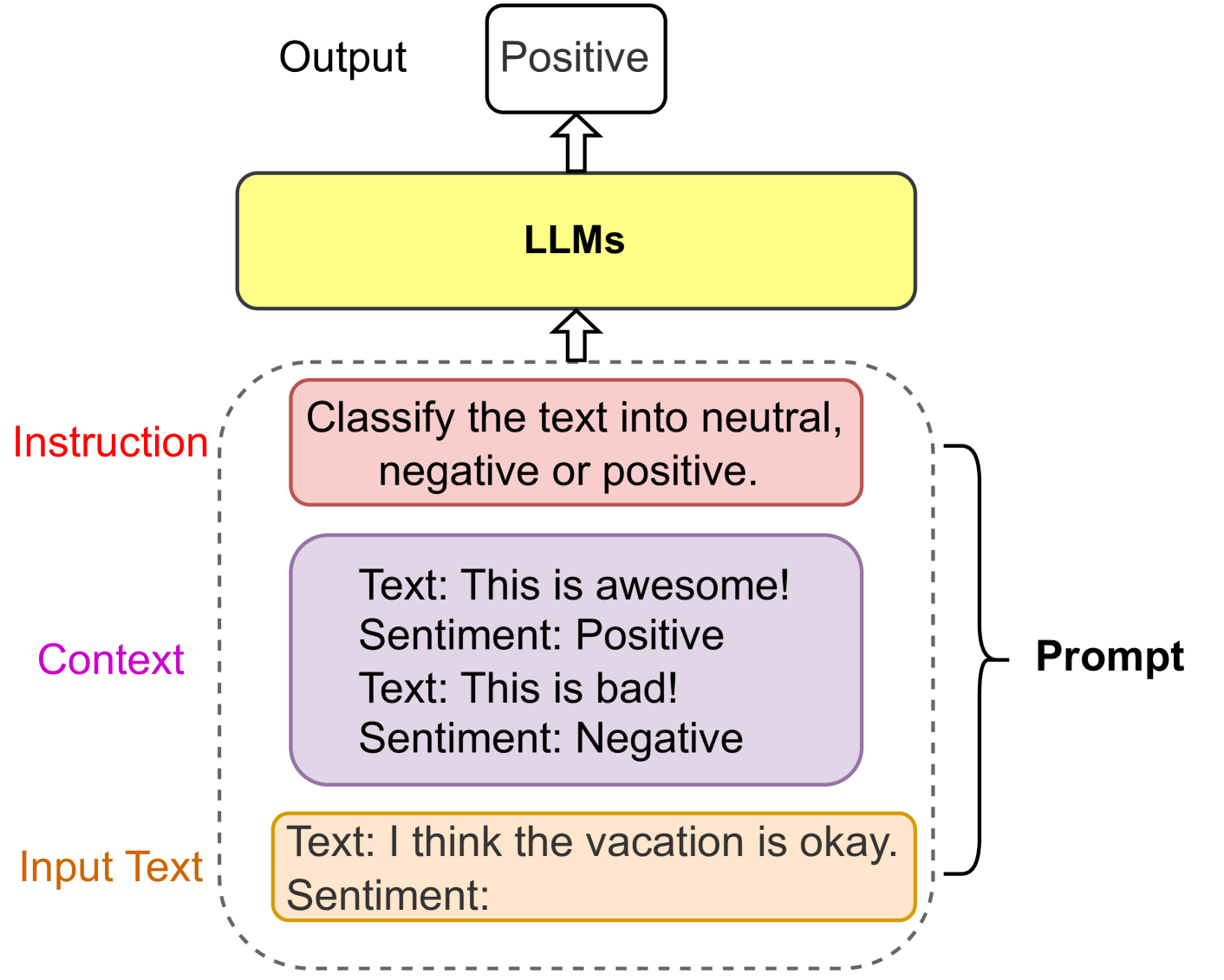

This flowchart illustrates how a Large Language Model (LLM) processes a structured prompt to perform a sentiment classification task. It traces the flow from the input components, through the LLM, to the final output.

### Components/Axes

The diagram is organized vertically with a clear upward flow indicated by arrows. The components are color-coded and labeled.

**Spatial Layout:**

* **Bottom Region:** Contains the "Input Text" component.

* **Middle Region (within a dashed box):** Contains the "Context" and "Instruction" components, collectively labeled as the "Prompt" on the right side.

* **Upper-Middle Region:** Contains the central "LLMs" processing block.

* **Top Region:** Contains the final "Output" component.

**Component Details (from bottom to top):**

1. **Input Text (Orange Box, Bottom):**

    * **Label (Left):** `Input Text` (in orange text).

    * **Content (Inside Box):**

        * `Text: I think the vacation is okay.`

        * `Sentiment:`

2. **Prompt (Dashed Box Enclosure):**

    * A dashed gray line encloses the "Instruction" and "Context" boxes.

    * A right curly brace `}` on the right side of this dashed box is labeled `Prompt` in black text.

3. **Context (Purple Box, Middle of dashed area):**

    * **Label (Left):** `Context` (in purple text).

    * **Content (Inside Box):**

        * `Text: This is awesome!`

        * `Sentiment: Positive`

        * `Text: This is bad!`

        * `Sentiment: Negative`

4. **Instruction (Red Box, Top of dashed area):**

    * **Label (Left):** `Instruction` (in red text).

    * **Content (Inside Box):** `Classify the text into neutral, negative or positive.`

5. **LLMs (Yellow Box, Center):**

    * A large, central yellow rectangle with rounded corners.

    * **Label (Inside Box):** `LLMs` in bold black text.

    * An upward-pointing arrow connects the dashed "Prompt" box to the bottom of this "LLMs" box.

6. **Output (White Box, Top):**

    * A white rectangle with a black border.

    * **Label (Left):** `Output` in black text.

    * **Content (Inside Box):** `Positive`

    * An upward-pointing arrow connects the top of the "LLMs" box to the bottom of this "Output" box.

### Detailed Analysis

The diagram lays out the structure of the input given to an LLM. Although the dashed "Prompt" box encloses only the Instruction and Context, the full model input has three distinct parts:

1. **Instruction:** A direct command specifying the task (`Classify the text into neutral, negative or positive.`).

2. **Context:** Few-shot examples providing the model with demonstrations of the task format and expected output. It includes two text-sentiment pairs.

3. **Input Text:** The new, unseen data point (`I think the vacation is okay.`) for which the model must generate a prediction.

The flow is linear and unidirectional: the combined input (Instruction + Context + Input Text) is fed into the LLMs block, which generates a single-word classification, `Positive`, as the Output.
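As a rough illustration of how the three parts could be combined into one string, here is a minimal Python sketch. The function name `build_prompt`, the newline separator, and the top-to-bottom ordering (Instruction, then Context, then Input Text) are assumptions made for illustration; the diagram shows the components but does not specify how they are concatenated.

```python
from typing import List, Tuple


def build_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    input_text: str,
) -> str:
    """Join Instruction, Context (few-shot pairs), and Input Text into one prompt.

    Hypothetical helper: the ordering and separators are assumptions.
    """
    lines = [instruction]
    # Context: one "Text:/Sentiment:" pair per demonstration.
    for text, sentiment in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Sentiment: {sentiment}")
    # Input Text: same format, with the label left blank for the model to fill in.
    lines.append(f"Text: {input_text}")
    lines.append("Sentiment:")
    return "\n".join(lines)


prompt = build_prompt(
    "Classify the text into neutral, negative or positive.",
    [("This is awesome!", "Positive"), ("This is bad!", "Negative")],
    "I think the vacation is okay.",
)
print(prompt)
```

The trailing `Sentiment:` line mirrors the Input Text box in the diagram: the prompt ends exactly where the model is expected to supply the label.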

### Key Observations

* **Structured Prompting:** The diagram highlights a "few-shot" or "in-context learning" prompting technique, where examples are provided within the prompt itself.

* **Color-Coding:** Each component type (Input Text, Context, Instruction) is consistently color-coded (orange, purple, red) both in its label and its box border.

* **Task Specificity:** The instruction is explicit and limits the output space to three categories: neutral, negative, or positive.

* **Example Discrepancy:** The "Context" provides examples for "Positive" and "Negative" sentiments but does not include an example for "Neutral," which is listed as a possible classification in the instruction.

* **Output Format:** The final output is a single categorical label, matching the format demonstrated in the context examples.
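The coverage gap noted above (a label named in the instruction but never demonstrated in the context) can be checked mechanically. The sketch below is one possible way to do it in Python; the helper name `missing_labels` and the regular expression are illustrative assumptions, not part of the diagram.

```python
import re
from typing import List


def missing_labels(instruction_labels: List[str], context: str) -> List[str]:
    """Return the labels from the instruction that no context example demonstrates."""
    # Collect the word that follows each "Sentiment:" at the start of a line.
    shown = {
        match.lower()
        for match in re.findall(r"^Sentiment:[ \t]*(\w+)", context, flags=re.MULTILINE)
    }
    return [label for label in instruction_labels if label.lower() not in shown]


context = (
    "Text: This is awesome!\n"
    "Sentiment: Positive\n"
    "Text: This is bad!\n"
    "Sentiment: Negative"
)
gaps = missing_labels(["neutral", "negative", "positive"], context)
print(gaps)  # ['neutral']
```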

### Interpretation

This diagram serves as an educational schematic of how modern LLMs can be directed to perform specific NLP tasks through carefully constructed prompts. It demonstrates the principle of **in-context learning**, where the model infers the task from the examples provided in the prompt at inference time, without any weight updates.

The relationship between components is sequential: The **Instruction** defines the goal, the **Context** provides the pattern, and the **Input Text** is the subject of the operation. The **LLMs** block acts as the inference engine that maps the structured input to one of the output categories.
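The end-to-end mapping from prompt to label can be sketched as follows. This is a hedged illustration, not a real model call: `generate` stands for any callable that takes a prompt string and returns text, `fake_generate` is a hard-coded stub standing in for the LLMs block, and the normalization rules in `classify` are assumptions.

```python
from typing import Callable, Sequence

ALLOWED = ("neutral", "negative", "positive")


def classify(
    prompt: str,
    generate: Callable[[str], str],
    allowed: Sequence[str] = ALLOWED,
) -> str:
    """Run the prompt through `generate` and validate the returned label."""
    raw = generate(prompt)
    tokens = raw.strip().split()
    # Keep only the first token, lowercased, with trailing punctuation removed.
    label = tokens[0].strip(".,!").lower() if tokens else ""
    if label not in allowed:
        raise ValueError(f"unexpected label: {raw!r}")
    return label


def fake_generate(prompt: str) -> str:
    # Stub standing in for the LLMs block; it always returns the diagram's output.
    return " Positive\n"


result = classify("Text: I think the vacation is okay.\nSentiment:", fake_generate)
print(result)  # positive
```

Validating against the three allowed labels reflects the instruction's restricted output space; anything outside it is surfaced as an error rather than silently accepted.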

A notable point for investigation is the **absence of a "Neutral" example** in the context. While the instruction includes "neutral" as a possible class, the model is only shown examples of "Positive" and "Negative." This could lead to ambiguity or bias in the model's classification of truly neutral statements, as it has not been shown what a neutral output looks like in this format. The chosen input text, "I think the vacation is okay," is itself a plausible candidate for a neutral sentiment, which makes it a useful test of the model's generalization beyond the provided context. The diagram shows the model outputting "Positive" and does not indicate whether that label is the intended answer. The output suggests either that the model interprets "okay" as leaning positive or that the lack of a neutral example shifts its decision boundary away from "neutral."