## Layered Architecture Diagram: Computational Framework Hierarchy

### Overview

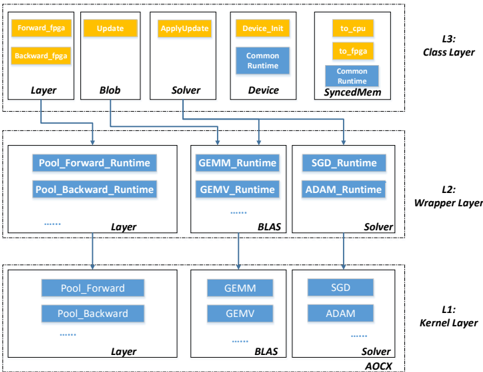

The image displays a three-tiered architectural diagram illustrating the hierarchical structure of a computational framework, likely for machine learning or numerical computing. The diagram shows how high-level class abstractions (L3) map through a wrapper layer (L2) to low-level kernel implementations (L1). The flow is top-down, with arrows indicating dependency or implementation relationships from higher to lower layers.

### Components/Axes

The diagram is organized into three horizontal layers, each labeled on the right side:

- **L3: Class Layer** (Top)

- **L2: Wrapper Layer** (Middle)

- **L1: Kernel Layer** (Bottom)

Each layer contains grouped components represented as boxes with internal text. Arrows connect components vertically between layers, showing a direct mapping from a component in a higher layer to its counterpart in the layer below.

**Color Coding:**

- **Yellow boxes/text:** Primarily used in L3 for tags and update operations.

- **Blue boxes/text:** Used in L2 for runtime functions and in L1 for kernel functions, and also for the `Device` and `SyncedMem` sub-components in L3. The blue in L2 appears slightly darker than in L1.

- **White boxes with black text:** Used for component group labels (e.g., "Layer", "BLAS", "Solver").

### Detailed Analysis

#### L3: Class Layer (Top)

This layer contains high-level class abstractions. Components are arranged from left to right:

1. **Layer**: Contains two yellow sub-components: `Forward_tags` and `Backward_tags`.

2. **Blob**: Contains one yellow sub-component: `Update`.

3. **Solver**: Contains one yellow sub-component: `ApplyUpdate`.

4. **Device**: Contains two blue sub-components: `Device_init` (top) and `Common Runtime` (bottom).

5. **SyncedMem**: Contains three blue sub-components: `to_cpu` (top), `to_gpu` (middle), and `Common Runtime` (bottom).
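The diagram names `to_cpu` and `to_gpu` but does not show how they behave. The following is a minimal sketch of one plausible reading, loosely modeled on the head-state pattern used by Caffe's `SyncedMemory` class; whether the diagrammed framework works this way is an assumption. The `Head` enum, the `copies` counter, and the `mutable_*` methods are hypothetical names introduced for illustration, and plain Python lists stand in for host and device memory.

```python
from enum import Enum, auto


class Head(Enum):
    """Which side holds the most recent copy of the data (hypothetical)."""
    UNINITIALIZED = auto()
    AT_CPU = auto()
    AT_GPU = auto()
    SYNCED = auto()


class SyncedMem:
    """Hypothetical host/device buffer pair that copies only when needed."""

    def __init__(self, size):
        self.size = size
        self.cpu_data = None  # stands in for host memory
        self.gpu_data = None  # stands in for device memory
        self.head = Head.UNINITIALIZED
        self.copies = 0       # number of simulated host<->device transfers

    def to_cpu(self):
        if self.head is Head.UNINITIALIZED:
            self.cpu_data = [0.0] * self.size
            self.head = Head.AT_CPU
        elif self.head is Head.AT_GPU:
            self.cpu_data = list(self.gpu_data)  # simulated device-to-host copy
            self.copies += 1
            self.head = Head.SYNCED
        return self.cpu_data

    def to_gpu(self):
        if self.head is Head.UNINITIALIZED:
            self.gpu_data = [0.0] * self.size
            self.head = Head.AT_GPU
        elif self.head is Head.AT_CPU:
            self.gpu_data = list(self.cpu_data)  # simulated host-to-device copy
            self.copies += 1
            self.head = Head.SYNCED
        return self.gpu_data

    def mutable_cpu_data(self):
        # The caller intends to write, so the host copy becomes the newest one.
        data = self.to_cpu()
        self.head = Head.AT_CPU
        return data

    def mutable_gpu_data(self):
        # The caller intends to write, so the device copy becomes the newest one.
        data = self.to_gpu()
        self.head = Head.AT_GPU
        return data
```

In this sketch a transfer happens only when the requested side is stale, so repeated reads from the same side cost nothing extra.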

#### L2: Wrapper Layer (Middle)

This layer contains runtime wrapper functions. Components are arranged from left to right:

1. **Group Label: "Layer"**: Contains blue sub-components: `Pool_Forward_Runtime`, `Pool_Backward_Runtime`, and an ellipsis (`...`) indicating additional functions.

2. **Group Label: "BLAS"**: Contains blue sub-components: `GEMM_Runtime`, `GMV_Runtime`, and an ellipsis (`...`).

3. **Group Label: "Solver"**: Contains blue sub-components: `SGD_Runtime`, `ADAM_Runtime`, and an ellipsis (`...`).

#### L1: Kernel Layer (Bottom)

This layer contains the core kernel implementations. Components are arranged from left to right:

1. **Group Label: "Layer"**: Contains blue sub-components: `Pool_Forward`, `Pool_Backward`, and an ellipsis (`...`).

2. **Group Label: "BLAS"**: Contains blue sub-components: `GEMM`, `GMV`, and an ellipsis (`...`).

3. **Group Label: "Solver"**: Contains blue sub-components: `SGD`, `ADAM`, and an ellipsis (`...`).

4. **Additional Label: "AOCX"**: Positioned at the bottom-right corner, below the "Solver" group. No arrow connects it to any other component, so its relationship to the rest of the diagram is not shown, but it sits within the L1 layer's boundary.
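For readers unfamiliar with the BLAS entries, here is a reference sketch of what the `GEMM` and `GMV` kernels would compute, assuming they follow the conventional BLAS definitions (the matrix-vector routine is normally called GEMV in BLAS; `GMV` is the diagram's label). The function names, argument order, and nested-list matrix format are illustrative choices, not taken from the diagram.

```python
def gemm(alpha, a, b, beta, c):
    """Return alpha * (a @ b) + beta * c for matrices stored as nested lists."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(inner)) + beta * c[i][j]
            for j in range(cols)
        ]
        for i in range(rows)
    ]


def gmv(alpha, a, x, beta, y):
    """Return alpha * (a @ x) + beta * y for a matrix a and vectors x, y."""
    return [
        alpha * sum(a[i][j] * x[j] for j in range(len(x))) + beta * y[i]
        for i in range(len(a))
    ]
```

A real L1 kernel would be written for the target device rather than in Python; the sketch only pins down the arithmetic.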

**Flow and Relationships:**

- Arrows originate from the bottom of each L3 component group and point to the top of the corresponding L2 component group.

- Arrows originate from the bottom of each L2 component group and point to the top of the corresponding L1 component group.

- This creates three clear vertical pipelines:

  - **Layer Pipeline:** L3 `Layer` → L2 `Layer` → L1 `Layer`

  - **BLAS Pipeline:** L3 `Blob` & `Solver` (indirectly via arrows from their group) → L2 `BLAS` → L1 `BLAS`

  - **Solver Pipeline:** L3 `Solver` → L2 `Solver` → L1 `Solver`

- The `Device` and `SyncedMem` components in L3 do not have direct downward arrows to L2, suggesting they may be cross-cutting concerns or used by multiple components.
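The Layer pipeline described above can be sketched as three nested calls. This is a minimal illustration under stated assumptions: the diagram gives only the names `Pool_Forward` and `Pool_Forward_Runtime`, so the `PoolingLayer` class, the function signatures, the 1-D max-pooling behavior, and the argument checks in the wrapper are all hypothetical.

```python
# L1, kernel layer: the raw computation (here, non-overlapping 1-D max pooling).
def pool_forward(src, window):
    return [max(src[i:i + window]) for i in range(0, len(src), window)]


# L2, wrapper layer: checks arguments, then launches the kernel.
def pool_forward_runtime(src, window):
    if window <= 0:
        raise ValueError("window must be positive")
    if len(src) % window != 0:
        raise ValueError("input length must be a multiple of window")
    return pool_forward(src, window)


# L3, class layer: the user-facing abstraction.
class PoolingLayer:
    def __init__(self, window):
        self.window = window

    def forward(self, bottom):
        # The class never touches the kernel directly; it goes through L2.
        return pool_forward_runtime(bottom, self.window)
```

Calling `PoolingLayer(2).forward([1, 3, 2, 5])` passes through all three layers. In an accelerator-backed framework the wrapper would more likely set kernel arguments and enqueue work on a device, which the direct function call here only stands in for.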

### Key Observations

1. **Strict Hierarchical Mapping:** The architecture enforces a clear separation of concerns, with each layer having a distinct responsibility (Abstraction, Wrapper/Runtime, Implementation).

2. **Naming Convention:** Function names follow a pattern where the L2 "Runtime" versions (e.g., `GEMM_Runtime`) directly correspond to the L1 kernel versions (e.g., `GEMM`).

3. **Ellipses Indicate Extensibility:** The use of `...` in L2 and L1 groups signifies that the shown functions (Pool, GEMM, SGD, ADAM) are examples, and the framework supports additional, unspecified operations.

4. **Color-Coded Functionality:** Yellow is used for the L3 tag and update operations (`Forward_tags`, `Backward_tags`, `Update`, `ApplyUpdate`), while blue is used for computational kernels, their runtime wrappers, and the `Device` and `SyncedMem` sub-components in L3.

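5. **Ambiguous Element:** The label `AOCX` in the bottom-right of L1 is not connected via the standard arrow flow. `.aocx` is the file extension of the compiled FPGA image produced by the Intel FPGA SDK for OpenCL offline compiler, so the label may indicate that the L1 kernels are built into such an image; it could also mark a separate build artifact or module.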
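The naming convention in observation 2 is regular enough to express mechanically. The sketch below derives the L1 kernel name from each L2 wrapper name shown in the diagram by stripping the `_Runtime` suffix. The helper `kernel_name` and the `KERNEL_FOR` table are hypothetical; the diagram does not say the framework performs any such lookup.

```python
RUNTIME_SUFFIX = "_Runtime"

# L2 wrapper names exactly as they appear in the diagram.
WRAPPERS = [
    "Pool_Forward_Runtime",
    "Pool_Backward_Runtime",
    "GEMM_Runtime",
    "GMV_Runtime",
    "SGD_Runtime",
    "ADAM_Runtime",
]


def kernel_name(runtime_name):
    """Map an L2 wrapper name to the L1 kernel name it corresponds to."""
    if not runtime_name.endswith(RUNTIME_SUFFIX):
        raise ValueError(f"{runtime_name!r} is not an L2 runtime name")
    return runtime_name[: -len(RUNTIME_SUFFIX)]


# Hypothetical lookup table from wrapper name to kernel name.
KERNEL_FOR = {name: kernel_name(name) for name in WRAPPERS}
```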

### Interpretation

This diagram illustrates a well-structured software architecture designed for performance portability and modularity, common in high-performance computing and machine learning libraries.

- **What it demonstrates:** The framework abstracts low-level, hardware-specific kernel implementations (L1) behind stable runtime interfaces (L2), which are then exposed through high-level, user-facing classes (L3). This allows the underlying kernels (e.g., for different CPUs, GPUs, or accelerators) to be swapped or optimized without changing the user API.

- **Relationships:** The vertical arrows represent an "implements" or "calls" relationship. For a pooling operation, for example, a user interacting with the `Layer` class (L3) would ultimately trigger `Pool_Forward_Runtime` (L2), which executes the `Pool_Forward` kernel (L1). The `Device` and `SyncedMem` classes in L3 likely provide essential services (initialization, memory management) used across all pipelines.

- **Notable Design Choices:** `Blob` (data container) and `Solver` (optimization algorithm) are separate classes in L3, yet their operations (`Update`, `ApplyUpdate`) feed the BLAS and Solver pipelines, which suggests a clean separation between data, computation, and optimization logic. The presence of both `GEMM` (general matrix-matrix multiply) and `GMV` (general matrix-vector multiply, conventionally named GEMV in BLAS) indicates support for fundamental linear algebra operations. The inclusion of `SGD` and `ADAM` points to a focus on stochastic gradient-based optimization, core to modern machine learning.

- **Uncertainty/Ambiguity:** The exact role of `AOCX` is unclear from the diagram alone. It may be a target platform identifier or a component outside the main three-layer hierarchy. The ellipses mean the full scope of supported operations is not shown.
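The claim that kernels can be swapped without changing the user API can be illustrated with the Solver pipeline. This is a sketch under stated assumptions, not the framework's actual mechanism: the `KERNELS` table, the `backend` parameter, both kernel function names, and the plain SGD rule `w - lr * g` (no momentum or weight decay) are hypothetical choices made for the example.

```python
# L1: two interchangeable kernels that compute the same plain SGD step.
def sgd_reference(weights, grads, lr):
    return [w - lr * g for w, g in zip(weights, grads)]


def sgd_alternative(weights, grads, lr):
    out = list(weights)
    for i, g in enumerate(grads):
        out[i] -= lr * g
    return out


# Hypothetical table of available kernel implementations.
KERNELS = {"reference": sgd_reference, "alternative": sgd_alternative}


# L2: a stable wrapper that hides which kernel actually runs.
def sgd_runtime(weights, grads, lr, backend="reference"):
    if len(weights) != len(grads):
        raise ValueError("weights and grads must have the same length")
    return KERNELS[backend](weights, grads, lr)


# L3: the user-facing class; its interface does not depend on the backend.
class Solver:
    def __init__(self, lr, backend="reference"):
        self.lr = lr
        self.backend = backend

    def apply_update(self, weights, grads):
        return sgd_runtime(weights, grads, self.lr, self.backend)
```

Code written against `Solver.apply_update` behaves the same whichever entry of `KERNELS` is selected, which is the property the layering is meant to provide.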