TECHNICAL ASSET FINGERPRINT

8e20b8f4c40d224f97dc22f7



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


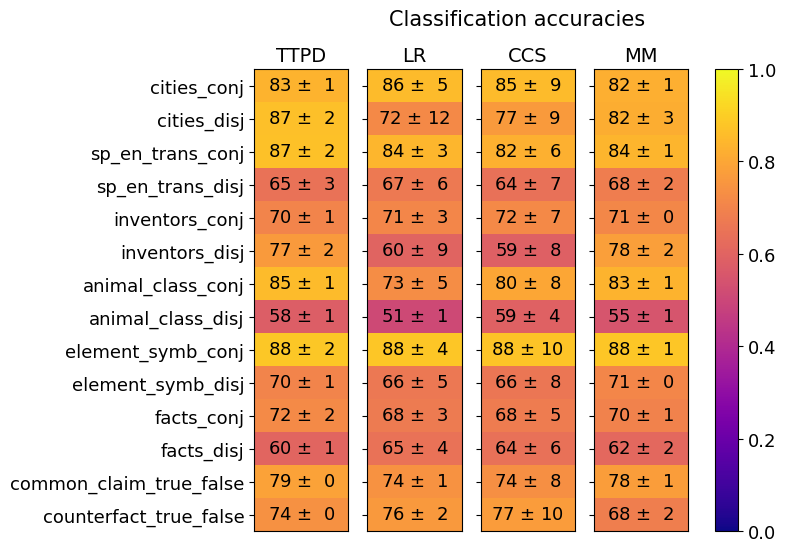

## Heatmap: Classification Accuracies

### Overview

The image is a heatmap displaying classification accuracies for four models (TTPD, LR, CCS, MM) across 14 categories (cities_conj, cities_disj, etc.). The color intensity represents the accuracy score, ranging from dark blue (low accuracy) to bright yellow (high accuracy). Each cell contains the accuracy as a percentage (for example, 83 corresponds to 0.83 on the colorbar) with its associated uncertainty.

### Components/Axes

* **Title:** Classification accuracies

* **Columns (Models):** TTPD, LR, CCS, MM

* **Rows (Categories):** cities\_conj, cities\_disj, sp\_en\_trans\_conj, sp\_en\_trans\_disj, inventors\_conj, inventors\_disj, animal\_class\_conj, animal\_class\_disj, element\_symb\_conj, element\_symb\_disj, facts\_conj, facts\_disj, common\_claim\_true\_false, counterfact\_true\_false

* **Colorbar:** Ranges from 0.0 (dark blue) to 1.0 (bright yellow), representing the classification accuracy score.

### Detailed Analysis

Here's a breakdown of the accuracy scores for each model and category:

* **TTPD:**

* cities\_conj: 83 ± 1

* cities\_disj: 87 ± 2

* sp\_en\_trans\_conj: 87 ± 2

* sp\_en\_trans\_disj: 65 ± 3

* inventors\_conj: 70 ± 1

* inventors\_disj: 77 ± 2

* animal\_class\_conj: 85 ± 1

* animal\_class\_disj: 58 ± 1

* element\_symb\_conj: 88 ± 2

* element\_symb\_disj: 70 ± 1

* facts\_conj: 72 ± 2

* facts\_disj: 60 ± 1

* common\_claim\_true\_false: 79 ± 0

* counterfact\_true\_false: 74 ± 0

* **LR:**

* cities\_conj: 86 ± 5

* cities\_disj: 72 ± 12

* sp\_en\_trans\_conj: 84 ± 3

* sp\_en\_trans\_disj: 67 ± 6

* inventors\_conj: 71 ± 3

* inventors\_disj: 60 ± 9

* animal\_class\_conj: 73 ± 5

* animal\_class\_disj: 51 ± 1

* element\_symb\_conj: 88 ± 4

* element\_symb\_disj: 66 ± 5

* facts\_conj: 68 ± 3

* facts\_disj: 65 ± 4

* common\_claim\_true\_false: 74 ± 1

* counterfact\_true\_false: 76 ± 2

* **CCS:**

* cities\_conj: 85 ± 9

* cities\_disj: 77 ± 9

* sp\_en\_trans\_conj: 82 ± 6

* sp\_en\_trans\_disj: 64 ± 7

* inventors\_conj: 72 ± 7

* inventors\_disj: 59 ± 8

* animal\_class\_conj: 80 ± 8

* animal\_class\_disj: 59 ± 4

* element\_symb\_conj: 88 ± 10

* element\_symb\_disj: 66 ± 8

* facts\_conj: 68 ± 5

* facts\_disj: 64 ± 6

* common\_claim\_true\_false: 74 ± 8

* counterfact\_true\_false: 77 ± 10

* **MM:**

* cities\_conj: 82 ± 1

* cities\_disj: 82 ± 3

* sp\_en\_trans\_conj: 84 ± 1

* sp\_en\_trans\_disj: 68 ± 2

* inventors\_conj: 71 ± 0

* inventors\_disj: 78 ± 2

* animal\_class\_conj: 83 ± 1

* animal\_class\_disj: 55 ± 1

* element\_symb\_conj: 88 ± 1

* element\_symb\_disj: 71 ± 0

* facts\_conj: 70 ± 1

* facts\_disj: 62 ± 2

* common\_claim\_true\_false: 78 ± 1

* counterfact\_true\_false: 68 ± 2

### Key Observations

* **High Accuracy:** The "element\_symb\_conj" category gives every model its highest score, exactly 88 in all four columns.

* **Low Accuracy:** The "animal\_class\_disj" category consistently shows lower accuracy across all models, ranging from 51 to 59.

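* **Model Performance:** TTPD has the highest average accuracy over the 14 categories (about 75.4), followed by MM (74.3), CCS (72.5) and LR (71.5). LR and CCS trail on several categories, most clearly "inventors\_disj" (60 and 59 versus 77 and 78). MM is generally consistent, but sometimes lower than TTPD.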

* **Uncertainty:** The uncertainty values vary across models and categories. LR and CCS often have larger standard deviations (up to ±12 and ±10) than TTPD and MM (at most ±3).
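The per-model averages behind these observations can be recomputed from the transcribed values. The following is a minimal Python sketch, assuming the numbers listed above are read correctly from the heatmap; `RESULTS` and `per_method_summary` are hypothetical names, and averaging across categories is only one way to summarize the grid.

```python
from statistics import mean

METHODS = ("TTPD", "LR", "CCS", "MM")

# (accuracy %, standard deviation) per category, in the column order of METHODS.
RESULTS = {
    "cities_conj":             [(83, 1), (86, 5), (85, 9), (82, 1)],
    "cities_disj":             [(87, 2), (72, 12), (77, 9), (82, 3)],
    "sp_en_trans_conj":        [(87, 2), (84, 3), (82, 6), (84, 1)],
    "sp_en_trans_disj":        [(65, 3), (67, 6), (64, 7), (68, 2)],
    "inventors_conj":          [(70, 1), (71, 3), (72, 7), (71, 0)],
    "inventors_disj":          [(77, 2), (60, 9), (59, 8), (78, 2)],
    "animal_class_conj":       [(85, 1), (73, 5), (80, 8), (83, 1)],
    "animal_class_disj":       [(58, 1), (51, 1), (59, 4), (55, 1)],
    "element_symb_conj":       [(88, 2), (88, 4), (88, 10), (88, 1)],
    "element_symb_disj":       [(70, 1), (66, 5), (66, 8), (71, 0)],
    "facts_conj":              [(72, 2), (68, 3), (68, 5), (70, 1)],
    "facts_disj":              [(60, 1), (65, 4), (64, 6), (62, 2)],
    "common_claim_true_false": [(79, 0), (74, 1), (74, 8), (78, 1)],
    "counterfact_true_false":  [(74, 0), (76, 2), (77, 10), (68, 2)],
}


def per_method_summary(results):
    """Return {method: (mean accuracy, mean standard deviation)} over all categories."""
    summary = {}
    for i, method in enumerate(METHODS):
        accuracies = [row[i][0] for row in results.values()]
        deviations = [row[i][1] for row in results.values()]
        summary[method] = (mean(accuracies), mean(deviations))
    return summary


if __name__ == "__main__":
    for method, (acc, sd) in per_method_summary(RESULTS).items():
        print(f"{method}: mean accuracy {acc:.1f}, mean SD {sd:.1f}")
```

With these values TTPD has the highest mean accuracy (about 75.4), MM the smallest mean standard deviation (about 1.3) and CCS the largest (7.5).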

### Interpretation

The heatmap provides a visual comparison of the classification accuracies of four different models across a range of categories. The color gradient allows for quick identification of high-performing and low-performing areas.

The data suggests that:

* Some categories are inherently easier to classify than others (e.g., "element\_symb\_conj" vs. "animal\_class\_disj").

* The TTPD model performs well across most categories, although it drops to 58 on "animal\_class\_disj" and 60 on "facts\_disj".

* The LR and CCS models have more variable performance, suggesting they might be more sensitive to the specific characteristics of each category.

* The MM model provides a consistent level of accuracy, although it may not reach the highest levels achieved by TTPD in some categories.

The uncertainty values indicate the variability in the model's performance. Higher uncertainty suggests that the model's accuracy can fluctuate more depending on the specific data it is trained on.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


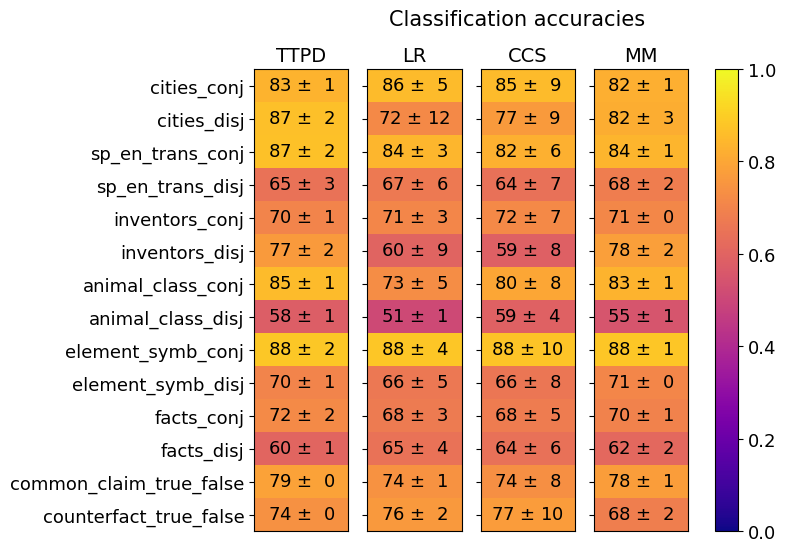

## Heatmap: Classification Accuracies

### Overview

This image presents a heatmap displaying classification accuracies for various datasets and methods. The heatmap uses a color gradient from dark blue (low accuracy) to bright yellow (high accuracy), on a scale from 0.0 to 1.0. The data is organized in a table format, with datasets listed on the y-axis and classification methods on the x-axis. Each cell shows the accuracy in percent as mean ± standard deviation for a specific dataset-method combination.

### Components/Axes

* **Y-axis (Datasets):**

* cities_conj

* cities_disj

* sp_en_trans_conj

* sp_en_trans_disj

* inventors_conj

* inventors_disj

* animal_class_conj

* animal_class_disj

* element_symb_conj

* element_symb_disj

* facts_conj

* facts_disj

* common_claim_true_false

* counterfact_true_false

* **X-axis (Classification Methods):**

* TTPD

* LR

* CCS

* MM

* **Color Scale (Accuracy):**

* 0.0 (Dark Blue)

* 0.2 (Blue)

* 0.4 (Light Blue)

* 0.6 (Green)

* 0.8 (Yellow)

* 1.0 (Bright Yellow)

* **Title:** Classification accuracies

### Detailed Analysis

The heatmap displays accuracy scores with standard deviations. The values are presented as "mean ± standard deviation".

* **TTPD:**

* cities_conj: 83 ± 1

* cities_disj: 87 ± 2

* sp_en_trans_conj: 87 ± 2

* sp_en_trans_disj: 65 ± 3

* inventors_conj: 70 ± 1

* inventors_disj: 77 ± 2

* animal_class_conj: 85 ± 1

* animal_class_disj: 58 ± 1

* element_symb_conj: 88 ± 2

* element_symb_disj: 70 ± 1

* facts_conj: 72 ± 2

* facts_disj: 60 ± 1

* common_claim_true_false: 79 ± 0

* counterfact_true_false: 74 ± 0

* **LR:**

* cities_conj: 86 ± 5

* cities_disj: 72 ± 12

* sp_en_trans_conj: 84 ± 3

* sp_en_trans_disj: 67 ± 6

* inventors_conj: 71 ± 3

* inventors_disj: 60 ± 9

* animal_class_conj: 73 ± 5

* animal_class_disj: 51 ± 1

* element_symb_conj: 88 ± 4

* element_symb_disj: 66 ± 5

* facts_conj: 68 ± 3

* facts_disj: 65 ± 4

* common_claim_true_false: 74 ± 1

* counterfact_true_false: 76 ± 2

* **CCS:**

* cities_conj: 85 ± 9

* cities_disj: 77 ± 9

* sp_en_trans_conj: 82 ± 6

* sp_en_trans_disj: 64 ± 7

* inventors_conj: 72 ± 7

* inventors_disj: 59 ± 8

* animal_class_conj: 80 ± 8

* animal_class_disj: 59 ± 4

* element_symb_conj: 88 ± 10

* element_symb_disj: 66 ± 8

* facts_conj: 68 ± 5

* facts_disj: 64 ± 6

* common_claim_true_false: 74 ± 8

* counterfact_true_false: 77 ± 10

* **MM:**

* cities_conj: 82 ± 1

* cities_disj: 82 ± 3

* sp_en_trans_conj: 84 ± 1

* sp_en_trans_disj: 68 ± 2

* inventors_conj: 71 ± 0

* inventors_disj: 78 ± 2

* animal_class_conj: 83 ± 1

* animal_class_disj: 55 ± 1

* element_symb_conj: 88 ± 1

* element_symb_disj: 71 ± 0

* facts_conj: 70 ± 1

* facts_disj: 62 ± 2

* common_claim_true_false: 78 ± 1

* counterfact_true_false: 68 ± 2

### Key Observations

* The "element_symb_conj" dataset achieves the highest accuracy for every method, with a score of 88 (0.88 on the color scale) in all four columns.

* "animal_class_disj", "facts_disj" and "sp_en_trans_disj" have the lowest accuracy scores, averaging about 56, 63 and 66 across methods.

* TTPD and MM generally perform better than LR and CCS, most clearly on "cities_disj" and "inventors_disj"; on most "conj" datasets the four methods are within five points of each other.

* The standard deviations are small for TTPD and MM (±3 or less) but larger for LR and CCS (up to ±12 and ±10), so the latter two give less consistent results.
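Which datasets are hardest can be checked by averaging each row over the four methods. A minimal Python sketch, assuming the accuracies above are transcribed correctly; `ACCURACY` and `rank_datasets` are illustrative names only, and a row average ignores the standard deviations.

```python
from statistics import mean

# Mean accuracy (%) per dataset for TTPD, LR, CCS and MM, in that order.
ACCURACY = {
    "cities_conj":             [83, 86, 85, 82],
    "cities_disj":             [87, 72, 77, 82],
    "sp_en_trans_conj":        [87, 84, 82, 84],
    "sp_en_trans_disj":        [65, 67, 64, 68],
    "inventors_conj":          [70, 71, 72, 71],
    "inventors_disj":          [77, 60, 59, 78],
    "animal_class_conj":       [85, 73, 80, 83],
    "animal_class_disj":       [58, 51, 59, 55],
    "element_symb_conj":       [88, 88, 88, 88],
    "element_symb_disj":       [70, 66, 66, 71],
    "facts_conj":              [72, 68, 68, 70],
    "facts_disj":              [60, 65, 64, 62],
    "common_claim_true_false": [79, 74, 74, 78],
    "counterfact_true_false":  [74, 76, 77, 68],
}


def rank_datasets(accuracy):
    """Return dataset names sorted by accuracy averaged over methods, lowest first."""
    return sorted(accuracy, key=lambda name: mean(accuracy[name]))


if __name__ == "__main__":
    for name in rank_datasets(ACCURACY)[:3]:
        print(name, mean(ACCURACY[name]))
```

On these values the three lowest rows are "animal_class_disj" (55.75), "facts_disj" (62.75) and "sp_en_trans_disj" (66), so "facts_disj" belongs in the low-accuracy group as well.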

### Interpretation

The heatmap demonstrates the performance of four different classification methods (TTPD, LR, CCS, MM) on fourteen different datasets. The results suggest that the choice of method strongly affects accuracy on some datasets (e.g., "inventors_disj", where TTPD and MM score 77 and 78 versus 60 and 59 for LR and CCS), and that certain datasets are more challenging to classify than others. TTPD and MM combine competitive accuracy with low variance, which makes them the more dependable choices for these tasks. The low accuracy on "animal_class_disj", "facts_disj" and "sp_en_trans_disj" suggests that these datasets may require more sophisticated methods or feature engineering. The standard deviations of TTPD and MM (±3 or less) indicate stable results, whereas those of LR and CCS (up to ±12) show that their accuracy is considerably less stable. The "conj" datasets usually score higher than the corresponding "disj" datasets (in 20 of 24 method-dataset pairs), suggesting that disjunctive statements make the classification task harder. The heatmap provides a clear visual comparison of method performance across datasets, enabling informed decisions about which method to use for a given task.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Heatmap: Classification Accuracies

### Overview

The image is a heatmap titled "Classification accuracies" that displays the performance (accuracy with standard deviation) of four different methods (TTPD, LR, CCS, MM) across 14 distinct classification tasks or datasets. The tasks are listed as rows, and the methods as columns. Each cell contains a numerical accuracy value (as a percentage) followed by its standard deviation (±). A color bar on the right provides a visual scale for the accuracy values, ranging from 0.0 (dark purple) to 1.0 (bright yellow).

### Components/Axes

* **Title:** "Classification accuracies" (top center).

* **Column Headers (Methods):** TTPD, LR, CCS, MM (top row, left to right).

* **Row Labels (Tasks/Datasets):** Listed vertically on the left side. The 14 tasks are:

1. `cities_conj`

2. `cities_disj`

3. `sp_en_trans_conj`

4. `sp_en_trans_disj`

5. `inventors_conj`

6. `inventors_disj`

7. `animal_class_conj`

8. `animal_class_disj`

9. `element_symb_conj`

10. `element_symb_disj`

11. `facts_conj`

12. `facts_disj`

13. `common_claim_true_false`

14. `counterfact_true_false`

* **Color Bar/Legend:** Positioned vertically on the far right. It maps color to accuracy value, with a scale marked at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0. The gradient runs from dark purple (0.0) through red/orange to bright yellow (1.0).

* **Data Grid:** A 14-row by 4-column grid of colored cells, each containing the text "[Accuracy] ± [Standard Deviation]".

### Detailed Analysis

The following table reconstructs the data from the heatmap. Values are percentages.

| Task | TTPD | LR | CCS | MM |
| :--- | :--- | :--- | :--- | :--- |
| **cities_conj** | 83 ± 1 | 86 ± 5 | 85 ± 9 | 82 ± 1 |
| **cities_disj** | 87 ± 2 | 72 ± 12 | 77 ± 9 | 82 ± 3 |
| **sp_en_trans_conj** | 87 ± 2 | 84 ± 3 | 82 ± 6 | 84 ± 1 |
| **sp_en_trans_disj** | 65 ± 3 | 67 ± 6 | 64 ± 7 | 68 ± 2 |
| **inventors_conj** | 70 ± 1 | 71 ± 3 | 72 ± 7 | 71 ± 0 |
| **inventors_disj** | 77 ± 2 | 60 ± 9 | 59 ± 8 | 78 ± 2 |
| **animal_class_conj** | 85 ± 1 | 73 ± 5 | 80 ± 8 | 83 ± 1 |
| **animal_class_disj** | 58 ± 1 | 51 ± 1 | 59 ± 4 | 55 ± 1 |
| **element_symb_conj** | 88 ± 2 | 88 ± 4 | 88 ± 10 | 88 ± 1 |
| **element_symb_disj** | 70 ± 1 | 66 ± 5 | 66 ± 8 | 71 ± 0 |
| **facts_conj** | 72 ± 2 | 68 ± 3 | 68 ± 5 | 70 ± 1 |
| **facts_disj** | 60 ± 1 | 65 ± 4 | 64 ± 6 | 62 ± 2 |
| **common_claim_true_false** | 79 ± 0 | 74 ± 1 | 74 ± 8 | 78 ± 1 |
| **counterfact_true_false** | 74 ± 0 | 76 ± 2 | 77 ± 10 | 68 ± 2 |
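If the table needs to be analyzed programmatically, its "mean ± SD" cells can be parsed with a few lines of Python. This is a sketch that assumes exactly the layout shown (a header row, an alignment row, bold task names and integer values); `parse_table` is a hypothetical helper, not part of any library. Blank lines between rows are skipped, so it also tolerates a table with spacing between rows.

```python
import re

TABLE = """\
| Task | TTPD | LR | CCS | MM |
| :--- | :--- | :--- | :--- | :--- |
| **cities_conj** | 83 ± 1 | 86 ± 5 | 85 ± 9 | 82 ± 1 |
| **cities_disj** | 87 ± 2 | 72 ± 12 | 77 ± 9 | 82 ± 3 |
| **sp_en_trans_conj** | 87 ± 2 | 84 ± 3 | 82 ± 6 | 84 ± 1 |
| **sp_en_trans_disj** | 65 ± 3 | 67 ± 6 | 64 ± 7 | 68 ± 2 |
| **inventors_conj** | 70 ± 1 | 71 ± 3 | 72 ± 7 | 71 ± 0 |
| **inventors_disj** | 77 ± 2 | 60 ± 9 | 59 ± 8 | 78 ± 2 |
| **animal_class_conj** | 85 ± 1 | 73 ± 5 | 80 ± 8 | 83 ± 1 |
| **animal_class_disj** | 58 ± 1 | 51 ± 1 | 59 ± 4 | 55 ± 1 |
| **element_symb_conj** | 88 ± 2 | 88 ± 4 | 88 ± 10 | 88 ± 1 |
| **element_symb_disj** | 70 ± 1 | 66 ± 5 | 66 ± 8 | 71 ± 0 |
| **facts_conj** | 72 ± 2 | 68 ± 3 | 68 ± 5 | 70 ± 1 |
| **facts_disj** | 60 ± 1 | 65 ± 4 | 64 ± 6 | 62 ± 2 |
| **common_claim_true_false** | 79 ± 0 | 74 ± 1 | 74 ± 8 | 78 ± 1 |
| **counterfact_true_false** | 74 ± 0 | 76 ± 2 | 77 ± 10 | 68 ± 2 |
"""

# Matches a cell such as "72 ± 12" and captures the mean and the standard deviation.
CELL = re.compile(r"^\s*(\d+)\s*±\s*(\d+)\s*$")


def split_row(line):
    """Split a pipe-table row into stripped cell strings."""
    return [cell.strip() for cell in line.strip().strip("|").split("|")]


def parse_table(markdown):
    """Parse 'mean ± sd' cells into {task: {method: (mean, sd)}}."""
    lines = [line for line in markdown.splitlines() if line.strip()]
    methods = split_row(lines[0])[1:]
    data = {}
    for line in lines[2:]:  # skip the header row and the ':---' alignment row
        cells = split_row(line)
        task = cells[0].strip("*")  # drop the bold markers around the task name
        row = {}
        for method, cell in zip(methods, cells[1:]):
            match = CELL.match(cell)
            if match is None:
                raise ValueError(f"unexpected cell {cell!r} in row {task}")
            row[method] = (int(match.group(1)), int(match.group(2)))
        data[task] = row
    return data


if __name__ == "__main__":
    parsed = parse_table(TABLE)
    print(len(parsed), parsed["cities_disj"])
```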

**Visual Trend Verification:**

* **High Accuracy (Yellow/Orange Cells):** The `element_symb_conj` row is uniformly bright yellow/orange, indicating consistently high accuracy (~88%) across all methods. The `cities_conj` and `sp_en_trans_conj` rows also show strong performance.

* **Low Accuracy (Purple/Red Cells):** The `animal_class_disj` row contains the darkest cells, particularly for LR (51 ± 1), indicating the lowest performance in the set. The `sp_en_trans_disj` and `facts_disj` rows also show relatively lower accuracies.

* **Method Consistency:** The TTPD and MM columns generally show lower standard deviations (e.g., ±0, ±1, ±2) compared to LR and CCS, which often have higher variance (e.g., ±12, ±10), suggesting more stable performance for TTPD and MM across runs or folds.

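* **Task Difficulty Pattern:** In 20 of the 24 method-task pairs, the "_disj" variant scores lower than its "_conj" counterpart; for `sp_en_trans`, `animal_class`, `element_symb` and `facts` this holds for every method. The exceptions are TTPD on `cities` (87 vs. 83) and `inventors` (77 vs. 70), MM on `inventors` (78 vs. 71), and MM on `cities`, where both variants score 82.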
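The conj/disj pattern can be made exact by counting, for each domain and method, whether the "_disj" score is lower than, equal to, or higher than the "_conj" score. A minimal Python sketch, assuming the table values above; `compare_conj_disj` is a hypothetical name, and it compares mean accuracies only, ignoring the standard deviations.

```python
METHODS = ("TTPD", "LR", "CCS", "MM")

# Mean accuracy (%) for the paired conj/disj tasks, in the column order of METHODS.
ACCURACY = {
    "cities_conj":       [83, 86, 85, 82],
    "cities_disj":       [87, 72, 77, 82],
    "sp_en_trans_conj":  [87, 84, 82, 84],
    "sp_en_trans_disj":  [65, 67, 64, 68],
    "inventors_conj":    [70, 71, 72, 71],
    "inventors_disj":    [77, 60, 59, 78],
    "animal_class_conj": [85, 73, 80, 83],
    "animal_class_disj": [58, 51, 59, 55],
    "element_symb_conj": [88, 88, 88, 88],
    "element_symb_disj": [70, 66, 66, 71],
    "facts_conj":        [72, 68, 68, 70],
    "facts_disj":        [60, 65, 64, 62],
}


def compare_conj_disj(accuracy):
    """Group (domain, method) pairs by whether the _disj score is lower, equal or higher."""
    outcome = {"lower": [], "equal": [], "higher": []}
    # "sp_en_trans_conj" -> "sp_en_trans": strip only the final suffix.
    domains = sorted({
        name.rsplit("_", 1)[0]
        for name in accuracy
        if name.endswith(("_conj", "_disj"))
    })
    for domain in domains:
        conj = accuracy[f"{domain}_conj"]
        disj = accuracy[f"{domain}_disj"]
        for method, c, d in zip(METHODS, conj, disj):
            key = "lower" if d < c else "equal" if d == c else "higher"
            outcome[key].append((domain, method))
    return outcome


if __name__ == "__main__":
    for key, pairs in compare_conj_disj(ACCURACY).items():
        print(key, len(pairs), pairs if key != "lower" else "")
```

For these values it reports 20 pairs where the "_disj" score is lower, one tie (MM on `cities`) and three pairs where it is higher (TTPD on `cities` and `inventors`, MM on `inventors`).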

### Key Observations

1. **Best Overall Performance:** The task `element_symb_conj` achieves the highest and most consistent accuracy (88%) across all four methods.

2. **Worst Overall Performance:** The task `animal_class_disj` has the lowest accuracy, with LR performing worst at 51 ± 1%.

3. **Largest Performance Gap:** The `inventors_disj` task shows a significant gap between methods, with TTPD (77%) and MM (78%) far outperforming LR (60%) and CCS (59%).

4. **Highest Variance:** The LR and CCS methods exhibit the highest standard deviations in several cells (e.g., LR on `cities_disj`: ±12, CCS on `element_symb_conj`: ±10), indicating less reliable or more variable results for those method-task combinations.

5. **Task Naming Convention:** The row labels suggest a systematic evaluation across different knowledge domains (cities, translations, inventors, animal classification, element symbols, general facts) and logical constructs ("_conj" likely for conjunctions, "_disj" for disjunctions, and "true_false" for binary verification tasks).
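The "largest performance gap" can be confirmed by computing, for every task, the spread between the best and the worst method. A minimal sketch, again assuming the table is transcribed correctly; `spreads` and `largest_gap` are illustrative names.

```python
# Mean accuracy (%) per task for TTPD, LR, CCS and MM, in that order.
ACCURACY = {
    "cities_conj":             [83, 86, 85, 82],
    "cities_disj":             [87, 72, 77, 82],
    "sp_en_trans_conj":        [87, 84, 82, 84],
    "sp_en_trans_disj":        [65, 67, 64, 68],
    "inventors_conj":          [70, 71, 72, 71],
    "inventors_disj":          [77, 60, 59, 78],
    "animal_class_conj":       [85, 73, 80, 83],
    "animal_class_disj":       [58, 51, 59, 55],
    "element_symb_conj":       [88, 88, 88, 88],
    "element_symb_disj":       [70, 66, 66, 71],
    "facts_conj":              [72, 68, 68, 70],
    "facts_disj":              [60, 65, 64, 62],
    "common_claim_true_false": [79, 74, 74, 78],
    "counterfact_true_false":  [74, 76, 77, 68],
}


def spreads(accuracy):
    """Map each task to (best - worst) accuracy across the four methods."""
    return {task: max(values) - min(values) for task, values in accuracy.items()}


def largest_gap(accuracy):
    """Return (task, spread) for the task on which the methods disagree most."""
    gaps = spreads(accuracy)
    task = max(gaps, key=gaps.get)
    return task, gaps[task]


if __name__ == "__main__":
    print(largest_gap(ACCURACY))
```

On these values it returns `("inventors_disj", 19)`; the next widest spread is `cities_disj` at 15 points, and `element_symb_conj` has none.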

### Interpretation

This heatmap provides a comparative benchmark of four classification methods across a diverse set of tasks, likely from a natural language processing or knowledge reasoning domain. The data suggests several insights:

* **Task Complexity:** Accuracy drops from the "_conj" to the "_disj" variant in 20 of 24 method-task pairs, which suggests that disjunctive statements (involving "or") are generally harder for these methods than conjunctive ones (involving "and"). The effect is not uniform: TTPD and MM show no drop on `cities` and `inventors`.

* **Method Specialization:** No single method wins on every task. TTPD and MM appear more robust (lower variance) and perform particularly well on tasks like `inventors_disj` and `animal_class_conj`. LR and CCS, while sometimes competitive (e.g., LR at 86 on `cities_conj`), show greater instability and fall far behind on `inventors_disj` (60 and 59 versus 77 and 78 for TTPD and MM).

* **Domain-Specific Strengths:** The identical mean accuracy on `element_symb_conj` (88% for every method) suggests this task may depend more on factual recall (knowing element symbols) than on complex reasoning, making it roughly equally solvable by all approaches. In contrast, domains such as `animal_class` and `inventors` reveal larger performance disparities between methods.

* **Reliability Indicator:** The standard deviation values are crucial. A method with high accuracy but high variance (like CCS on `element_symb_conj`: 88 ± 10) may be less trustworthy in practice than a slightly less accurate but more stable method (like MM on the same task: 88 ± 1).
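One hypothetical way to turn the reliability argument into a single number is to rank methods by a conservative score, the mean minus k standard deviations. This criterion is an assumption for illustration, not something the heatmap or its source defines; the sketch applies it with k = 1 to the `element_symb_conj` row.

```python
# (mean accuracy %, standard deviation) for element_symb_conj, from the table above.
ELEMENT_SYMB_CONJ = {"TTPD": (88, 2), "LR": (88, 4), "CCS": (88, 10), "MM": (88, 1)}


def rank_by_lower_bound(cells, k=1.0):
    """Sort methods by mean - k * sd (a hypothetical conservative score), best first."""
    return sorted(
        cells,
        key=lambda method: cells[method][0] - k * cells[method][1],
        reverse=True,  # sorted() stays stable, so exact ties keep their input order
    )


if __name__ == "__main__":
    for method in rank_by_lower_bound(ELEMENT_SYMB_CONJ):
        mean_acc, sd = ELEMENT_SYMB_CONJ[method]
        print(f"{method}: {mean_acc - sd}")
```

Because the four means are equal here, the ranking is driven entirely by the spread: MM (87), TTPD (86), LR (84), CCS (78). A larger k penalizes variance more heavily; k = 0 reduces to ranking by mean accuracy alone.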

In summary, the heatmap reveals that the choice of optimal method is highly dependent on the specific nature of the classification task, with clear patterns emerging around logical structure and knowledge domain. It serves as a diagnostic tool to identify strengths, weaknesses, and reliability of different algorithmic approaches.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Heatmap: Classification Accuracies

### Overview

The image is a heatmap visualizing classification accuracies across different methods (TTPD, LR, CCS, MM) and datasets (e.g., cities, inventors, animal classes). Values are represented by color intensity (purple = low, yellow = high) and annotated with numerical accuracies and standard deviations.

### Components/Axes

- **Y-axis (Categories)**:

- cities_conj, cities_disj

- sp_en_trans_conj, sp_en_trans_disj

- inventors_conj, inventors_disj

- animal_class_conj, animal_class_disj

- element_symb_conj, element_symb_disj

- facts_conj, facts_disj

- common_claim_true_false, counterfact_true_false

- **X-axis (Methods)**: TTPD, LR, CCS, MM

- **Legend**: Color scale from 0.0 (purple) to 1.0 (yellow), with intermediate values (0.2, 0.4, 0.6, 0.8).

- **Title**: "Classification accuracies" (top center).

### Detailed Analysis

#### Y-axis Categories and Method Performance

1. **cities_conj**:

- TTPD: 83 ± 1 (light yellow)

- LR: 86 ± 5 (yellow)

- CCS: 85 ± 9 (yellow)

- MM: 82 ± 1 (yellow)

2. **cities_disj**:

- TTPD: 87 ± 2 (yellow)

- LR: 72 ± 12 (orange)

- CCS: 77 ± 9 (orange)

- MM: 82 ± 3 (yellow)

3. **sp_en_trans_conj**:

- TTPD: 87 ± 2 (yellow)

- LR: 84 ± 3 (yellow)

- CCS: 82 ± 6 (orange)

- MM: 84 ± 1 (yellow)

4. **sp_en_trans_disj**:

- TTPD: 65 ± 3 (orange)

- LR: 67 ± 6 (orange)

- CCS: 64 ± 7 (orange)

- MM: 68 ± 2 (orange)

5. **inventors_conj**:

- TTPD: 70 ± 1 (orange)

- LR: 71 ± 3 (orange)

- CCS: 72 ± 7 (orange)

- MM: 71 ± 0 (orange)

6. **inventors_disj**:

- TTPD: 77 ± 2 (orange)

- LR: 60 ± 9 (red)

- CCS: 59 ± 8 (red)

- MM: 78 ± 2 (orange)

7. **animal_class_conj**:

- TTPD: 85 ± 1 (yellow)

- LR: 73 ± 5 (orange)

- CCS: 80 ± 8 (orange)

- MM: 83 ± 1 (yellow)

8. **animal_class_disj**:

- TTPD: 58 ± 1 (red)

- LR: 51 ± 1 (red)

- CCS: 59 ± 4 (red)

- MM: 55 ± 1 (red)

9. **element_symb_conj**:

- TTPD: 88 ± 2 (yellow)

- LR: 88 ± 4 (yellow)

- CCS: 88 ± 10 (yellow)

- MM: 88 ± 1 (yellow)

10. **element_symb_disj**:

- TTPD: 70 ± 1 (orange)

- LR: 66 ± 5 (orange)

- CCS: 66 ± 8 (orange)

- MM: 71 ± 0 (orange)

11. **facts_conj**:

- TTPD: 72 ± 2 (orange)

- LR: 68 ± 3 (orange)

- CCS: 68 ± 5 (orange)

- MM: 70 ± 1 (orange)

12. **facts_disj**:

- TTPD: 60 ± 1 (red)

- LR: 65 ± 4 (orange)

- CCS: 64 ± 6 (orange)

- MM: 62 ± 2 (orange)

13. **common_claim_true_false**:

- TTPD: 79 ± 0 (orange)

- LR: 74 ± 1 (orange)

- CCS: 74 ± 8 (orange)

- MM: 78 ± 1 (orange)

14. **counterfact_true_false**:

- TTPD: 74 ± 0 (orange)

- LR: 76 ± 2 (orange)

- CCS: 77 ± 10 (orange)

- MM: 68 ± 2 (orange)

### Key Observations

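- **Highest accuracies**:

- `element_symb_conj` is the best task for every method (88, with standard deviations from ±1 to ±10); the other conjunction tasks range from 68 to 87, so no task is near-perfect.

- TTPD and MM outperform LR and CCS on some disjunction tasks (e.g., `cities_disj`, `inventors_disj`).

- **Lowest accuracies**:

- Disjunction tasks such as `animal_class_disj` show poor performance (51–59%) across all methods.

- The largest standard deviations belong to LR on `cities_disj` (±12) and CCS on several tasks (up to ±10); `inventors_disj` is also variable for LR and CCS (±9, ±8), whereas `animal_class_disj` is stable (±1 to ±4).

- **Method trends**:

- On most conjunction tasks the methods are close, and LR is sometimes best (e.g., `cities_conj`: 86 ± 5); the largest advantages of TTPD and MM appear on disjunction tasks.

- LR struggles with some disjunction tasks (e.g., `cities_disj`: 72 ± 12, `inventors_disj`: 60 ± 9), though it scores highest on `facts_disj` (65 ± 4).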
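The claims about variability can be checked by listing the cells with the largest standard deviations. A minimal Python sketch, assuming the values above are transcribed correctly; `RESULTS` and `largest_sd_cells` are hypothetical names.

```python
METHODS = ("TTPD", "LR", "CCS", "MM")

# (accuracy %, standard deviation) per task, in the column order of METHODS.
RESULTS = {
    "cities_conj":             [(83, 1), (86, 5), (85, 9), (82, 1)],
    "cities_disj":             [(87, 2), (72, 12), (77, 9), (82, 3)],
    "sp_en_trans_conj":        [(87, 2), (84, 3), (82, 6), (84, 1)],
    "sp_en_trans_disj":        [(65, 3), (67, 6), (64, 7), (68, 2)],
    "inventors_conj":          [(70, 1), (71, 3), (72, 7), (71, 0)],
    "inventors_disj":          [(77, 2), (60, 9), (59, 8), (78, 2)],
    "animal_class_conj":       [(85, 1), (73, 5), (80, 8), (83, 1)],
    "animal_class_disj":       [(58, 1), (51, 1), (59, 4), (55, 1)],
    "element_symb_conj":       [(88, 2), (88, 4), (88, 10), (88, 1)],
    "element_symb_disj":       [(70, 1), (66, 5), (66, 8), (71, 0)],
    "facts_conj":              [(72, 2), (68, 3), (68, 5), (70, 1)],
    "facts_disj":              [(60, 1), (65, 4), (64, 6), (62, 2)],
    "common_claim_true_false": [(79, 0), (74, 1), (74, 8), (78, 1)],
    "counterfact_true_false":  [(74, 0), (76, 2), (77, 10), (68, 2)],
}


def largest_sd_cells(results, top=3):
    """Return the `top` (task, method, sd) triples with the largest standard deviation."""
    cells = [
        (task, method, sd)
        for task, row in results.items()
        for method, (_, sd) in zip(METHODS, row)
    ]
    # sorted() is stable, so tied cells keep the table's row and column order.
    return sorted(cells, key=lambda cell: cell[2], reverse=True)[:top]


if __name__ == "__main__":
    for task, method, sd in largest_sd_cells(RESULTS):
        print(f"{method} on {task}: ±{sd}")
```

For these values the top three are LR on `cities_disj` (±12) and CCS on `element_symb_conj` and `counterfact_true_false` (±10), whereas no method exceeds ±4 on `animal_class_disj`.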

### Interpretation

The data suggests that **conjunction tasks** are usually easier to classify than their disjunctive counterparts (e.g., `animal_class_conj` vs. `animal_class_disj`), possibly because conjunctions are structurally simpler; the exceptions are TTPD and MM on `cities` and `inventors`, where the disjunction variant scores as high or higher. **TTPD** and **MM** are robust across both task types, while **LR** and **CCS** fall behind on some disjunction tasks, most clearly `inventors_disj`. The large standard deviations of LR and CCS on tasks such as `inventors_disj` (60 ± 9) and `cities_disj` (72 ± 12) point to unstable estimates for these two methods rather than to a property of disjunctions alone, since TTPD and MM stay within ±3 on the same tasks. Because the best score anywhere in the grid is 88%, none of the methods is near-perfect, even on the easiest conjunction task.
