EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

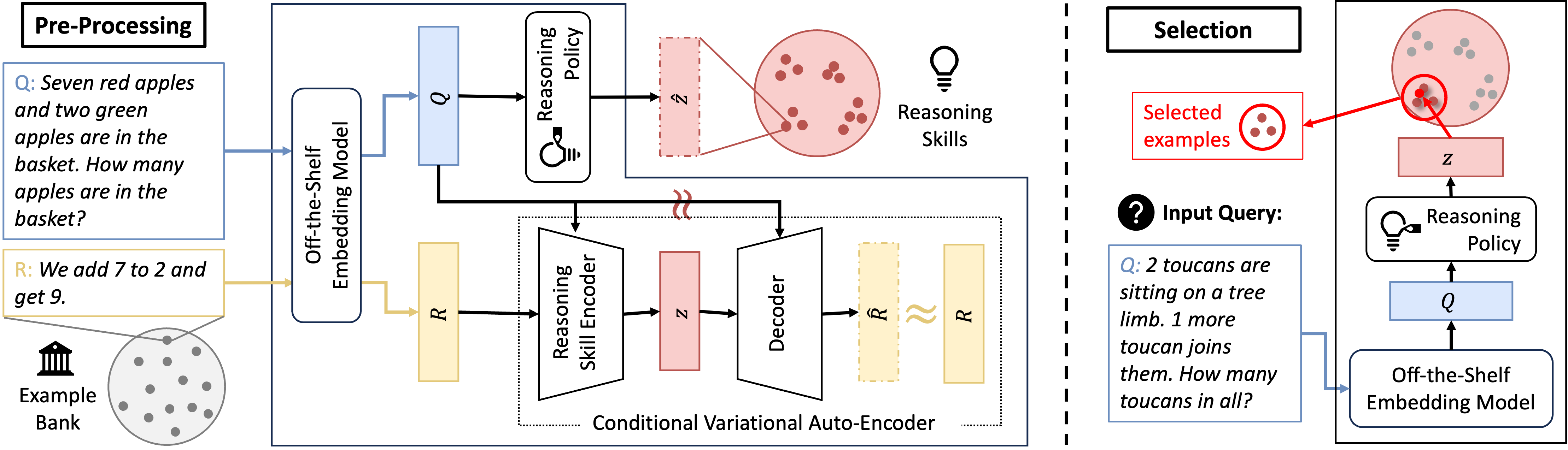

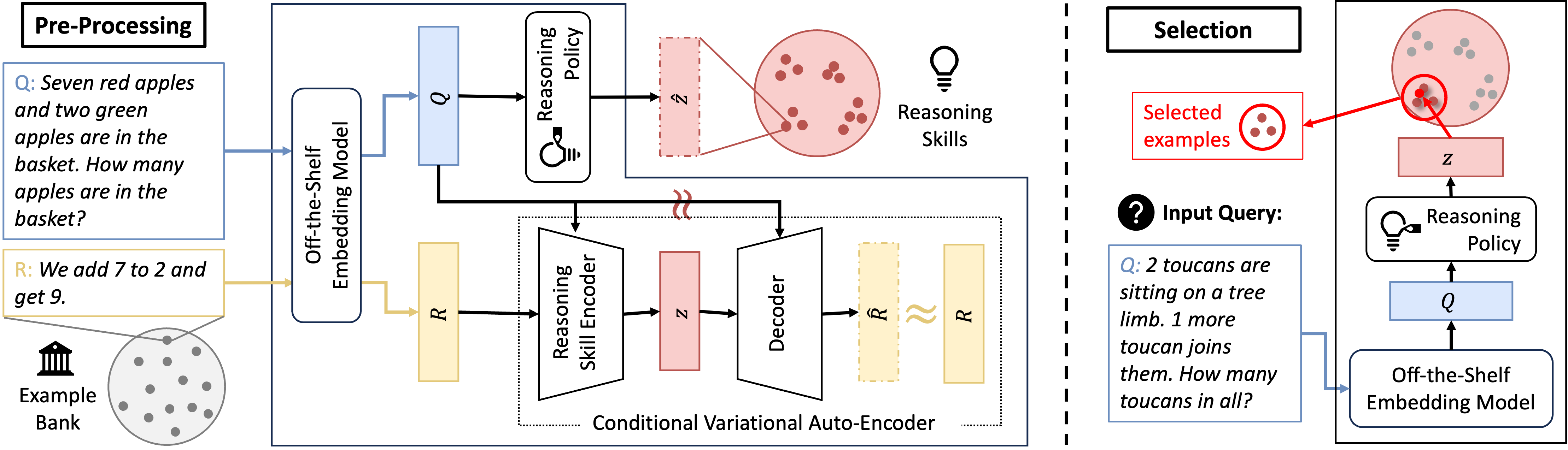

## Diagram: Reasoning Skill Encoding and Example Selection System

### Overview

The image is a technical system diagram illustrating a two-stage process for handling reasoning tasks. The left stage, "Pre-Processing," details how reasoning skills are encoded from a bank of examples. The right stage, "Selection," shows how a new input query is processed to retrieve relevant examples from a learned skill space. The overall system appears designed for few-shot learning or in-context learning, where a model selects pertinent examples to solve new problems.

### Components/Axes

The diagram is divided into two primary sections by a vertical dashed line.

**Left Section: Pre-Processing**

* **Header:** "Pre-Processing" (top-left, black text in a white box with a black border).

* **Input Example:**

    * A blue-bordered box contains a question (Q): "Seven red apples and two green apples are in the basket. How many apples are in the basket?"

    * A yellow-bordered box contains the corresponding reasoning/response (R): "We add 7 to 2 and get 9."

* **Example Bank:** An icon of a classical building (labeled "Example Bank") points to a circle containing multiple grey dots, representing a collection of stored examples.

* **Core Processing Pipeline:**

    1. **Off-the-Shelf Embedding Model:** A rounded rectangle. It receives the Question (Q) and Response (R) as inputs.

    2. **Outputs of Embedding Model:**

        * A blue rectangle labeled **Q** (embedding of the question).

        * A yellow rectangle labeled **R** (embedding of the response).

    3. **Reasoning Policy:** A rounded rectangle with a lightbulb icon. It takes the question embedding **Q** as input.

    4. **Latent Variable (z):** The Reasoning Policy outputs a pink rectangle labeled **z**. This represents a latent skill code.

    5. **Skill Space Visualization:** A large pink circle containing clusters of red dots. An arrow from **z** points to a specific cluster, indicating that **z** selects or represents a region in this skill space. The text "Reasoning Skills" is placed next to this circle.

    6. **Conditional Variational Auto-Encoder (CVAE):** A dotted-line box enclosing:

        * **Reasoning Skill Encoder:** A trapezoid taking the response embedding **R** as input and outputting a pink rectangle labeled **z** (the encoded skill).

        * **Decoder:** A trapezoid taking the latent skill **z** as input and outputting a yellow dashed rectangle labeled **R̂** (reconstructed response embedding).

        * A wavy yellow line connects **R̂** to the original **R**, indicating a reconstruction loss or similarity measure.
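
The data flow listed above can be traced with a toy forward pass. This is only a sketch under loud assumptions: random vectors stand in for the off-the-shelf embeddings, plain linear maps stand in for the learned networks, the variational sampling step is omitted, and every name and dimension (`W_policy`, `W_encoder`, `W_decoder`, `EMB_DIM`, `SKILL_DIM`) is hypothetical rather than taken from the diagram.

```python
import numpy as np

rng = np.random.default_rng(0)

EMB_DIM = 8    # hypothetical embedding size
SKILL_DIM = 2  # hypothetical size of the latent skill code z

# Stand-ins for the embeddings an off-the-shelf model would return
# for one (Q, R) pair from the example bank.
q_emb = rng.normal(size=EMB_DIM)
r_emb = rng.normal(size=EMB_DIM)

# Hypothetical linear maps in place of the learned networks.
W_policy = rng.normal(size=(SKILL_DIM, EMB_DIM)) * 0.1   # reasoning policy: Q -> z
W_encoder = rng.normal(size=(SKILL_DIM, EMB_DIM)) * 0.1  # skill encoder:    R -> z
W_decoder = rng.normal(size=(EMB_DIM, SKILL_DIM)) * 0.1  # decoder:          z -> R_hat

z_policy = W_policy @ q_emb    # skill code predicted from the question alone
z_encoded = W_encoder @ r_emb  # skill code read off the response
r_hat = W_decoder @ z_encoded  # reconstructed response embedding

# Quantities the two wavy links in the diagram plausibly correspond to.
reconstruction_loss = float(np.mean((r_hat - r_emb) ** 2))
consistency_gap = float(np.sum((z_policy - z_encoded) ** 2))

print(z_policy.shape, z_encoded.shape, r_hat.shape)
```

Only the shapes and the direction of each arrow carry over from the diagram; the squared-error terms are one plausible reading of "reconstruction loss or similarity measure", not a confirmed choice.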

**Right Section: Selection**

* **Header:** "Selection" (top-center, black text in a white box with a black border).

* **Input Query:**

    * A black circle with a white question mark icon is labeled "Input Query:".

    * Below it, a blue-bordered box contains a new question (Q): "2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"

* **Processing Pipeline for New Query:**

    1. **Off-the-Shelf Embedding Model:** A rounded rectangle receives the new query **Q**.

    2. **Question Embedding (Q):** A blue rectangle, output of the embedding model.

    3. **Reasoning Policy:** A rounded rectangle with a lightbulb icon takes **Q** as input.

    4. **Latent Skill Code (z):** The Reasoning Policy outputs a pink rectangle labeled **z**.

* **Example Selection:**

    * A large pink circle (same "Skill Space" as on the left) contains grey dots and one highlighted red cluster.

    * The latent code **z** points to this red cluster.

    * A red arrow originates from this cluster and points to a red-bordered box labeled "Selected examples," which contains three red dots. This indicates the retrieval of examples whose skill codes are near **z** in the latent space.
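
The retrieval step described above can be sketched as a nearest-neighbour lookup in the skill space. The sketch assumes Euclidean distance and a fixed `k`; the diagram specifies neither, and `select_examples`, `bank_codes`, and `z_query` are hypothetical names used only for illustration.

```python
import numpy as np

def select_examples(z_query, bank_codes, k=3):
    """Return indices of the k bank examples whose skill codes are closest to z_query."""
    distances = np.linalg.norm(bank_codes - z_query, axis=1)
    return np.argsort(distances, kind="stable")[:k]

# Toy skill codes: three examples in one cluster, two in a distant one.
bank_codes = np.array([
    [0.0, 0.0],
    [0.1, 0.0],
    [0.0, 0.2],
    [5.0, 5.0],
    [5.1, 4.9],
])

# Skill code the reasoning policy might predict for a new query.
z_query = np.array([0.0, 0.05])

selected = select_examples(z_query, bank_codes, k=3)
print(selected.tolist())
```

With these toy codes the three selected indices all come from the first cluster, mirroring the three red dots in the "Selected examples" box. Cosine similarity or a cluster-assignment step would be equally plausible choices.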

### Detailed Analysis

The diagram describes a method to learn and utilize "reasoning skills" for problem-solving.

1. **Pre-Processing (Skill Encoding):**

    * The system starts with a bank of solved examples (Q, R pairs).

    * For each example, an off-the-shelf model creates embeddings for the question (Q) and the reasoning response (R).

    * A **Reasoning Policy** network analyzes the question embedding (Q) to produce a latent variable **z**. This **z** is intended to capture the *type of reasoning skill* required (e.g., addition, comparison).

    * A **Conditional Variational Auto-Encoder (CVAE)** is trained to ensure the latent space is meaningful. The encoder maps the response embedding (R) to a skill code **z**, and the decoder tries to reconstruct the response (R̂) from that code. Because the policy reads Q while the encoder reads R, they are drawn as distinct components; the link between the policy's **z** and the encoder's **z** (shown by a wavy red line) suggests the two are trained to be consistent.

    * The result is a structured "Skill Space" (pink circle) where examples are clustered by the underlying reasoning skill they demonstrate.

2. **Selection (Skill-Based Retrieval):**

    * When a new, unseen query arrives, it is embedded and passed through the same **Reasoning Policy**.

    * The policy predicts the latent skill code **z** that this new query likely requires.

    * This **z** is used to query the pre-processed skill space. The system selects examples from the cluster nearest to **z**.

    * These "Selected examples" are then presumably provided as few-shot context to a final model to solve the new query.
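
The final step, passing the selected examples to a downstream model as few-shot context, might look like the sketch below. The diagram does not show a prompt format, so the `Q:`/`R:` layout and the helper `build_few_shot_prompt` are assumptions made for illustration; the example texts are the ones quoted in the diagram.

```python
def build_few_shot_prompt(selected_examples, query):
    """Format selected (question, rationale) pairs followed by the new query."""
    blocks = [f"Q: {q}\nR: {r}" for q, r in selected_examples]
    blocks.append(f"Q: {query}\nR:")  # left open for the model to complete
    return "\n\n".join(blocks)

selected_examples = [
    (
        "Seven red apples and two green apples are in the basket. "
        "How many apples are in the basket?",
        "We add 7 to 2 and get 9.",
    ),
]
query = (
    "2 toucans are sitting on a tree limb. 1 more toucan joins them. "
    "How many toucans in all?"
)

prompt = build_few_shot_prompt(selected_examples, query)
print(prompt)
```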

### Key Observations

* **Two-Stage Architecture:** The system clearly separates the offline encoding of existing knowledge (Pre-Processing) from the online application to new problems (Selection).

* **Latent Skill Space:** The core idea is a structured latent space (**z**) in which proximity is meant to reflect similarity in reasoning type, not just surface-level text similarity.

* **Role of the CVAE:** The CVAE acts as a regularizer, forcing the latent variable **z** to contain sufficient information to reconstruct the reasoning response (R), thereby ensuring it captures meaningful skill information.

* **Use of Off-the-Shelf Models:** The diagram specifies a pre-existing embedding model, which suggests the framework does not depend on a particular embedding model and can be built on top of existing ones.

* **Visual Consistency:** Colors are used consistently: blue for questions/queries (Q), yellow for responses/reasoning (R), pink for the latent skill space and codes (z), and red for selected items.
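
For reference, the generic conditional-VAE training bound, written with the diagram's symbols, is shown below. Mapping the prior to the Reasoning Policy $\pi$ is an assumption, as are the parameter names $\phi$ and $\theta$; the diagram itself draws the encoder with only **R** as input and the decoder with only **z**, so the conditioning on $Q$ inside $q_\phi$ and $p_\theta$ may not apply to this system.

$$
\log p(R \mid Q) \;\ge\; \mathbb{E}_{q_\phi(z \mid Q, R)}\big[\log p_\theta(R \mid z, Q)\big] \;-\; \mathrm{KL}\big(q_\phi(z \mid Q, R)\,\|\,\pi(z \mid Q)\big)
$$

Under this reading, the first term plays the role of the reconstruction link between **R̂** and **R**, and the KL term plays the role of the consistency link between the encoder's **z** and the policy's **z**.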

### Interpretation

This diagram outlines an approach to improving few-shot reasoning through skill-based example retrieval. The key insight is that not all examples are equally useful for a given problem; usefulness depends on the underlying reasoning skill.

* **What it demonstrates:** The system learns to abstract away from the specific content of a problem (apples vs. toucans) to identify the core reasoning operation (addition). By clustering examples by this abstract skill, it can retrieve the most pedagogically relevant examples for a new problem, which should lead to more efficient and accurate few-shot learning.

* **How elements relate:** The **Reasoning Policy** is the central component, acting as a bridge between the input question and the skill space. The **CVAE** provides the training framework to make the skill space meaningful. The **Example Bank** is the raw material, and the **Selection** module is the application.

* **Notable implications:** This method could reduce the prompt sensitivity of large language models by providing more consistently relevant examples. It also introduces a level of interpretability, as the latent space **z** could potentially be analyzed to understand what reasoning skills the model has learned. The separation of skill encoding from problem-solving allows the skill space to be built once and reused for many different queries.
