## Diagram: Technical Architecture for Reasoning-Based Question Answering System

### Overview

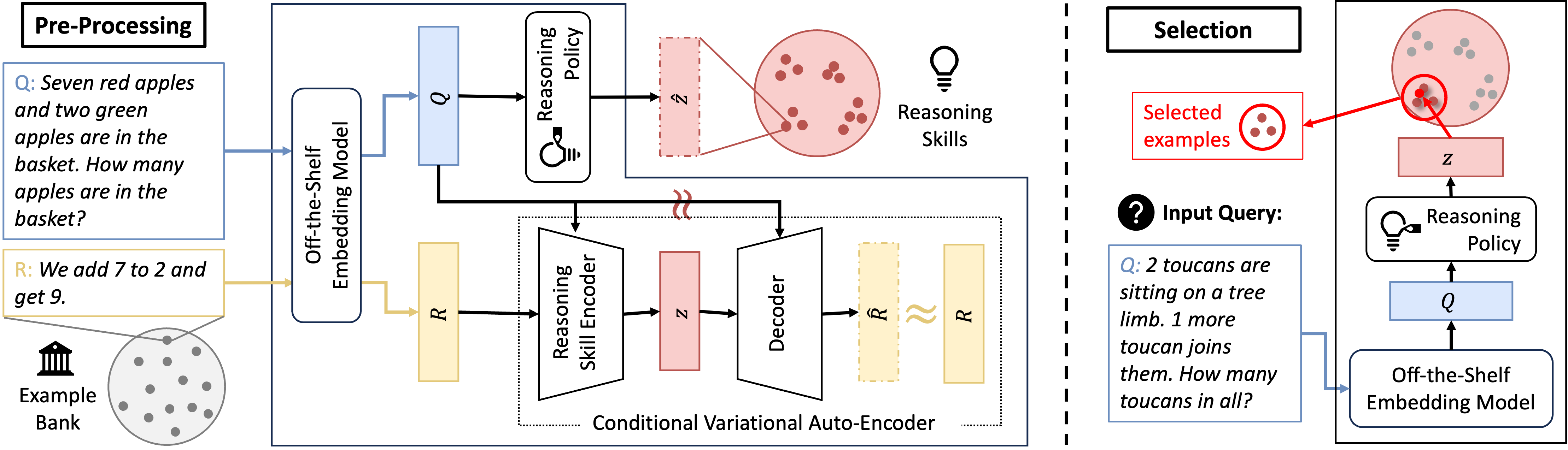

The diagram illustrates a two-stage architecture for answering reasoning-based questions: a pre-processing stage and an example-selection stage. It combines an off-the-shelf embedding model with custom reasoning components built around a conditional variational auto-encoder (CVAE), and it selects examples explicitly rather than implicitly. Natural language queries pass through successive stages of encoding, reasoning, and decoding.

### Components

1. **Pre-Processing Section (Left Side)**

- Input Query Box: Contains sample questions (e.g., "Seven red apples...")

- Off-the-Shelf Embedding Model: Standard NLP model for initial text representation

- Reasoning Policy: Decision-making component for reasoning strategy

- Example Bank: Repository of solved examples (shown with apple/toucan illustrations)

- Reasoning Encoder/Decoder: CVAE components for structured reasoning

2. **Selection Section (Right Side)**

- Input Query Box: Contains second sample question ("2 toucans...")

- Selected Examples Highlight: Visual indicator of retrieved examples

- Reasoning Skills Visualization: Circular diagram with colored dots representing reasoning steps

- Reasoning Policy: The same component that appears in the pre-processing section

- CVAE Components: Mirroring the left section's encoder/decoder structure

3. **Connecting Elements**

- Arrows showing data flow between components

- Mathematical notation (Q for queries, R for responses, Z for latent representations)

- Example Bank icon (classical building symbol)

- Reasoning Skills icon (lightbulb)

### Detailed Analysis

1. **Pre-Processing Flow**

- Input queries are first processed by an off-the-shelf embedding model

- The reasoning policy determines how to handle the query

- The system either generates a direct answer (R) or stores the answer in the example bank for later retrieval

- The CVAE components (Reasoning Encoder/Decoder) process the query through latent space (Z)

2. **Selection Mechanism**

- New queries trigger example retrieval from the bank

- Selected examples are highlighted in red

- The reasoning skills visualization shows step-by-step problem decomposition

- The same CVAE architecture processes both direct and example-based reasoning

3. **Mathematical Notation**

- Q: Represents input queries (e.g., "How many apples...")

- R: Denotes system responses (e.g., "We add 7 to 2...")

- Z: Latent space representation in the CVAE architecture
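
The selection mechanism described above can be sketched as a nearest-neighbor lookup over the example bank. This is a minimal illustration, not the system's actual method: the diagram does not say how similarity is computed, so cosine similarity is an assumption here, the bag-of-words `embed` function is a toy stand-in for the off-the-shelf embedding model, and the bank entries, `select_examples`, and `EXAMPLE_BANK` are hypothetical names and data invented for the example.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for the off-the-shelf embedding model (assumption):
    a bag-of-words count vector over whitespace-separated tokens."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def select_examples(query, bank, k=1):
    """Return the k bank entries whose questions are most similar to the query."""
    q_vec = embed(query)
    ranked = sorted(bank, key=lambda ex: cosine(q_vec, embed(ex[0])), reverse=True)
    return ranked[:k]

# Hypothetical (Q, R) pairs, invented for illustration only.
EXAMPLE_BANK = [
    ("A basket holds 3 pears and 4 more pears are added. How many pears are in the basket?",
     "Add 3 and 4 to get 7."),
    ("5 parrots sit on a branch and 2 fly away. How many parrots remain?",
     "Subtract 2 from 5 to get 3."),
]

new_query = "6 parrots sit on a fence and 1 flies away. How many parrots remain?"
selected = select_examples(new_query, EXAMPLE_BANK, k=1)
```

In a real system the toy `embed` would be replaced by a learned embedding, and the ranking might instead operate on the latent variable Z rather than on the raw query text.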

### Key Observations

1. The system uses both direct reasoning and example-based reasoning

2. The reasoning policy acts as a central decision-making component

3. The example bank serves as a knowledge repository for similar problems

4. The CVAE architecture enables structured reasoning through latent space manipulation

5. Visual elements (colors, icons) help distinguish different components
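
For reference, the standard CVAE training objective can be written in the diagram's notation. The mapping of components to distributions is an assumption, not something the diagram states: the reasoning encoder is read as the approximate posterior $q_\phi(Z \mid Q, R)$, the reasoning decoder as the likelihood $p_\theta(R \mid Q, Z)$, and the reasoning policy as the query-conditioned prior $p_\psi(Z \mid Q)$.

$$
\log p(R \mid Q) \;\ge\; \mathbb{E}_{q_\phi(Z \mid Q, R)}\big[\log p_\theta(R \mid Q, Z)\big] \;-\; D_{\mathrm{KL}}\big(q_\phi(Z \mid Q, R) \,\|\, p_\psi(Z \mid Q)\big)
$$

Under this reading, the first term rewards latents from which the response can be reconstructed, and the KL term pulls the policy's prediction from the query alone toward the encoder's posterior.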

### Interpretation

This architecture demonstrates a hybrid approach to question answering that combines:

1. **Pre-trained Language Models**: For initial text understanding

2. **Custom Reasoning Components**: For problem-solving logic

3. **Example-Based Learning**: Through the example bank mechanism

4. **Probabilistic Reasoning**: Via the conditional variational auto-encoder

The system appears designed to handle arithmetic and simple logical reasoning tasks by:

- First determining the appropriate reasoning strategy

- Either generating a direct answer or retrieving similar examples

- Processing through a structured reasoning pipeline

- Maintaining consistency between direct and example-based reasoning paths

The use of a CVAE suggests the system can represent uncertainty over reasoning steps while keeping the problem-solving trajectory coherent. The explicit example-selection mechanism indicates an emphasis on reusing past solutions for new problems.
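
To make the latent-variable view concrete, the sketch below shows the two operations a Gaussian CVAE typically relies on: a reparameterized sample of Z and the KL divergence between two diagonal Gaussians. The diagram does not specify the form of the latent distribution, so the diagonal Gaussian parameterization is an assumption, and `sample_latent` and `kl_diag_gaussians` are hypothetical helper names.

```python
import math
import random

def sample_latent(mu, logvar, rng):
    """Reparameterized draw z = mu + sigma * eps, with eps ~ N(0, 1) per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]

def kl_diag_gaussians(mu_q, logvar_q, mu_p, logvar_p):
    """KL(q || p) for diagonal Gaussians given means and log-variances."""
    total = 0.0
    for mq, lq, mp, lp in zip(mu_q, logvar_q, mu_p, logvar_p):
        # Per-dimension closed form:
        # 0.5 * (log(var_p / var_q) + (var_q + (mu_q - mu_p)^2) / var_p - 1)
        total += 0.5 * (lp - lq + (math.exp(lq) + (mq - mp) ** 2) / math.exp(lp) - 1.0)
    return total

rng = random.Random(0)            # seeded for repeatability
z = sample_latent([0.0, 1.0], [0.0, -1.0], rng)
gap = kl_diag_gaussians([1.0], [0.0], [0.0], [0.0])
```

The KL term is zero when the two distributions coincide and grows as their means or variances diverge, which is what lets a prior conditioned only on the query be trained toward a posterior that also sees the response.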