TECHNICAL ASSET FINGERPRINT

8f2eb67deacfab06cce50537


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Training Stages for Knowledge Graph Empowerment, Enhancement, and Generalization

### Overview

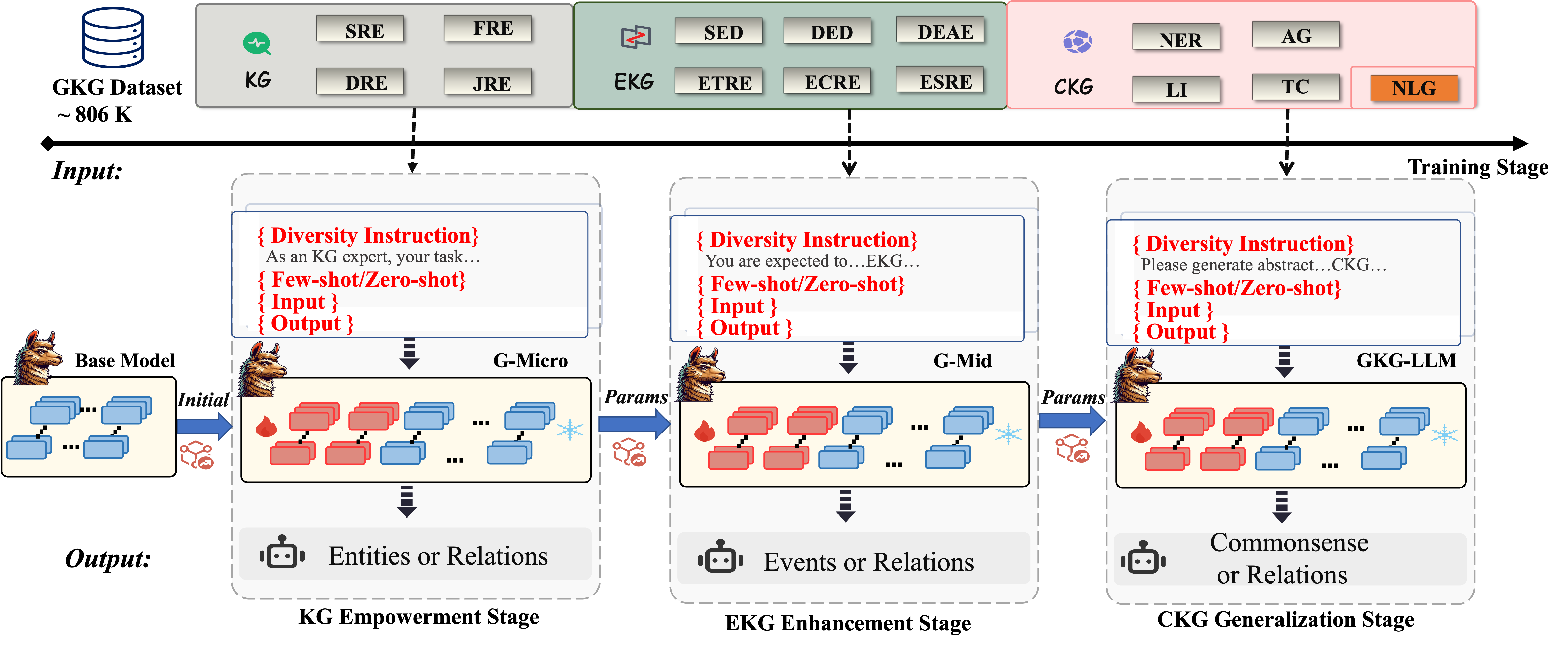

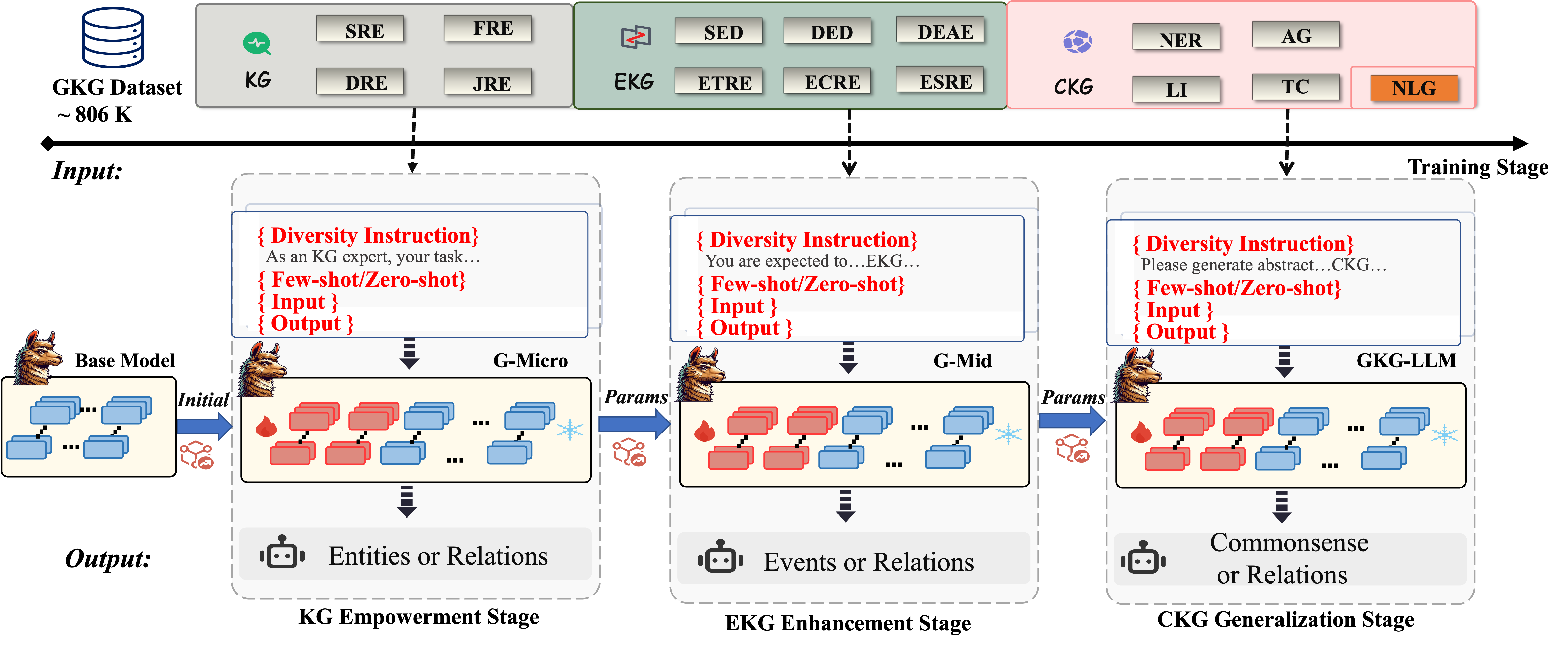

The image is a diagram illustrating a multi-stage training process covering Knowledge Graphs (KG), Event Knowledge Graphs (EKG), and Commonsense Knowledge Graphs (CKG). Starting from a base model, it progresses through three stages: KG Empowerment, EKG Enhancement, and CKG Generalization. Each stage uses a prompt template with four slots: a diversity instruction, few-shot or zero-shot examples, an input, and an output.

### Components/Axes

* **Header:** Contains labels for the knowledge graph types and their tasks. The expansions in parentheses are inferred readings of the acronyms rather than text taken from the figure.
    * **KG (Knowledge Graph):** Includes SRE (Semantic Relation Extraction), FRE, DRE (Domain Relation Extraction), JRE.
    * **EKG (Event Knowledge Graph):** Includes SED (Semantic Event Detection), ETRE (Event Temporal Relation Extraction), ECRE (Event Cause Relation Extraction), ESRE (Event Spatial Relation Extraction), DED (Domain Event Detection), DEAE (Domain Event Argument Extraction).
    * **CKG (Commonsense Knowledge Graph):** Includes NER (Named Entity Recognition), LI (Linguistic Inference), AG (Argument Generation), TC (Textual Completion), NLG (Natural Language Generation).
* **Main Body:** Illustrates the three training stages.
    * **Input:** Indicates the GKG Dataset of approximately 806K.
    * **Training Stage:** A horizontal arrow indicating the progression of the training process.
    * **KG Empowerment Stage:** Involves a "Base Model" and a "G-Micro" model. The output is "Entities or Relations".
    * **EKG Enhancement Stage:** Involves a "G-Mid" model. The output is "Events or Relations".
    * **CKG Generalization Stage:** Involves a "GKG-LLM" model. The output is "Commonsense or Relations".
* **Footer:** Labels the output of each stage.
    * **Output:** Indicates the type of output generated at each stage.

### Detailed Analysis

1. **Data Source:** The training process uses a "GKG Dataset" of approximately 806K.

2. **Training Stages:**
    * **KG Empowerment Stage:**
        * Starts with a "Base Model" represented by blue blocks.
        * Transitions to a "G-Micro" model, where the blocks are a mix of red and blue, indicating a transformation or enhancement.
        * The process is guided by "{ Diversity Instruction} As an KG expert, your task... {Few-shot/Zero-shot} { Input } { Output }".
        * The output is "Entities or Relations".
    * **EKG Enhancement Stage:**
        * The "G-Micro" model transitions to a "G-Mid" model, again with a mix of red and blue blocks.
        * The process is guided by "{ Diversity Instruction} You are expected to...EKG... { Few-shot/Zero-shot} { Input } { Output }".
        * The output is "Events or Relations".
    * **CKG Generalization Stage:**
        * The "G-Mid" model transitions to a "GKG-LLM" model, with a mix of red and blue blocks.
        * The process is guided by "{ Diversity Instruction} Please generate abstract...CKG... { Few-shot/Zero-shot} { Input } { Output }".
        * The output is "Commonsense or Relations".

3. **Model Progression:** The models progress from "Base Model" to "G-Micro", "G-Mid", and finally "GKG-LLM". The transition between models is indicated by arrows labeled "Initial" and "Params".

4. **Visual Representation:** The colored blocks sit inside the model icons, so they more plausibly mark model layers than data. In figures of this kind, a snowflake icon conventionally denotes frozen parameters and a flame icon denotes parameters being trained; the blue and red blocks likely carry the same distinction. This reading is an inference from convention, not something the diagram states.
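The four-slot prompt layout quoted above (diversity instruction, few-shot or zero-shot examples, input, output) can be sketched as a small template builder. This is a minimal illustration only: the function name `build_prompt`, the `Input:`/`Output:` field labels, and the blank-line separator are assumptions, and the instruction strings are kept exactly as truncated in the diagram.

```python
from typing import List, Optional, Tuple

# Instruction openers as quoted in the diagram. The "..." is the diagram's own
# truncation and is left as-is; the full wording is not known here.
STAGE_INSTRUCTIONS = {
    "KG": "As an KG expert, your task...",
    "EKG": "You are expected to...EKG...",
    "CKG": "Please generate abstract...CKG...",
}


def build_prompt(
    stage: str,
    text: str,
    examples: Optional[List[Tuple[str, str]]] = None,
) -> str:
    """Assemble one prompt (hypothetical layout, not the authors' format).

    An empty `examples` list gives the zero-shot form; a non-empty list
    gives the few-shot form.
    """
    parts = [STAGE_INSTRUCTIONS[stage]]
    for example_input, example_output in examples or []:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    # The final Output slot is left open for the model to complete.
    parts.append(f"Input: {text}\nOutput:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_prompt("EKG", "The storm closed the airport.",
                       examples=[("He fell and broke his arm.", "fell CAUSE broke")]))
```

During training, the target text would presumably follow the open `Output:` slot; how the authors actually serialize it is not shown in the figure.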

### Key Observations

* The diagram illustrates a pipeline for training models to handle different types of knowledge graphs.

* Each stage focuses on a specific type of knowledge graph: KG, EKG, and CKG.

* The models are progressively enhanced through the stages, as indicated by the transition from "Base Model" to "G-Micro", "G-Mid", and "GKG-LLM".

* The use of "Diversity Instruction" and "Few-shot/Zero-shot" learning suggests a focus on improving the model's ability to generalize and adapt to new data.

### Interpretation

The diagram presents a structured approach to training models for knowledge graph tasks. The progression from KG to EKG to CKG suggests an increasing level of complexity and abstraction. The use of diversity instruction and few-shot/zero-shot learning indicates a focus on building models that can handle a wide range of tasks with limited data. The visual representation of the models and data transformations provides a high-level overview of the training process. The diagram highlights the importance of each stage in building a comprehensive knowledge graph system.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Knowledge Graph Enhanced Large Language Model Training Pipeline

### Overview

This diagram illustrates a three-stage pipeline for training a large language model (LLM) on knowledge graph (KG) tasks. The pipeline starts from the GKG dataset and a base model, produces the intermediate models G-Micro and G-Mid, and culminates in the GKG-LLM model. Each stage takes input data, processes it through a model, and generates an output. The diagram emphasizes the use of "Diversity Instruction" at each stage.

### Components/Axes

The diagram is structured into three main columns representing the three training stages: KG Empowerment, EKG Enhancement, and CKG Generalization. Each stage has an "Input" section, a processing block (G-Micro, G-Mid, GKG-LLM), and an "Output" section. A "Base Model" is shown at the bottom, feeding into the first stage. A "GKG Dataset ~806K" cylinder is at the top-left, representing the initial data source.

**Input Stage Labels (Top Row):**

* **KG:** SRE, FRE, DRE, JRE

* **EKG:** SED, DED, DEAE, ETRE, ECRE, ESRE

* **CKG:** NER, AG, LI, TC, NLG

**Stage Titles:**

* KG Empowerment Stage

* EKG Enhancement Stage

* CKG Generalization Stage

**Diversity Instruction Boxes:**

* "As an KG expert, your task..." (KG Empowerment)

* "You are expected to...EKG..." (EKG Enhancement)

* "Please generate abstract...CKG..." (CKG Generalization)

**Model Blocks:**

* G-Micro

* G-Mid

* GKG-LLM

**Output Icons:**

* Entities or Relations (KG Empowerment)

* Events or Relations (EKG Enhancement)

* Commonsense or Relations (CKG Generalization)

### Detailed Analysis or Content Details

The diagram shows a flow of information from the GKG Dataset (~806K) to the Base Model, then through the three stages.

**Stage 1: KG Empowerment**

* **Input:** The KG input consists of four categories: SRE, FRE, DRE, and JRE.

* **Processing:** The input is fed into the G-Micro model. The model is shown as a series of interconnected boxes, with arrows indicating data flow. "Params" are passed from the G-Micro model to the next stage.

* **Output:** The output is represented by an icon of entities or relations.

**Stage 2: EKG Enhancement**

* **Input:** The EKG input consists of six categories: SED, DED, DEAE, ETRE, ECRE, and ESRE.

* **Processing:** The input is fed into the G-Mid model, which is structured like G-Micro. "Params" are passed from the G-Mid model to the next stage.

* **Output:** The output is represented by an icon of events or relations.

**Stage 3: CKG Generalization**

* **Input:** The CKG input consists of five categories: NER, AG, LI, TC, and NLG.

* **Processing:** The input is fed into the GKG-LLM model, which is structured like G-Micro and G-Mid.

* **Output:** The output is represented by an icon of commonsense or relations.

The "Training Stage" label is positioned at the top-right, indicating the overall context of the diagram. The "Input" label is positioned at the top-left, and the "Output" label is positioned at the bottom-center. The "Diversity Instruction" boxes are placed above each model block, indicating their role in guiding the training process.

### Key Observations

The diagram highlights a sequential training process, where each stage builds upon the previous one. The use of "Diversity Instruction" suggests a focus on generating varied and robust outputs. The number of input categories differs by stage (4 in KG, 6 in EKG, 5 in CKG) but does not grow monotonically, so the counts alone say little about how data needs change as the model progresses. The outputs shift from basic entities/relations to more complex events/relations and finally to commonsense/relations, suggesting a progression in the model's understanding capabilities.
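The stage-to-task grouping and the category counts mentioned above can be written down as a small lookup table. This is an illustrative sketch: the names `STAGE_TASKS` and `stage_of` are hypothetical, and the last EKG acronym is spelled ESRE, following the other transcriptions of the same figure on this page.

```python
# Task acronyms per stage, in the order the stages are trained.
STAGE_TASKS = {
    "KG": ["SRE", "FRE", "DRE", "JRE"],
    "EKG": ["SED", "DED", "DEAE", "ETRE", "ECRE", "ESRE"],
    "CKG": ["NER", "AG", "LI", "TC", "NLG"],
}


def stage_of(task: str) -> str:
    """Return the stage whose task list contains `task`."""
    for stage, tasks in STAGE_TASKS.items():
        if task in tasks:
            return stage
    raise KeyError(f"unknown task: {task}")


# Number of task categories per stage.
task_counts = {stage: len(tasks) for stage, tasks in STAGE_TASKS.items()}

if __name__ == "__main__":
    print(task_counts)
    print(stage_of("ETRE"))
```

A table like this makes it easy to route a training sample to its stage by task label, which is one plausible way to split the roughly 806K-sample dataset into three stage-specific subsets.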

### Interpretation

This diagram depicts a pipeline for enhancing a large language model with knowledge graphs. The three stages – KG Empowerment, EKG Enhancement, and CKG Generalization – represent a phased approach to integrating knowledge into the model. The initial stage focuses on establishing a foundation of entities and relations, the second stage refines this with event-based knowledge, and the final stage aims to instill commonsense reasoning. The "Diversity Instruction" component suggests a deliberate effort to avoid biases and promote generalization. The diagram implies that the model starts with a "Base Model" and iteratively improves its performance through the three stages, leveraging the knowledge graphs and the specified training instructions. The use of "Params" passing between stages suggests a form of transfer learning or fine-tuning. The diagram is a high-level overview and doesn't provide specific details about the model architectures or training algorithms used.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Multi-Stage Knowledge Graph-Enhanced Language Model Training Pipeline

### Overview

This image is a technical flowchart illustrating a three-stage training pipeline for enhancing a Large Language Model (LLM) with knowledge from various knowledge graphs (KGs). The process begins with a base model and a large dataset, progressing through specialized stages to produce a final model capable of handling diverse knowledge tasks. The diagram details the input data sources, the specific tasks involved at each stage, the model evolution, and the expected outputs.

### Components/Axes

The diagram is organized into three horizontal layers and three vertical stages.

**Top Layer (Input Data & Tasks):**

* **Leftmost Element:** An icon of a database labeled **"GKG Dataset"** with a size annotation **"~ 806 K"**.
* **Three Colored Task Boxes:**
    1. **Grey Box (KG):** Contains tasks: **SRE, FRE, DRE, JRE**.
    2. **Green Box (EKG):** Contains tasks: **SED, DED, DEAE, ETRE, ECRE, ESRE**.
    3. **Pink Box (CKG):** Contains tasks: **NER, AG, LI, TC, NLG**. The **NLG** task is highlighted with an orange background.
* A thick black arrow labeled **"Input:"** on the left and **"Training Stage"** on the right runs beneath these boxes, indicating the flow of data into the training process.

**Middle Layer (Model Training Stages):**

This layer shows the sequential training stages, each with a consistent internal structure.

* **Stage 1: KG Empowerment Stage**
    * **Input Model:** **"Base Model"** (represented by a llama icon and a neural network diagram).
    * **Process:** Receives a **"{ Diversity Instruction}"** template: *"As an KG expert, your task..."*, along with **"{ Few-shot/Zero-shot}"**, **"{ Input }"**, and **"{ Output }"** placeholders.
    * **Model:** **"G-Micro"** (llama icon with a neural network showing some red "active" and blue "frozen" layers).
    * **Output:** A robot icon with the text **"Entities or Relations"**.
* **Stage 2: EKG Enhancement Stage**
    * **Input Model:** The **"G-Micro"** model from the previous stage, with parameters (**"Params"**) transferred via a blue arrow.
    * **Process:** Receives a **"{ Diversity Instruction}"** template: *"You are expected to...EKG..."*, with the same placeholder structure.
    * **Model:** **"G-Mid"** (llama icon with a neural network).
    * **Output:** A robot icon with the text **"Events or Relations"**.
* **Stage 3: CKG Generalization Stage**
    * **Input Model:** The **"G-Mid"** model from the previous stage, with parameters (**"Params"**) transferred.
    * **Process:** Receives a **"{ Diversity Instruction}"** template: *"Please generate abstract...CKG..."*, with the same placeholder structure.
    * **Model:** **"GKG-LLM"** (final llama icon with a neural network).
    * **Output:** A robot icon with the text **"Commonsense or Relations"**.

**Bottom Layer (Stage Labels):**

* Labels corresponding to the three stages above: **"KG Empowerment Stage"**, **"EKG Enhancement Stage"**, and **"CKG Generalization Stage"**.

### Detailed Analysis

* **Data Flow:** The pipeline is strictly sequential. The Base Model is initialized and trained in the first stage to become G-Micro. G-Micro's parameters are then used to initialize the second stage, producing G-Mid. Finally, G-Mid's parameters initialize the third stage, resulting in the final GKG-LLM.

* **Task Progression:** The tasks evolve in complexity and abstraction. The acronym expansions given here are inferred; the figure shows only the acronyms.
    * **KG Stage:** Focuses on fundamental knowledge graph tasks (e.g., SRE - Subject Relation Extraction, FRE - Fact Relation Extraction).
    * **EKG Stage:** Focuses on event-centric knowledge graph tasks (e.g., SED - Event Detection, ETRE - Event Temporal Relation Extraction).
    * **CKG Stage:** Focuses on commonsense knowledge graph tasks and generation (e.g., NER - Named Entity Recognition, NLG - Natural Language Generation).

* **Training Methodology:** Each stage uses a structured prompt template featuring a **"Diversity Instruction"** tailored to the stage's focus (KG, EKG, CKG), combined with few-shot or zero-shot learning paradigms.

* **Visual Metaphors:**
    * The **llama icon** represents the core LLM being trained.
    * The **neural network diagrams** within each model box use **red blocks**, which likely symbolize active/trainable parameters, and **blue blocks**, which likely symbolize frozen parameters.
    * The **robot icon** represents the model's output capability for that stage.
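The sequential data flow described above, in which each stage starts from the previous stage's parameters, can be sketched as a simple loop over stages. This is a toy illustration under stated assumptions: `train_stage` is a hypothetical placeholder that only records which stage was applied, and a plain dictionary stands in for real model weights.

```python
import copy
from typing import Dict, List

# (training stage, name of the model it produces), in training order.
STAGES = [("KG", "G-Micro"), ("EKG", "G-Mid"), ("CKG", "GKG-LLM")]


def train_stage(params: Dict[str, List[str]], stage: str) -> Dict[str, List[str]]:
    """Placeholder for one round of fine-tuning (hypothetical).

    Returns an updated copy so earlier checkpoints are left untouched.
    """
    updated = copy.deepcopy(params)
    updated["history"].append(stage)
    return updated


def run_pipeline(base_params: Dict[str, List[str]]) -> Dict[str, Dict[str, List[str]]]:
    """Chain the stages: each one is initialized from the previous result."""
    checkpoints = {}
    params = base_params
    for stage, model_name in STAGES:
        params = train_stage(params, stage)
        checkpoints[model_name] = params
    return checkpoints


if __name__ == "__main__":
    for name, ckpt in run_pipeline({"history": []}).items():
        print(name, ckpt["history"])
```

Keeping a separate checkpoint per stage mirrors the three named models in the figure and lets each intermediate model be evaluated on its own.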

### Key Observations

1. **Staged Specialization:** The training is not monolithic. It deliberately breaks down the complex goal of "knowledge-enhanced LLM" into three manageable, specialized phases.

2. **Parameter Reuse:** The use of parameter transfer ("Params" arrows) between stages suggests a continual learning or fine-tuning approach, building upon previously learned knowledge rather than training from scratch each time.

3. **Task Diversity:** The extensive list of acronyms (SRE, FRE, SED, NER, etc.) indicates the model is being trained on a wide array of specific sub-tasks within the broader KG, EKG, and CKG domains.

4. **Output Evolution:** The model's designated output becomes progressively more abstract: from concrete "Entities or Relations," to "Events or Relations," and finally to "Commonsense or Relations."
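If the red and blue blocks do mark trainable and frozen layers, one stage of fine-tuning would update only the trainable subset. The sketch below shows that idea with a plain gradient step on scalar "weights"; the layer names, the choice of which layers are trainable, and the learning rate are all hypothetical and are not taken from the figure.

```python
from typing import Dict, Set


def apply_update(
    weights: Dict[str, float],
    grads: Dict[str, float],
    trainable: Set[str],
    lr: float = 0.1,
) -> Dict[str, float]:
    """Take one gradient step on trainable layers; frozen layers pass through."""
    return {
        name: (value - lr * grads[name]) if name in trainable else value
        for name, value in weights.items()
    }


if __name__ == "__main__":
    weights = {"layer0": 1.0, "layer1": 1.0, "layer2": 1.0}
    grads = {"layer0": 1.0, "layer1": 1.0, "layer2": 1.0}
    # Hypothetical split: only the last two layers are trained in this stage.
    print(apply_update(weights, grads, trainable={"layer1", "layer2"}))
```

In a real framework the same effect is usually achieved by disabling gradients on the frozen parameters rather than filtering updates by hand.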

### Interpretation

This diagram outlines a sophisticated methodology for creating a specialized LLM. The core insight is that general knowledge is not monolithic; it can be decomposed into structured knowledge (KG), dynamic event knowledge (EKG), and implicit commonsense knowledge (CKG). By training a model sequentially on these domains—starting with the most structured and moving to the most abstract—the pipeline aims to build a robust and versatile knowledge-aware system (**GKG-LLM**).

The "Diversity Instruction" in each stage is critical. It likely prevents the model from overfitting to a narrow task format, encouraging it to learn the underlying knowledge structure rather than just pattern matching. The few-shot/zero-shot slot in the prompts also suggests the final model is intended to generalize well to new, unseen tasks with few or no examples.

The entire process is data-hungry, as indicated by the large **GKG Dataset (~806 K)**. The final model, **GKG-LLM**, is positioned as the culmination of this process, capable of handling not just extraction and classification (like NER) but also generative tasks (NLG) grounded in commonsense knowledge. This suggests the goal is to create an LLM that doesn't just retrieve facts but can reason and generate text with a deeper understanding of how entities, events, and everyday concepts interrelate.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Multi-Stage Knowledge Graph Processing Pipeline

### Overview

The diagram illustrates a three-stage pipeline for processing knowledge graphs (KG), with progressive enhancement through entity/relation extraction, event/relation modeling, and commonsense generalization. The pipeline uses a base model with iterative parameter updates across stages.

### Components/Axes

1. **Legend** (Top-center):
    - Blue: Entities/Relations
    - Red: Events/Relations
    - Gray: Commonsense/Relations
    - Fire icon: by the usual convention, parameters that are trained in a stage
    - Snowflake icon: by the usual convention, parameters that stay frozen

2. **Stages** (Left to Right):
    - **KG Empowerment Stage** (Blue background):
        - Input: Diversity instructions + few-shot examples
        - Base Model: Initial blue rectangles (entities)
        - Output: Entities or Relations (blue rectangles)
    - **EKG Enhancement Stage** (Green background):
        - Input: Modified diversity instructions
        - G-Micro/G-Mid: Red/blue rectangles with fire icons
        - Output: Events or Relations (red/blue rectangles)
    - **CKG Generalization Stage** (Pink background):
        - Input: Abstract diversity instructions
        - GKG-LLM: Red/blue rectangles with fire icons
        - Output: Commonsense or Relations (gray rectangles)

3. **Flow Arrows**:
    - Blue arrows: Parameter transfer between stages
    - Dashed black arrows: Input/output connections
    - Solid black arrows: Stage progression

### Detailed Analysis

1. **KG Empowerment Stage**:
    - Base model processes diversity instructions with few-shot examples
    - Initial output: Entities (blue) and Relations (red)
    - Parameters are passed on to initialize the next stage
2. **EKG Enhancement Stage**:
    - G-Micro/G-Mid models process enhanced instructions
    - Output: Events (red) and Relations (blue)
    - Parameters refined through iterative processing
3. **CKG Generalization Stage**:
    - GKG-LLM model handles abstract instructions
    - Final output: Commonsense relations (gray) and Relations (blue)
    - Parameters optimized for generalization

### Key Observations

1. Color-coded progression: Blue → Red → Gray outputs indicate increasing abstraction

2. Parameter flow: Fire icons show continuous optimization across stages

3. Input complexity: Instructions evolve from concrete examples to abstract prompts

4. Output diversity: Each stage produces multiple relation types with distinct colors

### Interpretation

This pipeline describes a staged fine-tuning process in which:

1. Raw entity extraction (KG Empowerment) provides foundational data
2. Event modeling (EKG Enhancement) adds temporal dynamics
3. Commonsense relations (CKG Generalization) enable reasoning beyond explicit facts

The parameter transfer between stages suggests that each model is initialized from its predecessor's weights and then fine-tuned further, rather than distilled from a separate teacher model, while the color-coded outputs show increasing semantic complexity. The use of few-shot/zero-shot methods indicates the system's ability to adapt to new domains with minimal examples. The final stage's commonsense relations imply the system can infer implicit connections not present in the original dataset.
