## Diagram: Multi-Stage Knowledge Graph Processing Pipeline

### Overview

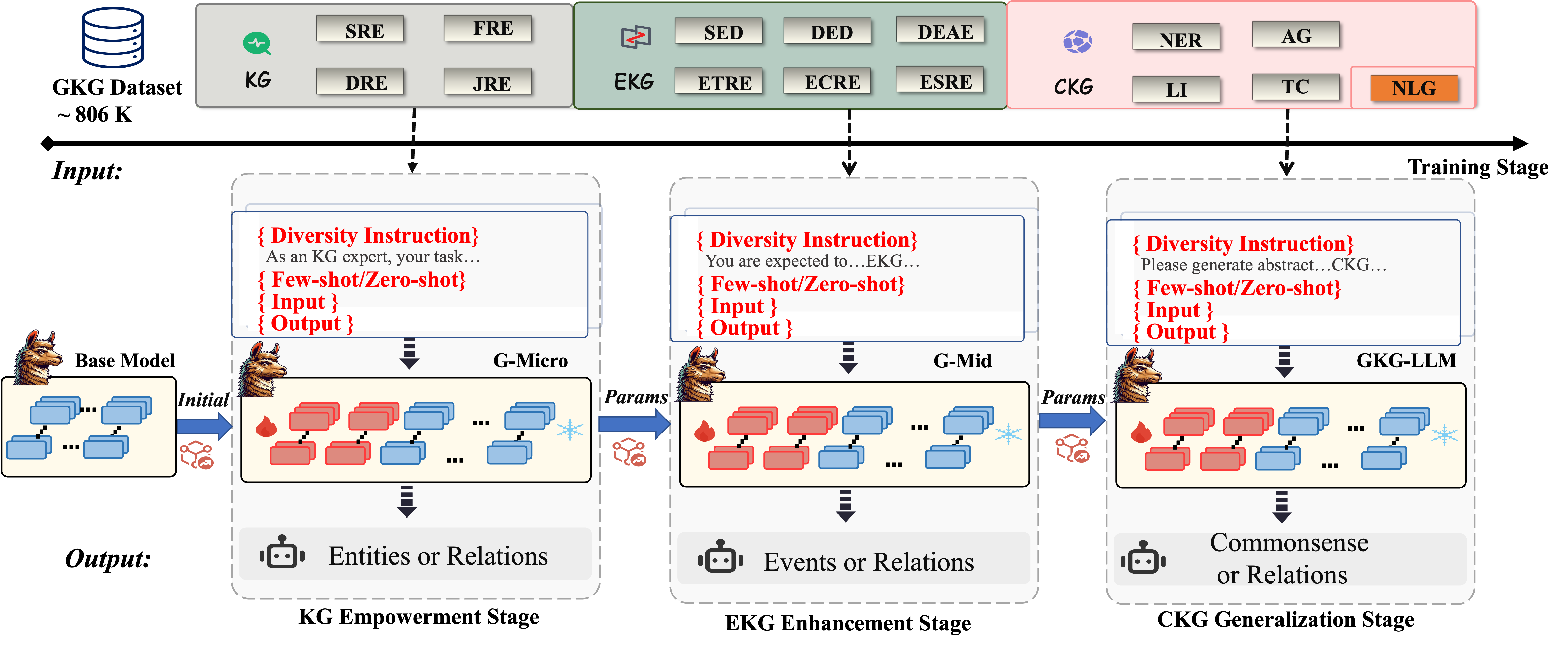

The diagram illustrates a three-stage pipeline for processing knowledge graphs (KG), with progressive enhancement through entity/relation extraction, event/relation modeling, and commonsense generalization. The pipeline uses a base model with iterative parameter updates across stages.

### Components/Axes

1. **Legend** (Top-center):

- Blue: Entities/Relations

- Red: Events/Relations

- Gray: Commonsense/Relations

- Fire icon: Parameters

- Snowflake icon: Outputs

2. **Stages** (Left to Right):

   - **KG Empowerment Stage** (Blue background):

     - Input: Diversity instructions + few-shot examples

     - Base Model: Initial blue rectangles (entities)

     - Output: Entities or Relations (blue rectangles)

   - **EKG Enhancement Stage** (Green background):

     - Input: Modified diversity instructions

     - G-Micro/G-Mid: Red/blue rectangles with fire icons

     - Output: Events or Relations (red/blue rectangles)

   - **CKG Generalization Stage** (Pink background):

     - Input: Abstract diversity instructions

     - G-KG-LLM: Red/blue rectangles with fire icons

     - Output: Commonsense or Relations (gray rectangles)

3. **Flow Arrows**:

- Blue arrows: Parameter transfer between stages

- Dashed black arrows: Input/output connections

- Solid black arrows: Stage progression
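As a reading aid, the components listed above can be written down as a small data structure. This is only a sketch of the diagram's labels: the `Stage` class, its field names, and the `describe` helper are hypothetical and do not come from the figure or from any accompanying code.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Stage:
    """One column of the diagram (hypothetical structure, for illustration only)."""
    name: str
    background: str
    instruction_input: str
    model_label: str
    output_kinds: Tuple[str, ...]


# Legend colours exactly as listed in the description.
LEGEND: Dict[str, str] = {
    "blue": "Entities/Relations",
    "red": "Events/Relations",
    "gray": "Commonsense/Relations",
}

# Stages in left-to-right order, using the labels from the description.
STAGES: Tuple[Stage, ...] = (
    Stage("KG Empowerment", "blue",
          "Diversity instructions + few-shot examples",
          "Base Model", ("entities", "relations")),
    Stage("EKG Enhancement", "green",
          "Modified diversity instructions",
          "G-Micro/G-Mid", ("events", "relations")),
    Stage("CKG Generalization", "pink",
          "Abstract diversity instructions",
          "G-KG-LLM", ("commonsense", "relations")),
)


def describe(stages: Tuple[Stage, ...]) -> str:
    """Return the stage names joined in processing order."""
    return " -> ".join(stage.name for stage in stages)


print(describe(STAGES))
```

Keeping the stages in an ordered tuple mirrors the left-to-right reading of the figure and makes the stage order explicit.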

### Detailed Analysis

1. **KG Empowerment Stage**:

- Base model processes diversity instructions with few-shot examples

- Initial output: Entities or Relations (blue)

- Parameters (fire icon) passed on to the next stage

2. **EKG Enhancement Stage**:

- G-Micro/G-Mid models process enhanced instructions

- Output: Events (red) and Relations (blue)

- Parameters refined through iterative processing

3. **CKG Generalization Stage**:

- G-KG-LLM model handles abstract instructions

- Final output: Commonsense or Relations (gray)

- Parameters optimized for generalization
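The stage-to-stage parameter hand-off described above can be sketched as a simple loop in which each stage starts from the parameters produced by the previous one. This is a minimal illustration under assumptions: `run_pipeline`, `make_stage_update`, and the dictionary-of-floats parameter format are hypothetical, and the update function is a placeholder rather than the training procedure behind the diagram.

```python
from typing import Callable, Dict, List, Tuple

Params = Dict[str, float]
StageUpdate = Callable[[Params], Params]


def make_stage_update(tag: str) -> StageUpdate:
    """Build a placeholder update that stands in for one stage of training."""
    def update(params: Params) -> Params:
        new_params = dict(params)  # copy so earlier snapshots stay unchanged
        new_params[tag] = new_params.get(tag, 0.0) + 1.0
        return new_params
    return update


def run_pipeline(
    base_params: Params,
    stages: List[Tuple[str, StageUpdate]],
) -> Tuple[Params, List[Tuple[str, Params]]]:
    """Apply the stages in order, passing each stage's parameters to the next."""
    params = dict(base_params)
    history: List[Tuple[str, Params]] = []
    for name, update in stages:
        params = update(params)  # this stage starts from the previous result
        history.append((name, dict(params)))
    return params, history


stages = [
    ("KG Empowerment", make_stage_update("kg")),
    ("EKG Enhancement", make_stage_update("ekg")),
    ("CKG Generalization", make_stage_update("ckg")),
]
final_params, history = run_pipeline({"base": 1.0}, stages)
print(final_params)
```

The `history` list keeps a snapshot after every stage, which corresponds to the intermediate models (G-Micro/G-Mid, G-KG-LLM) that the diagram labels between stages.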

### Key Observations

1. Color-coded progression: Blue → Red → Gray outputs indicate increasing abstraction

2. Parameter flow: Fire icons show continuous optimization across stages

3. Input complexity: Instructions evolve from concrete examples to abstract prompts

4. Output diversity: Each stage produces multiple relation types with distinct colors

### Interpretation

The pipeline appears to build knowledge in three progressive steps:

1. Raw entity extraction (KG Empowerment) provides foundational data

2. Event modeling (EKG Enhancement) adds event-level structure, which may include temporal dynamics

3. Commonsense relations (CKG Generalization) enable reasoning beyond explicit facts

The parameter transfer between stages (blue arrows, fire icons) suggests that each stage's model starts from the parameters learned in the previous stage, which resembles staged fine-tuning more than knowledge distillation in the strict teacher-student sense. The color-coded outputs point to increasing semantic complexity. The few-shot examples in the first stage's input suggest that the system is meant to adapt from a small number of examples, although the diagram does not show how well it does so. The commonsense relations in the final stage imply that the system is intended to infer implicit connections that are not stated explicitly in its input.