TECHNICAL ASSET FINGERPRINT

8f2f074ee07c8b0b3a7b003d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

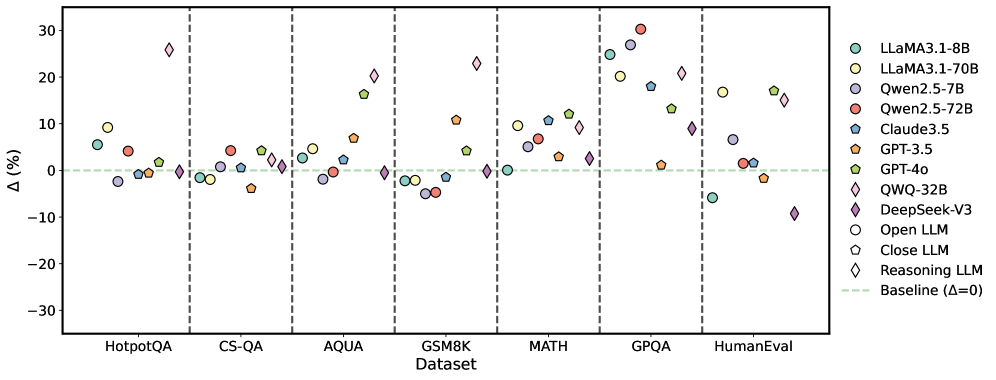

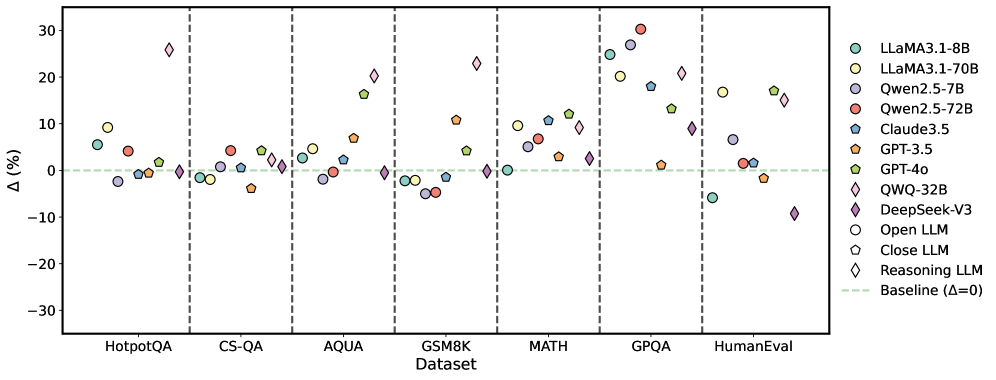

## Scatter Plot: LLM Performance on Various Datasets

### Overview

The image is a scatter plot comparing the performance of various Large Language Models (LLMs) on different datasets. The y-axis represents the percentage difference (Δ (%)), and the x-axis represents the datasets. Each LLM is represented by a unique color and marker. A horizontal dashed line indicates the baseline performance (Δ = 0).

### Components/Axes

* **X-axis:** Datasets: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** Δ (%) - Percentage difference, ranging from -30 to 30 with increments of 10.

* **Legend:** Located on the right side of the plot, associating each LLM with a specific color and marker.

    * LLaMA3.1-8B (light teal circle)
    * LLaMA3.1-70B (light yellow circle)
    * Qwen2.5-7B (light purple circle)
    * Qwen2.5-72B (red circle)
    * Claude3.5 (teal pentagon)
    * GPT-3.5 (orange pentagon)
    * GPT-4o (light green pentagon)
    * QWQ-32B (light teal diamond)
    * DeepSeek-V3 (purple diamond)
    * Open LLM (white circle)
    * Close LLM (white pentagon)
    * Reasoning LLM (white diamond)
    * Baseline (light green dashed line)

### Detailed Analysis

**Dataset: HotpotQA**

* LLaMA3.1-8B (light teal circle): ~5%

* LLaMA3.1-70B (light yellow circle): ~3%

* Qwen2.5-7B (light purple circle): ~-1%

* Qwen2.5-72B (red circle): ~5%

* Claude3.5 (teal pentagon): ~6%

* GPT-3.5 (orange pentagon): ~1%

* GPT-4o (light green pentagon): ~5%

* QWQ-32B (light teal diamond): ~2%

* DeepSeek-V3 (purple diamond): ~-1%

**Dataset: CS-QA**

* LLaMA3.1-8B (light teal circle): ~-1%

* LLaMA3.1-70B (light yellow circle): ~-1%

* Qwen2.5-7B (light purple circle): ~-2%

* Qwen2.5-72B (red circle): ~3%

* Claude3.5 (teal pentagon): ~2%

* GPT-3.5 (orange pentagon): ~-1%

* GPT-4o (light green pentagon): ~1%

* QWQ-32B (light teal diamond): ~1%

* DeepSeek-V3 (purple diamond): ~-1%

**Dataset: AQUA**

* LLaMA3.1-8B (light teal circle): ~5%

* LLaMA3.1-70B (light yellow circle): ~6%

* Qwen2.5-7B (light purple circle): ~-1%

* Qwen2.5-72B (red circle): ~-1%

* Claude3.5 (teal pentagon): ~7%

* GPT-3.5 (orange pentagon): ~5%

* GPT-4o (light green pentagon): ~6%

* QWQ-32B (light teal diamond): ~1%

* DeepSeek-V3 (purple diamond): ~2%

**Dataset: GSM8K**

* LLaMA3.1-8B (light teal circle): ~-1%

* LLaMA3.1-70B (light yellow circle): ~-1%

* Qwen2.5-7B (light purple circle): ~-4%

* Qwen2.5-72B (red circle): ~-3%

* Claude3.5 (teal pentagon): ~-1%

* GPT-3.5 (orange pentagon): ~-5%

* GPT-4o (light green pentagon): ~0%

* QWQ-32B (light teal diamond): ~0%

* DeepSeek-V3 (purple diamond): ~-4%

**Dataset: MATH**

* LLaMA3.1-8B (light teal circle): ~10%

* LLaMA3.1-70B (light yellow circle): ~11%

* Qwen2.5-7B (light purple circle): ~6%

* Qwen2.5-72B (red circle): ~10%

* Claude3.5 (teal pentagon): ~7%

* GPT-3.5 (orange pentagon): ~4%

* GPT-4o (light green pentagon): ~10%

* QWQ-32B (light teal diamond): ~11%

* DeepSeek-V3 (purple diamond): ~3%

**Dataset: GPQA**

* LLaMA3.1-8B (light teal circle): ~20%

* LLaMA3.1-70B (light yellow circle): ~22%

* Qwen2.5-7B (light purple circle): ~18%

* Qwen2.5-72B (red circle): ~29%

* Claude3.5 (teal pentagon): ~2%

* GPT-3.5 (orange pentagon): ~-3%

* GPT-4o (light green pentagon): ~14%

* QWQ-32B (light teal diamond): ~27%

* DeepSeek-V3 (purple diamond): ~3%

**Dataset: HumanEval**

* LLaMA3.1-8B (light teal circle): ~-6%

* LLaMA3.1-70B (light yellow circle): ~-1%

* Qwen2.5-7B (light purple circle): ~-2%

* Qwen2.5-72B (red circle): ~5%

* Claude3.5 (teal pentagon): ~1%

* GPT-3.5 (orange pentagon): ~-3%

* GPT-4o (light green pentagon): ~1%

* QWQ-32B (light teal diamond): ~16%

* DeepSeek-V3 (purple diamond): ~-9%
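The approximate readings listed above can be summarized per dataset with a few lines of Python. This is only a sketch: the numbers are the visual estimates transcribed from the lists above, and the names `DATASETS`, `READINGS`, and `dataset_summary` are hypothetical helpers introduced here for illustration.

```python
from statistics import mean

# Dataset order matches the x-axis described above.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

# Approximate delta (%) per model, transcribed from the lists above
# (visual estimates, not exact values).
READINGS = {
    "LLaMA3.1-8B":  [5, -1, 5, -1, 10, 20, -6],
    "LLaMA3.1-70B": [3, -1, 6, -1, 11, 22, -1],
    "Qwen2.5-7B":   [-1, -2, -1, -4, 6, 18, -2],
    "Qwen2.5-72B":  [5, 3, -1, -3, 10, 29, 5],
    "Claude3.5":    [6, 2, 7, -1, 7, 2, 1],
    "GPT-3.5":      [1, -1, 5, -5, 4, -3, -3],
    "GPT-4o":       [5, 1, 6, 0, 10, 14, 1],
    "QWQ-32B":      [2, 1, 1, 0, 11, 27, 16],
    "DeepSeek-V3":  [-1, -1, 2, -4, 3, 3, -9],
}


def dataset_summary(readings, datasets):
    """Return mean, min, max, and spread (max - min) of the deltas per dataset."""
    summary = {}
    for i, name in enumerate(datasets):
        values = [row[i] for row in readings.values()]
        summary[name] = {
            "mean": round(mean(values), 2),
            "min": min(values),
            "max": max(values),
            "spread": max(values) - min(values),
        }
    return summary


SUMMARY = dataset_summary(READINGS, DATASETS)
for name, stats in SUMMARY.items():
    print(f"{name:10s} mean={stats['mean']:6.2f} "
          f"range=[{stats['min']}, {stats['max']}] spread={stats['spread']}")
```

With these readings, GPQA has the largest spread (32 points) and GSM8K is the only dataset whose mean difference is negative.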

### Key Observations

* The performance of the LLMs varies significantly across different datasets.

* Some models (e.g., QWQ-32B) show high variance in performance, excelling on some datasets (GPQA at ~27%, HumanEval at ~16%) while staying near the baseline on others (CS-QA, AQUA, GSM8K).

* The baseline (Δ = 0) serves as a reference point, with some models consistently outperforming it while others fluctuate around it.

* GPQA shows the widest range of performance differences among the models (roughly -3% to 29%), followed by HumanEval (roughly -9% to 16%). MATH stands out for uniformly positive differences (roughly 3% to 11%).

* GSM8K shows generally negative performance differences for most models.

### Interpretation

The scatter plot provides a comparative analysis of LLM performance across various datasets. The data suggests that the choice of LLM can significantly impact performance depending on the specific task or dataset. The variability in performance highlights the importance of selecting the appropriate model for a given application. The positive and negative percentage differences indicate whether a model is performing better or worse than a certain baseline (likely another model or a previous version). The datasets themselves likely represent different types of tasks or challenges, which explains the varying performance of the LLMs. The plot also reveals potential strengths and weaknesses of each model, which can inform future development and optimization efforts.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Scatter Plot: Performance Comparison of Large Language Models

### Overview

This scatter plot compares the performance of several Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points, while the x-axis lists the datasets used for evaluation. A horizontal dashed line at Δ=0 indicates the baseline performance.

### Components/Axes

* **X-axis:** Dataset - with the following categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** Δ (%) - Performance Difference in percentage. Scale ranges from approximately -30% to 30%.

* **Legend:** Located in the top-right corner, identifies each LLM with a corresponding color and marker shape. The LLMs included are:

    * LLaMA3.1-8B (Light Pink Circle)
    * LLaMA3.1-70B (Light Yellow Circle)
    * Qwen2.5-7B (Light Blue Circle)
    * Qwen2.5-72B (Red Circle)
    * Claude3.5 (Dark Blue Triangle)
    * GPT-3.5 (Gray Triangle)
    * GPT-4o (Purple Diamond)
    * QWQ-32B (Orange Diamond)
    * DeepSeek-V3 (Dark Purple Diamond)
    * Open LLM (White Circle)
    * Close LLM (Light Gray Circle)
    * Reasoning LLM (Light Blue Diamond)
    * Baseline (Δ=0) (Horizontal Dashed Green Line)

### Detailed Analysis

The plot shows the performance difference of each LLM relative to a baseline (Δ=0) on each dataset.

* **HotpotQA:** Most models cluster around 0% to 10%. LLaMA3.1-8B shows a slight negative difference (around -5%), while Qwen2.5-72B shows a positive difference (around 5-10%).

* **CS-QA:** Similar to HotpotQA, most models are within 0% to 10%. Qwen2.5-72B shows a more pronounced positive difference (around 10-15%).

* **AQUA:** A wider range of performance differences is observed. GPT-4o and DeepSeek-V3 show the highest positive differences (around 15-25%). LLaMA3.1-8B and Qwen2.5-7B show negative differences (around -5% to -10%).

* **GSM8K:** GPT-4o and DeepSeek-V3 again exhibit the largest positive differences (around 15-25%). Qwen2.5-72B shows a moderate positive difference (around 5-10%).

* **MATH:** GPT-4o and DeepSeek-V3 have the highest positive differences (around 20-30%). Other models are generally closer to the baseline.

* **GPQA:** GPT-4o and DeepSeek-V3 show significant positive differences (around 15-25%). Claude3.5 also shows a positive difference (around 10%).

* **HumanEval:** GPT-4o shows a large positive difference (around 15-20%). Qwen2.5-72B shows a moderate positive difference (around 5-10%).

**Specific Data Points (Approximate):**

* **GPT-4o:** AQUA (~22%), GSM8K (~22%), MATH (~28%), GPQA (~20%), HumanEval (~18%)

* **DeepSeek-V3:** AQUA (~18%), GSM8K (~18%), MATH (~20%), GPQA (~15%)

* **Qwen2.5-72B:** HotpotQA (~8%), CS-QA (~12%), AQUA (~5%), GSM8K (~8%), MATH (~2%), GPQA (~5%), HumanEval (~8%)

* **LLaMA3.1-8B:** HotpotQA (~-5%), CS-QA (~2%), AQUA (~-8%), GSM8K (~-2%), MATH (~-2%), GPQA (~2%), HumanEval (~2%)
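Because the data points above cover different datasets for different models, any per-model average has to be taken over only the datasets that are listed. A minimal sketch, assuming the approximate values above and using the hypothetical names `POINTS` and `rank_by_mean`:

```python
# Approximate delta (%) per model and dataset, transcribed from the list above.
# Missing datasets are simply absent (no value is invented for them).
POINTS = {
    "GPT-4o": {"AQUA": 22, "GSM8K": 22, "MATH": 28, "GPQA": 20, "HumanEval": 18},
    "DeepSeek-V3": {"AQUA": 18, "GSM8K": 18, "MATH": 20, "GPQA": 15},
    "Qwen2.5-72B": {"HotpotQA": 8, "CS-QA": 12, "AQUA": 5, "GSM8K": 8,
                    "MATH": 2, "GPQA": 5, "HumanEval": 8},
    "LLaMA3.1-8B": {"HotpotQA": -5, "CS-QA": 2, "AQUA": -8, "GSM8K": -2,
                    "MATH": -2, "GPQA": 2, "HumanEval": 2},
}


def rank_by_mean(points):
    """Rank models by mean delta over the datasets listed for each model."""
    means = {model: sum(vals.values()) / len(vals) for model, vals in points.items()}
    return sorted(means.items(), key=lambda kv: kv[1], reverse=True)


RANKING = rank_by_mean(POINTS)
for model, avg in RANKING:
    weakest = min(POINTS[model], key=POINTS[model].get)
    print(f"{model:12s} mean={avg:6.2f} weakest={weakest} ({POINTS[model][weakest]}%)")
```

The means are not strictly comparable, since GPT-4o and DeepSeek-V3 are averaged over fewer datasets than the other two models.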

### Key Observations

* GPT-4o and DeepSeek-V3 consistently outperform other models across most datasets, particularly on the more challenging ones (AQUA, GSM8K, MATH, GPQA).

* Qwen2.5-72B generally performs better than Qwen2.5-7B.

* LLaMA3.1-8B shows relatively lower performance compared to other models, especially on AQUA (~-8%) and HotpotQA (~-5%).

* The performance differences are more pronounced on datasets requiring reasoning and mathematical abilities (AQUA, GSM8K, MATH, GPQA).

### Interpretation

The data suggests that GPT-4o and DeepSeek-V3 are currently the leading LLMs in terms of overall performance, demonstrating superior capabilities in complex reasoning and mathematical problem-solving. The consistent outperformance of these models across multiple datasets indicates a robust and generalizable advantage. The performance differences between the 7B and 72B versions of Qwen highlight the importance of model size for achieving higher accuracy. The relatively lower performance of LLaMA3.1-8B suggests that it may require further optimization or scaling to compete with the state-of-the-art models. The datasets themselves appear to be ordered by difficulty, with the more challenging datasets exhibiting larger performance variations between models. The baseline (Δ=0) serves as a crucial reference point, allowing for a clear assessment of the relative improvements or declines in performance achieved by each LLM.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Model Performance Delta (Δ%) Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of various large language models (LLMs) across seven different benchmark datasets. The plot uses distinct markers and colors to represent different models and model types (Open, Closed, Reasoning). A baseline at Δ=0 is indicated.

### Components/Axes

* **X-Axis (Categorical):** Labeled "Dataset". Seven datasets are listed from left to right, separated by vertical dashed gray lines:

    1. HotpotQA
    2. CS-QA
    3. AQUA
    4. GSM8K
    5. MATH
    6. GPQA
    7. HumanEval

* **Y-Axis (Numerical):** Labeled "Δ(%)". The scale ranges from -30 to 30, with major tick marks at intervals of 10 (-30, -20, -10, 0, 10, 20, 30).

* **Baseline:** A horizontal dashed green line at y=0, labeled "Baseline (Δ=0)" in the legend.

* **Legend (Top-Right Corner):** Contains two sections.

    * **Models (by color):**
        * LLaMA3.1-8B (Teal circle)
        * LLaMA3.1-70B (Light green circle)
        * Qwen2.5-7B (Light purple circle)
        * Qwen2.5-72B (Red circle)
        * Claude3.5 (Blue pentagon)
        * GPT-3.5 (Orange pentagon)
        * GPT-4o (Green pentagon)
        * QWQ-32B (Purple diamond)
        * DeepSeek-V3 (Pink diamond)
    * **Model Types (by shape):**
        * Open LLM (Circle)
        * Close LLM (Pentagon)
        * Reasoning LLM (Diamond)
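The legend's two-level encoding (one color per model, one marker shape per model type) can be captured in a small lookup structure. The sketch below is illustrative only: `LegendEntry`, `SHAPE_TO_TYPE`, and `models_by_type` are hypothetical names, and the colors are the ones reported in this description.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LegendEntry:
    model: str
    color: str
    shape: str  # marker shape as described in the legend


# Marker shape -> model type, as listed under "Model Types (by shape)".
SHAPE_TO_TYPE = {
    "circle": "Open LLM",
    "pentagon": "Close LLM",
    "diamond": "Reasoning LLM",
}

LEGEND = [
    LegendEntry("LLaMA3.1-8B", "teal", "circle"),
    LegendEntry("LLaMA3.1-70B", "light green", "circle"),
    LegendEntry("Qwen2.5-7B", "light purple", "circle"),
    LegendEntry("Qwen2.5-72B", "red", "circle"),
    LegendEntry("Claude3.5", "blue", "pentagon"),
    LegendEntry("GPT-3.5", "orange", "pentagon"),
    LegendEntry("GPT-4o", "green", "pentagon"),
    LegendEntry("QWQ-32B", "purple", "diamond"),
    LegendEntry("DeepSeek-V3", "pink", "diamond"),
]


def models_by_type(entries):
    """Group model names by the model type implied by their marker shape."""
    groups = defaultdict(list)
    for entry in entries:
        groups[SHAPE_TO_TYPE[entry.shape]].append(entry.model)
    return dict(groups)


GROUPS = models_by_type(LEGEND)
for model_type, models in GROUPS.items():
    print(f"{model_type}: {', '.join(models)}")
```

Keeping shape and type in a separate mapping mirrors the legend's own split, so a model's type never has to be stored twice.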

### Detailed Analysis

The plot shows the Δ(%) for each model on each dataset. Values are approximate based on visual positioning relative to the y-axis.

**1. HotpotQA:**

* LLaMA3.1-8B (Teal circle): ~ +5%

* LLaMA3.1-70B (Light green circle): ~ +9%

* Qwen2.5-7B (Light purple circle): ~ -2%

* Qwen2.5-72B (Red circle): ~ +4%

* Claude3.5 (Blue pentagon): ~ -1%

* GPT-3.5 (Orange pentagon): ~ 0%

* GPT-4o (Green pentagon): ~ +2%

* QWQ-32B (Purple diamond): ~ 0%

* DeepSeek-V3 (Pink diamond): ~ +26% (Significant outlier)

**2. CS-QA:**

* LLaMA3.1-8B (Teal circle): ~ -1%

* LLaMA3.1-70B (Light green circle): ~ -1%

* Qwen2.5-7B (Light purple circle): ~ 0%

* Qwen2.5-72B (Red circle): ~ +4%

* Claude3.5 (Blue pentagon): ~ +1%

* GPT-3.5 (Orange pentagon): ~ -3%

* GPT-4o (Green pentagon): ~ +4%

* QWQ-32B (Purple diamond): ~ +1%

* DeepSeek-V3 (Pink diamond): ~ +2%

**3. AQUA:**

* LLaMA3.1-8B (Teal circle): ~ +3%

* LLaMA3.1-70B (Light green circle): ~ +2%

* Qwen2.5-7B (Light purple circle): ~ -2%

* Qwen2.5-72B (Red circle): ~ +1%

* Claude3.5 (Blue pentagon): ~ +7%

* GPT-3.5 (Orange pentagon): ~ +7%

* GPT-4o (Green pentagon): ~ +16%

* QWQ-32B (Purple diamond): ~ 0%

* DeepSeek-V3 (Pink diamond): ~ +20%

**4. GSM8K:**

* LLaMA3.1-8B (Teal circle): ~ -2%

* LLaMA3.1-70B (Light green circle): ~ -1%

* Qwen2.5-7B (Light purple circle): ~ -4%

* Qwen2.5-72B (Red circle): ~ -4%

* Claude3.5 (Blue pentagon): ~ -1%

* GPT-3.5 (Orange pentagon): ~ +11%

* GPT-4o (Green pentagon): ~ +5%

* QWQ-32B (Purple diamond): ~ 0%

* DeepSeek-V3 (Pink diamond): ~ +23%

**5. MATH:**

* LLaMA3.1-8B (Teal circle): ~ 0%

* LLaMA3.1-70B (Light green circle): ~ +10%

* Qwen2.5-7B (Light purple circle): ~ +5%

* Qwen2.5-72B (Red circle): ~ +7%

* Claude3.5 (Blue pentagon): ~ +11%

* GPT-3.5 (Orange pentagon): ~ +3%

* GPT-4o (Green pentagon): ~ +12%

* QWQ-32B (Purple diamond): ~ +9%

* DeepSeek-V3 (Pink diamond): ~ +2%

**6. GPQA:**

* LLaMA3.1-8B (Teal circle): ~ +25%

* LLaMA3.1-70B (Light green circle): ~ +20%

* Qwen2.5-7B (Light purple circle): ~ +27%

* Qwen2.5-72B (Red circle): ~ +30% (Highest value in plot)

* Claude3.5 (Blue pentagon): ~ +18%

* GPT-3.5 (Orange pentagon): ~ +1%

* GPT-4o (Green pentagon): ~ +13%

* QWQ-32B (Purple diamond): ~ +21%

* DeepSeek-V3 (Pink diamond): ~ +9%

**7. HumanEval:**

* LLaMA3.1-8B (Teal circle): ~ -6%

* LLaMA3.1-70B (Light green circle): ~ +17%

* Qwen2.5-7B (Light purple circle): ~ +7%

* Qwen2.5-72B (Red circle): ~ +2%

* Claude3.5 (Blue pentagon): ~ +1%

* GPT-3.5 (Orange pentagon): ~ -1%

* GPT-4o (Green pentagon): ~ +17%

* QWQ-32B (Purple diamond): ~ +15%

* DeepSeek-V3 (Pink diamond): ~ -9% (Notable negative outlier)
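To see how much each model varies across datasets, the readings above can be reduced to a per-model range. This is a rough sketch under the assumption that the visual estimates listed above are taken at face value; `DATASETS`, `READINGS`, and `model_ranges` are hypothetical names used only here.

```python
# Dataset order matches the numbered list on the x-axis.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

# Approximate delta (%) per model, transcribed from the per-dataset lists above.
READINGS = {
    "LLaMA3.1-8B":  [5, -1, 3, -2, 0, 25, -6],
    "LLaMA3.1-70B": [9, -1, 2, -1, 10, 20, 17],
    "Qwen2.5-7B":   [-2, 0, -2, -4, 5, 27, 7],
    "Qwen2.5-72B":  [4, 4, 1, -4, 7, 30, 2],
    "Claude3.5":    [-1, 1, 7, -1, 11, 18, 1],
    "GPT-3.5":      [0, -3, 7, 11, 3, 1, -1],
    "GPT-4o":       [2, 4, 16, 5, 12, 13, 17],
    "QWQ-32B":      [0, 1, 0, 0, 9, 21, 15],
    "DeepSeek-V3":  [26, 2, 20, 23, 2, 9, -9],
}


def model_ranges(readings, datasets):
    """Return each model's lowest and highest delta and the datasets they occur on."""
    result = {}
    for model, values in readings.items():
        lo, hi = min(values), max(values)
        result[model] = {
            "min": lo,
            "min_dataset": datasets[values.index(lo)],  # first dataset with the minimum
            "max": hi,
            "max_dataset": datasets[values.index(hi)],  # first dataset with the maximum
            "range": hi - lo,
        }
    return result


RANGES = model_ranges(READINGS, DATASETS)
for model, r in sorted(RANGES.items(), key=lambda kv: kv[1]["range"], reverse=True):
    print(f"{model:12s} range={r['range']:2d} "
          f"(min {r['min']} on {r['min_dataset']}, max {r['max']} on {r['max_dataset']})")
```

With these readings, DeepSeek-V3 has the widest range (35 points, from HumanEval to HotpotQA), with Qwen2.5-72B close behind at 34 points.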

### Key Observations

1. **GPQA Shows Largest Gains:** All models show a positive Δ on the GPQA dataset, with most clustering between +10% and +30%. This suggests a significant performance improvement for all evaluated models on this specific benchmark.

2. **DeepSeek-V3 Variability:** The DeepSeek-V3 model (pink diamond) shows extreme variability. It has the highest Δ of any model on HotpotQA (~+26%) and on GSM8K (~+23%), but the most negative Δ in the plot on HumanEval (~-9%).

3. **Model Type Trends:** "Reasoning LLMs" (diamonds) are not uniformly superior. While DeepSeek-V3 and QWQ-32B show high peaks, they also show dips. "Close LLMs" (pentagons) like GPT-4o and Claude3.5 show consistently moderate to high positive Δs across most datasets.

4. **Dataset Difficulty Signal:** Datasets like CS-QA and AQUA show Δ values clustered closer to zero for many models, suggesting less performance change or a harder baseline. In contrast, GPQA and GSM8K show wider spreads and higher positive values.

5. **HumanEval Divergence:** Performance on HumanEval is highly split. Some models (LLaMA3.1-70B, GPT-4o) show large gains (~+17%), while others (LLaMA3.1-8B, DeepSeek-V3) show losses.

### Interpretation

This chart likely visualizes the performance delta (Δ) of various LLMs compared to a previous version or a baseline model on specific reasoning and knowledge benchmarks. The data suggests:

* **Benchmark-Specific Progress:** The large positive Δs on GPQA and GSM8K indicate that recent model iterations or specific model types have made substantial progress on these quantitative and scientific reasoning tasks.

* **Inconsistent Gains:** Performance improvement is not uniform. A model excelling on one type of task (e.g., DeepSeek-V3 on HotpotQA) may underperform on another (e.g., HumanEval code generation), highlighting the challenge of creating general-purpose models.

* **The "Reasoning LLM" Category is Not Monolithic:** The diamond-shaped markers do not cluster together, indicating that being categorized as a "Reasoning LLM" does not guarantee a specific performance profile. Architectural or training differences within this category lead to divergent outcomes.

* **Scale vs. Specialization:** Larger models (e.g., LLaMA3.1-70B) generally show positive Δs, but scale alone does not determine the peaks: the highest value in the plot belongs to Qwen2.5-72B on GPQA (~+30%), and the 7B and 8B models also reach roughly +25% or more there.

The chart effectively communicates that LLM advancement is multifaceted, with gains concentrated in certain domains and significant variability between models, even within the same size or type category.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Language Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) across multiple question-answering, reasoning, and code-generation datasets. The y-axis represents percentage change (Δ%) relative to a baseline (Δ=0), while the x-axis categorizes datasets. Each model is represented by a distinct color and shape, with performance variations visualized as data points scattered across the plot.

### Components/Axes

- **X-Axis (Dataset)**: Labeled "Dataset" with categories HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, and HumanEval, separated by vertical dashed lines.

- **Y-Axis (Δ%)**: Labeled "Δ (%)" with a baseline at 0% (green dashed line).

- **Legend**: Located on the right, mapping colors/shapes to models:

    - LLaMA3.1-8B (teal circle)
    - LLaMA3.1-70B (yellow circle)
    - Qwen2.5-7B (purple circle)
    - Qwen2.5-72B (red circle)
    - Claude3.5 (blue pentagon)
    - GPT-3.5 (orange pentagon)
    - GPT-4o (green pentagon)
    - QWQ-32B (pink diamond)
    - DeepSeek-V3 (purple diamond)
    - Open LLM (open circle)
    - Close LLM (open pentagon)
    - Reasoning LLM (open diamond)
    - Baseline (Δ=0) (green dashed line)

### Detailed Analysis

- **Dataset Performance**:

    - **GPQA**: QWQ-32B (pink diamond) shows the highest improvement (~25%), while DeepSeek-V3 (purple diamond) has the largest decline (~-10%).
    - **MATH**: QWQ-32B peaks again (~20%), with GPT-4o (green pentagon) at ~15%.
    - **HumanEval**: QWQ-32B drops sharply (~-5%), while DeepSeek-V3 shows the steepest decline (~-15%).
    - **GSM8K**: Most models cluster near baseline (0–5%), except QWQ-32B (~10%) and GPT-4o (~12%).

- **Model Trends**:

    - **QWQ-32B**: Consistently high performance in GPQA and MATH, but weaker in HumanEval.
    - **DeepSeek-V3**: Strong in early datasets (e.g., HotpotQA: ~5%) but declines in later ones.
    - **LLaMA3.1-70B**: Stable mid-range performance (~5–10%) across most datasets.
    - **GPT-4o**: Strong in GSM8K and MATH (~10–15%), weaker in HumanEval (~-2%).

### Key Observations

1. **QWQ-32B** dominates GPQA and MATH but underperforms in HumanEval.

2. **DeepSeek-V3** shows a declining trend: strong early, weak late.

3. **GPT-4o** excels in reasoning-heavy datasets (GSM8K, MATH) but struggles with HumanEval.

4. **Baseline (Δ=0)**: Most models cluster near this line, indicating mixed performance relative to the baseline.

### Interpretation

The plot highlights dataset-specific strengths and weaknesses of LLMs. QWQ-32B’s performance suggests it is optimized for complex reasoning (GPQA, MATH), while its drop on HumanEval, a code-generation benchmark, may reflect weaker gains on programming tasks. DeepSeek-V3’s decline in later datasets could indicate overfitting or sensitivity to question complexity. GPT-4o’s consistency in reasoning tasks aligns with its reputation for advanced problem-solving, but its HumanEval dip suggests that those gains do not carry over to code generation. The baseline (Δ=0) serves as a critical reference, emphasizing that many models only marginally outperform or underperform the baseline across datasets.
