## Diagram: Knowledge Distillation Taxonomy

### Overview

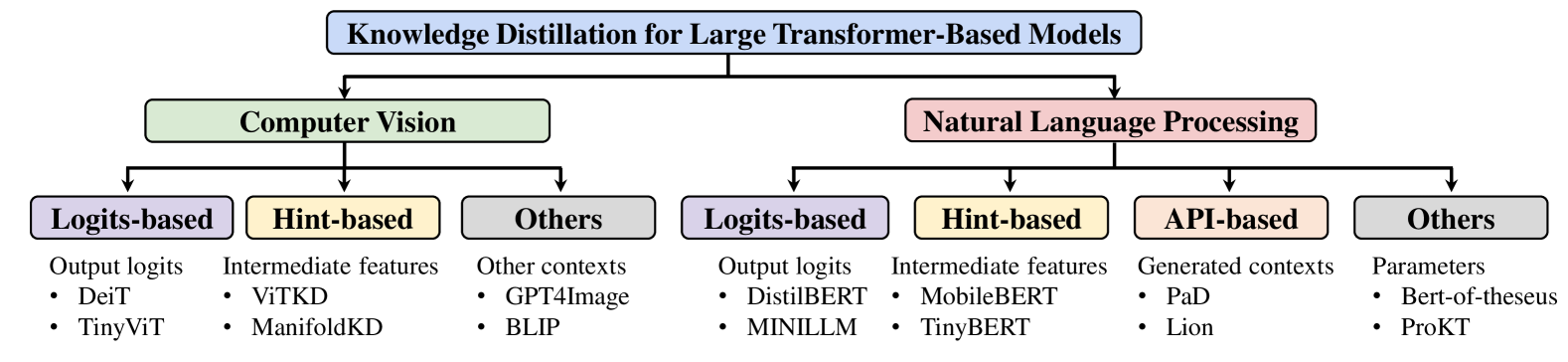

The image is a hierarchical diagram presenting a taxonomy of knowledge distillation techniques for large transformer-based models. The root topic branches into two application domains: Computer Vision and Natural Language Processing. Each domain is subdivided by the type of knowledge transferred from teacher to student: Logits-based, Hint-based, and Others, with Natural Language Processing adding a fourth category, API-based. Each subcategory lists two representative models or techniques.

### Components

* **Main Title:** Knowledge Distillation for Large Transformer-Based Models (Blue box at the top)

* **First Level Categories:**
    * Computer Vision (Green box, left side)
    * Natural Language Processing (Red box, right side)

* **Second Level Categories (Computer Vision):**
    * Logits-based (Purple box, left)
        * Description: Output logits
    * Hint-based (Yellow box, center-left)
        * Description: Intermediate features
    * Others (Gray box, right-left)
        * Description: Other contexts

* **Second Level Categories (Natural Language Processing):**
    * Logits-based (Purple box, left)
        * Description: Output logits
    * Hint-based (Yellow box, center-left)
        * Description: Intermediate features
    * API-based (Orange box, center-right)
        * Description: Generated contexts
    * Others (Gray box, right)
        * Description: Parameters

### Content Details

**Computer Vision:**

* **Logits-based:**
    * DeiT
    * TinyViT
* **Hint-based:**
    * ViTKD
    * ManifoldKD
* **Others:**
    * GPT4Image
    * BLIP

**Natural Language Processing:**

* **Logits-based:**
    * DistilBERT
    * MINILLM
* **Hint-based:**
    * MobileBERT
    * TinyBERT
* **API-based:**
    * PaD
    * Lion
* **Others:**
    * Bert-of-theseus
    * ProKT

### Key Observations

* The diagram organizes knowledge distillation methods in three levels: application domain, type of knowledge transferred, and representative methods.

* Computer Vision and Natural Language Processing are the two main application areas.

* Logits-based and Hint-based approaches are common to both domains.

* Natural Language Processing has an additional category, API-based, which is not present in Computer Vision.

* The "Others" category exists in both domains but is described differently: "Other contexts" in Computer Vision and "Parameters" in Natural Language Processing. It collects techniques that do not fit the Logits-based, Hint-based, or API-based categories.
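The observations above can be checked mechanically. The following is a minimal sketch that transcribes only the category labels and descriptions from the diagram into a nested mapping and compares the two domains with set operations. The variable names (`TAXONOMY`, `shared`, `nlp_only`, `relabelled`) are illustrative and not part of the diagram.

```python
# Category -> description, transcribed from the diagram's second-level boxes.
TAXONOMY = {
    "Computer Vision": {
        "Logits-based": "Output logits",
        "Hint-based": "Intermediate features",
        "Others": "Other contexts",
    },
    "Natural Language Processing": {
        "Logits-based": "Output logits",
        "Hint-based": "Intermediate features",
        "API-based": "Generated contexts",
        "Others": "Parameters",
    },
}

cv = TAXONOMY["Computer Vision"]
nlp = TAXONOMY["Natural Language Processing"]

# Categories that appear in both domains.
shared = sorted(set(cv) & set(nlp))
# Categories that appear only under Natural Language Processing.
nlp_only = sorted(set(nlp) - set(cv))
# Shared categories whose description differs between the two domains.
relabelled = sorted(name for name in shared if cv[name] != nlp[name])

print("shared:", shared)
print("NLP only:", nlp_only)
print("described differently:", relabelled)
```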

### Interpretation

The diagram maps knowledge distillation techniques for large transformer-based models across Computer Vision and Natural Language Processing. The categories are distinguished by the information used to transfer knowledge from a larger, more complex model (teacher) to a smaller, more efficient model (student). Logits-based methods match the teacher's output logits or the probabilities derived from them, Hint-based methods match intermediate feature representations, and API-based methods rely on generated contexts, which the name suggests are obtained by querying a teacher through its API. The "Others" category covers approaches that fall outside these groups.
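To make the distinction between the first two categories concrete, here is a minimal sketch of one common form of each loss, using only the Python standard library. It assumes the classic soft-target formulation for the logits-based case (KL divergence between temperature-softened distributions, scaled by the squared temperature) and a plain mean squared error for the hint-based case. The function names and the default temperature are illustrative, and the methods listed in the diagram may use different objectives. The hint loss also assumes teacher and student features already have the same length; in practice a projection is often needed when they differ.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def logits_kd_loss(teacher_logits, student_logits, temperature=2.0):
    """Logits-based sketch: KL(teacher || student) on softened outputs.

    The result is multiplied by temperature**2, a common convention that
    keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


def hint_loss(teacher_features, student_features):
    """Hint-based sketch: mean squared error between intermediate features.

    Assumes both feature vectors have the same length.
    """
    if len(teacher_features) != len(student_features):
        raise ValueError("feature vectors must have the same length")
    n = len(teacher_features)
    return sum((t - s) ** 2 for t, s in zip(teacher_features, student_features)) / n


# Hypothetical values, chosen only to exercise the functions.
teacher_logits = [1.0, 2.0, 3.0]
student_logits = [3.0, 2.0, 1.0]
print(logits_kd_loss(teacher_logits, student_logits))
print(hint_loss([1.0, 2.0], [1.0, 4.0]))
```

The logits-based loss needs only the final outputs of both models, while the hint-based loss needs access to an internal layer of the teacher.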