## Diagram: Knowledge Distillation for Large Transformer-Based Models

### Overview

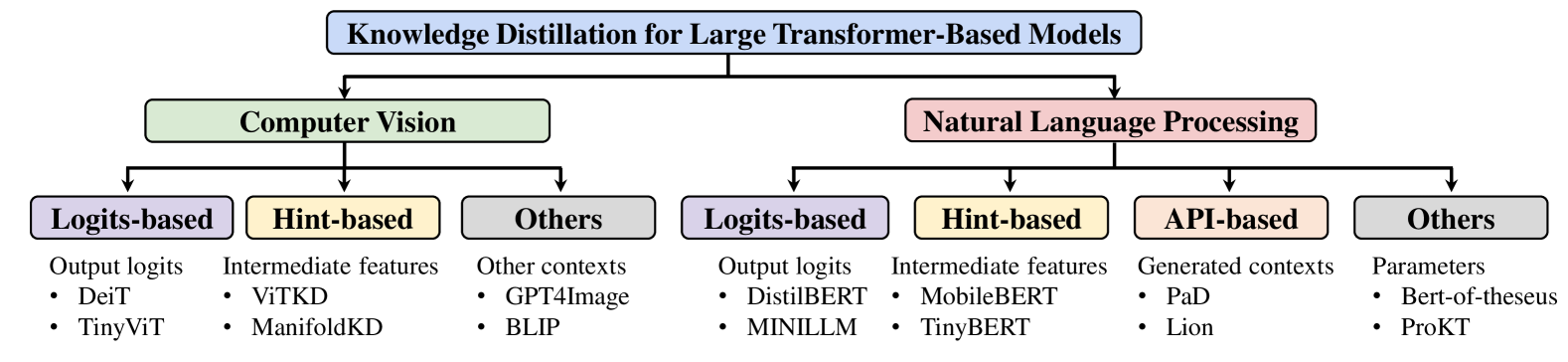

This diagram is a taxonomy of knowledge distillation approaches for large transformer-based models. It is organized first by application domain (Computer Vision and Natural Language Processing) and then by distillation method. The structure is hierarchical: the title branches into the two domains, and each domain branches into its method categories.

### Components/Axes

The diagram consists of a central title, two main branches labeled "Computer Vision" and "Natural Language Processing", and sub-branches for the distillation methods in each domain. Both domains have "Logits-based", "Hint-based", and "Others" sub-branches; "API-based" appears only under Natural Language Processing. Each sub-branch lists the specific models or techniques associated with that approach.

### Detailed Analysis or Content Details

The diagram is structured as follows:

**Header:** "Knowledge Distillation for Large Transformer-Based Models" – positioned at the top-center.

**Level 1 Branches:**

* **Computer Vision** – positioned to the left of the header, connected by a downward arrow.

* **Natural Language Processing** – positioned to the right of the header, connected by a downward arrow.

**Level 2 Branches (under Computer Vision):**

* **Logits-based** – positioned below "Computer Vision", connected by a downward arrow.
  * Lists: "Output logits", "Deit", "TinyViT"

* **Hint-based** – positioned to the right of "Logits-based", connected by a downward arrow.
  * Lists: "Intermediate features", "ViTKD", "ManifoldKD"

* **Others** – positioned to the right of "Hint-based", connected by a downward arrow.
  * Lists: "Other contexts", "GPT4Image", "BLIP"

**Level 2 Branches (under Natural Language Processing):**

* **Logits-based** – positioned below "Natural Language Processing", connected by a downward arrow.
  * Lists: "Output logits", "DistilBERT", "MINILLM"

* **Hint-based** – positioned to the right of "Logits-based", connected by a downward arrow.
  * Lists: "Intermediate features", "MobileBERT", "TinyBERT"

* **API-based** – positioned to the right of "Hint-based", connected by a downward arrow.
  * Lists: "Generated contexts", "PaD", "Lion"

* **Others** – positioned to the right of "API-based", connected by a downward arrow.
  * Lists: "Parameters", "Bert-of-theseus", "ProKT"
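The hierarchy described above can also be written down as a nested mapping, which makes the differences between the two domains easy to query. The sketch below is only a transcription of the labels listed in this section. It assumes that the first entry in each list names what is transferred from teacher to student and that the remaining entries are example methods; that split is a reading of the diagram, not something it states. The names `TAXONOMY`, `categories`, and `domain_specific` are hypothetical.

```python
# Nested mapping: domain -> category -> what is transferred + example methods.
# ASSUMPTION: the first label in each list is the transferred knowledge and the
# rest are example methods; the diagram description does not state this split.
TAXONOMY = {
    "Computer Vision": {
        "Logits-based": {"transfers": "Output logits", "examples": ["Deit", "TinyViT"]},
        "Hint-based": {"transfers": "Intermediate features", "examples": ["ViTKD", "ManifoldKD"]},
        "Others": {"transfers": "Other contexts", "examples": ["GPT4Image", "BLIP"]},
    },
    "Natural Language Processing": {
        "Logits-based": {"transfers": "Output logits", "examples": ["DistilBERT", "MINILLM"]},
        "Hint-based": {"transfers": "Intermediate features", "examples": ["MobileBERT", "TinyBERT"]},
        "API-based": {"transfers": "Generated contexts", "examples": ["PaD", "Lion"]},
        "Others": {"transfers": "Parameters", "examples": ["Bert-of-theseus", "ProKT"]},
    },
}


def categories(domain):
    """Return the category names of a domain in left-to-right diagram order."""
    return list(TAXONOMY[domain])


def domain_specific(domain, other):
    """Return the categories that appear under `domain` but not under `other`."""
    return sorted(set(TAXONOMY[domain]) - set(TAXONOMY[other]))


if __name__ == "__main__":
    for name in TAXONOMY:
        print(name, "->", categories(name))
    print(domain_specific("Natural Language Processing", "Computer Vision"))
```

Comparing the two domains this way shows which categories are shared and which appear under only one of them.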

### Key Observations

The set of distillation categories differs between the two domains. Computer Vision has three: Logits-based, Hint-based, and Others (labeled "Other contexts"). Natural Language Processing has four: Logits-based, Hint-based, API-based, and Others (labeled "Parameters"). API-based is the only category that appears under one domain but not the other. The "Others" category in each domain groups techniques that fit none of the named categories, and its label differs between the two domains.

### Interpretation

This diagram provides a taxonomy of knowledge distillation approaches for large transformer models. Because the categories differ between Computer Vision and Natural Language Processing, it suggests that the applicable distillation methods depend on the domain, although the diagram itself gives no reason for the difference. The specific model names (e.g., Deit, DistilBERT) give concrete examples for each category. The branching structure is hierarchical: the main categories (Logits-based, Hint-based, etc.) represent broad strategies, and the listed models represent specific implementations of those strategies. The diagram does not provide quantitative data or performance comparisons; it serves as a conceptual overview of the field.
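To make the difference between the two categories shared by both domains concrete, here is a minimal sketch of what "output logits" and "intermediate features" distillation typically optimize. It assumes the classic soft-target formulation (KL divergence between temperature-softened teacher and student distributions, scaled by the squared temperature) for the logits-based case, and a plain mean squared error between feature vectors for the hint-based case. The diagram does not specify any loss, and the listed models may use different or additional terms. The function names and the default temperature are illustrative.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_sum = peak + math.log(sum(math.exp(s - peak) for s in scaled))
    return [s - log_sum for s in scaled]


def logits_kd_loss(student_logits, teacher_logits, temperature=2.0):
    """Logits-based sketch: T^2 * KL(teacher || student) on softened outputs."""
    log_p = log_softmax(teacher_logits, temperature)  # teacher
    log_q = log_softmax(student_logits, temperature)  # student
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    return temperature ** 2 * kl


def hint_loss(student_features, teacher_features):
    """Hint-based sketch: mean squared error between intermediate features.

    ASSUMPTION: both feature vectors already have the same length; real
    methods often add a learned projection when the widths differ.
    """
    if len(student_features) != len(teacher_features):
        raise ValueError("feature vectors must have the same length")
    squared = [(s - t) ** 2 for s, t in zip(student_features, teacher_features)]
    return sum(squared) / len(squared)


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    student = [1.5, 1.2, 0.3]
    print("logits-based loss:", logits_kd_loss(student, teacher))
    print("hint-based loss:", hint_loss([1.0, 2.0], [1.0, 4.0]))
```

The logits-based loss only needs the teacher's final outputs, whereas the hint-based loss requires access to the teacher's internal activations.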