## Diagram: Knowledge Distillation for Large Transformer-Based Models

### Overview

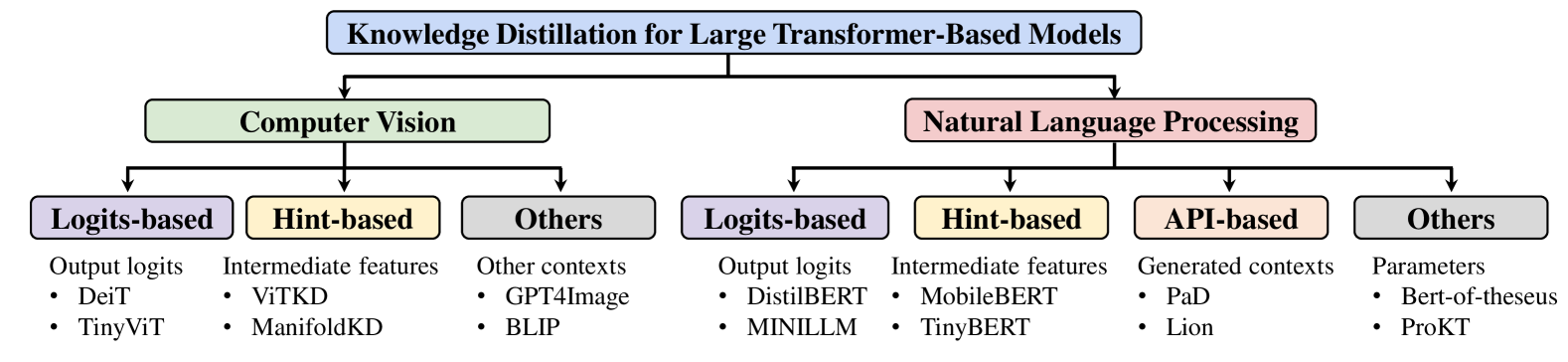

The image is a hierarchical taxonomy diagram that categorizes knowledge distillation techniques for large transformer-based models. It organizes methods into two primary domains (Computer Vision and Natural Language Processing), subdivides each domain by the type of distillation signal or approach used, and lists specific example models for each category.

### Components/Axes

The diagram is structured as a top-down tree with the following components:

1. **Root Node (Top Center):** A blue rectangular box containing the main title: "Knowledge Distillation for Large Transformer-Based Models".

2. **Primary Branches (Second Level):** Two arrows descend from the root node to two main category boxes:

   * **Left Branch:** A green box labeled "Computer Vision".
   * **Right Branch:** A pink box labeled "Natural Language Processing".

3. **Secondary Branches (Third Level):** Arrows descend from each primary category to sub-category boxes.

   * **Under "Computer Vision":** Three boxes.
     * Left: A purple box labeled "Logits-based".
     * Center: A yellow box labeled "Hint-based".
     * Right: A grey box labeled "Others".
   * **Under "Natural Language Processing":** Four boxes.
     * Left: A purple box labeled "Logits-based".
     * Center-Left: A yellow box labeled "Hint-based".
     * Center-Right: A peach box labeled "API-based".
     * Right: A grey box labeled "Others".

4. **Descriptive Text & Examples (Fourth Level):** Below each third-level sub-category box, there is a text block consisting of a brief description followed by a bulleted list of example models or methods.

### Detailed Analysis

The diagram's content is fully transcribed below, organized by the primary domain.

**Computer Vision Branch:**

* **Sub-category: Logits-based**
    * Description: "Output logits"
    * Examples:
        * DeiT
        * TinyViT
* **Sub-category: Hint-based**
    * Description: "Intermediate features"
    * Examples:
        * ViTKD
        * ManifoldKD
* **Sub-category: Others**
    * Description: "Other contexts"
    * Examples:
        * GPT4Image
        * BLIP

**Natural Language Processing Branch:**

* **Sub-category: Logits-based**
    * Description: "Output logits"
    * Examples:
        * DistilBERT
        * MINILLM
* **Sub-category: Hint-based**
    * Description: "Intermediate features"
    * Examples:
        * MobileBERT
        * TinyBERT
* **Sub-category: API-based**
    * Description: "Generated contexts"
    * Examples:
        * PaD
        * Lion
* **Sub-category: Others**
    * Description: "Parameters"
    * Examples:
        * Bert-of-theseus
        * ProKT
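
The transcription above can also be held as a small data structure, which makes the diagram's structure easy to query. The following is a minimal sketch, not part of the diagram: the names `TAXONOMY`, `shared_categories`, and `domain_specific` are hypothetical, and the labels are copied verbatim from the transcription.

```python
# Taxonomy transcribed from the diagram:
# domain -> sub-category -> (description, example models).
# The dictionary layout is an illustrative assumption, not part of the diagram.
TAXONOMY = {
    "Computer Vision": {
        "Logits-based": ("Output logits", ["DeiT", "TinyViT"]),
        "Hint-based": ("Intermediate features", ["ViTKD", "ManifoldKD"]),
        "Others": ("Other contexts", ["GPT4Image", "BLIP"]),
    },
    "Natural Language Processing": {
        "Logits-based": ("Output logits", ["DistilBERT", "MINILLM"]),
        "Hint-based": ("Intermediate features", ["MobileBERT", "TinyBERT"]),
        "API-based": ("Generated contexts", ["PaD", "Lion"]),
        "Others": ("Parameters", ["Bert-of-theseus", "ProKT"]),
    },
}


def shared_categories(taxonomy):
    """Return the sub-category names that appear under every domain."""
    name_sets = [set(categories) for categories in taxonomy.values()]
    return set.intersection(*name_sets)


def domain_specific(taxonomy):
    """Map each domain to the sub-categories that no other domain has."""
    result = {}
    for domain, categories in taxonomy.items():
        elsewhere = set()
        for other_domain, other_categories in taxonomy.items():
            if other_domain != domain:
                elsewhere |= set(other_categories)
        result[domain] = set(categories) - elsewhere
    return result


if __name__ == "__main__":
    print(sorted(shared_categories(TAXONOMY)))
    print(domain_specific(TAXONOMY))
```

Querying the structure this way separates the sub-category labels that both domains share from the ones that appear under only one domain.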

### Key Observations

1. **Structural Symmetry:** The "Logits-based" and "Hint-based" categories appear in both the CV and NLP domains with identical labels, descriptions ("Output logits" and "Intermediate features"), and color coding (purple and yellow, respectively). This suggests that these are fundamental, cross-domain distillation paradigms.

2. **Domain-Specific Categories:** The NLP branch includes an additional category, "API-based" (peach box), which is not present under Computer Vision. This suggests an approach that is specific to, or more prevalent in, language model distillation.

3. **Divergent "Others" Categories:** The "Others" category (grey box) exists in both domains but carries different descriptive text and examples, reflecting domain-specific alternative techniques. In CV it refers to "Other contexts," while in NLP it refers to "Parameters."

4. **Model Examples:** Each sub-category lists two named models or methods from its field (e.g., DistilBERT and TinyBERT for NLP; DeiT and ViTKD for CV), which serve as concrete instantiations of each distillation approach.

### Interpretation

This diagram serves as a **conceptual taxonomy** for the field of knowledge distillation applied to transformer models. It does not present empirical data or performance metrics but rather organizes the landscape of methodological approaches.

* **What it demonstrates:** The primary insight is that knowledge distillation is not a monolithic technique. The field has evolved specialized strategies based on *what* knowledge is transferred (logits, intermediate features, generated contexts, parameters) and the *context* in which it is applied (vision vs. language tasks).

* **Relationship between elements:** The hierarchy groups methods by application domain first (CV or NLP) and by distillation signal second. The diagram does not claim that one grouping matters more than the other; it only fixes the order of presentation. The color coding reinforces that similar methodological families (Logits-based, Hint-based) exist across domains, while the one unique category (API-based) highlights a domain-specific approach.

* **Notable patterns:** "API-based" distillation appears only under NLP. Given its description, "Generated contexts," it likely refers to methods where a large "teacher" model is queried through an API to generate training contexts or data for a smaller "student" model, a paradigm that fits generative language tasks naturally. The NLP "Others" category, described as "Parameters" (e.g., Bert-of-theseus, which progressively replaces modules of the original model with compact substitutes), points to direct manipulation of the model's components as a distinct form of knowledge transfer.

* **Purpose and utility:** This diagram is a foundational reference for researchers or engineers. It helps answer: "Given my domain (vision or language), what are the high-level families of distillation techniques available, and what are some representative models I should look at?" It provides a structured entry point into the literature, mapping broad strategies to specific, citable works.
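
To make the two cross-domain signals concrete, here is a minimal sketch of a logits-based loss and a hint-based loss in plain Python. It is an illustration under stated assumptions, not the objective of any model named in the diagram. The logits-based loss is assumed to be the common temperature-softened KL divergence scaled by the squared temperature; the hint-based loss is assumed to be a mean squared error between feature vectors of equal length, whereas practical methods often add a learned projection. The function names and the default temperature are hypothetical.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    log_total = math.log(sum(math.exp(x - peak) for x in scaled))
    return [x - peak - log_total for x in scaled]


def logits_kd_loss(student_logits, teacher_logits, temperature=2.0):
    """Logits-based signal (assumed form): KL(teacher || student) on
    temperature-softened output distributions, scaled by temperature**2."""
    log_p = log_softmax(teacher_logits, temperature)
    log_q = log_softmax(student_logits, temperature)
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    return kl * temperature ** 2


def hint_loss(student_features, teacher_features):
    """Hint-based signal (assumed form): mean squared error between
    intermediate feature vectors of the same length."""
    if len(student_features) != len(teacher_features):
        raise ValueError("feature vectors must have the same length")
    squared = [(s - t) ** 2 for s, t in zip(student_features, teacher_features)]
    return sum(squared) / len(squared)


if __name__ == "__main__":
    teacher = [1.0, 2.0, 3.0]
    student = [3.0, 2.0, 1.0]
    print(logits_kd_loss(student, teacher))      # positive: outputs disagree
    print(hint_loss([1.0, 2.0], [1.0, 4.0]))     # mean of (0, 4)
```

The logits-based loss needs only the teacher's output layer, while the hint-based loss needs access to the teacher's internal activations. That difference is one reason the two families sit in separate boxes in the diagram.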