## Flowchart: Knowledge Distillation for Large Transformer-Based Models

### Overview

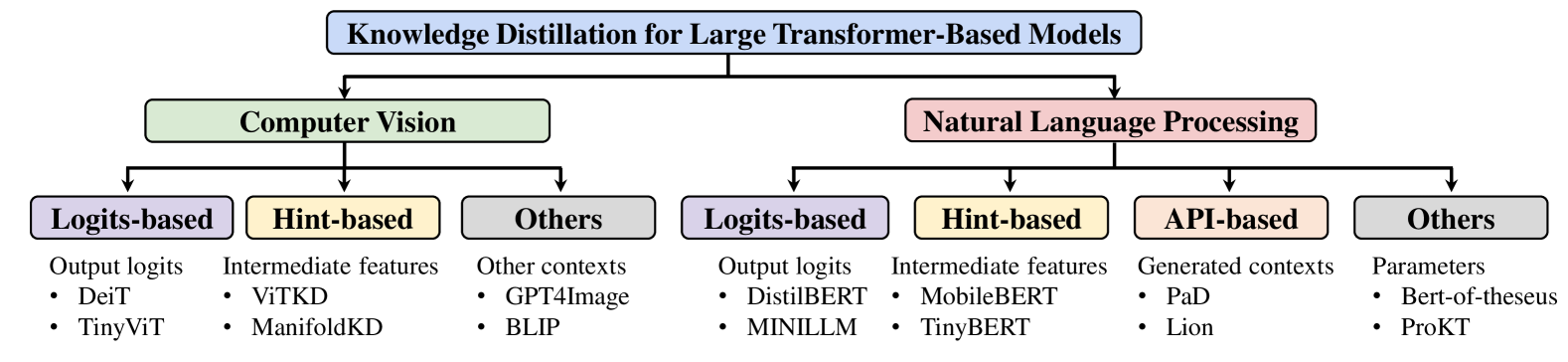

The diagram shows a hierarchical taxonomy of knowledge distillation techniques for large transformer-based models, split into two primary domains: **Computer Vision** and **Natural Language Processing (NLP)**. Both domains branch into Logits-based, Hint-based, and Others subcategories, and NLP adds an API-based branch. Each subcategory lists specific example models.

### Components/Axes

- **Main Title**: "Knowledge Distillation for Large Transformer-Based Models" (top center).

- **Primary Branches**:

  - **Computer Vision** (left, green rectangle).

  - **Natural Language Processing** (right, pink rectangle).

- **Subcategories**:

  - **Logits-based**: The student learns from the teacher's output logits (e.g., DeiT, TinyViT for CV; DistilBERT, MINILLM for NLP).

  - **Hint-based**: The student learns from the teacher's intermediate features (e.g., ViTKD, ManifoldKD for CV; MobileBERT, TinyBERT for NLP).

  - **API-based**: The student learns from generated contexts (e.g., PaD, Lion); this branch appears under NLP only.

  - **Others**: Knowledge drawn from contexts, parameters, or unrelated models (e.g., GPT4Image, BLIP for CV; Bert-of-theseus, ProKT for NLP).
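To make the first two subcategories concrete, here is a minimal sketch of the loss terms they usually imply. It is not taken from the diagram or from any of the listed models: the function names `logits_kd_loss` and `hint_loss`, the temperature of 2.0, and the use of plain Python lists are all assumptions made for illustration. The logits-based term follows the common temperature-softened KL formulation, and the hint-based term is a plain mean squared error that assumes student and teacher features already have the same length (in practice a learned projection is often needed).

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def logits_kd_loss(student_logits, teacher_logits, temperature=2.0):
    """Hypothetical helper: KL(teacher || student) on softened outputs.

    The result is multiplied by temperature**2, a common convention that
    keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # teacher distribution
    q = softmax(student_logits, temperature)  # student distribution
    kl = sum(pi * (math.log(pi) - math.log(qi))
             for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


def hint_loss(student_features, teacher_features):
    """Hypothetical helper: MSE between intermediate feature vectors.

    Assumes both vectors have the same length; no projection is applied.
    """
    if len(student_features) != len(teacher_features):
        raise ValueError("feature vectors must have the same length")
    n = len(student_features)
    return sum((s - t) ** 2
               for s, t in zip(student_features, teacher_features)) / n


if __name__ == "__main__":
    teacher = [2.0, 1.0, 0.1]
    student = [1.5, 1.2, 0.3]
    print("logits-based loss:", logits_kd_loss(student, teacher))
    print("hint-based loss:", hint_loss([0.2, 0.4], [0.1, 0.5]))
```

Both terms are zero when the student reproduces the teacher exactly, and a training objective would typically add them to the ordinary task loss with tunable weights.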

### Detailed Analysis

- **Computer Vision**:

  - **Logits-based**: DeiT, TinyViT (output logits).

  - **Hint-based**: ViTKD, ManifoldKD (intermediate features).

  - **Others**: GPT4Image, BLIP (other contexts).

- **Natural Language Processing**:

  - **Logits-based**: DistilBERT, MINILLM (output logits).

  - **Hint-based**: MobileBERT, TinyBERT (intermediate features).

  - **API-based**: PaD, Lion (generated contexts).

  - **Others**: Bert-of-theseus, ProKT (parameters).
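The hierarchy above maps naturally onto a nested dictionary. The sketch below is only an illustrative encoding of the diagram: the names `TAXONOMY`, `find_model`, and `categories_unique_to` are hypothetical, and the entries are copied from the lists in this section.

```python
# Illustrative encoding of the flowchart; all names here are hypothetical.
TAXONOMY = {
    "Computer Vision": {
        "Logits-based": ["DeiT", "TinyViT"],
        "Hint-based": ["ViTKD", "ManifoldKD"],
        "Others": ["GPT4Image", "BLIP"],
    },
    "Natural Language Processing": {
        "Logits-based": ["DistilBERT", "MINILLM"],
        "Hint-based": ["MobileBERT", "TinyBERT"],
        "API-based": ["PaD", "Lion"],
        "Others": ["Bert-of-theseus", "ProKT"],
    },
}


def find_model(model, taxonomy=TAXONOMY):
    """Return (domain, category) for a model name, or None if absent."""
    for domain, categories in taxonomy.items():
        for category, models in categories.items():
            if model in models:
                return (domain, category)
    return None


def categories_unique_to(domain, taxonomy=TAXONOMY):
    """List categories that appear under `domain` and no other domain."""
    elsewhere = set()
    for name, categories in taxonomy.items():
        if name != domain:
            elsewhere.update(categories)
    return [c for c in taxonomy[domain] if c not in elsewhere]


if __name__ == "__main__":
    print(find_model("TinyBERT"))
    print(categories_unique_to("Natural Language Processing"))
```

Querying the structure this way makes the asymmetry between the two domains explicit: the API-based branch is the only category that appears under one domain and not the other.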

### Key Observations

1. **Divergent Approaches**: Both domains use logits-based and hint-based distillation and each has an "Others" branch. The API-based branch appears under NLP only.

2. **Contents of "Others"**: The two "Others" branches hold different examples. Computer Vision lists GPT4Image and BLIP (other contexts), while NLP lists Bert-of-theseus and ProKT (parameters). These are methods whose knowledge source does not fit the logits, hint, or API categories.

3. **Hierarchical Structure**: The flowchart groups methods first by domain and then by knowledge source. NLP has four branches against three for Computer Vision.

### Interpretation

The diagram organizes knowledge distillation for transformers by the source of knowledge the student learns from. In both domains the student can match the teacher's output logits or its intermediate features. NLP adds an API-based branch, in which the knowledge is carried by generated contexts (PaD and Lion are the listed examples). The "Others" branches collect methods whose knowledge source is neither logits nor intermediate features: other contexts for Computer Vision and parameters for NLP. Within this taxonomy, NLP therefore covers one more distillation paradigm than Computer Vision.