## Diagram: Multilingual Text Processing Pipeline

### Overview

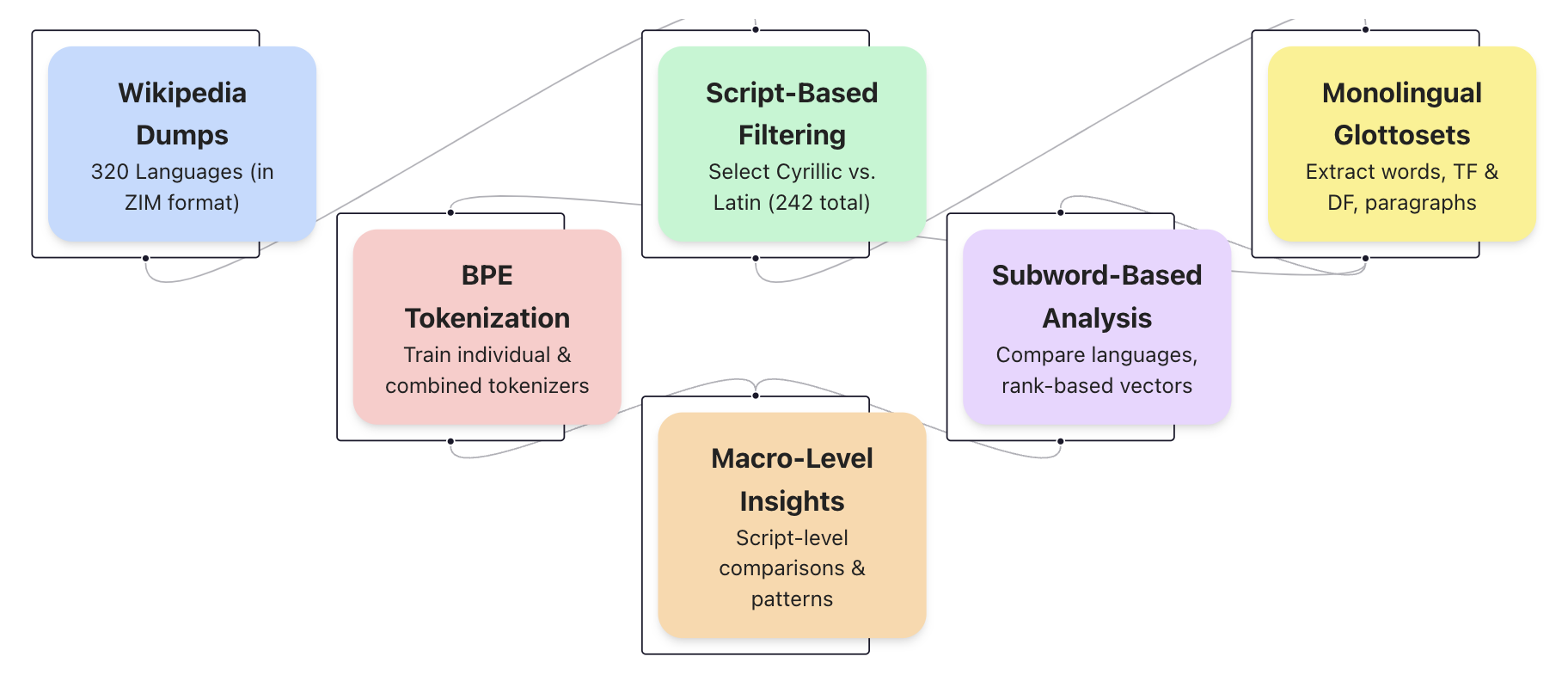

The image shows a flowchart of a six-stage pipeline for processing multilingual text data derived from Wikipedia. The flow runs broadly from left to right, from raw data acquisition to macro-level analysis, although the final box sits at the bottom center. Color-coded boxes represent the stages, and directional arrows show the workflow.

### Components

The diagram consists of six primary components (boxes) arranged in a staggered, left-to-right flow. Each box has a title and a brief description.

1. **Box 1 (Top-Left, Light Blue):**
   * **Title:** Wikipedia Dumps
   * **Description:** 320 Languages (in ZIM format)

2. **Box 2 (Center-Left, Light Pink):**
   * **Title:** BPE Tokenization
   * **Description:** Train individual & combined tokenizers

3. **Box 3 (Top-Center, Light Green):**
   * **Title:** Script-Based Filtering
   * **Description:** Select Cyrillic vs. Latin (242 total)

4. **Box 4 (Center-Right, Light Purple):**
   * **Title:** Subword-Based Analysis
   * **Description:** Compare languages, rank-based vectors

5. **Box 5 (Top-Right, Light Yellow):**
   * **Title:** Monolingual Glottosets
   * **Description:** Extract words, TF & DF, paragraphs

6. **Box 6 (Bottom-Center, Light Orange):**
   * **Title:** Macro-Level Insights
   * **Description:** Script-level comparisons & patterns

**Flow Connections (Arrows):**

* A primary arrow flows from **Wikipedia Dumps** to **Script-Based Filtering**.

* A secondary arrow flows from **Wikipedia Dumps** to **BPE Tokenization**.

* An arrow flows from **BPE Tokenization** to **Script-Based Filtering**.

* An arrow flows from **Script-Based Filtering** to **Monolingual Glottosets**.

* An arrow flows from **Script-Based Filtering** to **Subword-Based Analysis**.

* An arrow flows from **Monolingual Glottosets** to **Subword-Based Analysis**.

* An arrow flows from **Subword-Based Analysis** to **Macro-Level Insights**.

* An arrow flows from **BPE Tokenization** to **Macro-Level Insights**.
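The arrow list above can be read as a directed graph. The following is a minimal sketch, not part of the original diagram, that transcribes the eight arrows and derives a valid execution order with Python's standard `graphlib` module (Python 3.9+). The names `EDGES`, `build_predecessors`, and `execution_order` are hypothetical and chosen only for illustration.

```python
from graphlib import TopologicalSorter

# Edges transcribed from the arrow list: (source stage, target stage).
EDGES = [
    ("Wikipedia Dumps", "Script-Based Filtering"),
    ("Wikipedia Dumps", "BPE Tokenization"),
    ("BPE Tokenization", "Script-Based Filtering"),
    ("Script-Based Filtering", "Monolingual Glottosets"),
    ("Script-Based Filtering", "Subword-Based Analysis"),
    ("Monolingual Glottosets", "Subword-Based Analysis"),
    ("Subword-Based Analysis", "Macro-Level Insights"),
    ("BPE Tokenization", "Macro-Level Insights"),
]


def build_predecessors(edges):
    """Map each stage to the set of stages that must finish before it."""
    preds = {}
    for src, dst in edges:
        preds.setdefault(src, set())
        preds.setdefault(dst, set()).add(src)
    return preds


def execution_order(edges):
    """Return one valid run order; raises graphlib.CycleError on a cycle."""
    return list(TopologicalSorter(build_predecessors(edges)).static_order())


if __name__ == "__main__":
    for step, stage in enumerate(execution_order(EDGES), start=1):
        print(step, stage)
```

With the arrows exactly as transcribed, the graph is acyclic and admits only one order: Wikipedia Dumps, BPE Tokenization, Script-Based Filtering, Monolingual Glottosets, Subword-Based Analysis, Macro-Level Insights. This is because each stage in that sequence has a direct arrow to the next one.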

### Detailed Analysis

The six boxes can be read as four stages of a computational linguistics workflow:

* **Stage 1 - Data Acquisition:** The process begins with "Wikipedia Dumps" for 320 languages, sourced in the ZIM file format, which is optimized for offline use.

* **Stage 2 - Initial Processing:** The data splits into two paths, which are linked by an arrow from "BPE Tokenization" to "Script-Based Filtering":

    * **Path A (Tokenization):** "BPE Tokenization" involves training Byte Pair Encoding tokenizers, both for individual languages and for combined sets.

    * **Path B (Filtering):** "Script-Based Filtering" narrows the dataset to languages using Cyrillic or Latin scripts, resulting in a subset of 242 languages.

* **Stage 3 - Data Extraction & Analysis:** The filtered data from Path B feeds into two subsequent stages:

    * "Monolingual Glottosets" extracts words and paragraphs and computes Term Frequency (TF) and Document Frequency (DF).

    * "Subword-Based Analysis" combines the filtered data with the output of "Monolingual Glottosets" to compare languages and build rank-based vectors. No arrow runs directly from "BPE Tokenization" to this stage, so the tokenizers from Path A presumably reach it through "Script-Based Filtering".

* **Stage 4 - Synthesis:** Both the "Subword-Based Analysis" and the initial "BPE Tokenization" feed into the final stage, "Macro-Level Insights," which performs script-level comparisons and identifies broader patterns.
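The diagram does not define how TF and DF are computed in the "Monolingual Glottosets" stage. As a rough sketch under stated assumptions, the example below treats each paragraph as a document, counts TF as the total number of occurrences of a word across all paragraphs, and counts DF as the number of paragraphs containing the word. The function name `tf_df` and the regex-based word splitting are illustrative choices, not the authors' method.

```python
import re
from collections import Counter

# Assumption: a "word" is a maximal run of Unicode word characters
# (letters, digits, underscore). Real pipelines may tokenize differently.
WORD_RE = re.compile(r"\w+")


def tf_df(paragraphs):
    """Return (tf, df) Counters for a list of paragraph strings.

    tf[word] = total occurrences across all paragraphs
    df[word] = number of paragraphs in which the word appears
    """
    tf = Counter()
    df = Counter()
    for paragraph in paragraphs:
        words = WORD_RE.findall(paragraph.lower())
        tf.update(words)        # every occurrence counts toward TF
        df.update(set(words))   # each paragraph counts at most once toward DF
    return tf, df


if __name__ == "__main__":
    tf, df = tf_df(["The cat sat.", "The cat and the dog."])
    print(tf["the"], df["the"])  # 3 occurrences in 2 paragraphs
```

Because Python's `\w` is Unicode-aware for `str` patterns, the same splitting works on both Latin and Cyrillic text.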

### Key Observations

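1. **Non-Linear Flow:** The diagram is not a simple linear sequence. It branches at "Wikipedia Dumps" (into "BPE Tokenization" and "Script-Based Filtering") and has multiple convergence points. Because an arrow also runs from "BPE Tokenization" to "Script-Based Filtering", the two branches are not fully independent.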

2. **Data Reduction:** There is a clear data reduction step, moving from 320 languages to the 242 that use Cyrillic or Latin script, which excludes 78 languages.

3. **Dual Analysis Paths:** The pipeline employs two complementary analysis methods: subword-based (using BPE) and word/paragraph-based (in the Glottosets).

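4. **Central Role of Script:** The "Script-Based Filtering" stage acts as a major hub. It receives input from both "Wikipedia Dumps" and "BPE Tokenization" and feeds two stages directly ("Monolingual Glottosets" and "Subword-Based Analysis"), reaching "Macro-Level Insights" only indirectly through "Subword-Based Analysis".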
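The diagram does not say how a language's script is determined. One plausible approach, sketched here purely as an assumption, is to inspect the Unicode character names of the letters in a text sample and keep languages whose dominant script is Latin or Cyrillic. The function names and the language codes in the example are hypothetical.

```python
import unicodedata
from collections import Counter


def dominant_script(text):
    """Return the most common script prefix among the letters of `text`.

    The prefix is the first word of the Unicode character name,
    e.g. "LATIN", "CYRILLIC", "GREEK". Returns None if no letters are found.
    """
    counts = Counter()
    for ch in text:
        if not ch.isalpha():
            continue
        name = unicodedata.name(ch, "")  # "" for characters without a name
        if name:
            counts[name.split(" ", 1)[0]] += 1
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def filter_by_script(samples, keep=("LATIN", "CYRILLIC")):
    """Keep only the languages whose sample text is dominated by a script in `keep`."""
    result = {}
    for lang, text in samples.items():
        script = dominant_script(text)
        if script in keep:
            result[lang] = script
    return result


if __name__ == "__main__":
    samples = {"en": "Hello world", "ru": "Привет мир", "el": "Γειά σου"}
    print(filter_by_script(samples))
```

A heuristic like this works on text samples only; a real pipeline might instead rely on language metadata that records the script explicitly.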

### Interpretation

This flowchart outlines a sophisticated research pipeline for comparative linguistics using large-scale, multilingual Wikipedia data. The process is designed to move from broad, raw data to specific, analyzable units and finally to high-level insights.

* **Purpose:** The pipeline enables systematic comparison of languages, particularly between those using Cyrillic and Latin scripts. It likely aims to study morphological complexity, vocabulary overlap, or other quantitative linguistic features across language families.

* **Methodological Rigor:** Combining BPE (a standard subword tokenization method in NLP, often used to handle rare words) with traditional word/paragraph extraction suggests a comprehensive approach that captures both subword structure and lexical statistics.

* **Scalability:** Starting from 320 languages in a single standardized format (ZIM) suggests the pipeline was designed with scalability and reproducibility in mind.

* **Inferred Goal:** The final "Macro-Level Insights" stage suggests the ultimate goal is not just language-specific analysis, but the discovery of universal or script-dependent patterns in human language structure as reflected in encyclopedia text. The branching and merging of paths highlight that these insights are derived from synthesizing multiple analytical perspectives.
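For readers unfamiliar with BPE, the following is a minimal sketch of how merge rules are learned: starting from single characters, the most frequent adjacent symbol pair is merged repeatedly. It is a textbook-style illustration, not the tokenizer implementation used in the pipeline, which the diagram does not specify. The function name `learn_bpe` and the toy word frequencies are assumptions.

```python
from collections import Counter


def learn_bpe(word_freqs, num_merges):
    """Learn up to `num_merges` BPE merge rules from a {word: frequency} mapping.

    Returns the merges as a list of (left_symbol, right_symbol) tuples,
    in the order they were learned.
    """
    # Each word starts as a tuple of single-character symbols.
    vocab = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pair_counts = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # every word is already a single symbol
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite every word, replacing occurrences of the best pair.
        new_vocab = {}
        for symbols, freq in vocab.items():
            merged = []
            i = 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            key = tuple(merged)
            new_vocab[key] = new_vocab.get(key, 0) + freq
        vocab = new_vocab
    return merges


if __name__ == "__main__":
    print(learn_bpe({"abab": 3, "abc": 2}, num_merges=2))
```

In the toy input, the pair `("a", "b")` occurs 8 times and is merged first; the resulting symbol `"ab"` then pairs with itself 3 times, so `("ab", "ab")` is the second merge. Ties between equally frequent pairs are broken by iteration order in this sketch.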